Tachyonism

Member

> It's just FP8 support for ROCm duties.

Why can't those instructions do FSR4?

> Why can't those instructions do FSR4?

It will likely support FSR4. If I had to bet, that is the reason they are doing this.

If they go wider on CCD count, the real question is scheduling and inter-chip communication. You can throw more chiplets at it, but gaming and mixed loads get weird if the OS is bouncing threads across die boundaries. A better play might be fewer but bigger CCDs, with more cache, a better memory controller, and tighter fabric latency.
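To make the "bouncing threads across die boundaries" point concrete, here is a minimal sketch of keeping a process on a single CCD. It assumes logical CPU ids are numbered contiguously per CCD, which is a hypothetical layout and not something any real part guarantees; `ccd_cpu_ids` and `pin_to_ccd` are illustrative names, and `os.sched_setaffinity` only exists on Linux.

```python
import os


def ccd_cpu_ids(ccd_index: int, cpus_per_ccd: int) -> set[int]:
    """Logical CPU ids for one CCD, ASSUMING contiguous numbering per CCD."""
    start = ccd_index * cpus_per_ccd
    return set(range(start, start + cpus_per_ccd))


def pin_to_ccd(ccd_index: int, cpus_per_ccd: int) -> set[int]:
    """Restrict the current process to one CCD; return the CPU set applied."""
    if not hasattr(os, "sched_setaffinity"):  # Linux-only API
        return set()
    # Only keep CPUs the process is actually allowed to run on.
    allowed = ccd_cpu_ids(ccd_index, cpus_per_ccd) & os.sched_getaffinity(0)
    if allowed:
        os.sched_setaffinity(0, allowed)
    return allowed


# Hypothetical 8-thread CCDs: the second CCD would cover ids 8..15.
print(sorted(ccd_cpu_ids(1, 8)))
```

On a real system the CCD-to-CPU mapping should be read from the topology the OS reports, not guessed from the index.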

DDR6 and a new socket would make sense as a platform refresh, but I wouldn’t expect it unless the memory controller and IO side are actually the limiting factor they want to fix.

> oh look. fun product rumour.

I'm curious to see how it will perform. CU count isn't much different from an RX 9070, with the benefit of being a newer gen, but power-wise there will probably be a huge difference.

This is going to be a really fast SoC huh

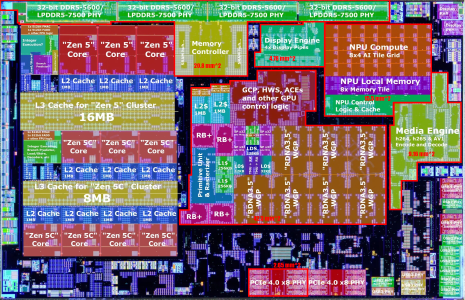

AMD "Medusa Halo" APU to Use LPDDR6 Memory

The next major refresh of AMD's Ryzen AI MAX APUs is still far away, but now we are putting together the pieces of the "Medusa Halo" APU puzzle. According to a famous leaker, @Olrak29_ on X, AMD's next-generation "Medusa Halo" APU will be complemented by LPDDR6 memory. This is one of the first...

www.techpowerup.com

> Also hoping the brands adopt it more than they did with Strix Halo

For starters, it will be costlier than Strix Halo.

> A bigger L3 pool will automatically lower latency for low thread count workloads, such as games, which an even bigger V-Cache lowers further.
>
> Also, L3 helps bandwidth. The more bandwidth stays internal to the CCD, the more external bandwidth it frees up.

It's a victim cache. So it would be more correct to say low thread count non-streaming workloads such as games.

> For starters, it will be costlier than Strix Halo.

Medusa Premium could end up being a killer budget gaming chip. If it uses LPDDR6, the memory bandwidth will be close to Strix Halo. 24 RDNA 5 CUs could match 40 RDNA 3.5 CUs too.

The other option is Medusa Premium (half the GPU size of Halo). I am hoping sheer scale brings the price down. It might take until 2030 for OpenAI to go bankrupt. Until then, both Halo and mini Halo would be used for edge AI use cases (such as DGX Spark), so these systems will stay priced at a premium level.

- 20% more GPU CUs

- 3nm rather than 4nm

> Medusa Premium could end up being a killer budget gaming chip.

premium ≠ budget

> If it uses LPDDR6, the memory bandwidth will be close to Strix Halo. 24 RDNA 5 CUs could match 40 RDNA 3.5 CUs too.

PHY's a combo, but the FP10 pinout means LPDDR5X in mobile.

> I don't know how much stuff they'll actually cut, but Medusa Premium could perform like Strix Halo and be priced closer to Strix Point.

Yeah, it'll get close to the current 106 tier.

Budget in the context of gaming laptops, competing with RTX 5060 and RTX 6050 laptops in price and performance.

> PHY's a combo, but the FP10 pinout means LPDDR5X in mobile.

Ah, well that dashes that dream. They could do on-package LPDDR6 for a few laptops, but that increases the price.

> Budget in the context of gaming laptops, competing with RTX 5060 and RTX 6050 laptops in price and performance.

No way it's cheaper than a 6050 laptop, given it's the same build quality and such.

> Budget in the context of gaming laptops, competing with RTX 5060 and RTX 6050 laptops in price and performance.

Oh yeah that's true, they're designated discrete compete parts.

> They could do on-package LPDDR6 for a few laptops, but that increases the price.

No way man, that requires a separate platform basically.

> No way it's cheaper than a 6050 laptop, given it's the same build quality and such.

Yeah it will be, AMD will gladly swallow lower margin for design volume increment.

> Historically, high-end Android flagships have been the first to adopt a new LPDDR version

They've had 64-bit versions of it.

> Historically, high-end Android flagships have been the first to adopt a new LPDDR version, and laptops have followed 2-3 years later.

History went outta the window when fat GPGPU solutions (VR200/Helios) started loading on gobs and gobs of LPDDR.

> No way it's cheaper than a 6050 laptop, given it's the same build quality and such.

My point has already been rendered moot since it sounds like LPDDR6 is off the table for Medusa Premium laptops. I'm curious why you think that though. Medusa Premium could use 60 mm^2 less die area from removing the iGPU, and have simpler and cheaper motherboards and heatsinks.

> Interestingly, memory manufacturers like Samsung and Innosilicon are already supplying LPDDR6 modules to customers for validation. Innosilicon's LPDDR6 modules boast an impressive speed of 14.4 Gbps, significantly faster than Samsung's initial modules, which achieve 10.7 Gbps.

Uh, looks like TechPowerUp made an error. Innosilicon is a memory controller provider, whereas Samsung is the memory module maker? They are comparing apples and oranges.

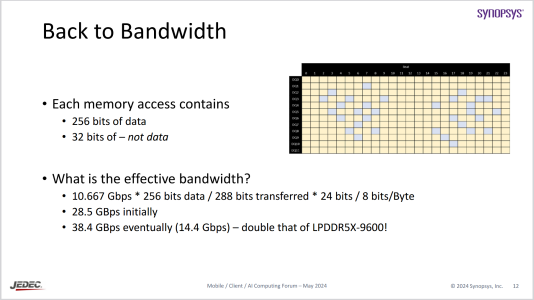

14.4 Gbps is the max JEDEC speed for LPDDR6, whereas modules are expected to start at 10.7 Gbps.

> LPDDR6-14400, when it arrives, would be equivalent to LPDDR5X-12800 (which AFAIK does not exist)

Samsung: Who's talking?

> It is no different than going to LPDDR5X-9600, which I doubt people would be as excited about. It's a decent boost, but unlikely to be noticeable unless you're running benchmarks or running the iGPU at the ragged edge of its frame rate capability.

The big deal is the 50% increased channel width. So going from 128b LPDDR5X-9600 to 192b LPDDR6-10700 is a 50% bandwidth improvement.
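For anyone who wants to check the arithmetic, a rough sketch of the comparison follows. The bus widths and data rates come from the post above. The 8/9 payload fraction is an assumption, taken from the LPDDR6-14400 ≈ LPDDR5X-12800 equivalence quoted in this thread, and `raw_bandwidth_gbs` is just an illustrative helper name.

```python
from fractions import Fraction


def raw_bandwidth_gbs(bus_width_bits: int, rate_mtps: int) -> Fraction:
    """Peak raw bandwidth in GB/s: data pins * MT/s / 8 bits per byte / 1000."""
    return Fraction(bus_width_bits * rate_mtps, 8 * 1000)


# Assumption: LPDDR6 delivers 8/9 of its raw rate as payload, the ratio implied
# by treating LPDDR6-14400 as equivalent to LPDDR5X-12800 (12800 / 14400).
LPDDR6_PAYLOAD_FRACTION = Fraction(12800, 14400)

lp5x = raw_bandwidth_gbs(128, 9600)       # 128-bit LPDDR5X-9600
lp6_raw = raw_bandwidth_gbs(192, 10700)   # 192-bit LPDDR6-10700
lp6_payload = lp6_raw * LPDDR6_PAYLOAD_FRACTION

print(f"LPDDR5X:        {float(lp5x):.1f} GB/s")
print(f"LPDDR6 raw:     {float(lp6_raw):.1f} GB/s "
      f"({float(lp6_raw / lp5x - 1):.0%} more)")
print(f"LPDDR6 payload: {float(lp6_payload):.1f} GB/s "
      f"({float(lp6_payload / lp5x - 1):.0%} more)")
```

Under these assumptions the raw gain is about 67%, and the payload-adjusted gain is about 49%, which is roughly the 50% you get from the width increase alone.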

> The big deal is the 50% increased channel width. So going from 128b LPDDR5X-9600 to 192b LPDDR6-10700 is a 50% bandwidth improvement.

Atta boy.

> This assumes you use the same overall bus width - which is a given in any design that uses dual LPDDR6/LPDDR5X controllers.

That's not how it works. Both of the combo PHYs announced so far keep the number of channels constant between the memory types, not the width of the interface. That's because every line other than the data lines would have to be duplicated for additional channels, and you'd have to do weird remapping between the data lines of adjacent channels for different widths. (Also, because of changes in how LPDDR6 works, a wider bus is cheaper with LPDDR6 than with LPDDR5X.) A device using such a combo PHY that supports 192-bit LPDDR6 can only support 128-bit LPDDR5X.
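The constant-channel-count point can be sketched in a few lines. This assumes 24-bit LPDDR6 channels and 16-bit LPDDR5X channels; `combo_phy_widths` is a hypothetical helper for illustration, not a model of any specific PHY.

```python
LPDDR6_CHANNEL_BITS = 24   # assumption: data width of one LPDDR6 channel
LPDDR5X_CHANNEL_BITS = 16  # assumption: data width of one LPDDR5X channel


def combo_phy_widths(lpddr6_bus_bits: int) -> tuple[int, int]:
    """Return (channel count, LPDDR5X bus width) for a combo PHY that keeps
    the number of channels fixed across both memory types."""
    channels, remainder = divmod(lpddr6_bus_bits, LPDDR6_CHANNEL_BITS)
    if remainder:
        raise ValueError("bus width is not a whole number of LPDDR6 channels")
    return channels, channels * LPDDR5X_CHANNEL_BITS


print(combo_phy_widths(192))  # (8, 128)
```

Eight channels give 192 bits with LPDDR6 and 128 bits with LPDDR5X, which matches the 192-bit/128-bit pairing described in the post.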

> The big deal is the 50% increased channel width. So going from 128b LPDDR5X-9600 to 192b LPDDR6-10700 is a 50% bandwidth improvement.

Still only a toy when it comes to the AI use case. Apple shows the way on memory bandwidth, but at least with Strix Halo you can attach eGPUs to give prompt processing and token generation speed a kick in the pants using sparse models.
