Jan Olšan

Senior member

Wow, nice IPC gain in x264. Is this architecture specifically designed for my CPUs tastes or what?

Zen 5 Variants and More, Clock for Clock

Zen 5 is AMD’s newest core architecture. (chipsandcheese.com)

> Wow, nice IPC gain in x264. Is this architecture specifically designed for my CPUs tastes or what?

AVX-512 has its uses 😀

The processor fetches instructions from the instruction cache in 32-byte blocks that are 16-byte aligned and contained within a 64-byte aligned block. The processor can perform a 32-byte fetch every cycle. The fetch unit sends these bytes to the decode unit through a 24 entry Instruction Byte Queue (IBQ), each entry holding 16 instruction bytes. In SMT mode each thread has 12 dedicated IBQ entries. The IBQ acts as a decoupling queue between the fetch/branch-predict unit and the decode unit.

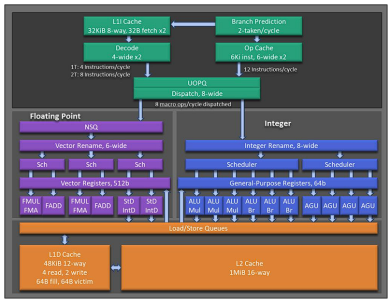

The processor fetches instructions from the instruction cache in 32-byte blocks that are 32-byte aligned. Up to two of these blocks can be independently fetched every cycle to feed the decode unit’s two decode pipes. Instruction bytes from different basic blocks can be fetched and sent out-of-order to the 2 decode pipes, enabling instruction fetch-ahead which can hide latencies for TLB misses, Icache misses, and instruction decode. Each decode pipe has a 20-entry structure called the IBQ which acts as a decoupling queue between the fetch/branch-predict unit and the decode unit. IBQ entries hold 16 byte-aligned fetch windows of the instruction byte stream. The decode pipes each scan two IBQ entries and output up to four instructions per cycle. In single thread mode the maximum throughput is 4 instructions per cycle. In SMT mode decode pipe 0 is dedicated to Thread 0 and decode pipe 1 is dedicated to Thread 1, supporting a maximum throughput of eight instructions per cycle.
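For anyone who wants the two quoted front-end descriptions side by side, here is a rough back-of-the-envelope sketch in Python. It only multiplies out the figures from the quotes above. The dictionary keys and the `summarize` helper are names I made up, and reading the first quote as the older core and the second as Zen 5 is my assumption, since the quotes themselves are unlabeled.

```python
# Figures copied from the two quoted paragraphs; all key names are hypothetical.
OLDER_CORE = {
    "fetch_blocks_per_cycle": 1,       # "a 32-byte fetch every cycle"
    "fetch_block_bytes": 32,
    "ibq_queues": 1,
    "ibq_entries_per_queue": 24,
    "ibq_entry_bytes": 16,
    "ibq_entries_per_thread_smt": 12,  # "12 dedicated IBQ entries" per thread
}

ZEN5 = {
    "fetch_blocks_per_cycle": 2,       # "up to two of these blocks" per cycle
    "fetch_block_bytes": 32,
    "ibq_queues": 2,                   # one 20-entry IBQ per decode pipe
    "ibq_entries_per_queue": 20,
    "ibq_entry_bytes": 16,
    # Inference, not a quoted number: in SMT each thread owns one decode pipe,
    # so it is assumed to own that pipe's whole 20-entry IBQ.
    "ibq_entries_per_thread_smt": 20,
}


def summarize(core):
    """Multiply out peak fetch bandwidth and IBQ capacity in bytes."""
    return {
        "peak_fetch_bytes_per_cycle":
            core["fetch_blocks_per_cycle"] * core["fetch_block_bytes"],
        "ibq_bytes_total":
            core["ibq_queues"] * core["ibq_entries_per_queue"] * core["ibq_entry_bytes"],
        "ibq_bytes_per_thread_smt":
            core["ibq_entries_per_thread_smt"] * core["ibq_entry_bytes"],
    }


if __name__ == "__main__":
    for name, core in (("older core", OLDER_CORE), ("Zen 5", ZEN5)):
        print(name, summarize(core))
```

These are peak numbers only. The quotes say nothing here about how often both fetch blocks can actually be used, so treat the doubling as an upper bound.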

Up to 96-Mbyte shared, victim L3, depending on configuration.

> Yet, they preferred to invest in this 2-BB oddity + super-large BTB instead of investing to INT-PRF/ROB.

Yet, it seems possible that it has better IPC and more performance per area on N3 than even Skymont the x64 wundercore and area-king.

Z5: The branch misprediction penalty is in the range from 12 to 18 cycles, depending on the type of mispredicted branch and whether the instructions are being fed from the Op Cache. The common case penalty is 15 cycles

Z4: The branch misprediction penalty is in the range from 11 to 18 cycles, depending on the type of mispredicted branch and whether or not the instructions are being fed from the Op Cache. The common case penalty is 13 cycles

> Looks like AMD put in a lot of experimental tech into Zen 5 and the "low hanging fruits" in Zen 6 will be both making them work and making better use of them.

Mike Clark hinted at this in a recent interview about Zen 5. Now, it would be wise to take MC's comments with a grain of salt, because he previously gushed so much about Zen 5, and the reality so far has been far less impressive than he led us to believe, IMO.

The biggest challenge of Zen 5 design

TH: What was the biggest challenge you encountered with Zen 5 development?

MC: It was actually dealing with two technologies [designing Zen 5 for both the 4nm and 3nm process technologies], especially a technology that the previous generation was in. And trying to do so much change, and therefore the unavoidable reality that in 4nm it's going to be [consume] more power than it's going to be in 3nm, no matter how smart we are.

But we need that flexibility in our roadmap, and it makes sense. But still that was really hard to try to control having the two technologies and the features, and a feature that looks great in 3nm not looking so great in 4nm because of the power impact of the not-as-efficient transistor and how it affects the floorplan. Normally, we do the architecture in one, and then we port on the next one, and then you have a lot of time to deal in the floor plan with the two technologies. [..] It was just really challenging. But that gives Zen 6 a lot of room to improve.

And we're going to deliver 3nm here in short order with 4nm; basically, they're on top of each other. So the design teams are separate in building those, but we're trying to communicate and work together — it is still the same. We've tried to keep it simple for our own sanity. We have all these designs we have to validate and we have to build, and the more they're different, the more things just get out of control. It drives complexity.

That was a challenge, and one we love because, like I said, now that we've done it, we've learned a lot from it. We're going to be able to do it better the next time. That's what makes this job so fun: constantly learning, constantly new challenges, and new innovation.

> I think the root issue is firstly that client and data center share the same architecture and for that reason they don’t have something specific to client for perf per watt. So trade offs are being made.

I don't want to amend the Zen 5 Info thread yet, but I feel like my hunch wasn't just a hunch. Zen 5 has a fundamental inclination towards server. That may become a growing pain in the future, and I wouldn't be surprised if we start hearing more and more about dividing architectures into client and server (and no, not just the uncore).

> If all the stars are aligned, Zen 5 can execute 16 RISC-like instructions per clock.

Perfect. Someone benchmark a RISC emulator on the thing!

> * 2019 - public release of Sunny Cove - jump to 4+1-wide machine

Sunny Cove is 1+3-wide decode!

```asm
.data
align 16
Var1 dd 0
Var2 real4 0.0
Var3 real8 262144.0

.code
Test2 proc
    push rbp
    push rsi
    push rbx
    sfence
    rdtsc
    mov rbp, rax
    mov ecx, 1
    shl ecx, 18
    lea rsi, Var1
    align 16
@@:
    add eax, [rsi] ; 2
    vxorps xmm5, xmm5, xmm5 ; 1
    add edx, [rsi] ; 2
    vxorps xmm6, xmm6, xmm6 ; 1
    add rbx, [rsi] ; 2
    vxorps xmm7, xmm7, xmm7 ; 1
    xor eax, eax ; 1
    cmp rsi, 0 ; 1
    jz @end ; 1
    vaddss xmm0, xmm0, dword ptr[rsi+4] ; 2
    mov rdi, rdi ; 1
    vaddss xmm1, xmm1, dword ptr[rsi+4] ; 2
    cmp rsi, 0 ; 1
    jz @end ; 1
    mov rbp, rbp ; 1
    vaddss xmm2, xmm2, dword ptr[rsi+4] ; 2
    xor edx, edx ; 1
    cmp rsi, 0 ; 1
    jz @end ; 1
    vaddss xmm3, xmm3, xmm3 ; 1
    dec ecx ; 1
    jnz @b ; 1 ; 22 instructions, 28 uops
    sfence
    rdtsc
    sub rax, rbp
    vcvtsi2sd xmm4, xmm4, rax
    vdivsd xmm0, xmm4, Var3 ; ~ 2.83 Cycles, 9.98 uops/cycle, 7.7 IPC
    pop rbx
    pop rsi
    pop rbp
@end:
    ret
Test2 endp
END
```

> I was able to squeeze about 9.98 uops from Zen 3 from meaningless code

Is that benchmark code to determine probable IPC of any x86-64 CPU?

> Is that benchmark code to determine probable IPC of any x86-64 CPU?

It's a hand-optimized loop. It doesn't look like a benchmark that gives comparable results between different architectures; it seems to have been crafted to find the absolute maximum throughput on one machine.

> IPC was about 7.7, hmm. Not sure I am measuring it correctly. If someone finds the reason for this, let me know.

I think retire bandwidth on Zen3 is 8 per cycle, and 7.7 is pretty close to it.
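On the "not sure I am measuring it correctly" part, here is a small Python sketch of the arithmetic behind the posted figures. One thing worth checking is that `rdtsc` counts at a fixed reference rate on current x86 parts rather than at the actual core clock, so the per-iteration number is in TSC ticks, not core cycles, whenever the core runs at a different frequency. The function name and the two frequency parameters are hypothetical; the 22 instructions, 28 uops and 2.83 ticks are taken from the comments in the posted loop.

```python
def per_cycle_rates(instructions, uops, tsc_ticks_per_iter,
                    core_ghz=None, tsc_ghz=None):
    """Return (IPC, uops per cycle) for one loop iteration.

    If both frequencies are given, TSC ticks are rescaled to core cycles
    first; otherwise ticks are taken as core cycles, as in the posted loop.
    """
    if core_ghz is not None and tsc_ghz is not None:
        core_cycles = tsc_ticks_per_iter * core_ghz / tsc_ghz
    else:
        core_cycles = tsc_ticks_per_iter
    return instructions / core_cycles, uops / core_cycles


if __name__ == "__main__":
    # Numbers from the loop comments: 22 instructions, 28 uops, ~2.83 ticks.
    ipc, upc = per_cycle_rates(22, 28, 2.83)
    print(f"uncorrected: IPC={ipc:.2f}, uops/cycle={upc:.2f}")

    # Purely illustrative frequencies, NOT measured on the poster's machine.
    ipc, upc = per_cycle_rates(22, 28, 2.83, core_ghz=4.6, tsc_ghz=3.7)
    print(f"rescaled:    IPC={ipc:.2f}, uops/cycle={upc:.2f}")
```

If the core was boosting above the TSC rate during the run, the real per-cycle figures would be lower than the uncorrected ones. Reading a core-cycle performance counter instead of `rdtsc` would sidestep the question.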

> Mispredict penalty
>
> 13 --> 15 cycles
>
> Z5: 12-18
>
> Z4: 11-18

Interesting, it's a wider range than Intel chips. 2 pipeline difference would be 4-8%, but they are basing the average on the uop cache hit. It would likely be 11 on a hit and 18 on a miss if it applies to all scenarios.
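To put a rough number on what 13 --> 15 cycles costs, here is a minimal Python sketch using a simple additive stall model: every mispredict is assumed to add the full penalty to execution time. That model and the example MPKI and base CPI values are my assumptions for illustration, not measurements of Zen 4 or Zen 5, and the function names are made up.

```python
def cpi_with_mispredicts(base_cpi, mpki, penalty_cycles):
    """Cycles per instruction when each mispredict adds a fixed penalty.

    base_cpi: CPI the code would reach with perfect branch prediction.
    mpki:     branch mispredicts per 1000 instructions.
    """
    return base_cpi + mpki * penalty_cycles / 1000.0


def slowdown(base_cpi, mpki, old_penalty, new_penalty):
    """Relative increase in run time when only the penalty changes."""
    old = cpi_with_mispredicts(base_cpi, mpki, old_penalty)
    new = cpi_with_mispredicts(base_cpi, mpki, new_penalty)
    return new / old - 1.0


if __name__ == "__main__":
    # Hypothetical workloads: (base CPI, MPKI) pairs chosen only as examples.
    for base_cpi, mpki in ((0.5, 1.0), (0.5, 5.0), (0.25, 10.0)):
        loss = slowdown(base_cpi, mpki, old_penalty=13, new_penalty=15)
        print(f"base CPI {base_cpi}, MPKI {mpki}: {loss:.1%} slower")
```

The takeaway from the model is only that the cost scales with how branchy and how fast the code already is, so a single percentage for the longer pipeline depends heavily on the workload picked.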

> Is that benchmark code to determine probable IPC of any x86-64 CPU?

Not necessarily, I tried to make maximum out of my Zen3. Other architectures have to be tuned differently.

> This just confirms they chased the SMT ghost with this weird implementation.
>
> What makes this Zen 5 architectural decision even more WTF-like is the timeline:
>
> * 2019 - public release of Sunny Cove - jump to 4+1-wide machine
> * 2020 - unveil of Tremont - duplicated 3-wide Goldmont decoder for 1T
> * 2021 - public release of Gracemont - even more efficient clustered decode for 1T
> * 2021 - public release of Golden Cove - jump to 6-wide machine
>
> // * 2024 - public announcement of Lion Cove - jump to 8-wide machine(?)
>
> Zen 5 was in development since at least 2018.
>
> Yet, they preferred to invest in this 2-BB oddity + super-large BTB instead of investing to INT-PRF/ROB.
>
> // Btw no "multi-layer" VCache is intended for Zen 5:

Just curious on this, what kind of limitations in its implementation of decoder arch does AMD have in terms of patents and such? Does Intel (or Arm) have 'foundational' patents in this space?

> I was defending Zen 5 as "probably not that imbalanced, just having a hard time with INT/scalar"

But that's not really the case. Zen 5 paid no attention to balance since only 512bit AVX-512 got doubled width (in the ideal case up to doubling compute performance), and scalar integer got expanded (in the ideal case offering up to 35% more compute performance). Everything else is essentially untouched, while the improvement in scalar integer seems to be hard to make use of, and that's the imbalance.

> Zen 5 may be a mild wake up call for server and client architectures to start being split.

As @LightningZ71 already noted, that's actually happening with Zen 5: clearly client-oriented dies don't get the server Zen 5 512bit AVX-512 implementation but keep the double-pumped 256bit AVX-512 implementation from Zen 4. We can expect that separation to increase, perhaps similar to what happened with the RDNA and CDNA split for GPUs.

> Zen 5 paid no attention to balance since only 512bit AVX-512 got doubled width (in the ideal case up to doubling compute performance),

The store queue width was not doubled though. Only its depth was increased.