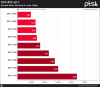

Thanks! These are at-the-wall power measurements on a 1P mainboard (ASRockRack EPYCD8 + RAM, SSD, using Gigabit Ethernet); the PSU spec is not provided. Some of the tests also include core clocks and temperatures (while cooled by the Noctua 92 mm tower cooler).

The tests use workloads that generally seem to scale well to any number of cores. The exception is page 8, where single-threaded tests were run; among other things, these tell us the power consumption when the 1P server is severely under-utilized. (The molecular dynamics benchmark at the bottom of that page is again a highly threaded test.)

The figures confirm my earlier thought that, if one is power- and/or cooling-constrained, only the 64-core SKUs provide a substantial upgrade from high-core-count 14 nm server CPUs. (The 48-core SKUs do too, to a lesser degree, but there is no 48-core model in the cheaper single-socket-only -P lineup. Also, they have 3-core CCXs, which can be a concern in multithreaded workloads, as opposed to multi-process workloads.)

The small print isn't as small if a large enough minimum font size is configured in the browser.

Or the 7702P, at two-thirds the price for the processor. Still pricey though.