
Roadmap leaked: Nvidia GeForce GTX 580 to be 20% faster


Sylvanas

Diamond Member

I'm expecting a higher clocked 512 Fermi (probably called a GF110, I'd think the changes over a GF100 would warrant a new part number) with some minor optimisations at the end of the year (like GTX285/75). I doubt Nvidia would go an entirely new design with a ton more CUDA cores at this stage- although I'd like to be surprised. Seeing the efficiencies AMD has made on the same 40nm process with a largely similar architecture is exciting and if Nvidia can pull off a similar product it's going to be an interesting Christmas.

NV just keeps adding more size and more generalized computing power while ATI is trying to slim down and specialize in the GPU market. Very different paths. I'm almost getting the feeling NV is trying to cram a supercomputer in there. I think with them pushing CUDA so hard, it's conceivable they are aiming at the CPU market eventually, maybe a NV flavored x86 chip in a few years?

Ares1214

Senior member

Highly doubt it's possible. If it is possible, then it must be at sub-500 MHz clocks to only get 20% more performance. If they just keep going bigger and bigger, they will have some problems. The 6970 is supposed to arrive with 20-30% higher performance than the 480. So let's say the 580 can tie it. Well, it looks like it will be coming later, will obviously have a much larger die size, so will likely cost more to make, probably produce more heat, and almost definitely use more energy. This bigger and bigger and bigger and bigger thing won't always pay off for Nvidia like it did with the 8800. It hasn't worked at all lately. Besides, let's see them roll out a fully unlocked GF104 before they do a dual of it!

NV just keeps adding more size and more generalized computing power while ATI is trying to slim down and specialize in the GPU market. Very different paths. I'm almost getting the feeling NV is trying to cram a supercomputer in there. I think with them pushing CUDA so hard, it's conceivable they are aiming at the CPU market eventually, maybe a NV flavored x86 chip in a few years?

I'd say Nvidia sees the writing on the wall that the discrete market will continue to shrink. They need to expand their revenue streams using the same silicon. That means the Quadro and Tesla lines.

"maybe a NV flavored x86 chip in a few years?"

Intel will never give Nvidia an x86 license. CUDA doesn't run x86 as well as a real CPU.

IF the 580 has more cores than the 480 = it'll use more power.

IF the 580 has a wider memory bus than the 480 = bigger chip = worse yields than the 480.

I'm going with 512 cores and a 300W TDP! 🙂 You know the 580 will be more power hungry (it does have more cores) and hotter than the 480 😛


DominionSeraph

Diamond Member

Now here's the real kicker... these chips are half the size of the 480's... so 2 of these together would sell at the same price as a single 480 card. So buy AMD for the same price, get a 100% performance increase over what Nvidia sells with their 480s.

What kind of retarded fanboi logic makes you think AMD prices by the mm^2? When the 5850 was jacked from its launch price of $259 to $300 and stayed there for the better part of a year, was that because it increased in size? Did it decrease in size three weeks after Nvidia released the (same sized) GTX 460 for $70-$100 less and dropped the prices of the GTX 470 to under the going rate of a 5850? Because that's when the 5850 started (slowly) dropping in price.

Was AMD selling by the mm^2 when the 170mm^2 5770 was jacked up to nearly $200? Are they selling it by the mm^2 now when it's hovering within $10 of the 330mm^2 768MB GTX 460 which blows it away in performance?

AMD prices under the "Intel tax" on the CPU side. That does not mean there is a "Nvidia tax" which they price under on the GPU side. Nvidia has made huge GPUs since the original GeForce. Did that mean they weren't competitive with 3dfx, Matrox, and PowerVR?

Both companies will gouge the heck out of the consumer when they're given a performance/feature point with no competition. Neither is working diligently day after day for only the feeling of well-being they get by giving you a fast card at a low price. They exist to make a profit, not to be your friend and confidant.

If you need a friend that bad, here, take Chad.

But hands off Rukia. She's mine.

IF the 580 has more cores than the 480 = it'll use more power.

IF the 580 has a wider memory bus than the 480 = bigger chip = worse yields than the 480.

Weird, because I thought that AMD and Nvidia were using the same exact node process and fab for making their chips and AMD just came out with chips that use more cores and less power per die space ----- so according to your logic, Nvidia can't possibly be able to make improvements like AMD has just demonstrated.

But oh wait, they already did. GF100 has 512 total cores and is 530 mm^2 - 1.03mm^2 of die space per core. GF104 has 384 cores and is 332 mm^2 - 0.86mm^2 of die space per core and it uses less power per core than GF100. Looks like they proved you wrong months before you made this baseless prediction.

RussianSensation

Elite Member

IF the 580 has more cores than the 480 = it'll use more power.

IF the 580 has a wider memory bus than the 480 = bigger chip = worse yields than the 480.

I'm going with 512 cores and a 300W TDP!

Releasing a 512 core part, up from 480 core part, and increasing TDP from 250W to 300W is what I call the most pessimistic outlook ever, unless the back-end (TMUs and ROPs) is doubled.

You really think MIT, Stanford, Waterloo, and Berkeley engineers would need almost 12 months to add 32 more cores at the cost of another 50W? :\ Considering how poorly the GTX470/480 were received by the general market, such a card would be a failure.

Your assumption about the GTX580 automatically being bigger than the 480 doesn't account for the GF100 --> GF104 --> GF110 redesigns.

LOL @ people using GF100 for the power consumption/die size comparison.

----- so according to your logic, Nvidia can't possibly be able to make improvements like AMD has just demonstrated.

Didn't you guys look at history? Only AMD is able to reduce die size while reducing power consumption and keeping similar performance.

I don't know why you both won't admit it -- NV is just a failing company. In case you didn't notice, HD6870 is as fast as a 5 months old factory overclocked GTX460 with a 1st generation tessellation engine. And btw, NV's professional graphics market is next because it will only take a couple driver updates before AMD catches up. I would be shorting their stock like tomorrow.


busydude

Diamond Member

But oh wait, they already did. GF100 has 512 total cores and is 530 mm^2 - 1.03mm^2 of die space per core. GF104 has 384 cores and is 332 mm^2 - 0.86mm^2 of die space per core and it uses less power per core than GF100. Looks like they proved you wrong months before you made this baseless prediction.

I am sorry, but your math is totally wrong. There is no way you can calculate die space per core. You do realize that the die is not made up of ONLY the cores?

Let's see,

GF100 with a 384-bit memory bus vs. GF104 with a 256-bit memory bus.

ROPs: 48 vs. 32

Now, if you had calculated transistors per mm^2 of die space, then it would make a little bit more sense.

3B/530 ~ 5.66 million transistors/mm^2.

1.95B/332 ~ 5.87 million transistors/mm^2.

OMFGBBQ, nearly 6 million transistors/mm^2.. that's seriously insane.
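For anyone who wants to re-run the two metrics being argued over, here is a minimal Python sketch. It only uses the transistor counts, die areas, and core counts quoted in this thread, which are approximate and not checked against official specs; the dictionary layout and function names are made up for illustration.

```python
# Figures as quoted in the thread (approximate, not verified against official specs).
chips = {
    "GF100": {"transistors": 3.0e9, "area_mm2": 530, "cores": 512},
    "GF104": {"transistors": 1.95e9, "area_mm2": 332, "cores": 384},
}


def density_mtr_per_mm2(chip):
    """Million transistors per mm^2 of die."""
    return chip["transistors"] / chip["area_mm2"] / 1e6


def area_per_core_mm2(chip):
    """Whole-die area divided by core count.

    Note this lumps memory controllers, ROPs, and everything else
    into the per-core figure, which is the objection raised above.
    """
    return chip["area_mm2"] / chip["cores"]


for name, chip in chips.items():
    print(
        f"{name}: {density_mtr_per_mm2(chip):.2f} MTr/mm^2, "
        f"{area_per_core_mm2(chip):.3f} mm^2 of die per core"
    )
```

Both metrics point the same way for these two chips: GF104 comes out slightly denser and uses less die area per core, but only the density figure is independent of how much of the die is non-core logic.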

MrK6

Diamond Member

I don't know why you both won't admit it -- NV is just a failing company. In case you didn't notice, HD6870 is as fast as a 5 months old factory overclocked GTX460 with a 1st generation tessellation engine. And btw, NV's professional graphics market is next because it will only take a couple driver updates before AMD catches up. I would be shorting their stock like tomorrow.

The GTX 460 has actually only been out just over 3 months. Not too long of a run for NVIDIA, IMO.

I am sorry, but your math is totally wrong. There is no way you can calculate die space per core. You do realize that the die is not made up of ONLY the cores?

Let's see,

GF100 with a 384-bit memory bus vs. GF104 with a 256-bit memory bus.

ROPs: 48 vs. 32

Now, if you had calculated transistors per mm^2 of die space, then it would make a little bit more sense.

3B/530 ~ 5.66 million transistors/mm^2.

1.95B/332 ~ 5.87 million transistors/mm^2.

OMFGBBQ, nearly 6 million transistors/mm^2.. that's seriously insane.

Exactly, great post! :thumbsup: NVIDIA only cut out a lot of the GPGPU "fat" to create the GTX 460, IIRC.

In regards to the OP, only a 20% performance boost, even if they get it out this year, is going to fail miserably. Hell, 20% won't even catch the supposed performance of the 6970, never mind the 6990. Hopefully this is just a rumor/FUD/misinformation, otherwise AMD is going to have zero competition in the high-end.

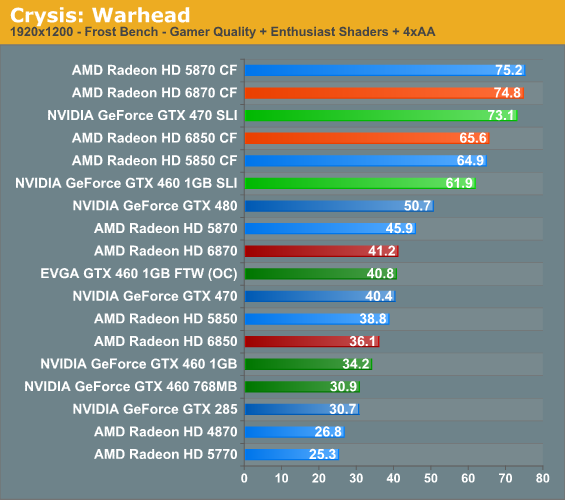

I'd wait to see what the 6900 series brings to the table before proclaiming the 580 uncompetitive. I remember all the AMD fanbois salivating over the 6850 and 6870 smoking the 5850 and 5870. Then it turned out the chip didn't bring that to the table. It was basically a sidegrade with a better price.


GaiaHunter

Diamond Member

NV just keeps adding more size and more generalized computing power while ATI is trying to slim down and specialize in the GPU market. Very different paths. I'm almost getting the feeling NV is trying to cram a supercomputer in there. I think with them pushing CUDA so hard, it's conceivable they are aiming at the CPU market eventually, maybe a NV flavored x86 chip in a few years?

Cayman should add more GPGPU.

Barts doesn't have any DP, just like Juniper - it is just a gamer card. And that isn't a bad thing. The broad target audience for these cards is gamers.

blastingcap

Diamond Member

Cayman should add more GPGPU.

Barts doesn't have any DP, just like Juniper - it is just a gamer card. And that isn't a bad thing. The broad target audience for these cards is gamers.

I've been thinking, and the state of the market is okay right now for gamers:

1. AMD and NV hate each other. Hate is bad in general, but in this case it's good. We want hatred and competition rather than too much love and price collusion between the two remaining gaming-grade GPU makers.

2. AMD is still making gaming-oriented GPUs that keep the GPGPU fat low. This sets a ceiling for how much NV can charge for gaming-grade GPUs--regardless of how much GPGPU bloat is in the cards.

3. Thanks to AMD's gaming-GPU price competition keeping a lid on how much NV can charge for GPGPU-bloated gaming cards, it seems that NV's pro graphics users are helping out with the subsidizing of GPGPU bloat, not just gamers. NV doesn't have serious competition in pro graphics so it can make pro graphics buyers subsidize the GPGPU bloat until GPGPU can pay for itself.

4. NV also appears to have learned its lesson from Fermi, so I wouldn't be surprised if eventually it splits its GPU lineup into GPGPU/HPC, pro graphics, gaming-grade GPUs with the unnecessary parts of GPGPU trimmed, and mobile parts.

5. Intel buried Larrabee--for now. We may yet see its resurrection. Eventually, to match AMD's Fusion push, Intel may emerge as a third major competitor in the GPU arena, bringing more competition into the market.

In regards to the OP, only a 20% performance boost, even if they get it out this year, is going to fail miserably.

How much faster is the 6870 over the 5870?

Is it going to fail miserably for being <=20% faster?

Context matters, and you guys seem to be setting up the context here such that no matter what Nvidia does they are assured failure (in your opinions) just for showing up to the game.

If I were a cynic I might be inclined to think I am witnessing two sides to the same coin here...between the "ZOMG Nvidia is teh doomed" threads and the "ZOMG AMD tesselation is teh suckzors" threads.

Luckily for you guys I am not a cynic...😉

The 6870 is a replacement for the 5770. (AMD played a name-changing game and named it 68xx instead.)

The difference from a 5770 to a 6870 is around +50% gains in fps in some games.

Now if we assume that the 69xx cards have +50% performance compared to the 58xx cards, you can imagine how fast they'll be.

So 5870 + ~50% performance = the new 6970.

I'm assuming the 6970 will be at least 33% faster than the 480.
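To see what those guesses imply when chained together, here is a rough Python sketch. Every ratio in it is a guess or rumor quoted in this thread, not a benchmark result, and the variable names are just illustrative.

```python
# Relative performance, normalised so the HD 5870 = 1.0.
# Every ratio below is a guess or rumor quoted from the thread, not a measurement.
HD5870 = 1.0
GAIN_69XX_OVER_58XX = 0.50  # assumed: 69xx is +50% over 58xx
HD6970_OVER_GTX480 = 0.33   # assumed: 6970 is 33% faster than the 480
GTX580_OVER_GTX480 = 0.20   # rumored: 580 is 20% faster than the 480

hd6970 = HD5870 * (1 + GAIN_69XX_OVER_58XX)
gtx480 = hd6970 / (1 + HD6970_OVER_GTX480)  # implied by the two guesses above
gtx580 = gtx480 * (1 + GTX580_OVER_GTX480)


def pct_faster(a, b):
    """How much faster a is than b, in percent."""
    return (a / b - 1) * 100


print(f"GTX 480 vs HD 5870: {pct_faster(gtx480, HD5870):+.1f}%")
print(f"HD 6970 vs GTX 580: {pct_faster(hd6970, gtx580):+.1f}%")
```

Under these assumptions the 480 would sit roughly 13% ahead of the 5870, and a 580 that is 20% faster than the 480 would still trail the projected 6970 by about 11%. Change any one guess and the conclusion moves with it.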


The difference from a 5770 to a 6870 is around +50% gains in fps in some games.

Isn't it more like +100%, since the 5870 is almost exactly 100% faster than a 5770 and the 6870 is nearly equal to the 5870?

blastingcap

Diamond Member

the 5870 is almost exactly 100% faster than a 5770

No.

Hmm I'd still call it closer to 80% or 90% than 50% at any respectable resolution.. (vs the 5870 I mean)


blastingcap

Diamond Member

Hmm I'd still call it closer to 80% or 90% than 50% at any respectable resolution.. (vs the 5870 I mean)

At 19x12, 5870 = 75% faster with high oc'ability. 6870 = 64% faster with lower oc'ability. 64% isn't that far away from 50% and he said "some" games not all.

5870 is twice the size of Juniper but due to bottlenecks it is not twice the performance.

http://www.techpowerup.com/reviews/HIS/Radeon_HD_6870/29.html

P.S. Memory bandwidth appears to be a major bottleneck for Cayman. There may be others. Things rarely scale perfectly. I would not be surprised if Cayman XT were not 50% faster than Cypress XT but more like 30-40% faster, due to some bottleneck or another.
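A quick Python sketch of the comparison above, for anyone checking the arithmetic. The 75% and 64% gains over the 5770 are the figures quoted in this post, and the "twice the size" ratio is the rough claim made here, not a measured die-area ratio; the names are illustrative.

```python
# Gains over the HD 5770 at 19x12 as quoted in the post (not re-measured here).
gain_over_5770 = {"HD 5870": 0.75, "HD 6870": 0.64}


def relative(a, b):
    """Performance of card a as a fraction of card b, via the shared 5770 baseline."""
    return (1 + gain_over_5770[a]) / (1 + gain_over_5770[b])


ratio = relative("HD 6870", "HD 5870")
print(f"HD 6870 is {(1 - ratio) * 100:.1f}% slower than the HD 5870")

# Scaling check: the post treats the 5870 as twice the size of Juniper (HD 5770).
size_ratio = 2.0  # rough claim from the post, assumed here
scaling_efficiency = (1 + gain_over_5770["HD 5870"]) / size_ratio
print(f"Performance gained per unit of size: {scaling_efficiency:.3f}")
```

With these inputs the 6870 lands about 6% behind the 5870, and doubling the chip buys 1.75x the performance, which is the imperfect scaling the post is pointing at.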


I am sorry, but your math is totally wrong. There is no way you can calculate die space per core. You do realize that the die is not made up of ONLY the cores?

Let's see,

GF100 with a 384-bit memory bus vs. GF104 with a 256-bit memory bus.

ROPs: 48 vs. 32

Now, if you had calculated transistors per mm^2 of die space, then it would make a little bit more sense.

3B/530 ~ 5.66 million transistors/mm^2.

1.95B/332 ~ 5.87 million transistors/mm^2.

OMFGBBQ, nearly 6 million transistors/mm^2.. that's seriously insane.

My math is simplified but my point remains absolutely valid - GF104 consumes less power and provides greater performance per mm^2 than GF100. And the fact that GF104 came out right on the heels of GF100 suggests that any other architectural updates Nvidia releases on 40nm will come with the kind of gains in power draw and performance per mm^2 of die space that AMD just demonstrated.

"maybe a NV flavored x86 chip in a few years?"

Intel will never give Nvidia an x86 license. CUDA doesn't run x86 as well as a real CPU.

IF the 580 has more cores than the 480 = it'll use more power.

IF the 580 has a wider memory bus than the 480 = bigger chip = worse yields than the 480.

I'm going with 512 cores and a 300W TDP! 🙂 You know the 580 will be more power hungry (it does have more cores) and hotter than the 480 😛

Well, don't bet on Intel denying NV an x86 license when the state department pressures them to open up competition. But they might voluntarily do it, since they might just welcome a 3-player market more.

I also heard a rumor that 580s will be in 300W range. Now that would truly be a monster. Power hungry monster. But it is also rumored to be about 20% faster so at least you get performance for the added power and heat. I wonder if it'll come standard with water cooling system or some huge Cu cooler on top.

The point was... you can expect the new 6950 and 6970 to be AT LEAST 50% faster than the 5850 and 5870. 🙂

I don't think AMD would put out a new architecture with even more improvements than the 68xx have and not have equal or better overall performance gains.

Intel and Nvidia seem to have a love/hate relationship going, where they like to play dirty with one another. Nvidia hasn't been nice to Intel and vice versa... Why would Intel give them an x86 license and risk losing market share (however unlikely it is that Nvidia could compete with Intel in a CPU race)? I almost think relations between Intel and AMD are better than Intel's and Nvidia's ^-^.

And AMD is currently a direct competitor to Intel (though it doesn't have much of the CPU market, it keeps Intel out of trouble having them around).

