poke01

Diamond Member

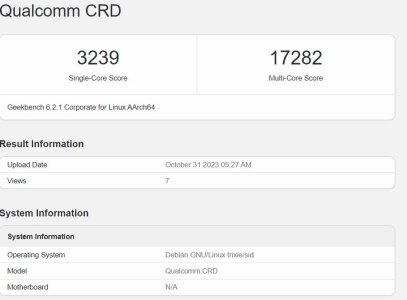

Qualcomm's working on a 2024 PC chip codenamed "Hamoa" with up to 12 cores (8P+4E).

Said to have the same cache layout as M1: large private L1$, per-cluster L2$ (cluster = 4 cores, 12MB per cluster), and a lot of LLC.
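Quick back-of-the-envelope on what that would mean for total L2. This is only a sketch from the rumored figures above, and it assumes every cluster (P and E alike) really gets the same 12MB, which isn't confirmed:

```python
# Rough L2 total from the rumored figures (not confirmed specs).
CORES = 12               # "up to 12 (8P+4E)"
CORES_PER_CLUSTER = 4    # "cluster = 4 cores"
L2_PER_CLUSTER_MB = 12   # "12MB for every cluster"; assumed to apply to E-cores too

clusters = CORES // CORES_PER_CLUSTER
total_l2_mb = clusters * L2_PER_CLUSTER_MB
print(f"{clusters} clusters, {total_l2_mb} MB L2 total")
```

So three clusters and 36MB of L2 in total, if the numbers hold.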

source:
