Discussion Qualcomm Snapdragon Thread

Page 78 - AnandTech community

SarahKerrigan

Senior member

I'm not sure any company now gives any detail about branch prediction except some vague terms such as TAGE. I don't think even BTB sizes are known (but I might be wrong on this). This has become so tricky that it looks like black magic with a lot of secret sauce, some covered by patents, some not. Giving too many details might break some competitive advantage and open the door to patent trolls.

IBM is historically decent about this, at least - there was a whole paper at ISCA about z15's branch prediction structures, for instance, and IBM Systems Journal had an in-depth article about z13 uarch that covered branch prediction as well.

I believe Arm discloses BTB sizes too - though it seems like Arm presentations have been getting a little bit vaguer each year.

EDIT: Repeatedly mangled which papers I was referring to. Clearly, I need to wake up more before posting.

Ghostsonplanets

Senior member

Snap 8G4 is known to cost $250 - 300. So that is a healthy revenue increase for QCOM.

The X Series' planned refresh for 2025 and supply for this year are very interesting. We now also know about another segment that'll be covered by the X series: the budget mainstream one.

@FlameTail Another X series SoC discovered: Canim for the $599 - $799 segment.

Hopefully it's still 8C

igor_kavinski

Lifer

There could be another way to improve branch prediction. The developer could run a profiling tool from the CPU manufacturer through the common use cases of their software and store the resulting branch prediction information. Once enough data is collected, it can be "compressed" into a sort of branch prediction "fingerprint" of that particular executable, so that instead of relying on a one-size-fits-all general branch predictor, the CPU can look up a developer-populated custom BTB structure. When the developer doesn't populate the custom BTB structure, the CPU falls back to the general BTB. The developer-populated BTB can also be much smaller.

As to how the developer will tell the OS to use the custom BTB, it will require some OS level change to let the OS know before starting execution (maybe some flag in the EXE header) that the executable to be executed has its own "hints" and load the hints from the "BTB hints" portion of the EXE into the CPU's custom BTB structure which will hopefully yield a higher hit rate.

Sorry if I wasn't able to explain that too well.
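To make the "compress a profile into a fingerprint" step a bit more concrete, here is a minimal Python sketch, assuming the profile is just a list of (branch address, taken?) records gathered during profiling runs. Everything in it is hypothetical: `build_hint_table`, `predict`, and the 95% bias and 100-sample thresholds are illustrative choices, not an existing CPU, OS, or toolchain feature, and the sketch only records direction hints, not branch targets. Only frequent, strongly biased branches get an entry, which is what would let such a table be much smaller than a general-purpose BTB; anything else falls back to the normal predictor.

```python
from collections import defaultdict


def build_hint_table(profile, min_bias=0.95, min_samples=100):
    """Compress (branch_pc, taken) profile records into a small hint table.

    Only branches seen at least `min_samples` times whose taken rate is at or
    above `min_bias` (or at or below 1 - min_bias) get an entry; everything
    else is left to the general-purpose predictor.
    """
    counts = defaultdict(lambda: [0, 0])  # pc -> [times taken, times seen]
    for pc, taken in profile:
        counts[pc][0] += int(taken)
        counts[pc][1] += 1

    table = {}
    for pc, (taken, seen) in counts.items():
        if seen < min_samples:
            continue
        rate = taken / seen
        if rate >= min_bias:
            table[pc] = True   # hint: predict taken
        elif rate <= 1 - min_bias:
            table[pc] = False  # hint: predict not taken
    return table


def predict(pc, hint_table, fallback):
    """Use the developer-supplied hint if present, else the general predictor."""
    if pc in hint_table:
        return hint_table[pc]
    return fallback(pc)


# Hypothetical profile: 0x400 is taken 99% of the time, 0x480 alternates.
profile = ([(0x400, True)] * 990 + [(0x400, False)] * 10
           + [(0x480, i % 2 == 0) for i in range(1000)])
hints = build_hint_table(profile)
print(hints)                                    # {1024: True}
print(predict(0x480, hints, lambda pc: False))  # no hint, falls back: False
```

The alternating branch at 0x480 gets no entry at all: a static table has nothing useful to say about it, so it is left entirely to the hardware predictor.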

SarahKerrigan

Senior member

"Statically hint at branch directions and targets discovered during PGO..." Oh yeah! I've seen this one!

SarahKerrigan

Senior member

Moving on from my nostalgia for the EPIC glory days - branch behavior, like memory access behavior, has a tendency to be dynamic. It is not always or even generally predictable at compile time. This is something that gets brought up periodically in various forms - direction and target hints in the instruction stream, branch target calculation distinct from branch instructions (which is what SHmedia does), etc. Generally, it doesn't work as well as its advocates think it will.
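A toy simulation shows why run-time behavior matters. Assume a single branch whose direction flips halfway through a run (a made-up pattern: taken 500 times, then not taken 500 times). A fixed compile-time hint can only ever be right for one phase, while even the simplest dynamic scheme, a 2-bit saturating counter, adapts after two mispredictions. Real predictors (TAGE and the like) are far more elaborate; this Python sketch only illustrates the static-versus-dynamic gap, and the function names are illustrative.

```python
def static_hint_correct(history, hint_taken):
    """Correct predictions when every execution follows one fixed hint."""
    return sum(1 for taken in history if taken == hint_taken)


def two_bit_counter_correct(history, initial_state=2):
    """Correct predictions for a classic 2-bit saturating counter.

    States 0-1 predict not taken and 2-3 predict taken; after each branch the
    counter moves one step toward the actual outcome, saturating at 0 and 3.
    """
    state, correct = initial_state, 0
    for taken in history:
        if (state >= 2) == taken:
            correct += 1
        state = min(state + 1, 3) if taken else max(state - 1, 0)
    return correct


# Made-up phase change: taken for 500 executions, then not taken for 500.
history = [True] * 500 + [False] * 500

print(static_hint_correct(history, hint_taken=True))  # 500 of 1000
print(two_bit_counter_correct(history))                # 998 of 1000
```

Either static hint direction scores 500 here; the counter only pays two mispredictions at the phase change.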

Nothingness

Diamond Member

Anything that tries to predict the dynamic behavior of a program at compile time is quite risky (pun intended or not). I won't troll with VLIW and Itanium... No, I won't.

That said, Arm recently introduced a new extension (another one...) that adds hinted conditional branches. It is optional from Armv8.7 and mandatory from Armv8.8.

Branch Consistent conditionally to a label at a PC-relative offset, with a hint that this branch will behave very consistently and is very unlikely to change direction.

But that requires knowledge at compile time (gcc/clang builtin __builtin_expect can help the programmer make decisions).

That’s not the point. Multi-day battery life is a good thing.

Why?

I can only assume you feel having "too much" battery life at first is good because when it is degraded after a few years it'll still provide one day of battery life? Because otherwise I see no point in having a battery that still has 60% charge left when you've used it all day.

I wonder if we'd see smaller batteries if they could be made so that degradation happened 10x slower than it does now? I'm sure part of the battery size in devices limited by expected battery life (i.e. phones and laptops like the MBA, as opposed to laptops like the MBP that have batteries just under the FAA size limit) is there to account for the expected decline in battery capacity over time.
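A back-of-the-envelope version of this sizing argument, as a Python sketch: if a device must still deliver a full day after aging, its capacity when new has to be the daily need divided by the fraction of capacity left at end of life. The 40 Wh daily draw and the 80% end-of-life retention are hypothetical round numbers, and `capacity_needed` is an illustrative helper; real aging is not linear and depends on usage, temperature, and charging habits.

```python
def capacity_needed(daily_wh, end_of_life_retention):
    """Capacity (Wh) needed when new so a full day still fits after aging.

    end_of_life_retention: fraction of original capacity left at the end of
    the device's intended life (0.8 means 20% of capacity has been lost).
    """
    if not 0 < end_of_life_retention <= 1:
        raise ValueError("retention must be in (0, 1]")
    return daily_wh / end_of_life_retention


daily = 40.0                                     # hypothetical Wh used per day
today = capacity_needed(daily, 0.80)             # 20% loss over the device's life
slower = capacity_needed(daily, 1 - 0.20 / 10)   # same aging, 10x slower
print(round(today, 1), round(slower, 1))         # 50.0 40.8
```

Under these assumptions, the aging margin shrinks from 10 Wh to under 1 Wh, which is the kind of battery size reduction the question is getting at.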

FlameTail

Diamond Member

Two new growth drivers for Qualcomm

The SM8750 (Snapdragon 8 Gen 4), which will enter mass production in 2H24, is expected to be priced 25–30% higher than the current flagship…

The X Elite and X Plus chips, used for Windows on ARM (WOA), will reach about 2 million unit shipments in 2024, with expected year-on-year growth of at least 100–200% in 2025.

Sounds good.
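Taking the quoted figures at face value, 100–200% year-on-year growth on about 2 million units in 2024 works out to roughly 4 to 6 million units in 2025 (the quote says "at least", so read these as lower bounds):

$$
2\,\text{M} \times (1 + 1.00) = 4\,\text{M}, \qquad 2\,\text{M} \times (1 + 2.00) = 6\,\text{M}
$$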

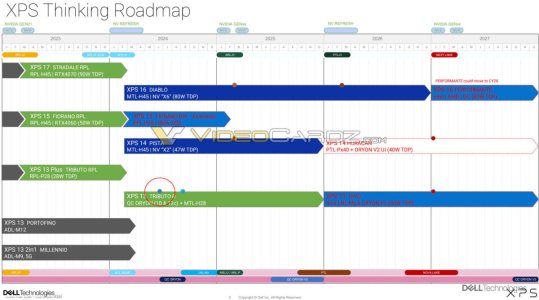

The X Elite and X Plus will have modified versions in 2025, with a reduction in end product prices.

What kind of modification can we expect? Also, when is X Elite G2/Oryon V2 landing then?

Dell leak said 2025H2

Additionally, Qualcomm plans to launch a low-cost WOA processor codenamed Canim for mainstream models (priced between $599–799) in 4Q25. This low-cost chip, manufactured on TSMC’s N4 node, will retain the same AI processing power as the X Elite and X Plus (40 TOPS).

Sounds good.

FlameTail

Diamond Member

Having better chip efficiency will also prolong the battery lifespan (fewer recharge cycles).

FlameTail

Diamond Member

Qualcomm has implemented hardware acceleration for x86 emulation?

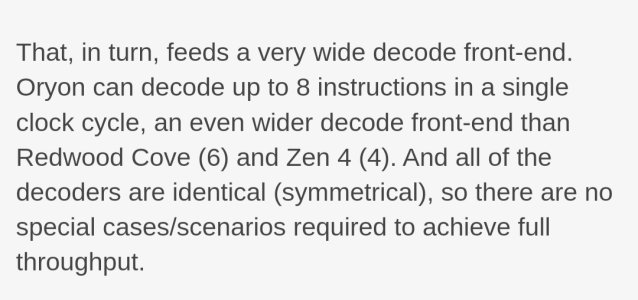

Oryon also has hardware accommodations for x86’s unique memory store architecture – something that’s widely considered to be one of Apple’s key advancements in achieving high x86 emulation performance on their own silicon.

SarahKerrigan

Senior member

Anandtech calling the x86 memory model a "unique memory store architecture" is really weird.

Good to see, though. Should make life easier for the WoA emulation folks.
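x86's ordering rules are usually described as TSO (total store order), while Arm is weakly ordered. A classic way to see the difference is the message-passing litmus test: one thread writes `data` then `flag`, the other reads `flag` then `data`. The Python sketch below is a toy enumerator, not a formal model of either architecture. In "TSO-like" mode each thread's accesses stay in program order (TSO's one relaxation, a later load passing an earlier store, cannot occur here because each thread only stores or only loads); in "relaxed" mode, accesses to different addresses within a thread may appear in any order. The outcome r1 = 1, r2 = 0 ("saw the flag but stale data") only shows up in relaxed mode, which is why x86 emulation on a weakly ordered core needs either extra barriers or hardware help like the accommodations described in the quoted article.

```python
from itertools import permutations

# Message-passing litmus test. Ops are (kind, address, value_or_register).
T0 = [("st", "data", 1), ("st", "flag", 1)]        # writer thread
T1 = [("ld", "flag", "r1"), ("ld", "data", "r2")]  # reader thread


def thread_orders(ops, relaxed):
    """Orders in which one thread's accesses may become visible (toy model)."""
    if not relaxed:
        return [list(ops)]  # program order preserved
    # All accesses here target different addresses, so a weakly ordered
    # core without barriers may let them appear in any order.
    return [list(p) for p in permutations(ops)]


def interleavings(a, b):
    """Every merge of a and b that keeps each sequence's internal order."""
    if not a:
        return [list(b)]
    if not b:
        return [list(a)]
    return ([[a[0]] + rest for rest in interleavings(a[1:], b)]
            + [[b[0]] + rest for rest in interleavings(a, b[1:])])


def outcomes(relaxed):
    """Set of (r1, r2) results reachable under the chosen ordering rules."""
    results = set()
    for order0 in thread_orders(T0, relaxed):
        for order1 in thread_orders(T1, relaxed):
            for trace in interleavings(order0, order1):
                mem = {"data": 0, "flag": 0}
                regs = {}
                for kind, addr, x in trace:
                    if kind == "st":
                        mem[addr] = x
                    else:
                        regs[x] = mem[addr]
                results.add((regs["r1"], regs["r2"]))
    return results


print("TSO-like:", sorted(outcomes(relaxed=False)))  # no (1, 0)
print("relaxed: ", sorted(outcomes(relaxed=True)))   # includes (1, 0)
```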

FlameTail

Diamond Member

I wonder if Oryon V2 will be ARMv9... SVE and SME would be nice to have.

FlameTail

Diamond Member

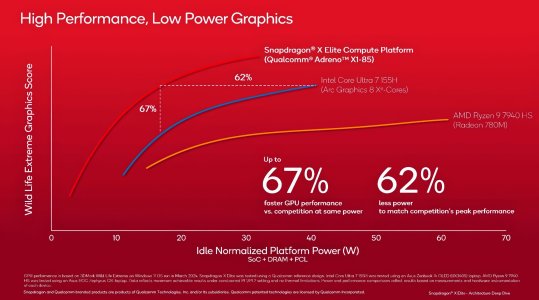

Videocardz has some extra information about Adreno X1 that's not in the AnandTech article:

videocardz.com

Qualcomm details Adreno X1 GPU: specs, performance and Adreno Control Panel revealed - VideoCardz.com

Qualcomm Adreno X1 revealed. Finally, some details on Snapdragon X Elite/Plus GPU architecture. Less than a week before the official launch of the Qualcomm Snapdragon X Series, the company has revealed more details about its graphics architecture. The new Adreno X1 series is the first generation...

FlameTail

Diamond Member

Another app goes native for WoA.

Slack

The Hardcard

Senior member

Weird as in, “what does he mean, so many other CPU designs have the same architecture,” or as in “x86 so dominates the industry, even things only they implement should be referenced as typical”?

SarahKerrigan

Senior member

Weird as in nobody calls it a "memory store architecture." Just strange wording, nothing deeper.

igor_kavinski

Lifer

Yes! You get it!

If the battery is not easily replaceable, I would prefer that it lasted longer and one way to make it last longer is to charge it LESS, which a bigger battery enables you to do.
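A rough way to see why charging less helps, as a Python sketch: assume wear tracks equivalent full charge cycles (a simplification; real Li-ion aging also depends on depth of discharge, charge level, temperature, and calendar age). The same daily energy spread over a bigger battery means fewer full cycles per year, and so does a more efficient chip drawing less energy from the same battery. The 40 Wh and 30 Wh daily draws and the 50 Wh and 100 Wh capacities are hypothetical, and `full_cycles_per_year` is an illustrative helper.

```python
def full_cycles_per_year(daily_wh, capacity_wh, days=365):
    """Equivalent full charge cycles per year for a given daily energy draw."""
    return days * daily_wh / capacity_wh


print(full_cycles_per_year(40, 50))   # 292.0: hypothetical 50 Wh battery
print(full_cycles_per_year(40, 100))  # 146.0: same usage, double the capacity
print(full_cycles_per_year(30, 50))   # 219.0: 50 Wh, chip using 25% less energy
```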

Plus, if you were to do an experiment where you offer people two laptops for the same price, one with a smaller battery and one with a larger battery, people would overwhelmingly choose the one with the larger battery despite the extra weight, because if there is no difference in price, why go for less?

It's Apple that started this trend of "small battery is good" with the MacBook Air, because for some weird reason balancing a stupid laptop on the palm of our hand somehow makes us wizards of computing. I was fine when phones were fat and had replaceable batteries. Now I worry that the phone I use daily and don't want to get rid of will someday start having battery issues, and then I will have to take it to some mobile repair shop for "open surgery", and who knows if the phone will work the same after that. I much preferred replacing the battery myself.

FlameTail

Diamond Member

Interestingly, the Snapdragon X’s 4 core cluster configuration is not even as big as an Oryon CPU cluster can go. According to Qualcomm’s engineers, the cluster design actually has all the accommodations and bandwidth to handle an 8 core design, no doubt harking back to its roots as a server processor. In the case of a consumer processor, multiple smaller clusters offer more granularity for power management and serve as a better fundamental building block for making lower-end chips (e.g. Snapdragon mobile SoCs), but they come with some trade-offs, namely slower core-to-core communication when those cores are in separate clusters (and thus have to go over the bus interface unit to another core). It’s a small but notable distinction, since both Intel and AMD’s current designs place 6 to 8 CPU cores inside the same cluster/CCX/ring.

4P vs 8P cluster, which is better?

8P cluster

• Better MT performance due to lower core-to-core latency

• Better ST performance because an individual core can access more cache (assuming the 8P cluster has double the cache of the 4P cluster).

4P cluster

• Better power efficiency
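One way to put a number on the core-to-core trade-off is to count how many core pairs end up in different clusters, since those pairs have to communicate over the interconnect. This Python sketch assumes a 12-core part built from three 4-core clusters (the X Elite layout) and compares it with a hypothetical 8 + 4 split, plus 8-core layouts; `cross_cluster_fraction` is an illustrative helper. It weights every pair equally, so the real impact depends on how the OS schedules threads and how much data they actually share.

```python
from fractions import Fraction
from math import comb


def cross_cluster_fraction(cluster_sizes):
    """Fraction of all core pairs whose two cores sit in different clusters."""
    total_pairs = comb(sum(cluster_sizes), 2)
    if total_pairs == 0:
        return Fraction(0)
    same_cluster_pairs = sum(comb(n, 2) for n in cluster_sizes)
    return Fraction(total_pairs - same_cluster_pairs, total_pairs)


# 12 cores as three 4-core clusters vs. a hypothetical 8 + 4 split,
# plus 8 cores as two 4-core clusters vs. one 8-core cluster.
for layout in ([4, 4, 4], [8, 4], [4, 4], [8]):
    frac = cross_cluster_fraction(layout)
    print(layout, f"{float(frac):.0%} of core pairs are cross-cluster")
```

With three 4-core clusters, about 73% of core pairs are cross-cluster; an 8 + 4 split would cut that to about 48%, and a single 8-core cluster to zero.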

igor_kavinski

Lifer

4 cores in 2024? No thanks. Fewer cores would get overwhelmed more easily by heavily threaded applications or heavy multitasking, so I don't see the point unless you just want to do light tasks. On a tablet or maybe a netbook, such a cluster might be fine, but in a work laptop? Get the hell out of my work laptop!

The Hardcard

Senior member

It isn’t a weird reason, it is a straightforward one. It makes sense to be willing to endure the greater effort and reduced flexibility of thicker, bulkier, heavier devices. I’ve done it myself and probably will again.

But it always has been, and always will be, a trade-off, never a feature.

Yes, it is a tradeoff. That's something the people who are always cheering when battery life is increased and think the world is going to end if it is reduced don't get.

They will say "why does Apple want the iPhone to be thin, I would rather have a device 50% thicker with double the battery life." That's what THEY may want, but that's not what I want. If I could, I'd get one that's even thinner. Not because I care about thinness per se, but because I prefer it be lighter. My nearly two-year-old 14 Pro Max still has 60% or so battery left at the end of my day's use of it. That's down from where it was when it was new, but it could have had a smaller battery without impacting MY use of it.

People who want a thicker phone with longer battery life can buy today's iPhone (or my hypothetical thinner one) and put it in a case that builds in a battery. They get what they want without impeding my choices. That's a little harder to do with say a Macbook Air, since no one puts laptops in a "case", but the Macbook Pro exists for people who want to trade off increased weight for a larger battery.

I'm not saying the Pro Max has too big of a battery - it is too big for me but Apple is choosing it based on their overall customer base. But they don't need to satisfy everyone, because those willing to trade size/weight for battery have a perfectly good DIY option.
