Water cooled, top-end thermal paste, undervolt, and a third-party contact frame: it still throttles. You have to delid it on top of all that for it to run properly.

The product isn't fit for purpose, plain and simple. Intel needs to be sued for false advertising and forced to put a disclaimer on the box: "Warning: delidding required for correct operation".

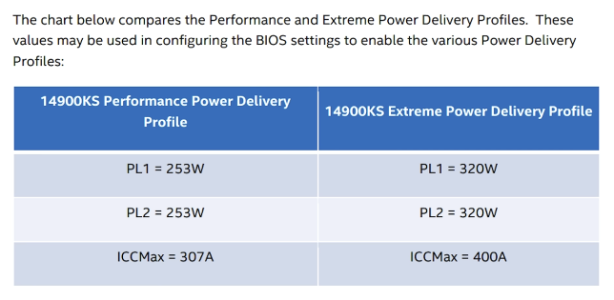

I mean, they're basically admitting there's a problem themselves:

> Prepare to pay an extra $200 vs a 'standard' Core i9-14900KS model.
> (www.tomshardware.com)

Ship a defective product, then charge $200 extra to "fix" it. Who could possibly defend this anti-consumer behavior?

And still not a peep since they said they were "looking into" why their CPUs fail certain workloads. It's much easier to just keep quiet than to tell reviewers and board vendors to enforce PL1/PL2 and risk lower scores on the benchmark charts.