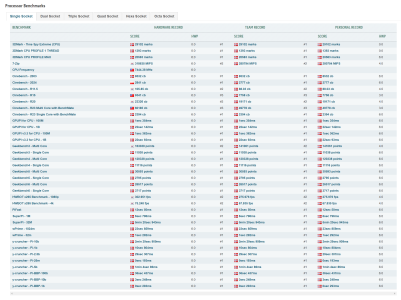

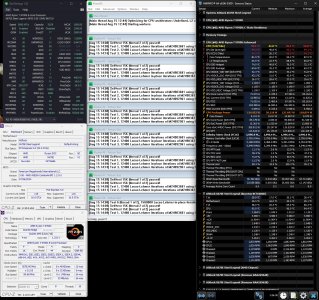

Ok, here we go: first stress test run, using the traditional Prime95 blend test (latest version). IMO it's a good way to establish gaming stability and to test my CPU mounting efforts.

🙂

All BIOS settings on auto, except PBO enabled, FCLK set to 2000 MHz and the EXPO profile enabled for my RAM.

Kind of surprised at the average power draw: even with AVX512 disabled (useless for gaming atm) it averaged 118 W, as opposed to the publicised 88 W max TDP. Perhaps ASRock has implemented AGESA 1.2.0.0 in a custom way for Zen 5?

CPU mounted with Thermal Grizzly's thermal guard and Kryonaut Extreme TIM; system enclosed in an Antec P8 case.

![20240809_152625[1].jpg](https://anandtech-data.community.forum/attachments/104/104947-cd55ed14184c635fd045bad553b36976.jpg?hash=zVXtFBhMY1)