busydude

Diamond Member

That track setting looks cool. I didn't play that deep into Dirt 2; was there any forest racing like that?

Yes, in Malaysia... and China.

And... ewww, they are playing that game using a keyboard.

Here's a video of the 6990 playing Dirt 3; it's hard to tell how loud the card is: http://www.guruht.com/2011/03/radeon-hd-6990-tested-using-dirt-3-live.html

Doesn't look very smooth to me. Doesn't look playable.

"This is Colin McRae Dirt 3 on AMD's also upcoming Radeon HD 6990, connected to three Full HD displays in an Eyefinity setup. While the graphics looked nice and crisp, we don't know if this was the full eye candy the game has to offer. The gameplay was, however, very smooth on the Radeon HD 6990, aka Antilles."

Elaborate, please.

Not sure I like the blurred side-monitor views; they just look stretched to me!

Personally, I think ignoring the specs is a hack job. It just shows what you aren't capable of engineering.

Beyond whatever PCI-SIG can or cannot do about it, when you ignore the spec you are selling a card that other equipment (motherboards, for example) isn't designed to run with.

Unless the card exhausts all of its heat outside the case, you are also dumping an incredible amount of heat into the case. If it does exhaust it all, then we are looking at one hell of a cooler: one that moves all that heat out, keeps the card cool, and doesn't sound like a Dustbuster.

I agree with this.

<- educated and experienced engineer

Why is it I feel that this thread is getting derailed into an "armchair engineer" thread again? :hmm:

Well, it still strikes me as odd that they would always try to stay under the PCI-E limit and then suddenly just say to hell with it. That's assuming these slides are correct.
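For reference, the "PCI-E limit" is connector arithmetic: the PCIe CEM spec budgets 75W from an x16 slot, 75W per 6-pin and 150W per 8-pin auxiliary connector, and caps a single card at 300W. A minimal sketch of that sum (the function and constant names are just illustrative):

```python
# Per-source power budgets in watts, as given in the PCIe CEM spec.
SLOT_W = 75
AUX_W = {"6-pin": 75, "8-pin": 150}
SPEC_MAX_W = 300  # per-card ceiling in the spec

def board_power_limit(connectors):
    """Max board power: the x16 slot plus each auxiliary connector."""
    return SLOT_W + sum(AUX_W[c] for c in connectors)

def within_spec(connectors):
    """True if this connector layout stays at or under the 300W card limit."""
    return board_power_limit(connectors) <= SPEC_MAX_W

for cfg in (["6-pin", "6-pin"], ["6-pin", "8-pin"], ["8-pin", "8-pin"]):
    print(cfg, board_power_limit(cfg), within_spec(cfg))
```

6+6 gives 225W and 6+8 gives exactly 300W; going to 8+8 (375W) is the step that leaves the spec behind.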

Explains a lot, thanks for the write-up.

Not sure of your age (that's only relevant because I'm not sure whether you were "on the scene" of computers at the time), but the CPU industry went through a similar hesitation when it came to producing CPUs that generated so much heat they actually required a fan to air-cool them.

Once the industry and the consumer got over their reluctance to the idea, the market never looked back, and we quickly rushed toward the practical limits of conventional HSF technology.

The same thing happened with GPUs and multi-slot coolers. There was an initial reluctance to "go there"... but once we did, it was fair game.

I see the 300W "boundary" as nothing more. It is an arbitrary value affixed within a spec that served a purpose in its time but that time has come and gone.

I've no doubt there were practical reasons for the 300W limitation, but engineering progress has produced cost-conscious solutions that address those original concerns, so I fully expect the arbitrary 300W limit to be lifted.

When the DDR2 spec called for a max Vdimm of 1.95V, that was on the expectation/assumption that RAM sticks would never have heatspreaders or active cooling. Then engineering developed heatspreaders and DIMM heatsinks with fans (the Dominator series, etc.), and the arbitrary 1.95V limit no longer had a basis in engineering.

What you guys would claim to be hacks and engineering defeatism is actually the opposite. The spec limits exist because of the lack of engineering solutions to a real problem. When engineers resolve those real problems it is not a hack, it is opportunity.

There may be other downsides to these solutions that lead you personally to not purchase the product, but that is a personal decision and nothing more.

I personally had no problem running my Mushkin Redlines at 2.2V, as spec'ed by Mushkin but in violation of the JEDEC DDR2 spec. Why? Because my mobo was designed for it (otherwise I wouldn't have been able to set the Vdimm that high), and my PSU was designed for it.
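As a rough illustration of why cooling is what moves the voltage ceiling: assuming dynamic power scales with the square of voltage at a fixed clock (a first-order approximation that ignores leakage), going from 1.95V to 2.2V means roughly 27% more heat from the same DIMM. A minimal sketch under that assumption:

```python
def relative_power(v_new, v_ref):
    """First-order estimate: dynamic power ~ V^2 at fixed frequency and load (assumption)."""
    return (v_new / v_ref) ** 2

increase = relative_power(2.2, 1.95) - 1.0
print(f"~{increase:.0%} more power at 2.2V than at 1.95V")
```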

And the existence of >300W video cards is not suddenly going to create an unforeseen dynamic inside people's computer cases. People have been running tri/quad SLI and CrossFire setups for years, with combined heat output well in excess of 300W.

This sort of bean-counting of watts per PCIe slot is silly and arbitrary. If you want the product, you scale your PSU and cooling accordingly; if you don't, you don't buy it.
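Scaling the PSU "accordingly" is mostly addition. A minimal sketch, assuming hypothetical peak draws for a dual-card system and a 25% headroom factor (a common rule of thumb, not a spec requirement):

```python
import math

def recommended_psu_watts(loads_w, headroom=0.25, step=50):
    """Sum estimated peak draws, add headroom, round up to the next PSU size step."""
    total = sum(loads_w.values())
    target = total * (1 + headroom)
    return total, math.ceil(target / step) * step

# Hypothetical peak draws in watts; two cards at the 375W connector maximum.
system = {"cpu": 130, "gpu0": 375, "gpu1": 375, "board_ram_drives_fans": 100}
total, psu = recommended_psu_watts(system)
print(total, psu)  # 980 1250
```

The headroom and rounding step are both tunable assumptions; the point is only that the budget is set per system, not per slot.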

Yeah, those are pretty good rumors, considering TechSpot thought the 6990 would have 3840 SPs and NordicHardware said the 590 was supposed to be out last month.

I would trust Napoleon long before any of those sites.

Or they could go the route they did with the GTX 295: use all the shaders but cut down the memory interface. But anyway, are Chiphell and Napoleon the same source Silverforce used for all his "6970 destroys the HD 5970" claims, and for your assertions that the 6970 would be the fastest single GPU? Or were those rumors from page-hit-grabbing sites being repeated?

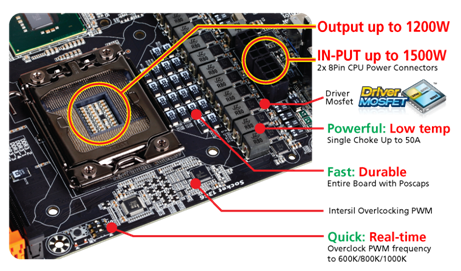

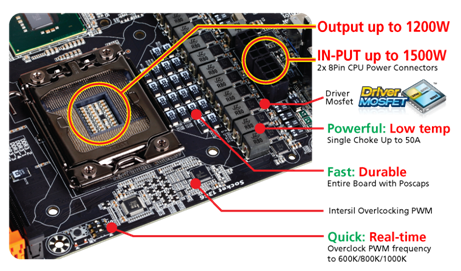

OC-PEG provides two onboard SATA power connectors for more stable PCIe power when using 3-way and 4-way graphics configurations. Each connector can draw power from a different phase of the power supply, helping to provide a better, more stable graphics overclock. The independent power inputs for the PCIe slots help improve even single-card overclocking. For 4-way CrossFireX™, users must install OC-PEG to avoid overcurrent in the 24-pin ATX connector. The entire board also features POSCAPs, which simplify the insulation process so overclockers can quickly reach sub-zero readiness.
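Why multi-card setups stress the 24-pin: under the PCIe CEM spec, each x16 graphics slot may draw up to 5.5A from 12V (66W), and without extra inputs that all arrives through the motherboard's 24-pin connector. A SATA power connector carries 3.3V, 5V and 12V on three pins each; the 1.5A per-pin figure below is the commonly cited rating and is treated here as an assumption. A rough sketch of worst-case slot current versus what two SATA inputs could add on 12V:

```python
SLOT_12V_MAX_A = 5.5   # max 12V current per x16 graphics slot (PCIe CEM)
SATA_12V_PINS = 3      # 12V pins per SATA power connector
SATA_PIN_A = 1.5       # assumed per-pin rating for SATA power contacts

def worst_case_slot_12v(num_cards):
    """Worst-case 12V current all graphics slots could pull through the board."""
    return num_cards * SLOT_12V_MAX_A

def sata_12v_capacity(num_connectors=2):
    """Extra 12V current that SATA power inputs (as on OC-PEG) could supply."""
    return num_connectors * SATA_12V_PINS * SATA_PIN_A

for n in (1, 2, 3, 4):
    print(f"{n} card(s): up to {worst_case_slot_12v(n):.1f} A on 12V through the slots")
print(f"two SATA inputs add ~{sata_12v_capacity():.1f} A of 12V capacity")
```

Real cards usually pull most of their power from the auxiliary connectors, so this is an upper bound on slot draw, not a measurement.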

I thought it had already pretty much been established that consumers didn't want to go there when it comes to power-hungry cards that produce tons of heat and noise? I had a GTX 470 and 480 and personally didn't mind the heat and noise, but Nvidia took a beating sales-wise and PR-wise versus the cooler-running 5xxx series. I believe the consumers spoke and Nvidia listened.

Haha, oh wow. I'm sorry, but I'm going to want to see a link for this. You're not getting away scot-free with statements like these on my watch 😀

How are they going to cram two 250W GPUs under the 375W limit of two 8-pin connectors (plus the slot) when the 6990's two 200W 6970s barely make the mark (and still had to be downclocked)? How much would the 580s have to be downclocked before the card gets outperformed by the 6990, and how would they sell it at any profit if that happens?
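The arithmetic behind that question: the slot plus two 8-pin connectors give 375W, so two GPUs at the 250W figure used above (taken from the post, not an official spec) would each have to fit in about 187.5W, i.e. 75% of their rated power. How much clock speed that costs also depends on how far voltage can drop. A minimal sketch, with a hypothetical `overhead_w` for board components other than the GPUs:

```python
def per_gpu_budget(total_budget_w, num_gpus, overhead_w=0.0):
    """Power each GPU may draw after subtracting board overhead (memory, VRM losses, fan)."""
    return (total_budget_w - overhead_w) / num_gpus

BUDGET_W = 75 + 2 * 150  # x16 slot + two 8-pin connectors = 375 W
GPU_W = 250              # per-GPU figure used in the post (assumption)

budget = per_gpu_budget(BUDGET_W, 2)
print(budget, f"{budget / GPU_W:.0%} of {GPU_W} W")
```

Any non-zero overhead only shrinks each GPU's share further.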

I might be wrong, but I don't remember anyone posting that the 590 will absolutely, as a matter of fact, not have 1024 cores. In the absence of any confirmation, we just choose to go with our own reasoning rather than quarter-page tabloid rumors.

Sure, we could shut up about it if we have nothing good to say, but how is that any worse than hyping up a product we know nothing about? This is, after all, a speculative debate: we have to guess one way or another*, and right now the best we have is "it's not going to happen".

* - well, at least we would if this was a gtx 590 thread.

Take a look at this new Gigabyte motherboard introduced yesterday. It's got all sorts of 'custom' power features.

It adds OC-PEG.

I wonder if "different phases" means the 3.3V or 5V rails?

GIGABYTE GA-X58A-OC designed for extreme overclocking features

So you are making a claim and not bothering to back it up with evidence when asked? Bye-bye credibility 😀

Holy mother of god, they've engineered a mobo that can support a CPU chowing down 1200 watts!? Now that is insane 😱

Definitely going after those LN2 8GHz OC'ers. Or maybe Intel aims to have Haswell be a 10GHz 1kW TDP water-cooled monster 😛

What percentage of people on this forum actually use three monitors for gaming? Has to be less than 5%.

If I were designing a video card these days, it would be for the 1920x1080 120Hz guys.
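For scale, a quick comparison of raw pixel throughput between a three-display 1080p Eyefinity setup and a single 1920x1080 panel at 120Hz. The 60Hz refresh for the Eyefinity displays is an assumption, and this ignores everything except pixel count and refresh rate:

```python
def pixels_per_second(width, height, refresh_hz, displays=1):
    """Raw pixels the card must render per second at a given refresh rate."""
    return width * height * refresh_hz * displays

eyefinity = pixels_per_second(1920, 1080, 60, displays=3)
single_120 = pixels_per_second(1920, 1080, 120)
print(eyefinity, single_120, eyefinity / single_120)  # 373248000 248832000 1.5
```

So a 1080p/120Hz target is about two-thirds of the pixel load of triple-screen 1080p at 60Hz.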
