> I already discussed that with blastingcap in *Ryan Smith's* thread. You can check it out.

Summarize and/or link, please. I do not have all day free to reread that huge thread.

[TECH Report] As the second turns: the web digests our game testing methods

Page 25


> Like I asked earlier, who do we blind test? The general public? Gamers? Enthusiasts? The guys that claim they can see it?

I agree with you, but that is not what I asked for. How do you take the human factor into account? We need a method, not excuses.

I'd argue that your average person won't see it, because they're ignorant of what they're looking for.

> There are inherent flaws with the method you've provided. I was merely pointing them out.

Of course there are flaws. But if it is still the best method, that is what we must use. Simply pointing out the flaws of one method without providing a better alternative is not very constructive. Either the flaws are small enough that we can use the method, or they are too large to make any study conclusive. And in the latter case this thread can be closed, since no conclusion can be made regarding whether humans are affected by microstuttering. Neither of us wants that.

Imouto (Golden Member, joined Jul 6, 2011)

> Dude, I wasn't talking about TR's review methods but rather its findings. Despite that, AMD considered their findings to be accurate. Anyway, my point was not to cheerlead TR's review, but honestly it seems they are among the few sites that can offer more insight with their method. No offence, but at this moment AT is lacking in the GPU review department compared to many other sites.

Did you read their 660 Ti vs 7950 review? It looks like everyone just looks at the bars and jumps to a conclusion. They flatly said that one card or the other won when, if you think about those numbers for a moment, it's almost a tie in nearly every benchmark.

Think about the Medal of Honor bench I mentioned before.

> The result is an almost identical finish in every metric, with the slightest of advantages to the 7950 in the latency-focused numbers.

Slight advantage? WTF are you talking about, TR? It's the very same, and you'd have to be a frigging robot to notice the difference.

The same happens with the other benches, to varying degrees.

The same happens with the vocal crowd on this forum.

Dramatizing^8

After reading threads about this, I wonder how people live with the uneven frames that every XX Hz monitor shows from time to time when the FPS isn't exactly XX. Eye cancer, probably.
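The uneven frames described above can be illustrated with a simplified vsync model. This hypothetical helper assumes perfectly even rendering at a fixed FPS and that each finished frame is shown at the next refresh boundary (no triple buffering or render-ahead queue), so it is only a sketch of the cadence, not of any particular driver's behavior.

```python
import math
from fractions import Fraction


def displayed_durations(fps: float, refresh_hz: float, n_frames: int) -> list[float]:
    """How long (ms) each of the first n_frames stays on screen under a simple vsync model.

    Assumption: frame i finishes rendering at (i + 1) * frame_time and is shown
    at the first refresh boundary at or after that moment.
    """
    refresh = Fraction(1000) / Fraction(refresh_hz)  # ms per refresh, kept exact
    frame = Fraction(1000) / Fraction(fps)  # ms per rendered frame, kept exact
    # Index of the refresh at which each frame first appears on screen.
    shown_at = [math.ceil((i + 1) * frame / refresh) for i in range(n_frames + 1)]
    # A frame stays visible until the next frame replaces it.
    return [float((shown_at[i + 1] - shown_at[i]) * refresh) for i in range(n_frames)]


print([round(d, 1) for d in displayed_durations(50, 60, 10)])
```

Under these assumptions, 50 FPS on a 60 Hz monitor shows four frames for one refresh (about 16.7 ms) and then holds every fifth frame for two refreshes (about 33.3 ms), which is the periodic unevenness being described. At exactly 60 FPS every frame gets a single refresh.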

> Of course there are flaws. But if it is still the best method, that is what we must use. Simply pointing out the flaws of one method without providing a better alternative is not very constructive.

Whoever said it was the best method? You're assuming it is, which is why I'm pointing out that it's not perfect. It's quite constructive.

I don't like subjective hardware testing. There are very few exceptions, and graphics cards are not one of them.

> Either the flaws are small enough that we can use the method, or they are too large to make any study conclusive. And in the latter case this thread can be closed, since no conclusion can be made regarding whether humans are affected by microstuttering. Neither of us wants that.

You're missing the point. Okay, we're going to do blind testing. Great. Now who do we test? How do we test them? What are the thresholds that would lead to a finding being deemed conclusive or inconclusive?
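One common way to make "conclusive or inconclusive" concrete (not something proposed in this thread) is a forced-choice blind test such as ABX, scored with a one-sided binomial test: a tester who cannot actually see the difference is effectively flipping a coin on each trial. The sketch assumes independent trials, a 50% guessing rate and a 5% significance level; the function names are hypothetical.

```python
from math import comb


def abx_p_value(correct: int, trials: int) -> float:
    """Probability of scoring at least `correct` out of `trials` by pure guessing (p = 0.5)."""
    return sum(comb(trials, k) for k in range(correct, trials + 1)) / 2 ** trials


def min_correct_for_significance(trials: int, alpha: float = 0.05) -> int:
    """Smallest score that a guessing tester reaches with probability <= alpha.

    Returns trials + 1 when even a perfect score is not significant.
    """
    for k in range(trials + 1):
        if abx_p_value(k, trials) <= alpha:
            return k
    return trials + 1


print(min_correct_for_significance(16), abx_p_value(12, 16))
```

With 16 trials, a tester needs at least 12 correct answers (p ≈ 0.038) before guessing becomes an unlikely explanation; with 10 trials the bar is 9, and with only 4 trials no score is enough.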

More importantly, who will be conducting these tests? Where is the funding coming from? And how in the world are we going to get a sample size large enough for a strong confidence interval?
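On the sample-size question, the textbook normal-approximation formula for estimating a proportion (for instance, the share of testers who notice a given amount of stutter) is n = z² · p(1 − p) / E², where E is the desired margin of error. This sketch assumes a simple random sample and uses the worst case p = 0.5; it says nothing about the recruiting and funding problems raised above.

```python
import math
from statistics import NormalDist


def sample_size_for_proportion(margin: float, confidence: float = 0.95, p: float = 0.5) -> int:
    """Testers needed so a proportion estimate has the given +/- margin (normal approximation)."""
    # Two-sided critical value, e.g. about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)


print(sample_size_for_proportion(0.05), sample_size_for_proportion(0.10))
```

At 95% confidence, a ±5 percentage-point margin needs 385 testers, while a ±10-point margin needs 97.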

There are all kinds of logistical issues with blind testing for microstutter. I think the idea can be ruled out. What we've got is good enough, and the objective testing methods to come are even better. I don't need to suggest an alternative.

I never said it was problem-free. But you are the one missing the point here. It is imperative that we evaluate whether these observed variations in frame rate are humanly observable. Otherwise we are just chasing high performance for high performance's sake. I mean, if all that mattered were Fraps numbers, I could just as well unplug my screen, since what I see would not be important. Again, BrightCandle and I did our homework, and it seems like we know how the frame times translate into subjective performance. I suggest you do the same if you do not want to trust blind tests.

I already have a good idea of what my tolerances are with frame latency spikes. So the plots actually do have meaning to me. I'm extremely sensitive not only to frame rate variation but to refresh rates in general. LEDs, for example, can drive me nuts when their refresh rate is too low.

Great! We are finally getting somewhere. Could you please upload the frame times and post the links? I would like to see how they compare to what I consider good/bad.

I really wish I could, but I don't game right now. Gaming + school has not worked well for me in the past... and now it seems like internet browsing/forum trolling isn't working too well for my scholastic success either.

In addition, the graphics card I'm using right now is a 6450. My 6950 is out of commission, and I haven't had the motivation to send it in for a replacement. Plus, I have to do a bit of "doctoring" to the card before I send it in.

If this were last year, I'd have happily obliged. I do plan on putting gaming back on the plate eventually, so perhaps I can toss in a contribution later. If I don't get banned, that is.


> I really wish I could, but I don't game right now. Gaming + school has not worked well for me in the past... and now it seems like internet browsing/forum trolling isn't working too well for my scholastic success either.

Well, I only started benching "seriously" because I have my baby in my lap and cannot game!

> If I don't get banned, that is.

You're a good guy, just stay cool and you'll be fine.

I'm a total douchecanoe on these boards. I don't know how many infractions I have left before they send me packing, but it isn't many.

Anyways, I'd love to contribute. I'll see what I can do, although my collection of 3D games is very limited right now.


VulgarDisplay (Diamond Member, joined Apr 3, 2009)

You are saying the same thing AMD has been saying ever since Intel slaughtered them.

Hey folks, don't look at meaningless benchmarks.

Look at those average gamers! Let's blind test them and use their observations instead of numbers.

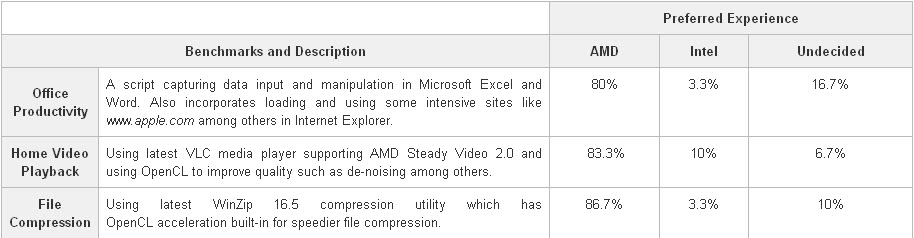

http://amdfx.blogspot.com/2012/04/mobile-trinity-blind-test-amd-clear.html

http://legitreviews.com/article/1838/1/

Frankly, I think that's a bunch of stuff, and PR at its worst: doing damage control instead of fixing things.

Numbers are meaningless only if you pull them out of your ass, which is hardly the case here.

Frame times coupled with frame time variations are what define your gameplay experience.

They do so in an infinitely more objective way than observations (or the lack thereof) by Joe, Mary and d3L74#w4rri0R.

And arguably in a much more complete way than FPS alone.

Average FPS (average latency) numbers, produced as a "computer output graph", have been fine for ages.

So why, all of a sudden, are these new numbers (FPS, FPS variations, latency spikes > 50 ms) somehow less worthy and more suspicious, and why should they be confirmed by "blind testers"?

You never asked for blind-test confirmation of FPS numbers, or did you?
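To show why average FPS alone can hide what frame-time numbers reveal, here is a minimal sketch with two made-up 100-frame runs. Both take 2 seconds and therefore average 50 FPS, but one contains ten 65 ms spikes. The percentile helper uses the nearest-rank definition, and `time_beyond` sums only the part of each frame above 50 ms; that is our assumed definition of a "time spent beyond 50 ms" style metric, not necessarily any review site's exact formula.

```python
import math


def average_fps(frame_times_ms):
    # Average FPS = frames rendered / total time = 1000 / mean frame time (ms).
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def percentile_frame_time(frame_times_ms, pct=99):
    # Nearest-rank percentile: pct% of frames finish in this time or less.
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def time_beyond(frame_times_ms, threshold_ms=50):
    # Assumed definition: add up only the portion of each frame time above the threshold.
    return sum(t - threshold_ms for t in frame_times_ms if t > threshold_ms)


# Two made-up runs, both 2000 ms long, so both average 50 FPS.
steady = [20] * 100
spiky = [15] * 90 + [65] * 10

for name, run in (("steady", steady), ("spiky", spiky)):
    print(name, average_fps(run), percentile_frame_time(run), time_beyond(run))
```

The averages are identical, but the 99th-percentile frame time (20 ms vs 65 ms) and the time past 50 ms (0 ms vs 150 ms) separate the two runs.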

The point is that no one noticed frame latency when it was worse on Fermi than it is on Tahiti. The first thing reviewers need to do is blind testing to find out at what point frame latency is perceptible to average hardcore PC gamers. Once they can ascertain what that threshold is, they can start to reach meaningful conclusions.

What I said will stand until reviewers provide a parameter for perceivable stuttering.

What is really needed is a way to increase and decrease frame latency on demand, have someone watch a scene and increase the latencies until the observer sees the problem. Do that with a hundred or so people to get a good average and then start drawing conclusions. That would be a sound way to make frame time graphs meaningful.
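The procedure described above is essentially an ascending "method of limits" from psychophysics. Below is a minimal simulation, assuming each observer can be modeled as a function that reports whether a spike of a given size (in ms) is visible; the step size, cap and observer thresholds are hypothetical. Real studies usually alternate ascending and descending runs (or use adaptive staircases) to reduce anticipation and habituation errors.

```python
from statistics import mean
from typing import Callable, Iterable, Optional

# Hypothetical observer model: returns True if a frame-time spike of the
# given size (ms) is reported as visible stutter.
Observer = Callable[[float], bool]


def ascending_threshold(observer: Observer, start_ms: float = 0.0,
                        step_ms: float = 2.0, max_ms: float = 200.0) -> Optional[float]:
    """Raise the spike size step by step until the observer first reports it."""
    spike = start_ms
    while spike <= max_ms:
        if observer(spike):
            return spike
        spike += step_ms
    return None  # never noticed within the tested range


def group_threshold(observers: Iterable[Observer], **kwargs) -> Optional[float]:
    """Average the individual thresholds, skipping observers who never noticed anything."""
    found = [t for t in (ascending_threshold(o, **kwargs) for o in observers) if t is not None]
    return mean(found) if found else None


# Three simulated observers with hidden thresholds of 30, 25 and 41 ms.
observers = [lambda s, t=t: s >= t for t in (30, 25, 41)]
print(group_threshold(observers))
```

The step size limits resolution: with 2 ms steps, an observer whose true threshold is 25 ms is recorded at 26 ms, so the group average here comes out slightly above the true mean.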


What is measurable and perceivable can indeed be 2 different things.


VulgarDisplay (Diamond Member, joined Apr 3, 2009)

What is measurable and perceivable are indeed 2 different things.

That is what so many people are glossing over in this debate. Until reviewers use actual scientific methods to prove without a doubt when their control group starts to see frame latency problems, this method of benchmarking GPUs is meaningless.

Now cue people refuting what I have said based on their brand loyalty bias with nothing to back their arguments.

MrK6 (Diamond Member, joined Aug 9, 2004)

> Neither does getting super defensive. You're even worse.

If you can't handle other people's opinions, you should unplug your ethernet cable.

> What is measurable and perceivable can indeed be 2 different things.

Exactly.

> The point is that no one noticed frame latency when it was worse on Fermi than it is on Tahiti.

Worse frame times on 40 nm chips built on a 2010 architecture than on a 28 nm 2012 architecture?

Newer card being faster is not exactly newsworthy.

Then again, Fermi did not exhibit wild local frame-time fluctuations like the 7950 does.

So what's there to notice/post about?

And if you are going to spin this again into "Fermi was worse than Evergreen/Northern Islands", please don't.

I already looked at TR, and no, there is no pattern supporting such a claim.

> The first thing reviewers need to do is blind testing to find out at what point frame latency is perceptible to average hardcore PC gamers. Once they can ascertain what that threshold is, they can start to reach meaningful conclusions.

I am certainly interested in anything gaming related, so that includes blind-testing.

But that does not answer my question to you:

Why the sudden need for blind testing?

Now that we have FPS, FPS graphs, FPS variations, FPS spike counts, percentile graphs, etc., it's only now that we need more blind testing?

But when we had avg FPS alone, anyone not considering it the be-all and end-all of gaming was a fanboi, a sheep and whatnot.

I have linked above some hmm... interesting blind-test results.

And I am curious about new ones for all kinds of reasons. So please link blind-tests here or in another thread.

In the meantime, it's not like we need blind testing to realize that more FPS with steady frame-time output, i.e. small FPS variations, translates directly into more responsive and smoother gameplay.
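The "small FPS variations" point can be illustrated with two hypothetical frame-time traces that share the same mean (20 ms, i.e. 50 FPS): one perfectly steady, one alternating 10 ms and 30 ms frames, the short/long pattern typically associated with multi-GPU micro-stutter. Population standard deviation and the mean change between consecutive frames are simply two variation measures picked for this sketch.

```python
from statistics import mean, pstdev


def frame_time_jitter(frame_times_ms):
    """Mean absolute change between consecutive frame times (ms)."""
    deltas = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    return mean(deltas) if deltas else 0.0


def summarize(frame_times_ms):
    return {
        "avg_fps": 1000 / mean(frame_times_ms),
        "stdev_ms": pstdev(frame_times_ms),
        "jitter_ms": frame_time_jitter(frame_times_ms),
    }


steady = [20] * 8
alternating = [10, 30] * 4

print(summarize(steady))
print(summarize(alternating))
```

Both traces report 50 FPS, but the alternating one has a 10 ms standard deviation and a 20 ms swing between every pair of frames, which an average alone cannot show.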

Blind-testing FPS threshold, variation threshold, and determining human perception limits is certainly an interesting topic.

But gameplay-meaningful, rational quantification of gfx cards is already here.

And it's only going to get better. LOOKING AT YOU PCPER

> That is what so many people are glossing over in this debate.
>
> Until reviewers use actual scientific methods to prove without a doubt when their control group starts to see frame latency problems, this method of benchmarking GPUs is meaningless.

Only this method needs "to prove without a doubt..."?

Ewww... I thought you were serious.

And that you are holding avg. FPS to the same standard.

But only perception threshold on latencies need to be proved?

And by the look of it, you want the Stanford Uni Math/Physics Department to lead the effort.

scientific methods to prove without a doubt when their control group starts to see frame latency problems

else

this method of benchmarking GPUs is meaningless.

You still don't realize that all TR, AB and soon PCPER are doing is quantifying gfx cards, albeit more thoroughly than by using avg. FPS alone :\

VulgarDisplay (Diamond Member, joined Apr 3, 2009)

I honestly have no idea what anything you just posted is about.

The need to actually find a measurable number for when frame latencies produce a perceivably worse viewing experience isn't to try and prove AMD doesn't have a frame latency problem, but to give both companies an actual specification that their cards need to meet at all times.

If a simulation is made that actually allows a tester to increase frame latencies on demand, the findings could be surprising. It could turn out that the numbers we see for both manufacturers are perfectly fine, or we could find that even Nvidia, which is currently better in this department, also needs to improve for the best possible experience. Why would anyone not want this type of research?

The only reason to not want it is to try and paint AMD in a negative light.

I'm not saying what TR and PCPer are testing isn't a great benchmark. It is far better than FPS benchmarks. The problem is that they have yet to provide the most important part of their new testing methodology: the frame latency number needed for the best possible experience. Find that out and then they will truly have changed the benchmark game. To me the graphs have little meaning until they do find the magic number.

> Why would anyone not want this type of research?
>
> The only reason to not want it is to try and paint AMD in a negative light.

Me, I'm all for research. Huge Stanford fan!

> To me the graphs have little meaning until they do find the magic number.

Yeah, I've gathered that already.

Anything other than avg FPS... needs more research :whiste:

Lonbjerg (Diamond Member, joined Dec 6, 2009)

> I'm glad to see investigations, reviews and discussions that go beyond just frame rate.

Not everyone is, it seems...

VulgarDisplay (Diamond Member, joined Apr 3, 2009)

> Me, I'm all for research. Huge Stanford fan!
>
> Yeah, I've gathered that already.
>
> Anything other than avg FPS... needs more research :whiste:

You could try reading what I'm posting instead of getting 1/4 of the way through and responding.

I clearly stated that these frame time benchmarks are far better than FPS benchmarks.

They just need a little more work before they are ready to be the be-all and end-all of benchmarks. Traditional FPS benchmarks will never go away, though, because they are still important in conjunction with these frame-time graphs.

Lonbjerg (Diamond Member, joined Dec 6, 2009)

> You could try reading what I'm posting instead of getting 1/4 of the way through and responding.
>
> I clearly stated that these frame time benchmarks are far better than FPS benchmarks.
>
> They just need a little more work before they are ready to be the be-all and end-all of benchmarks. Traditional FPS benchmarks will never go away, though, because they are still important in conjunction with these frame-time graphs.

This new way of looking at frames is making pure FPS graphs obsolete, though... like it or not.

Min, avg and max just don't cut it anymore.

VulgarDisplay (Diamond Member, joined Apr 3, 2009)

> This new way of looking at frames is making pure FPS graphs obsolete, though... like it or not.
>
> Min, avg and max just don't cut it anymore.

They won't cut it, but they are still important. A card could have great frame latency and only get a 20 fps average.

A combination of both benchmarks will be best.


AnandTech is part of Future plc, an international media group and leading digital publisher.

© Future Publishing Limited Quay House, The Ambury, Bath BA1 1UA. All rights reserved. England and Wales company registration number 2008885.