SirPauly

AMD working on a fix is going to make an already excellent GPU better.

Indeed!

Here you had the chance to show the way, for example by showing the numbers and graphs that correspond to what you perceive as annoying, but instead you add just one more personal anecdote to the collection...

People who are interested in this research are getting annoyed by AMD fanboys who come into the thread saying "I personally can't see an issue on my PC therefore there can't possibly be an issue".

What they perceive as an individual is totally irrelevant. If someone did a scientific survey, that would be interesting; personal anecdotes are not.

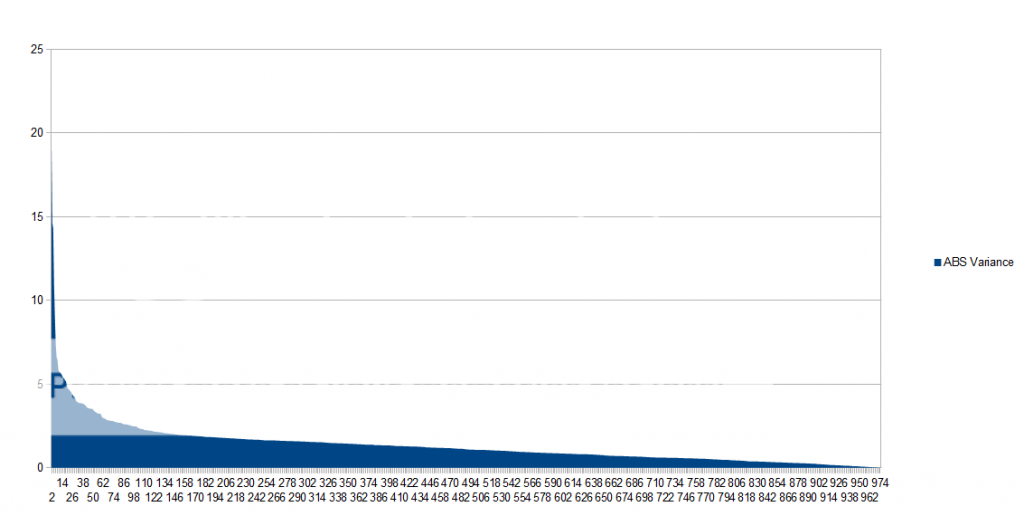

These are the frame times I obtain for the scene I reproduced as closely as possible from TR's microstuttering video. The frame times are Gaussian distributed, as you would expect from microstuttering, with a mean of 16.68 ± 0.79 ms. Put differently, the microstuttering amounts to 4.7% of the mean value. That contradicts TR's report, so I would very much like to know how they got such bad results. If you do not believe me when I say that I cannot see the microstuttering, maybe these graphs and numbers will convince you? Or do you still think this is irrelevant?
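The 4.7% figure is just the spread divided by the mean. A minimal sketch of that arithmetic, assuming the ± value is a standard deviation; the function name and the `sample` list are made up for illustration and are not the measured data:

```python
import statistics

def frame_time_summary(frame_times_ms):
    """Return (mean, sample standard deviation, spread as % of mean)."""
    mean = statistics.mean(frame_times_ms)
    spread = statistics.stdev(frame_times_ms)
    return mean, spread, 100.0 * spread / mean

# Figures quoted in the post: 16.68 ms mean, 0.79 ms spread.
relative_spread = 100.0 * 0.79 / 16.68
print(f"{relative_spread:.1f}%")  # 4.7%

# Hypothetical frame times, only to show the function in use.
sample = [16.0, 17.0, 16.0, 17.0]
mean, spread, percent = frame_time_summary(sample)
print(f"{mean:.2f} ms, spread {spread:.2f} ms ({percent:.1f}% of mean)")
```

Anyone with their own list of frame times can run it through `frame_time_summary` and compare against the numbers above.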

Hmm, I didn't add any anecdote?

As TR showed, frame latencies differed hugely from area to area. In the area where the 7950 performed poorly even the 660 Ti showed frames over 50 ms, so I'm guessing you haven't managed to replicate the conditions.

Now hopefully this suggests that the stuttering is relatively rare. Hopefully we will start to see more sites examining this issue. The more evidence that is built up from a wide range of reputable sources the better.

...what in the world does this have to do with you?

I certainly will if you post a constructive reply to my previous posts. Your last post was neither constructive nor on topic, but I believe that you can do better.

My post was actually constructive. It highlighted the hypocritical change in agenda of AMD apologists that occurred with the release of the HD 7000 series. The fact that Nvidia "zombies" are all riled up is unsurprising.

From the graphs it seems like the faster you complete an individual frame the worse the stutter is.

What is disappointing in this is that problems were reported to AMD in January 2012. It's taken them a year and this review to finally acknowledge the problem. It'll take longer to fix it. That is shameful customer service.

BrightCandle, that was the single most useful post in this thread! I was going nuts over why I could almost reproduce their indoors results, but not this wilderness result. I think you just found out why! In the later tests they did not mention the resolution so I missed it!

Your signature suggests you're playing this at 1080p, not 2560x1440. Is that the case?

If that is the case then that would explain why you see less microstutter than they do: your card simply isn't being pushed anywhere near as hard. One point the TR guys make in their recent podcast is that they are only getting visible problems when the card is actually pushed reasonably hard, and then only in the latest crop of games they tested. The old games they had didn't show the problem but the new ones do. They also said dropping the settings can reduce the problem.

So if it's the case that you're running only 56% of the pixels, then it's no surprise the results are different. It's also consistent with what they found.
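For reference, the 56% figure can be checked with a couple of lines. This is a sketch that assumes "1080p" means 1920x1080 and that the higher resolution is 2560x1440; the function name is made up for illustration:

```python
def pixel_ratio(small, large):
    """Fraction of pixels rendered at `small` relative to `large` (width, height)."""
    return (small[0] * small[1]) / (large[0] * large[1])

ratio = pixel_ratio((1920, 1080), (2560, 1440))
print(f"{ratio:.2%}")  # 56.25%
```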

Sorry that was not you. Mea culpa.

Hmm, I didn't add any anecdote?

What that graph shows is one frame being rendered faster and that makes the next frame slower in reference to the faster one. If the prior frame was rendered at the same interval as the others then the next frame would not appear so slow.

For example, the first "faster" frame has a latency of ~5ms. The next frame is ~24ms. The mean diff between the 2 would be ~14.5ms which is just about exactly where all of the other frames lie.
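A quick sketch of that averaging, using the ~5 ms and ~24 ms values from the example. The other frame times in `observed` are hypothetical and only pad the sequence out; the point is that evening out the pair leaves the total time, and so the average frame rate, unchanged:

```python
def evened_out(frame_times_ms, pair_start):
    """Replace the two frames at pair_start and pair_start + 1 by their mean."""
    fast, slow = frame_times_ms[pair_start], frame_times_ms[pair_start + 1]
    mean = (fast + slow) / 2.0
    return frame_times_ms[:pair_start] + [mean, mean] + frame_times_ms[pair_start + 2:]

# Hypothetical trace: steady 14.5 ms frames with one fast/slow pair in it.
observed = [14.5, 14.5, 5.0, 24.0, 14.5]
paced = evened_out(observed, 2)

print(paced)                        # [14.5, 14.5, 14.5, 14.5, 14.5]
print(sum(observed) == sum(paced))  # True
```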

Sure! Hitman, no FXAA.

Can you share the FRAPS frame times CSV? As I say, I am very keen on collecting more and more data on this so we can start to get a picture across a broad range of people and what they can and cannot see stuttering in.

In another thread I showed the micro structure for a couple of cases. A slow frame is always paired with a fast frame, but the interesting thing is that it is periodic: sometimes you have four frames at 16 ms followed by the microstuttering frame pair, and the sequence repeats itself, while other times you have six frames at 16 ms spacing between the slow+fast pairs. Their occurrence is not random, but the magnitude of these frame times is Gaussian.

The graph you quoted suggests that the issue can likely be resolved without a decrease in overall framerate.

That is, if it is representative of the overall issue.
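The periodic slow+fast pairs described above can be picked out of a frame-time list with a few lines. This is only a sketch: the 4 ms tolerance, the function names, and the `sample` trace are assumptions made up for illustration, not measured data:

```python
import statistics

def find_stutter_pairs(frame_times_ms, tolerance_ms=4.0):
    """Return start indices of adjacent frames that sit on opposite sides
    of the median, each by more than tolerance_ms (a slow+fast pair)."""
    median = statistics.median(frame_times_ms)
    pairs = []
    i = 0
    while i < len(frame_times_ms) - 1:
        da = frame_times_ms[i] - median
        db = frame_times_ms[i + 1] - median
        if abs(da) > tolerance_ms and abs(db) > tolerance_ms and da * db < 0:
            pairs.append(i)
            i += 2  # skip past the pair
        else:
            i += 1
    return pairs

def normal_frames_between(pairs):
    """Number of ordinary frames between consecutive pairs."""
    return [b - a - 2 for a, b in zip(pairs, pairs[1:])]

# Hypothetical trace: four 16 ms frames, then a slow+fast pair, repeating.
sample = [16, 16, 16, 16, 26, 6, 16, 16, 16, 16, 26, 6, 16, 16, 16, 16]
pairs = find_stutter_pairs(sample)
print(pairs)                         # [4, 10]
print(normal_frames_between(pairs))  # [4]
```

If the gaps come out as a constant 4 or 6, the stutter is periodic rather than random.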

It will be fascinating once PCPer have some results to publish with their more advanced testing.

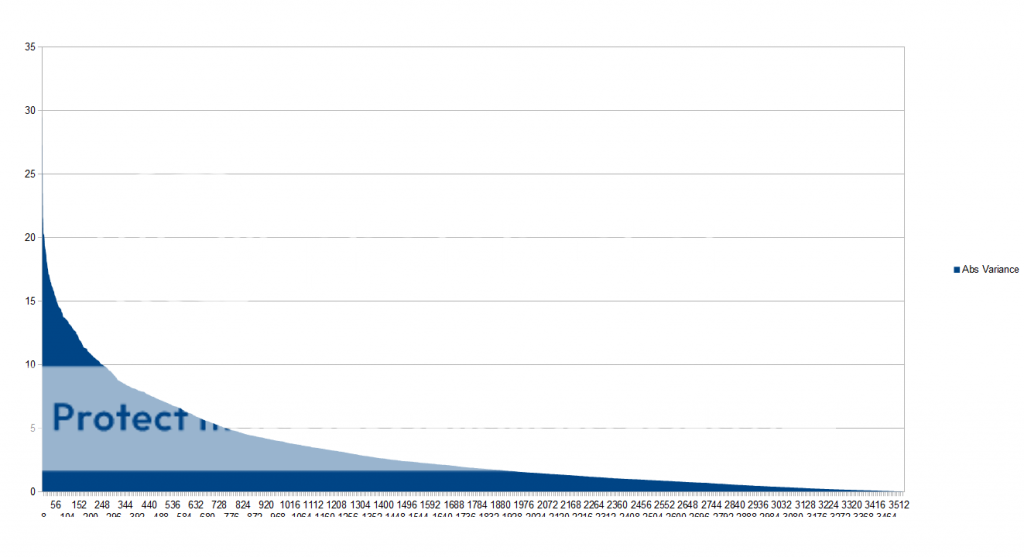

Glad to be of assistance! Like I said, that Hitman file has noticeable microstutter, but it is at the level that I do not feel bothered by it. If I keep FXAA on however, it becomes a stuttering mess (and blurry like a Renaissance painting).

That is a beautiful trace. It shows a lot of microstutter and stuttering without the frames per second wildly changing.

Well, in theory yes. But in practice the fine sequence is more like:

| Time of frame (ms) | 0 | 16 | 32 | 48 | 64 |
| --- | --- | --- | --- | --- | --- |
| Pattern (ms) | 8 | -8 | 8 | -8 | 8 |
| Frame time (ms) | 8 | 8 | 40 | 40 | 72 |

So even an 8 ms offset is highly destructive to smooth gameplay: it halves the effective frame rate.
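To spell out the arithmetic behind that sequence, here is a small sketch. It uses the same numbers as above and assumes the "pattern" row is an offset added to each nominal frame time; the variable names are made up for illustration:

```python
nominal_ms = [0, 16, 32, 48, 64]   # evenly spaced frames, 16 ms apart
offsets_ms = [8, -8, 8, -8, 8]     # alternating +/- 8 ms pattern

# Each frame lands at its nominal time plus the offset.
actual_ms = [t + o for t, o in zip(nominal_ms, offsets_ms)]
print(actual_ms)  # [8, 8, 40, 40, 72]

# Frames arrive two at a time, so only every other one is a new instant.
distinct_ms = sorted(set(actual_ms))
intervals_ms = [b - a for a, b in zip(distinct_ms, distinct_ms[1:])]
print(intervals_ms)  # [32, 32]

print(1000 / 16, 1000 / intervals_ms[0])  # 62.5 31.25
```

So a nominal 62.5 fps stream is perceived at roughly 31 fps, which is the halving mentioned above.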

Nah, we had examples of good frame times that still give a stuttering impression. I think the cause of that is different from what we are discussing here, which is the reason why FRAPS is blind to it. Frame times are useful but do not tell the full story, I am afraid.

I really want to see their analysis. I think it's going to move the state of the art on in a big way, but I also fear it won't actually give us anything we don't have already; it will simply validate frame times as a valid approach for determining the offset of age of frames delivered to the GPU.

What do you make of this one? This is the outdoor scene from Skyrim I posted the first graph for. On this one I cannot visually detect any microstuttering.