Yeah, the dice will come off the same wafers. So if AMD has limited orders of N7+ (which inevitably they will; they can't order infinite wafers) and Milan demand ramps up, then anything that bins well enough for in-demand SKUs will go there first. If Milan sells too well or has too much pent-up demand before release, Vermeer supplies will suffer. By how much, we don't know.
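A toy sketch of that priority logic makes the knock-on effect concrete. Everything in it is hypothetical: the `Die` fields, the `allocate` helper, the power cutoff and the die numbers are made up for illustration, and real binning weighs far more than a single power figure. The only point is that with a fixed batch of dies, every extra die Milan absorbs is one fewer die for Vermeer.

```python
from dataclasses import dataclass


@dataclass
class Die:
    max_ghz: float  # highest stable clock found during binning (hypothetical field)
    watts: float    # power draw at a fixed reference clock (hypothetical field)


def allocate(dies, server_demand, server_max_watts):
    """Fill server (Milan) orders first, then send the remainder to desktop (Vermeer).

    Hypothetical rule: a die qualifies for a server SKU if its power draw is at
    or below server_max_watts, and the most efficient dies are taken first.
    """
    server, desktop = [], []
    # Walk the batch from most to least power-efficient
    for die in sorted(dies, key=lambda d: d.watts):
        if len(server) < server_demand and die.watts <= server_max_watts:
            server.append(die)
        else:
            desktop.append(die)
    return server, desktop


# A fixed batch of dies from one (hypothetical) wafer allotment
batch = [Die(4.8, 9.5), Die(4.6, 8.0), Die(4.9, 11.0),
         Die(4.5, 7.5), Die(4.7, 10.0), Die(4.4, 12.5)]

for demand in (2, 4):
    server, desktop = allocate(batch, demand, server_max_watts=10.0)
    print(f"server demand {demand}: {len(server)} to Milan, {len(desktop)} to Vermeer")
```

With a server demand of 2, four dies are left for desktop; at a demand of 4, only two are. The fastest-clocking die in the batch still lands on the desktop side here, because it draws too much power for the hypothetical server cutoff.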

This was me pointing out the absurdity of your post. It has always been that way. The best chiplets, the ones that clock well without being power-hungry, go to Milan/Epyc. You don't need the high clocks for Epyc either. You even pointed out yourself that they could have been taped out months ago and that AMD is milking Zen 2. One day you say that, the next day you say they'll be capacity-constrained, and then you go back to your original point.

Packaged and set for retail. And that's mostly based on early UEFI revisions, and on how many of them popped up in OEM repos once X570 launched. It turned out AMD had all manner of interesting UEFI revisions and AGESA versions kicking around before July 7th, 2019, including some that had to be crippled to mess with leakers.

That date range only applies to people who went deep-diving into revisions. You keep mixing up your reasoning here. AMD was never capacity-constrained last July. They admitted they had heavily underestimated demand for the 3900X. The 3900X was never a paper launch, and neither was the 3950X.

IC: From our audience, one of the most common questions I need to put to you is about TSMC’s capacity. Can you shed some light into how this might affect AMD?

MP: TSMC is a key partner for us, and they were with us at our second generation EPYC launch. I think that really helped people to understand the scale that TSMC has. The most rapid launch in the history of TSMC was its 7nm process node, which has had an asymptotic volume ramp and that was well ahead of our launch of Rome and EPYC. So we’re getting a great partnership with TSMC, as well as great supply. We did have some shortfalls on chips when we first launched our highest performing Ryzens, and that was simply demand outstripping what we had expected and what we had planned for. That wasn’t a TSMC issue at all.

AMD could "make" 10M chips a month and would likely still be short on supply. The global pandemic only sent a portion of the world home to work; it wasn't everyone. Going forward, until a vaccine is proven reasonably safe and effective, expect shortages as demand far outstrips production capacity. Just ask Intel, which has been supply-constrained for three years.

Others have already addressed this point, but if AMD really wants 5nm for hypothetical Warhol, they could probably get it done if their launch date is late H1 2021. It would certainly be easier for them to stick with N7+.

What does that leave for AMD, then? Launch the new tech stack and 5nm on Warhol without any performance improvements, and bring the performance with Raphael? If you want to talk about constraints, Warhol is where you'd hit them. No one knows what Apple's sales figures will look like as they launch more 5nm products. Their first Apple Silicon computers may very well be amazing and cause sales to double or triple. This is purely hypothetical. Apple's supply chain and the JIT mantra Tim Cook has nurtured over decades will help, up to a point. You might have had a point if GPUs came later and were monolithic dies. Except most rumors point to RDNA3 and Nvidia's Hopper being chiplet-based. A large monolithic die is the more expensive of the two to produce at scale, because the bigger the die, the more likely a defect lands on it and scraps the whole chip.
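To put rough numbers on the die-size point, here is a sketch using the simple Poisson yield model, Y = exp(-A * D0), where A is die area and D0 is defect density. The model choice, the defect density, and the 600 mm² versus 8 × 75 mm² configurations are all assumptions for illustration, not figures for any real AMD or Nvidia part. Fabs use more detailed yield models, and chiplets add packaging and interconnect costs that this ignores.

```python
import math


def poisson_yield(area_mm2: float, defects_per_mm2: float) -> float:
    """Fraction of defect-free dies under the simple Poisson model Y = exp(-A * D0)."""
    return math.exp(-area_mm2 * defects_per_mm2)


def wafer_area_per_good_product(die_area_mm2, dies_per_product, defects_per_mm2):
    """Wafer area consumed per working product, ignoring edge loss and packaging yield.

    Dies are assumed to be tested before packaging, so a defect scraps only
    the die it lands on.
    """
    y = poisson_yield(die_area_mm2, defects_per_mm2)
    return dies_per_product * die_area_mm2 / y


D0 = 0.001  # hypothetical defect density: 0.1 defects per cm^2

mono = wafer_area_per_good_product(600.0, 1, D0)     # one hypothetical 600 mm^2 monolithic die
chiplet = wafer_area_per_good_product(75.0, 8, D0)   # eight hypothetical 75 mm^2 chiplets

print(f"monolithic: yield {poisson_yield(600.0, D0):.1%}, {mono:.0f} mm^2 of wafer per good part")
print(f"chiplets:   yield {poisson_yield(75.0, D0):.1%}, {chiplet:.0f} mm^2 of wafer per good part")
```

With these made-up inputs, the monolithic part needs roughly 1,090 mm² of wafer per working chip versus roughly 650 mm² for the chiplet build, even though both contain the same 600 mm² of silicon.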

Matisse had them, and Summit Ridge also had them. With Matisse, it was mostly AGESA-related.

Matisse's were BIOS issues. I'm not familiar with first-gen Ryzen. Are you referring to the segfaults that affected mainstream Ryzen, or the mystery bugs that plagued first-gen Threadripper? Or how Windows addressed all of it?

They are, but at the same time, we're kind of stuck. Micron and Hynix made significant advancements with E-die and CJR respectively, but Samsung isn't pushing A-die or M-die into the DIY market. Samsung B-die is still the cream of the crop when it comes to enthusiast performance, and it has been for a very long time. We should be seeing enthusiast A-die by now (at improved density, no less), but we aren't. I suspect everyone is waiting for DDR5 to push performance memory products.

I'm not familiar with A-die or M-die, though I don't go snorkeling in memory chat like some, such as yourself. The fact is, early DDR5 may be far too expensive for anyone without deep pockets, while DDR4 prices are beginning to slink downward. For a 16 GB DDR5 kit (assuming that capacity carries over; I'm not fully informed on it), you may see prices of $200-300 at low speeds, depending on supply and demand.

I just looked up DDR4 prices and am blown away. Just two months ago, a good 16GB DDR4-3200 kit would set you back quite a bit. It's around $70 now. The good Crucial sticks dropped 30-60 bucks.

Of course, knowing my damn luck, they'll pop back up in price the day before I order my parts.

Core2 launched in 2006. 2005 was the critical period where AMD could have/should have responded by moving past K8. Ruiz infamously dismissed the threat, choosing instead to believe that Intel would stay with Netburst.

Typical design-to-product time for a new processor design is around 3-5 years. To respond in time for Core2, AMD would have had to begin somewhere around 2001-2003. Had they begun in 2005, they wouldn't have seen the results of their efforts for another few years, up to 2010. If you want to give Keller all the credit in the following example: he joined AMD in 2012 and left in 2015. Ryzen was announced toward the end of that stretch, IIRC, and there was a demo of the Zen microarchitecture at Hot Chips in 2016. Ryzen hard launched in March 2017. Ruiz was and still is a moron, though you're laying a bit too much of the blame on him here. AMD reacting in 2005 would already have been late. Anyone paying attention to Intel would have known about Yonah and what it would bring. It was marketed in early 2005 and launched the following year, in January 2006. Ruiz's complacency and bad decisions drove AMD down.

Why not? The current management team isn't the same as in 2017, at least in terms of behavior. Same people, different actions. I think AMD is getting complacent. They might have much better reason to be so this time around, since Intel is really struggling on the tech front. But I would rather they were a bit more paranoid, à la Andy Grove. AMD also really needs to keep punishing Intel with crushing benchmark wins in the critical areas where Intel makes its money. Intel is making bank off bad tech. Rome hasn't moved the bar far enough. The sooner they can iterate through Milan to Genoa, the better. AMD has to chase that market share and mindshare. In the end, what we all need is for them to overtake Intel and then be a fundamentally better, more consumer-friendly company once they are sitting on top.

As a consumer, I don't think it's unreasonable to expect AMD to remain a technology-focused company that caters to the growing demands of users while making a healthy profit in the process.

That's strange wording. You'd be better off saying "The behavior of AMD's management team today is a stark contrast to that of 2017."

Semantics aside, why do you think AMD is being complacent? How does AMD benefit from being complacent when Intel is still a threat? Right now, the estimate is that Intel won't be back on its feet until, say, 2023-2025. That doesn't mean they can't have a breakthrough. AMD already crushes Intel in benchmarks and power use in the areas where Intel makes its money. They fall short in gaming due to core-to-core latency. CAD/CAM, Photoshop, Premiere, etc. favor frequency because they aren't heavily multithreaded. Intel's iGPU also helps with certain tasks like video encoding via Quick Sync. It'll take a very, very, very, very, very long time for AMD to claw back its prior highs in the datacenter. Hardware cycles are longer nowadays, and datacenters may keep buying Intel because of their existing setups and reluctance to validate AMD. Intel is practically giving away high-core-count Xeons, if Wendell is to be believed. Epyc is amazing, but it's also a new platform that has yet to prove itself year after year. Milan should establish even more street cred, and Genoa should sell a lot. The next 2-3 years should see AMD increase its datacenter share by a factor of 2-2.5x. It really depends on how much power they can extract. Genoa may see a core count increase; I've had the number 96 suggested in a few industry calls. I'm not quite sure how they'd do that without increasing overall package size unless layering (die stacking) comes into the mix. Possibilities are "endless."

AMD overtaking Intel may take a decade. You'd really need Intel to mess up not only in DC, but in client and everything else they offer. They still have high revenue. Though with bean counters guiding the company, that day may come sooner. I'll put it in simple English.

If Zen 3's 4600X/5600X can deliver the performance of a really good 8-core, that's good. If it can match a theoretical 10-core or 12-core Zen 2 processor, that's amazing. Here's a simple breakdown of scores in popular mainstream benchmarks to give a rough idea.

Benchmark, test, review, comparison and differences between these CPUs in Cinebench R23 and Geekbench 5 (www.cpu-monkey.com)

Ignore the single-core scores. The single-core gap will likely be much larger than what's shown, and a mere 1.8-2x increase in multicore wouldn't be accurate either, as I believe Zen 3 will outstrip that.

Learn to read. I never said they would meet their demise. It's just that they are getting complacent, and soon that will lead to them being greedy. And if someone doesn't challenge them, they'll become the next Intel: rehash products, raise prices, squeeze consumers, etc. We need AMD to stay on a competitive footing at least so they'll keep their focus on continued advancements of technology irrespective of whether another company can seriously compete with them. That's what the semiconductor game has been about in the past, and that's what it should be about in the future until the laws of physics finally say "no". And we aren't there yet.

I can read. Learn to be real. If you think AMD is being complacent because they're taking a few months longer, which their cadence supports, and because of the XT lineup, then I can't help you. AMD is in zero position to be complacent. Their financials don't allow them to sit around and do little, especially with their competitor several times stronger than it was nearly 20 years ago. AMD doesn't have Intel's mindshare, and they probably won't for a long time. If you want to talk about greed, then releasing Warhol in 2021 with a new tech stack but zilch in performance, with Raphael around the corner, at the same launch prices as Zen 3, is greed. Yay, AM5. Yay, new tech stack. Boo, no 5nm. Gotta buy Raphael for those sweet performance gains and 5nm! At increased prices again.

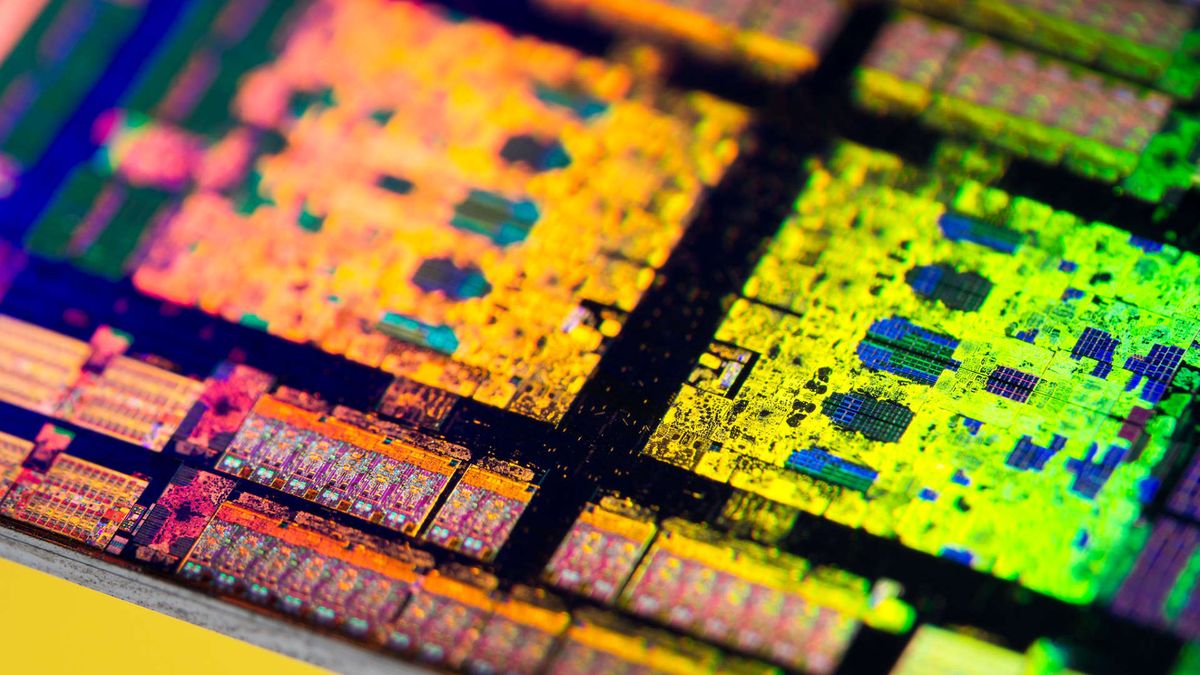

Yes, but TSMC has been known to produce multiple iterations of nodes at the same general feature size. For example:

Previously, someone argued this was moot if they're made on the same lines. TSMC is confirmed to be expanding. Someone posted something online yesterday stating that TSMC is looking at building two or three fabs, not counting one being built in central Taiwan. Samsung's having issues with its nodes, but they'll eventually figure it out... hopefully. I say that because it's healthier to have these two foundries compete with one another, as it benefits end customers and the companies we buy products from. TSMC was also looking into getting into the memory business a while back. I can see a world with TSMC, Micron (Crucial), Samsung, and SK Hynix being the top four players in memory production.

That gives them N5 and N5P? At least? So Raphael could be N5P while Warhol could be N5.

If it's a new tech stack on top of a new node without significant performance gains, it needs to be priced at half of what Vermeer launches at. A new tech stack and node do nothing for the consumer. No real USB4 products on the map. No PCIe 5.0 drive development, just controllers. DDR5 expensive as heck. There is no real incentive. Unless AMD brings out Warhol with a 12-20% IPC increase over Vermeer, and Raphael brings a 15-25% IPC increase over Warhol. Warhol would need to not regress on clock speeds from Vermeer, and Raphael would need to increase clock speeds over Warhol. Vermeer is rumored to have a 4.9 GHz all-core clock... excuse me while I go laugh my guts out.
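Those two IPC ranges compound. A quick check of what Raphael would then have to deliver over Vermeer, assuming (as a simplification) that the generational IPC gains simply multiply and clocks are held equal:

$$
\begin{aligned}
\text{low end:}\quad  & 1.12 \times 1.15 = 1.288 \;\Rightarrow\; \approx 29\%\ \text{IPC over Vermeer} \\
\text{high end:}\quad & 1.20 \times 1.25 = 1.500 \;\Rightarrow\; 50\%\ \text{IPC over Vermeer}
\end{aligned}
$$

Any clock-speed gains Raphael adds on top would multiply in the same way.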