No one is saying AMD is doing everything right. I'm just saying that a linear power load probably isn't realistic, considering what the CPU is and how it manages turbo frequencies. This thing is figuring out the best clock for max performance within all of its parameters (not just max W, which is ~140W for a 105W CPU). The assumption that it's not doing something right, and that AMD should have this correct because, well, all the other 3000 CPUs don't show it (well, the 3900X does), is a bad assumption based on bad data, and it's just as dangerous as saying everything AMD does is right. They still have some things to iron out with AGESA, but don't assume this is one of them; 1.0.0.4b was written specifically for this CPU (while, again, ironing out other issues). This is like people getting angry that they couldn't get max overclocks to match top clock speeds. The 3000 series is a new and different beast. The 3900X and 3950X take that new setup to the extreme, and AMD has been developing a turbo method that maximizes the silicon without dramatically affecting yields, almost to perfection on this CPU. Whether it's the right way to go is for people to discuss for years. But it's completely new and alien to everyone, and it's dangerous to assume that because it isn't working like you expect it to, it is in fact a problem and not how it was designed.

Secondly, I wouldn't say that out loud. He hasn't made a whole lot of fans. Not everything he says is crap or unreasonable, but he has been dying on a lot of molehills he should be smart enough to recognize as worthless.

I don't know much about who you're talking to; I don't really track users and their previous replies. I saw a graph, decided it would be really interesting to investigate, and had a quick theory on what could happen in the future, which is fun to add to a discussion to see if it comes true. And to be clear, I also didn't say that it must be a bug and must be fixed. I'm not mad.

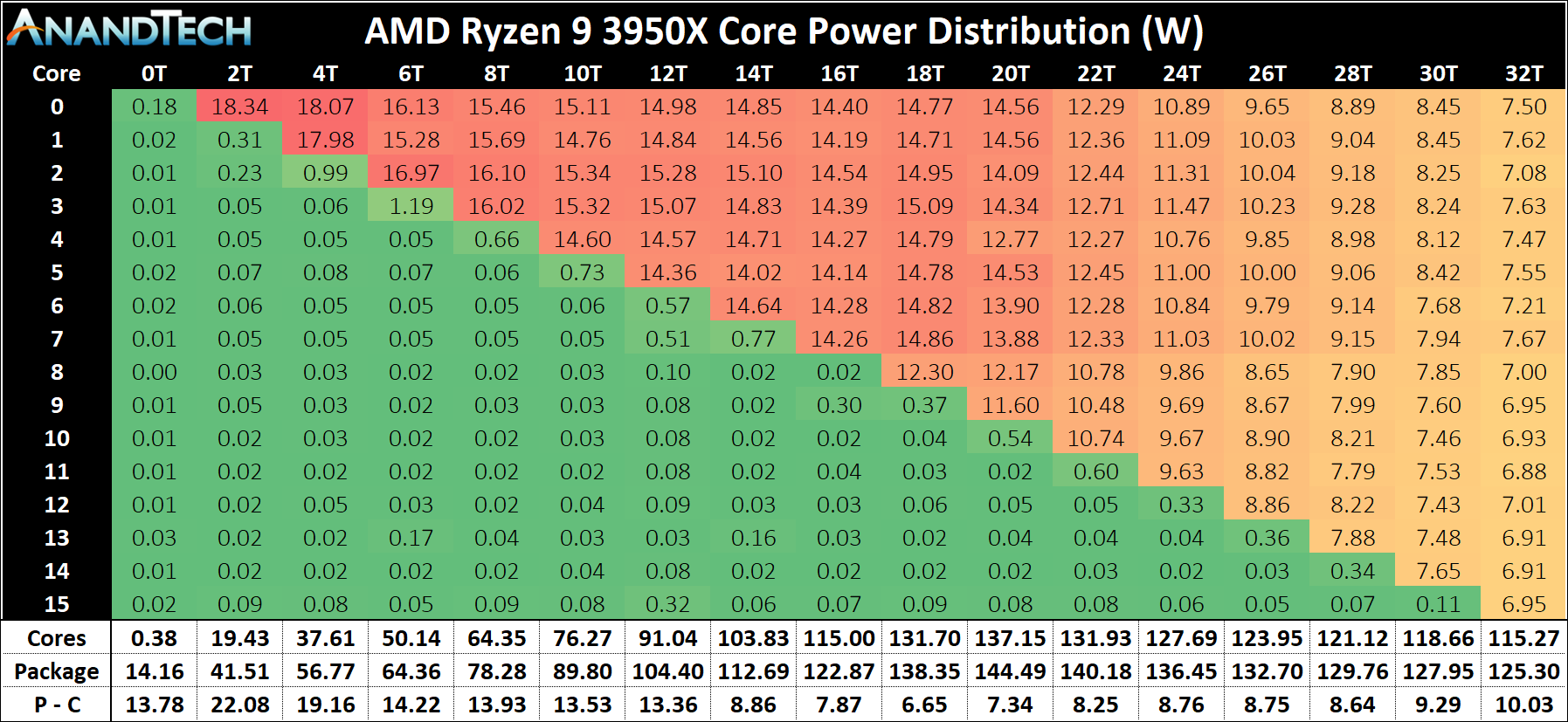

AnandTech's latest article on favored cores and Windows is very interesting, and it goes to show that there is often much more going on under the hood than we could even imagine, not just in the processors but in the OS and AGESA too. Basically, AMD is forcing Windows to favor a single CCX before spilling into the second CCX. I guess it's obvious they would want to do that, to keep data as local as possible. But if you consider that information, it may shed some light on what we're talking about.

Let's look at the 3950X again with that in mind. In particular, consider that each CCD (the 8-core chiplet, made up of two 4-core CCXs) could have its own power curve, and that a second CCD comes into play at 9 cores (18T). Look specifically at the Core 0 voltage trend.

As more cores are added, core 0's watts decrease consistently until you hit 9 cores (18T), where they jump up. That is the transition from using one CCD to using two. Then we see the watts continue their downward trend. A bit of a seesaw. It's almost like the first 2 cores on each CCD are allowed to consume more power, relatively speaking. Note how there is a steep drop-off from 2 cores to 3 cores as well.
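If anyone wants to check that seesaw against raw numbers instead of eyeballing the graph, here's a rough Python sketch. It just flags the active-core counts where core 0's power goes up instead of down. The function name `find_upticks` is my own, and the watt values are made-up placeholders to show the shape of the idea; they are not read from the graph, so swap in real readings.

```python
def find_upticks(watts_by_core_count):
    """Return the active-core counts at which core 0 power rises
    compared with the previous (one fewer core) measurement.

    watts_by_core_count[0] is the reading with 1 active core,
    watts_by_core_count[1] with 2 active cores, and so on.
    """
    upticks = []
    for i in range(1, len(watts_by_core_count)):
        if watts_by_core_count[i] > watts_by_core_count[i - 1]:
            upticks.append(i + 1)  # convert list index to active-core count
    return upticks


# Placeholder core 0 readings for 1..16 active cores.
# These are invented for illustration, NOT taken from the graph.
example = [18.0, 17.0, 13.0, 12.5, 12.0, 11.5, 11.0, 10.5,
           12.0, 11.5, 11.0, 10.5, 10.0, 9.5, 9.0, 8.5]

print(find_upticks(example))
```

With real data, a single uptick at 9 cores would fit the "second CCD kicks in" reading; upticks scattered elsewhere would point more toward noise or something else going on.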

It's confusing, though. If we can assume that the core numbering is consistent, with cores 0-7 on CCD1 (the favored CCD) and core 8 on the second CCD, then the core that's actually on the second CCD is consuming fewer watts (~12W vs ~14W) than the ones on the first CCD. So that throws a spanner in the works for my theory of the first two cores on the second CCD being run at higher power.

Or maybe it's a bug? After all, in my small uneducated mind, I would think that a fresh CCD with low core utilization could accept more watts strictly on thermal headroom.

browser.geekbench.com