Nothingness

Diamond Member

Do you know what laptop that was?

View attachment 101208

We got some early benchmarks at 65 watts and 28 watts. It seems ST decreases a lot when it's set to 28 watts. This is lower than the base M2 in CB 2024!

It’s from Lenovo. Don’t know the model.

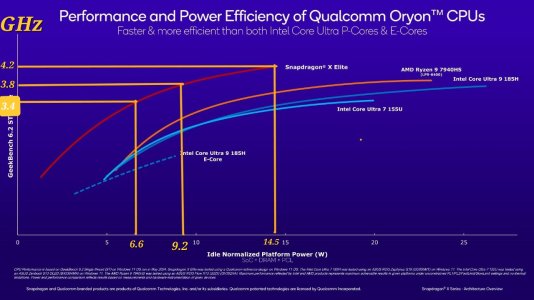

It's fine - great actually - for a part that's on N4/N4P and given where AMD/Intel are. Absolute power consumption topping out at 14W for a 4.2GHz part including full platform vs AMD and Intel where they have a 20-30% lead on them in terms of performance - that gives them both a notable performance gain (or responsiveness) and around a 20-30% energy efficiency gain still at those same power consumptions. This is also their peak.

View attachment 101215

The power consumption of X Elite in ST is too high. 15W may be less than Intel/AMD, but it's not a huge enough difference. Apple M3 is not in this graph, but I assume it'll be like 10W.

This is again just about the fundamental curves. They will likely improve the entire thing, but still keep something hitting 10-15W at peak, albeit with 25-40% more performance at that point. IOW, they will, but they're not going to take the power down to like 8W tops for a laptop part considering this is full platform. It doesn't mean the energy efficiency and slope of the curve won't improve.

X2 Elite should prioritise reducing this power consumption.

I annotated the above graph by mapping the curve to frequency. It's not perfect though, because the curve is for platform power, not core power.

View attachment 101216

Still, we can see that the power has roughly doubled when going from 3.4 GHz to 4.2 GHz.
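To put a rough number on that doubling, here is a minimal sketch that assumes platform power follows a simple power law in frequency, P ∝ f^k. That model is an assumption for illustration, not something read off the graph, and the function name is made up here. It solves for the exponent k implied by "power roughly doubles from 3.4 GHz to 4.2 GHz" and compares that with what plain cubic scaling would give.

```python
import math


def power_law_exponent(f_low_ghz, f_high_ghz, power_ratio):
    """Exponent k such that power_ratio == (f_high_ghz / f_low_ghz) ** k.

    Assumes P is proportional to f**k, which is only a rough model of a real
    voltage/frequency curve (and the graph shows platform power, not core power).
    """
    return math.log(power_ratio) / math.log(f_high_ghz / f_low_ghz)


# Figures from the post: power roughly doubles from 3.4 GHz to 4.2 GHz.
k_doubling = power_law_exponent(3.4, 4.2, 2.0)

# For comparison: the power ratio a purely cubic curve would give over the same span.
cubic_ratio = (4.2 / 3.4) ** 3

print(f"implied exponent k: {k_doubling:.2f}")
print(f"cubic scaling would give a power ratio of {cubic_ratio:.2f}x")
```

An exponent above 3 would just mean the top of the curve is steeper than a simple cubic rule of thumb, which fits the idea that the last few hundred MHz are expensive.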

It is indeed possible this is the case, I admit it's way more likely X Elite is on N4 than 8 Gen 3. Ming Kuo originally claimed it was N4 as did others.

Yeah, it would be pretty suspect if the X Elite is on standard N4, when N4P is available.

The explanation for why X Elite would be using N4 is that its development had started at a time when N4P was not available. It's a reasonable explanation.

Yeah, I don't know that the re-spins would be able to literally take it from N4 to N4P. But a port after an initial N4 run or something I could see, given the design rules are constant.

But Qualcomm could have easily ported it from N4 -> N4P. According to Semiaccurate, we know that they did multiple re-spins of the Hamoa die...

So that's X1E-78 (which both Yoga Slim and T14s use), the version with no dual core boost. What the results show is that even with that limit, the X Elite power consumption is rather high (though that might also be preliminary bad power curves).

FWIW:

I agree with you, but you are looking at it from a technological PoV. What about the market? Intel and AMD are debuting their next gen laptop parts (LNL and Strix) very soon. Those will close the gap with Qualcomm. If the gap is too small then, consumers will feel no reason to buy a Snapdragon X device.

And sure M3 would be 8-10W, and more for the M3 Pro/Max likely. But it's on N3B and Apple is now just iterating year in year out off a base, so. This is QC's first part with custom cores and was saddled with internal drag and lawsuits. Qualcomm just has to consistently be much closer to Apple than the other two, this really isn't complicated.

Then tell me why the 4.2 GHz/4.0 GHz Boost (10W+) is limited to only the top two X Elite SKUs? Every other SKU has no boost and tops out at 3.4 GHz (~7W).

I am thinking about it from a market POV! You are crazy if you think Strix will get AMD's curve to match Qualcomm's. If it did, they'd be talking all about battery life, because that floor being 1/2 to 1/3 the power of AMD/Intel current gen and the rest of the curve giving a 20-40% performance gain is a huge energy efficiency advantage in addition to the lower idle power. So if AMD even cleaned up energy in ST by a substantial amount without cleaning up idle power (which, I think these are semi-related at a platform level anyways but) they'd still be running to the hills about that in the demo. And they have when they had advantages or improvements before, before someone claims "oh AMD doesn't talk about perf/W or GB6 composite ST" (LOL that's BS.)

Seems like we're about to see a performance and battery champion. That's basically a paradigm shift when interacting with a laptop.

RE: The Galaxy Book 4 Edge from the other day:

That thing has a 3K OLED display running at 120Hz (dynamically refreshed from 60-120Hz I believe, but with no advantage over 60Hz stuff), 400+ nits of brightness, and a 55Wh battery.

So 13H of normalized web browsing/streaming + other workload battery at full brightness with speakers on and responsiveness being quite good even at 2.5GHz is.... a really good result for the X Elite. If the battery were 75wh as is increasingly common for 13-14 inch laptops with OLED or higher resolution displays, it'd be more like 17-18 hours.
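The battery normalization above is easy to sanity check. This is a minimal sketch that assumes runtime scales linearly with battery capacity at the same average draw, which ignores any change in display or platform behaviour. The function names are made up for illustration.

```python
def average_draw_watts(battery_wh, runtime_h):
    """Average platform power implied by draining battery_wh over runtime_h."""
    return battery_wh / runtime_h


def scaled_runtime_hours(runtime_h, battery_wh, new_battery_wh):
    """Runtime on a different battery, assuming the same average draw."""
    return runtime_h * new_battery_wh / battery_wh


# Figures from the post: 13 hours on a 55 Wh battery, hypothetically scaled to 75 Wh.
draw = average_draw_watts(55, 13)
runtime_75 = scaled_runtime_hours(13, 55, 75)

print(f"average draw: {draw:.2f} W")
print(f"estimated runtime on 75 Wh: {runtime_75:.1f} h")
```

Under that linear assumption the 75 Wh figure lands inside the 17-18 hour range quoted above.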

Granted I think this value will go down at 4GHz, but 3.4GHz should be able to maintain the same energy efficiency, just more performance.

Unclear what you mean here. I said:

Then tell me why the 4.2 GHz/4.0 GHz Boost (10W+) is limited to only the top two X Elite SKUs? Every other SKU has no boost and tops out at 3.4 GHz (~7W).

Rare for now. 4GHz X Elite is in the XPS, Galaxy Book, Surface Laptop, so more likely just very SKU specific which is a good use of it.

And to top that off, those top two X Elite SKUs are also extremely rare. The vast majority of Snapdragon "X Elite" laptops have the X1E-78-100.

Yep. I couldn't believe it was 55Wh, I was assuming it was 70-75 and that would still be a great result. It's honestly ridiculous.

Now one could drive a high-end laptop with amazing features active and not worry about battery life. OEMs can deliver amazing laptops and new form-factors without being constrained by the CPU.

The future iterations of Snap X series will certainly be something to see.

I understand why. The 4 GHz+ parts exist as flag carriers. They pushed the clocks to the limit of the silicon, as you can see in this power curve how the power shot up by 50% for 3.8 -> 4.2 GHz.

You don't seem to actually understand why those SKUs exist. They exist because of binning and because Qualcomm decided they could push clocks some at acceptable power tradeoffs - the X Elite 78 and X Plus SKUs are lower quality and couldn't be pushed due to either inability or unacceptable leakage and power tradeoffs at those frequencies.

They really need to reduce the ST clock difference between the topmost and bottom-most SKUs. 3.4 GHz -> 4.2 GHz is a substantial 23% difference. This kind of difference is never seen in Intel/AMD/Apple parts.

That's why I'm saying they'll do it again next time, albeit a node gain and IPC gains along with probably other cache changes will "push" the performance upwards at any given power, and also push the floor SKU clocks upwards. This is why I was mentioning what Apple could do since they don't really frequency bin.

So next time the entire curve will improve, and the base SKUs might be 3.8-4GHz with N3P, and permitting binning and timing constraints for the core, the new top SKUs might be at 4.4-4.6GHz.

Speed demons (x86 cores) vs Wide Monsters (ARM cores).

The reason AMD/Intel stuff is higher clocked and can get fine bins still is less because of like core size and timing at this point (Lion Cove is still plenty wide and Zen 5 added some heft) and more because of the transistor choices, which impact the frequency you can hit and also the yields for those frequencies. See why Qualcomm's bottom SKUs are around 3.4GHz and similar for Apple on N4/5, whereas AMD can easily assure 4.8-5.2GHz on N4/N4P mobile parts.

LOL.

That power tradeoff from 3.4 to 4GHz is nothing like the BS AMD and Intel do where the slope is nearly flat from 10-25W and at a slow incline.

Assuming a 25% IPC gain and going through some N3P boosts to clocks across the SKUs (and also knowing the core structure itself already allows them to hit up to 4.4GHz at a technical level).

They’re also binning P cores with that now, in a horizontal sense, but Qualcomm does that already anyways in addition to the vertical frequency binning. Either way, be it due to Apple’s tools and layout in combination (they’ve recently had notably higher clocks than Cortex Arm competitors and this has even increased in the last few years) or yields, if 4.4GHz on mass parts with N3E is possible for Apple and at a power point that Qualcomm already has no issue taking with N4P, then I think we could see substantial clock gains throughout the range with X2 on N3P. You wouldn’t get as much power savings at these peaks, but it’s still lower than AMD/Intel do at the platform level.

I think that base SKUs for X2 will be running 4GHz, with 4.2-4.6GHz models becoming much more common.

Yes ofc. But also that tradeoff, while bad, won't actually be made for most parts and is still again far better than the tradeoffs made in most cases. It's not worth going nuts over.

View attachment 101223

Again it all harks back to the rumours that X Elite was really supposed to launch in 2023 and compete with M2, but it got delayed for various reasons. Qualcomm had to pull several tricks, such as 4.3 GHz boost and running it in Linux, to match the M3 in GB6 ST.

Major IPC increase doesn't come free, without a power increase.

Assuming a 25% IPC gain and going through some N3P boosts to clocks across the SKUs (and also knowing the core structure itself already allows them to hit up to 4.4GHz at a technical level).

Doing the math, a 4GHz (about 17%) clock gain over 3.4GHz for the base X2 parts would have them at 3566 GB6 ST.

For the existing 4GHz part going to 4.4GHz, you'd be at like 3850 GB6 ST.

And for 4.2GHz, if they went to 4.6GHz for almost a 10% gain and they could get about a 4038 GB6 ST (again adjusted from their existing score at that frequency).
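The arithmetic behind those three projections can be sketched as follows. It assumes GB6 ST scales linearly with clock and that the 25% IPC gain multiplies on top, which is the simplification the post itself is using. The helper names are made up for illustration, and the baseline scores are not stated in the post, so `implied_baseline` only back-derives them from the quoted projections.

```python
def clock_uplift(f_old_ghz, f_new_ghz):
    """Fractional clock gain, e.g. 0.10 for a 10% increase."""
    return f_new_ghz / f_old_ghz - 1.0


def combined_multiplier(f_old_ghz, f_new_ghz, ipc_gain):
    """Score multiplier assuming score is proportional to IPC times frequency."""
    return (1.0 + ipc_gain) * (f_new_ghz / f_old_ghz)


def implied_baseline(projected_score, f_old_ghz, f_new_ghz, ipc_gain):
    """Back-derive the current-gen score a projection must have started from."""
    return projected_score / combined_multiplier(f_old_ghz, f_new_ghz, ipc_gain)


IPC_GAIN = 0.25  # the 25% IPC gain assumed in the post

# (current clock, projected X2 clock, projected GB6 ST) as quoted in the post
projections = [(3.4, 4.0, 3566), (4.0, 4.4, 3850), (4.2, 4.6, 4038)]

for f_old, f_new, score in projections:
    gain = clock_uplift(f_old, f_new)
    mult = combined_multiplier(f_old, f_new, IPC_GAIN)
    base = implied_baseline(score, f_old, f_new, IPC_GAIN)
    print(f"{f_old} -> {f_new} GHz: clock +{gain:.1%}, "
          f"total x{mult:.3f}, implied baseline {base:.0f}")
```

The same `clock_uplift` helper applied to 3.4 -> 4.2 GHz gives the roughly 23% spread between the bottom and top SKUs mentioned earlier in the thread.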

Yep 😉

Too much could happen again, I don't have hopes up, but given GWIII's comment

That depends on what you do, though. And it also depends on what goes on with cache and physical design changes. You're right, but you're just missing that the process gain for the frequency iso-architecture isn't the only change that will occur in tandem to + IPC and + some extra power. If the added IPC took power up 10-15% for the core throughout the whole frequency curve before node changes, but extra L2 and SLC took data movement down, they could be just fine. I know what you're thinking here and I don't disagree but it just depends.

You are assuming the node changes are the only form power reductions can come in. Even with Nuvia I bet they have some phydes tweaks and core cache changes. For ex: Apple with the A16 took frequency up by another 10% despite moving from N5P to N4, but with 33% more L2 and some other tweaks they brought core power (iso-performance) down by 20% per their own presentation, and in practice after that and admittedly LPDDR5 (which tbf I think helped for the platform power) they got GB5 scores up at around the same power.

If you are using the entirety of the node gains to increase the frequency, then you have no more node gains left to cover for the power increase brought by widening the core.

This is why my opinion is that Qualcomm should not jack up the clock speed for Oryon V2, but do a clock regression + major IPC improvement. This way, they can deliver a major performance uplift without increasing the power envelope from 15W ST (or maybe even reduce it).

From a feature standpoint, the Adreno X1 GPU architecture is unfortunately a bit dated compared to contemporary x86 SoCs. While the architecture does support ray tracing, the chip isn’t able to support the full DirectX 12 Ultimate (feature level 12_2) feature set. And that means it must report itself to DirectX applications as a feature level 12_1 GPU, which means most games will restrict themselves to those features.

Here are the six levels of Ray tracing, as defined by Imagination:

As previously noted, there is ray tracing support, and this is exposed on Windows applications via the Vulkan API and its ray query calls. Given how limited Vulkan use is on Windows, Qualcomm understandably doesn’t go into the subject in too much depth; but it sounds like Qualcomm’s implementation is a level 2 design with hardware ray testing but no hardware BVH processing, which would make it similar in scope to AMD’s RDNA2 architecture.