Your data is confusing. Afterburner says 1.2V, then you have a "min" VRM voltage of 1.08V, but it maxes out at 1.167V. Is that 1.08V your idle voltage?

No. As you can see, my GPU usage was at 99% when I took that screenshot, and it had been running at 99% for an extended period to show you I am not faking it. The minimum and current readings are the same because I opened it to take the screenshot for you while the card was still at 99% load. My card averages 1.088-1.092V at full load and peaks at 1.174V at most (which is what I have in my sig). The idle voltage is 0.804V.

Here are my idle clocks: 300MHz, with the voltage dropping to 0.804V.

http://imageshack.us/photo/my-images/849/7970idle.jpg/

MSI Afterburner or Sapphire Trixx only set your target voltage, not your actual voltage.

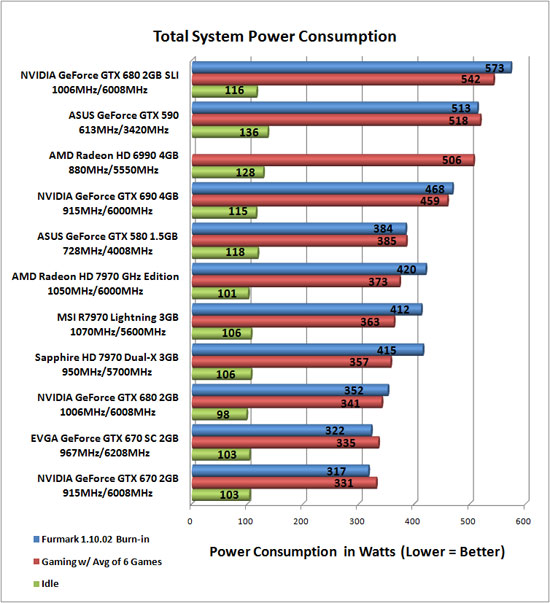

The point is that if you drop memory clocks even lower, you can come in at 170-180W at 99% GPU load, and even an average 7970 doesn't need 1.25V to reach 1150MHz. So you can build a rig with several 7970 cards mining and it will still draw a reasonable amount of power.

Actually, at 1.174V you're only a mere 0.026V away from the 1.2V sickB mentions. You are "around" 1.2V.

No, 1.174V is only the maximum, reached at random peaks. A random peak is not comparable to the 1.175V average or the 1.25V of the 7970 GE reference cards used in reviews. My average is 1.088-1.092V running 24/7, and I am guessing Elfear's is around 1.08V. These are not golden samples. A golden sample 7970 will hit 1280-1310MHz on air at under 1.3V in MSI AB.
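The disagreement is really about peak versus sustained voltage. A quick check using only the figures quoted in this thread shows the difference; the helper name `gap_mv` is just for illustration.

```python
# Hypothetical helper: how far a reading sits below a reference voltage.
# All voltages are figures quoted in this thread, in volts.
def gap_mv(reading_v: float, reference_v: float) -> int:
    """Return reference minus reading, rounded to whole millivolts."""
    return round((reference_v - reading_v) * 1000)

peak_v = 1.174                        # occasional peak under load
avg_low_v, avg_high_v = 1.088, 1.092  # 24/7 average range under load
target_v = 1.2                        # value sickB mentions
ge_avg_v = 1.175                      # average attributed to 7970 GE reference cards

print("peak vs 1.2V:", gap_mv(peak_v, target_v), "mV")
print("average vs 1.2V:", gap_mv(avg_high_v, target_v), "to", gap_mv(avg_low_v, target_v), "mV")
print("average vs GE average:", gap_mv(avg_high_v, ge_avg_v), "to", gap_mv(avg_low_v, ge_avg_v), "mV")
```

The peak sits about 26 mV under 1.2V, but the voltage the card actually runs at most of the time sits roughly 108-112 mV under it.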

http://www.legitreviews.com/article/1962/15/

To get an average of 1.175V, you would likely need to set 1.25V in MSI AB or flash your card with the 7970 GE BIOS AMD sent out in June 2012, which forces the voltage to stay above 1.21V at all times, with a peak of 1.25V. AMD did this to ensure every single reference 7970 hits 1050MHz, even the ones with an ASIC quality of 50-55%; that is why the BIOS was sent out. Any HD7970 reference board will run 1050MHz with the GE BIOS if you care to flash it.

Of course, hardly any enthusiast will bother with that BIOS, since manual overclocking gives you more control over the voltage, and that control is exactly what lets you dial in 1150MHz at 170-180W power consumption at 99% GPU load.
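A common first-order rule of thumb for why the lower voltage matters is that CMOS dynamic power scales roughly with V² times clock. That model is an assumption, not something measured in this thread: it ignores leakage, memory, fan and VRM losses, so it only shows the direction and rough size of the effect. The function name below is hypothetical.

```python
# First-order CMOS dynamic-power model (assumption): P_dyn is proportional to V^2 * f.
# Leakage and other board power are ignored, so this is a rough estimate only.
def relative_dynamic_power(v_new: float, v_ref: float,
                           f_new_mhz: float, f_ref_mhz: float) -> float:
    """Dynamic power at (v_new, f_new) as a fraction of power at (v_ref, f_ref)."""
    return (v_new / v_ref) ** 2 * (f_new_mhz / f_ref_mhz)

# Same 1150MHz clock: ~1.09V manual average vs. a 1.175V average.
ratio = relative_dynamic_power(1.09, 1.175, 1150, 1150)
print(f"{ratio:.2f}")  # prints 0.86
```

Under this simplified model, running about 1.09V instead of 1.175V at the same clock cuts dynamic power by roughly 14%; real wall-power savings will differ.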

In any event, with both of my cards I need 1.225V to hit 1180MHz. One of the cards also has an ASIC quality of 87%.

Keep in mind that your 87% ASIC quality is measured against other Pitcairn chips. You can't compare ASIC quality across two different chip families such as Pitcairn XT and Tahiti XT. The figure applies to a specific chip family and compares your chip against a database of chips from that family. Pitcairn XT is not compared to Tahiti Pro (7950) or Tahiti XT chips, so 87% on Pitcairn is not necessarily better than 70% on Tahiti XT. Other factors also come into play, such as your GPU and VRM temperatures.

EDIT: Wait, is Sickbeast referring only to his Pitcairn? I thought he bought a 7950 too. Why are we even discussing this? As Elfear pointed out, if he is basing his clocks on Pitcairn, that settles the question of the voltage-to-clock ratio.

Ya. Pitcairn has its own parameters and other chips have their own. Just because a Pitcairn needs 1.225V to hit 1180MHz doesn't mean that can be extrapolated to a completely different chip, even if it's also made by AMD on 28nm. Comparing how much voltage Pitcairn needs for a certain clock vs. Tahiti would be no different than comparing Pitcairn to GK104.

Regarding your question of why my memory is stuck at 1000MHz: ya, you don't need it. I just did a full reinstall, and for some reason unofficial overclocking mode 2 isn't working with the latest Cats 12.9 and AB 2.2.4. Any ideas? I might need two missing .dll files from Cats 12.2 or earlier.

==============

If anyone finds more info on GTX780, please link it!