NVidia has a much larger product line up than just cutting edge parts. They are still cranking out 16nm Switch parts, embedded GPUs and Tegras on older processes, and they brought the Samsung 14nm 1050ti back from the dead to fight the GPU shortages. There's parts that they could move over to 12FDX in order to expand volume (e.g. entry level GPUs), if the process were as good as you claim.

The issue isn't whether the process is as good as GlobalFoundries or Samsung claim. Rather, it suffers from "it is not out now" syndrome, or "it doesn't scale with the generation the shrinks are currently on".

For Nvidia this would be specific to Samsung, not GlobalFoundries. The nodes are:

28FDS [2015] // 28FD Xtors

28FDS Gen2 [2017] // 28FD+ Xtors == no numbers from Samsung whatsoever.

18FDS [2019] // 14FD Xtors

18FDS is not area or performance competitive with 14nm Family nodes at Samsung.

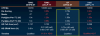

18FDS = 90CPP/64Mx, effectively succeeding 20LPP from Samsung.

14LPP = 78CPP/64Mx, ~30% more performance and ~0.786x the area of 18FDS.

For a shrink going forward it has to be 10nm FDSOI, which targeted 64CPP/48Mx (at STM). That sits in between 10LPP's 68CPP/48Mx and 8LPP's 64CPP/44Mx.
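A quick way to sanity-check the "in between" claim is to multiply CPP by Mx as a rough footprint proxy. This is only a sketch: the proxy ignores track height, fin/diffusion details and design rules, and the `footprint` helper and dictionary labels are made up for illustration.

```python
# Rough footprint proxy: contacted poly pitch (CPP) x metal pitch (Mx), in nm^2.
# Assumption: CPP x Mx alone is used as the area proxy; real cell area also
# depends on track height and design rules, which are not modelled here.
nodes = {
    "10nm FDSOI (STM target)": (64, 48),
    "10LPP": (68, 48),
    "8LPP": (64, 44),
}


def footprint(cpp_nm, mx_nm):
    """Return the CPP x Mx product in nm^2."""
    return cpp_nm * mx_nm


areas = {name: footprint(*pitches) for name, pitches in nodes.items()}

# Print from smallest to largest footprint proxy.
for name, area in sorted(areas.items(), key=lambda kv: kv[1]):
    print(f"{name}: {area} nm^2")
```

By this proxy the 10nm FDSOI target lands between 8LPP and 10LPP.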

Relative to GlobalFoundries, Samsung optimizes for power over performance (compared to STM). Other than that, Samsung is 1:1 with the STMicroelectronics roadmap but delayed, including using the non-pluses first.

18FDS Gen2 at earliest is 2021 and 8FDS Gen1 at 2023.

Switch is EOL on 16nm, btw. NX2/Dane/Shield2, Embedded and Tegras are all 8nm, and the prior stack (rest their souls) is stone-cold dead and no longer being fabbed. So the stack of value is still more or less 8nm-only going forward.

----------------------

On the AMD-side, they are required to fab at GlobalFoundries and they don't need the shrink.

28nm IP as-is is good enough, but consider what is available on 22FDX: LDMOS for PMIC integration, RF for RF integration, and analog for audio codec integration. That means the effort of going from 28nm to 22FDX translates into lower costs for board manufacturers. However, I believe the above will most likely be pushed to semi-custom. The idea is to go from good-enough on 28nm to excelling on 22FDX with newer IP, hence the ULP archs. AMD can do this, and since the bottom of the stack is QM215, RK3566, BCM2711, JH7110, PicoRiov3, PowerPI, etc., there isn't any push to use leading-edge 7nm/5nm to compete against them. So it is better to opt for 22FDX instead.

Rather than finessing a 150 mm2/170 mm2 14nm/6nm leading-edge die to push for low cost and low power, there are cost savings in refactoring the 107 mm2/125 mm2 28nm trailing-edge dies to push for low cost and low power.

Refactor Jaguar-Excavator into a single ULP CPU arch, with Jaguar's client/embedded-focused FE, Core, LS, FPU, BU, L2 rather than Excavator's HPC/server-focused FE, Core, LS, FPU, BU, L2.

Refactor GCN to ULP GPU Arch. Value-ended integrated GPU is going more aggressively half compute[FP64/INT8]-half gaming[RT processor-inclusion].

Refactor system IP to fit into sub-100 mm2.

----------------

The rant:

Upscaled 14FD+ w/ 36 Masks to upscaled 14++ w/ >47 masks.

Then, there is 22FDX+ in second half 2020 with RF+ in Q1 2021. With improved digital performance: "With both digital and RF enhancements, the new 22FDX RF+ solution is optimized to boost the performance of front-end-module (FEM) designs." With no mention of how much the digital aspect even improved, either....

https://www.globalfoundries.com/sites/default/files/22fdx-product-brief.pdf <== Updated in 2019, still used 2016-2017 transistor performance instead of 2018-2019 transistor performance.

https://gf.com/sites/default/files/2021-10/GF21-22FDX 0927.pdf <== Updated in 2021, still using 2016-2017 numbers, doesn't even mention 22FDX+ which they launched the year before. Also, has an error built in with 28FDSOI instead of 28BULK.

Compared to the 28nm generation, it is very easy to get performance numbers for: 28HP -> 28HPP -> 28SHP/28A -> 28HPA. Getting performance numbers for every little transistor improvement for 22FDX is pretty difficult, since GlobalFoundries isn't bragging about them.

There is the 22FDX Risk Production transistor, which is the one shown up above in the GF22FDX versus everything and in the PDFs. Then there is the 22FDX Volume Production transistor, which appeared in Q3 2019, and the 22FDX+ transistor, which appeared in Q3 2020. Basically, two major spins of 22FDX have not been benched at all other than in paywalled IEEE papers.

22FDX RP Xtor has an expected Tsi of 7nm, BOX=20nm.

22FDX VP Xtor has an expected Tsi of 6nm, BOX=20nm.

This has relatively huge implications for performance, since Tsi^2 and Tsi are in the equations for electrostatics. There are also the source/drain perf booster, the gate implant/oxide perf booster, and the MOL contact lower resistance/capacitance perf booster. These were not present in 22FDX risk, but were when 22FDX went volume in Q3 2019.

22FDX+ from STMicroelectronics Roadmap has BOX=15nm and Tsi reduced by 15%-10%, which means Tsi is 5.1nm~5.4nm.
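The 5.1nm~5.4nm range follows directly from applying the 10% to 15% reduction to the 6nm Tsi of the 22FDX VP transistor. A minimal sketch of that arithmetic (the `scaled_tsi` helper name is just for illustration):

```python
# Tsi of the 22FDX volume-production transistor, as quoted in the post.
TSI_VP_NM = 6.0


def scaled_tsi(base_nm, reduction):
    """Return the silicon thickness after a fractional reduction (0.15 = 15%)."""
    return base_nm * (1.0 - reduction)


tsi_low = scaled_tsi(TSI_VP_NM, 0.15)   # 15% thinner
tsi_high = scaled_tsi(TSI_VP_NM, 0.10)  # 10% thinner

print(f"22FDX+ Tsi estimate: {tsi_low:.1f}nm ~ {tsi_high:.1f}nm")
```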

Which is involved with this:

www.st.com

There is also a new "Nano-thin SOI" transistor to better exploit the upcoming Tsi=3.5/Box=10, which back in the IBM days demonstrated a planar Lg/Effective Gate of ~11nm. It is supposed to be introduced last in 22FDX+, but first in 12FDX; effectively CTI from the 90nm/65nm generation =

https://www.anandtech.com/show/2018 .. It replaces the Ultra-Thin SOI transistor, which was built for Lg=100-50nm and Tsi=50-10nm.

-----

Back to AMD:

Start in early production for most obsolete implementation or start in mature production for least obsolete implementation.

22FDX 2019 -- DDR4/LPDDR4 only

22FDX 2021-2022 at least has DDR5(Open-Silicon IP)/LPDDR5(Innosilicon{TSS-OPENEDGES} IP).

Even though the A10 Micro-6700T/A8-7410 embedded versions are still technically available for purchase up to 2023, it would probably be more out in the open if it also had DDR4 capability.

Products formerly Elkhart Lake (ark.intel.com):

Dual/Four cores @ 1.5 MB L2, no L3. = $37 to $80 RCPs.

There is still a case for no L3.

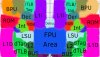

Starting off with Jaguar's FPU: A single Jaguar FPU is 0.39 mm2, with 2cJag being 5.42 mm2 if you take out the two FPUs. Also, when you have a Jaguar core and a horizontally flipped Jaguar core:

The FPU areas are directly next to each other. With 2x FP-Mul and 2x FP-Add, the only things needed are a bridge unit for FMAs and the addition of AVX2 support. Then, FPU unit-wise, it is similar to Zen.

Was too lazy to hack the rename parts, which would probably be fused like Zen3's 2x FPU unit. Zen3's FPU diagram (Zen3 Recap/Zen3 overview slides) shows a single PRF, but as you can see on die, Zen3 is two 160?-entry PRFs tied to three 256-bit units.

Zen1, single 160-entry large PRF tied to four 128-bit units:

Flat shrink via measurement tools;

If early node promises actually followed the graph, green-line 22FDX and purple-line 22FDX+(22FDX-FBB=22FDXPlus-ZBB):

Scaling from the >52% slide:

From a refactoring and improvement side:

With Jaguar being 5-tiled, Zen/Zen2 20-tiled, and Excavator 63-tiled, this leans towards refactoring up from Jaguar being the cheapest.

-----

1x Single Core = 1x Area, 1x Perf

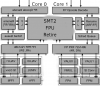

2x Thread in SMT = ~1.05x Area, ~1.3x Perf

2x Core in CMP = 2x Area, ~1.7x perf

2x Cluster in CMT = ~1.5x Area, ~1.8x perf

^-- Actual scaling that CMT was meant to do.
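To compare the four options on equal footing, it helps to divide perf by area. A small sketch using the approximate multipliers listed above (the dictionary labels and the `perf_per_area` helper are illustrative only):

```python
# (relative area, relative perf) for each option, using the approximate
# multipliers quoted in the list above.
designs = {
    "1x core": (1.00, 1.00),
    "2x thread (SMT)": (1.05, 1.30),
    "2x core (CMP)": (2.00, 1.70),
    "2x cluster (CMT)": (1.50, 1.80),
}


def perf_per_area(area, perf):
    """Return relative performance per unit of relative area."""
    return perf / area


ratios = {name: perf_per_area(*vals) for name, vals in designs.items()}

# Print from best to worst perf per area.
for name, ratio in sorted(ratios.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {ratio:.2f}x perf per area")
```

With these numbers SMT and CMT both come out ahead of a single core on perf per area, while plain CMP falls below it.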

-----

Q4 2009--Q1 2018 only positive N.I. = $7.631B

Q4 2020--Q3 2021 N.I. = $12.778B

Risk is lower than ever, since in a continuous period of four quarters AMD made more than they made discontinuously over the previous nine years.
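For scale, the ratio between the two net income figures quoted above works out as follows (a trivial sketch, variable names are illustrative):

```python
# Net income figures quoted in the post, in billions of USD.
NI_POSITIVE_QUARTERS_2009_2018 = 7.631  # sum of positive-N.I. quarters only
NI_Q4_2020_TO_Q3_2021 = 12.778          # four consecutive quarters

ratio = NI_Q4_2020_TO_Q3_2021 / NI_POSITIVE_QUARTERS_2009_2018

print(f"Recent four quarters vs. 2009-2018 positive quarters: {ratio:.2f}x")
```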

22FDX -> 12FDX == Totally a GlobalFoundries route.

12LP+ -> ???? == Not a GlobalFoundries route.

22FDX w/ Zen-like and RDNA-like performance has a shrink available down the road.

12LP+ w/ Picasso-(plus sized) Zen3 and RDNA2 at reduced performance does not have a shrink available.

(Monet = 4C Zen3 + A couple of WGPs, which is in-line with;

https://www.amd.com/en/products/apu/amd-ryzen-3-3350u

https://www.amd.com/en/products/apu/amd-ryzen-5-3450u

Which happens to be Mendocino's target range as well, with Zen2+RDNA2 on TSMC's cost-effective 6nm.

Cost per layer compared: SADP/SAQP layers = 0.71x/1x each layer && EUV layer = 0.57x each layer, basically 7EUV(6nm) costs the same as 14DUV(14/12) in HVM.))

For GlobalFoundries it is better to have AMD have a CPU-GPU-APU-SCBU product line on their highest returning node, rather than a single APU on their lowest returning node. In this case AMD is buying 22FDX for 12FDX down the road. (If extra capacity is needed apart from Fab 1/Bernin II, there is Fab 7/Pasir Ris and Fab 8/O'Fallon.)

Whereas until JFIL springs to life, there is no 7LP coming from buying 12LP+. In a really bad cross-translated news article: Canon will strive to apply the NIL mass production technology to multiple chip fields, including PC, CPU, DRAM and other chip equipment. Canon stated that this technology can be applied to the manufacturing of 5nm chips within 2025, that is, within the next 4 years.

---

Finding LV cache: The 22FDX Low-Power CPU/GPU architectures' L2 caches are apparently using the 0.65V 6T LV Cell (in a custom cache design) from that 22FDX v. 22FFL slide.

Three CPU categories and their most current introduction family: Performance(Zen), Value(Jaguar), Pervasive(Bobcat)

GPUs are split in three as well and their most current implementations: Compute(CDNA), Gaming(RDNA), Quiet/Value(Not yet revealed)

Value-Pervasive => ULP CPU Arch

Value-Quiet => ULP GPU Arch

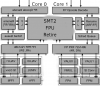

Performance is sub-categorized: Zen4(Performance Cache) - Zen4c(Dense Cache)

ULP Arch's cache design is also supposed to be scaled up to Zen[x][?](Low-voltage Cache) and CDNA[x][?](Low-voltage cache). I assume these two will be released as reduced TDP versions for low cost cooled server solutions. CPU-side Full Core Count: 85W/99W/115W and GPU-side Full CU Count: 100W/125W/150W.

TSMC 7nm SRAM Vmin is 0.5V

TSMC 5nm SRAM Vmin is 0.4V

LV Cache is meant to operate near those voltages, but the closer it gets, the larger the cache design gets. If the L2/L3 operate at those voltages, so will the CPUs. Zen2 64C/128T seems to operate at 0.85V on average. In this case, with LV cache plus a shrunk ULP BPU, the core would be pushing 0.65V or lower nominally. The range does appear to go straight to Vmin, opening up 0.4V through sub-0.75V.

96c/192t w/ Reduced Capacity/LV Cache = sub-150W target

96c/192t w/ Performance Cache = 200W+ up to 600W

128c/256t w/ Dense Cache = 200W+ up to 400W?

This hints at the low voltage SKU on 22FDX being 1/4th the TDP of normal voltage SKU of 28nm(Bhavani/Beema and Stoney).
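As a rough cross-check on the 1/4th figure, dynamic power scales with C·V²·f. The sketch below assumes TDP tracks dynamic power only, with capacitance and frequency held equal between the two SKUs (neither is stated in the post, and leakage is ignored), and asks what reference voltage a 1/4 TDP at 0.65V would imply. The helper names are made up for illustration.

```python
import math

LV_VOLTAGE = 0.65  # low-voltage operating point discussed above
TDP_RATIO = 0.25   # "1/4th the TDP"


def dynamic_power_ratio(v_new, v_old):
    """P ~ C * V^2 * f; with C and f held equal (assumption), only V^2 remains."""
    return (v_new / v_old) ** 2


def implied_reference_voltage(v_new, power_ratio):
    """Invert the V^2 relation to find the voltage the ratio is measured against."""
    return v_new / math.sqrt(power_ratio)


v_ref = implied_reference_voltage(LV_VOLTAGE, TDP_RATIO)

print(f"Implied reference voltage: {v_ref:.2f}V")
print(f"Check: ({LV_VOLTAGE}V / {v_ref:.2f}V)^2 = {dynamic_power_ratio(LV_VOLTAGE, v_ref):.2f}")
```

Under pure V² scaling, a quarter of the power corresponds to half the voltage. Any frequency or capacitance difference between the nodes would shift that.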