News AMD Announces Radeon VII

DrMrLordX

Lifer

I kind'a have to agree with Peter here though.

Seriously?

https://www.anandtech.com/show/12910/amd-demos-7nm-vega-radeon-instinct-shipping-2018

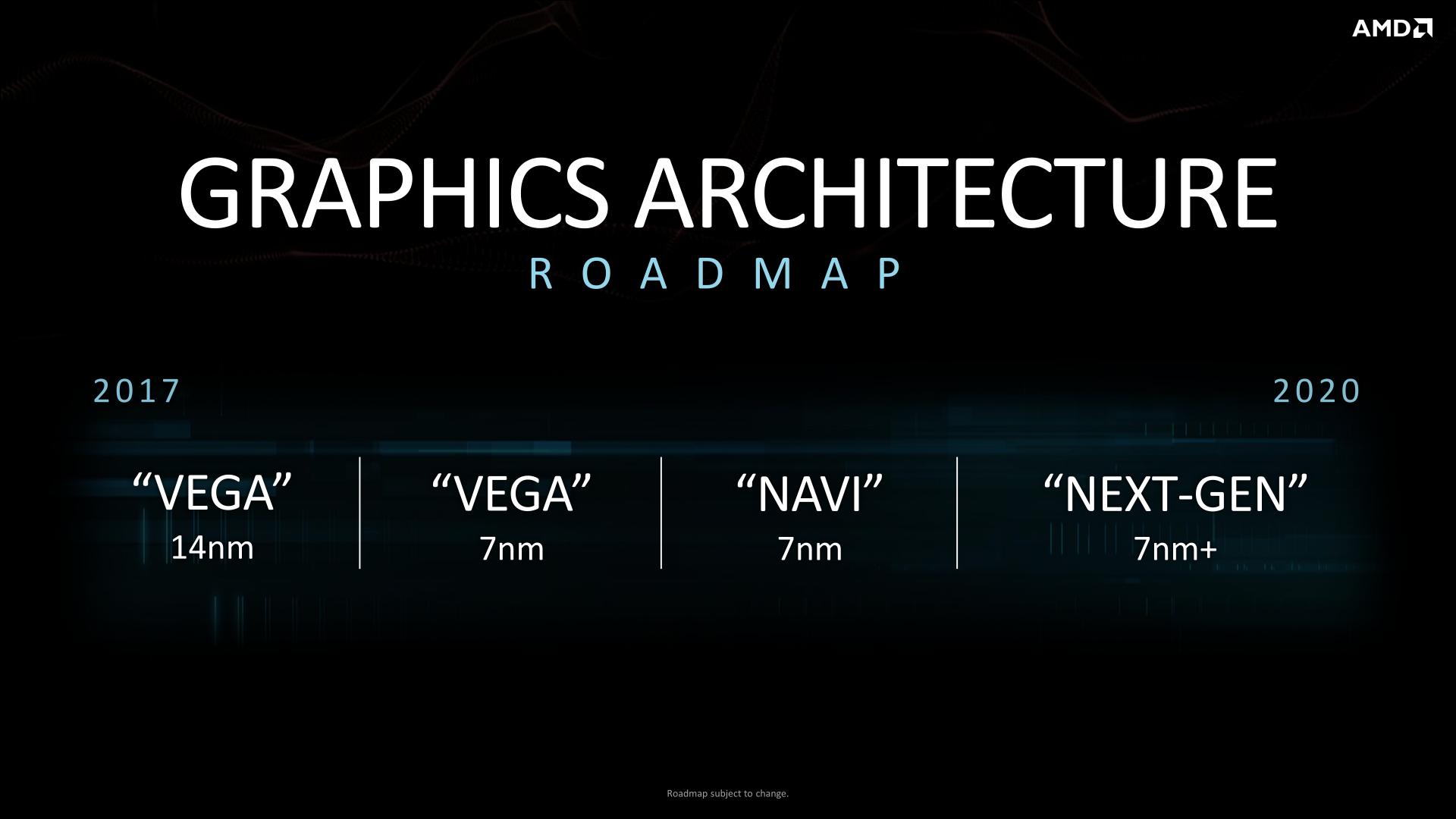

Look at the roadmap! There's no consumer Vega20 on there, period. Are you asking people to disprove a negative here? Do you think AT was wrong? Cmon now. This is getting ridiculous.

Show me one single quote where AMD said this.

Done with you. You can have the truth in your face all day, and you will pick nits or ask for the absurd.

Not even sure why it's such a big deal whether AMD said they would or would not release a consumer version.

Because some people around here think that AMD will actually make money selling these things as Radeon VII, and that they could actually sell them for less if they really wanted to, which is probably not true on either count.

It's only a big deal to certain individuals. I for one like a market with options but it seems some people on this forum don't.

You mean like the ones that will be pre-ordering one soon? Oh wait. How does that work?

Personally I think I am very fortunate to have the opportunity to buy one of these cards. Consumer Vega20 did not appear on any roadmaps. AMD blew whatever chance they had at a 12nm Vega refresh, and Navi still looks to be heading our way in Q4 2019 with products that may only be suitable to replace Polaris. I wanted a Vega refresh, and now I get one. Hooray for me!

Meanwhile, the rest of the world wanted dGPU prices to come down. AMD instead launches a card that can't realistically be sold for anything less, which helps cement $700 as a starting point for high-end cards.

If Nvidia had released the RTX cards with a normal pricing structure, I don't think AMD would have released a card tying the 2080 for a couple hundred additional $. The implication is that the higher than expected prices allowed this to happen. It's not like they have a large ability to cut prices with a 4 HBM2 stack 7nm die.

Correct. AMD could not justify Radeon VII under any other circumstance. Well okay, they could justify it if NV had not released RTX cards at all. Who knows what they would charge under those circumstances. Regardless, that did not happen.

PeterScott

Platinum Member

Seriously?

https://www.anandtech.com/show/12910/amd-demos-7nm-vega-radeon-instinct-shipping-2018

Look at the roadmap! There's no consumer Vega20 on there, period. Are you asking people to disprove a negative here? Do you think AT was wrong? Cmon now. This is getting ridiculous.

Disprove a negative? Are you referring to the absence of evidence argument? It goes like this: "Absence of evidence is not evidence of absence". In fact this is what the people who leaped to the conclusion that there would be no 7nm Vega for gaming were guilty of. There was no specific mention of a 7nm gaming Vega, so they assumed there would be none (contrary to previous precedent and the obvious reasons why it wouldn't be mentioned yet).

Yes, let's look at the roadmap from the Computex story you linked:

Note the total lack of Pro/Data Center/Consumer divisions. This Roadmap fits exactly what we have today with a consumer 7nm Vega 20.

Done with you. You can have the truth in your face all day, and you will pick nits or ask for the absurd.

Yeah, how dare I ask for some actual evidence, for the baseless conclusions you jumped to. 🙄

Because some people around here think that AMD will actually make money selling these things as Radeon VII, and that they could actually sell them for less if they really wanted to, which is probably not true on either count.

If they can make money selling $400 14nm Vegas, why couldn't they make even more money selling $700 7nm Vegas??

As I already pointed out before, cost/transistor always goes down. 7nm Vega dies will cost less than 14nm dies.

Logic is such a nit picky thing. 😉

mattiasnyc

Senior member

Seriously?

https://www.anandtech.com/show/12910/amd-demos-7nm-vega-radeon-instinct-shipping-2018

Look at the roadmap! There's no consumer Vega20 on there, period. Are you asking people to disprove a negative here? Do you think AT was wrong? Cmon now. This is getting ridiculous.

Like Peter said 'disproving a negative' is really the opposite.

Other than that I'll just say that my post explained why I have the opinion I have. You curiously omitted all my reasoning. So, 'whatever'. Look, clearly I'm trying to be pretty accurate and picky with language for the sole reason that Lisa Su is picky with language as well. If you want to understand her (and thus AMD) you need to think like her and listen to her, not to mention follow the history of AMD and look at what products they've produced and how. I feel I'm "offending" equally here because I criticized Peter for not being picky enough when it came to what AdoredTV's Jim said and didn't say...

So... 'whatever'...

coercitiv

Diamond Member

Can we find some middle ground here and move on? I'd rather see fights on other hills.

What we know for certain at this point:

- Vega as arch was built with both markets in mind.

- Vega on 14nm clearly had issues with regards to pricing, and whether it was production capacity, production cost or both, similar issues are bound to affect Vega II as well.

- AMD clearly stated their first 7nm Vega based products were to be aimed at the pro/compute market.

- AMD never made public any roadmap that confined Vega II to the pro market only.

PeterScott

Platinum Member

Personally I'm inclined to believe AMD isn't making any money with Radeon VII in the current launch conditions, but I'm increasingly convinced this has more to do with economies of scale rather than intrinsic costs, hence Vega II being scheduled internally for the consumer market was more a question of timing than anything else.

Agreed. When I was arguing for a Vega 20 consumer card back in August, I suggested a staged roll-out, with consumer card coming when 7nm yield/volume increased. Eventually Vega 20 cards should be cheaper to produce than Vega 10 cards.

IMO the only thing the high priced RTX cards may have enabled would be an earlier release, with more memory.

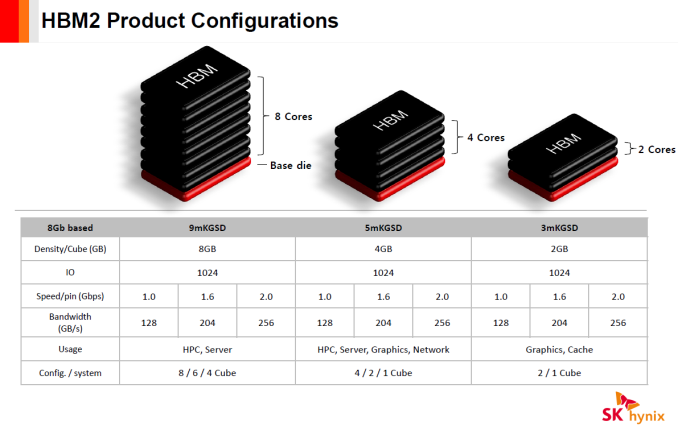

Even if they don't want to partially populate the stacks, with HBM 2, you have 2GB, 4GB, and 8GB stacks:

That means with 4 full stacks and the same bandwidth you can have 8GB, 16GB, and 32GB cards. We have already seen the latter two.
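The arithmetic behind those three capacities can be sketched in a few lines. This is only an illustration: the function name `card_capacities` is made up for the example, and the per-stack sizes are the ones quoted in the post.

```python
# Per-stack HBM2 capacities mentioned in the post, in GB.
STACK_SIZES_GB = (2, 4, 8)


def card_capacities(num_stacks, stack_sizes_gb=STACK_SIZES_GB):
    """Total memory for a card whose stacks are all the same size.

    Hypothetical helper for illustration: the stack count is fixed,
    so the memory bus width (and thus bandwidth) stays the same while
    only the per-stack capacity changes.
    """
    return [num_stacks * size for size in stack_sizes_gb]


if __name__ == "__main__":
    # Four fully populated stacks, one entry per stack size.
    print(card_capacities(4))
```

With four stacks this gives the 8GB, 16GB, and 32GB options listed above.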

I still expect at some point we will see a less expensive 8GB Vega 20 gaming card (cheaper to produce than Vega 64), which was probably the original plan, but the high price of RTX meant AMD could just pass on the 16GB cards to consumers earlier than originally planned.

darkswordsman17

Lifer

I will walk back agreeing with JHH in many ways. He said some really stupid things. Sorry, but running lower resolution and upscaling is not "crushing" anything. And your RTX performance hasn't exactly been "crushing" anything but your stock value. And RTX isn't the only way to do ray-tracing (AMD showed Vega 20 doing ray-tracing, granted not the half-baked real time gaming ray-tracing consumers are getting which non-RTX stuff will be able to do, but still ray-tracing, on a slide, think it was maybe at the New Horizons event or maybe it was CES). And Vega 20 has AI feature support (considering that was apparently the focus of Vega 20 development...just makes me scratch my head at what he's talking about, only thing I can guess is he's trying to act like Tensor Cores is the only way of doing AI or something, or just his usual attempt at talking trash). And Radeon VII will likely clobber everything but Titan V (and some older Titans and AMD stuff) in DP/FP64 performance.

The same reason people believe conspiracy theories. Jumping to conclusions on no evidence just like you did. The FACTS are:

1) AMD has NEVER done a pro only GPU chip.

2) AMD is the king of squeezing every niche out of a single die. Just look at Ryzen, everything from lowly quad cores to 32 core enterprise on a single die.

3) Lisa Su has stated publicly that it was always intended to be a consumer gaming GPU, you know like every GPU AMD has ever made.

OTOH we have "feelings": because AMD reps didn't state the obvious, that their GPU chip would also be used in a gaming card like every single other GPU chip they ever made, people assumed that for some unknown reason they would limit this GPU chip to a limited niche and not release it as a gaming card.

I just realized you're ignoring several examples of chips that AMD didn't use in multiple markets. I'll even ignore consoles (Gamecube, Wii, 360, and Wii U all got custom dGPU chips not offered anywhere else). Vega M, Vega 12 (which I believe is just in Pro 20 and 16, which it likely was just repurposed Vega Mobile but apparently the only taker was Apple for the MacBook Pro; and I have a hunch the cost of HBM pushing the overall cost was probably a major contributing factor to its limited uptake). And Polaris 30 isn't in a Pro product (which maybe it will be, which that's the usual path for AMD GPUs, consumer then pro; Vega 20 is by far the biggest exception they've ever made for that, and I think that kinda nullifies your "AMD ALWAYS DOES THIS!!!" argument; interestingly the only other exception for that I can remember was Vega 10 - with rumors that AMD was seriously considering not releasing it as a consumer product because of its high cost and mediocre performance which inhibited their ability to adjust pricing in the event of poor sales, now why does that sound familiar?).

CPUs are not even comparable. By that logic, what happened to Navi being AMD's "scalable" multi-GPU chiplet working as one monolithic one that was all the talk a few years back? Also, uh, by that logic, you're basically saying that well AMD is going to cover the entirety of the GPU market with a single die? But they've NEVER done that. So much for the "AMD gets every niche out of a single die", huh?

And? She'd also only EVER mentioned it as a datacenter GPU prior to that, and AMD has to the best of my knowledge NEVER released a GPU as an HPC/Pro product before consumer, so this whole line of speculation on your part has as many caveats as the one you're arguing against.

Another issue, and why I think this "Vega 20 was always a gaming chip" is just spin, is that she also says it's not just a shrink of Vega 10. Which is technically true, but for the most part also is not true. For the GPU design itself, there's very little (if anything) changed from Vega 10. The main changes are they got it fully validated for ECC, and they added two memory controllers. And then they added some new ops (which I believe is just they expanded the software support, Vega 10 likely could do those as well). Those are not major changes. So yes, she's technically right that it's not a literal shrink of Vega 10, but it is still largely just Vega 10 on 7nm. It does go to show how strong Vega is in compute, which is why it could largely be Vega 10 with more bandwidth. It unfortunately also highlights how mediocre it is for graphics.

As opposed to you ignoring how AMD has never released a GPU for the Pro market first? Limited niche that likely sells more units than the other limited niche (gamers shelling out $700)? Seriously, the high end gaming market is a more limited niche for AMD than the pro market.

It's not that expensive unless you believe recent nonsense about HBM pricing.

While cost/area of silicon increases at each process node shrink, the costs/transistor DOES NOT. In fact it generally continues downward.

So with similar transistor count to TU104 it should have similar costs, or lower, especially as time goes by.
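The cost/transistor point can be written out as a ratio, purely as a sketch. The symbols are introduced here for illustration and are not from the thread: $C_A$ is manufacturing cost per unit of die area and $D$ is transistor density (transistors per unit area).

$$
\frac{\text{cost}}{\text{transistor}} = \frac{C_A}{D},
\qquad
\frac{(C_A/D)_{\text{new}}}{(C_A/D)_{\text{old}}}
= \frac{C_{A,\text{new}}/C_{A,\text{old}}}{D_{\text{new}}/D_{\text{old}}}
$$

So cost per transistor falls at a node shrink whenever density rises by a larger factor than cost per area does. This covers manufacturing cost only; it says nothing about design and engineering costs.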

IF HBM is really that expensive they could do a cheaper 12GB or 8GB model (though with the loss of memory bandwidth), by simply leaving out an HBM stack or two. A two stack Vega7 shouldn't really cost much more to build than Vega 64.
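The trade-off in leaving out stacks can be sketched as follows. This is an illustration under stated assumptions: 4GB per stack follows from the 16GB, four-stack card discussed in the thread, while the 256 GB/s per-stack figure is an assumption based on the roughly 1 TB/s total AMD announced for Radeon VII. The `StackConfig` and `config_for` names are hypothetical.

```python
from dataclasses import dataclass

GB_PER_STACK = 4            # 16GB over 4 stacks, as discussed in the thread
BANDWIDTH_PER_STACK = 256   # GB/s per stack; assumption (about 1 TB/s over 4 stacks)


@dataclass(frozen=True)
class StackConfig:
    stacks: int
    capacity_gb: int
    bandwidth_gbs: int


def config_for(stacks):
    """Capacity and bandwidth both scale with the number of populated stacks."""
    return StackConfig(
        stacks=stacks,
        capacity_gb=stacks * GB_PER_STACK,
        bandwidth_gbs=stacks * BANDWIDTH_PER_STACK,
    )


if __name__ == "__main__":
    # Full card, then one and two stacks left out.
    for n in (4, 3, 2):
        print(config_for(n))
```

Under those assumptions, dropping one or two stacks gives the 12GB and 8GB models mentioned above, with bandwidth falling in the same proportion.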

It's not that expensive, yet it's not used in any products outside of enthusiast and higher? Cheapest product it's been on was probably Vega M and that was just a single stack for what appears to be a pretty tailored product (frankly was likely mostly as a prototype showing that Intel could make something like it) that was not exactly high volume. Nvidia, who probably has as much if not more need for it in their GPUs, doesn't use it on anything outside of HPC products. AMD could certainly use it in a variety of products, where its power savings and compact size would prove especially beneficial.

If you're just looking purely at the literal cost of when fabbing it. But that's ignoring the drastically increasing cost/transistor of designing and engineering the chip, something that you cannot ignore when talking about a company's cost of making chips.

Maybe, but that doesn't matter. 100% guarantee that the finished products that Vega 20 is on cost more because of HBM2 memory. Plus, that's a very bizarre argument. Are you trying to say that because of the similar price points, that should indicate that the costs of both are about equal?

Except I think that would have severely limited its performance improvement compared to Vega 10 products, which would mean it'd be hard to sell customers on a much more expensive card with very little performance improvement, and so they'd have to have lower pricing (margins might be about the same, but then it'd probably be competing with maybe the 2070 and AMD would look more foolish that their "high end" 7nm GPU is competing with the lower "mid" tier of Nvidia). I personally think the Vega 20 die likely costs significantly more, and then using twice as much HBM2, which required a new interposer, and the costs would've added up. I wouldn't be surprised if Vega 20 products cost close to twice as much to produce as Vega 10 ones right now (for the whole card). Don't forget full ECC costs more as well.

AMD has been selling HBM consumer cards since 2015, Vega 56 and Vega 64 had launch prices of $399 and $499.

If AMD can make money on an HBM consumer card with two stacks of HBM for $399, they can certainly make it on a $699 card with 4 stacks, especially since as time goes by the cost of HBM is almost certainly declining.

I don't think that's in serious dispute at this point. But I also don't know what that has to do with anything. We know AMD is willing to take less margins than Nvidia. We also know that costs severely hampered AMD being able to adjust Vega products' pricing to better fit its relative competitive place in the market, to the point that they questioned releasing Vega 10 to consumers/gamers because their cost coupled with its performance meant much lower margins than AMD would like. But then mining took off which raked in the money for AMD (to the point that they then got criticized for trying to help people get Vega cards for MSRP instead of the ultra-gouged mining prices). Vega 20 didn't radically change that, and while mining demand had flatlined, Nvidia's pricing gave AMD some leeway. Now, I don't doubt that AMD can drop the price some and still make money. Personally I doubt they make much money from gamers on Radeon VII. I think most sales will be to users that can use the compute capability.

I kind'a have to agree with Peter here though.

First of all, the quote isn't a quote of what AMD said officially, but rather what a reporter thought AMD was planning (or not planning). Huge difference. And the other article talks about the cards, not the actual GPUs.

So Peter has a point here when he says AMD has been pretty consistent recently with reusing the same architecture up and down the product lines, from server/compute down to consumer. Because of that consistency it's not a far-fetched guess that they'd eventually roll out 7nm Vega "below" the Instinct line.

Of course it's also possible that it is as (ironically) I think Jim @ AdoredTV said...

AMD repeatedly mentioned Vega 20 as datacenter. It was literally the only language they used around it until CES this year. I looked some but haven't been able to find the articles that I read where they were specifically asked about it being a gaming chip and AMD said no, that Navi would be their 7nm chip for gamers. There were rumors about Vega II, but most of that was I think back in 2017 when things pointed to 7nm Vega, but then AMD completely reworked their roadmaps and from then on Vega 20 only showed up with regards to Instinct cards. If I recall, for all of 2018 Vega 20 was only listed for Instinct.

Except they always released it as a consumer product before pro. There's been two exceptions to that I'm aware of. Vega 20 and Vega 10. I'm not sure Vega 10 counts as the Frontier Edition was "prosumer", but I think it does offer some insight. Vega 10 had issues.

Show me one single quote where AMD said this.

You are just looking at some blog's interpretation of AMD's Computex events and assuming there would never be a gaming card.

This is exactly how the meme got started. Everyone read interpretations of AMD's silence, and assumed. There was no statement.

So Anandtech is just a blog now? Because the GPU writer for Anandtech got that impression from being at AMD events. Why did everything that AMD stated about Vega 20 only mention Instinct until CES this year? It wasn't that they said it'd be datacenter first, they literally talked about nothing but datacenter with regards to it, other than that I think they said a couple of times it'd show up as Radeon Pro as well (which uh, where is that?). Why did they go "we'll have 7nm gaming cards", right around when they were talking about Vega 20, but deliberately not mention Vega 20? Why is Vega 20 the complete opposite of every other AMD GPU release, going HPC/enterprise market first (if I'm not mistaken, that was generally the last iteration they'd do of a given GPU)? Frankly, I think that might be smart, but it also tells me that gaming was not really considered until later. And they can spin it any number of ways. I actually think they might have said that Vega 20 was just for datacenter in 2017 when they first adjusted their roadmaps and removed the 14+/12nm Vega versions.

darkswordsman17

Lifer

I was just thinking a while ago, that I should have made a "leak" video back in August when I was arguing that a consumer Vega 20 7nm, would probably come in around RTX 2080 performance even if it was just 64 CUs with no gaming enhancements. 😉

By all means you should. Seriously, it's all just speculation and rumor until it's not, so it doesn't really matter.

It's only a big deal to certain individuals. I for one like a market with options but it seems some people on this forum don't.

I don't think most people really care that much, it's just discussion for the sake of it. We could point out how technically, Vega is offering a "Poor Volta" by being an HPC card for cheap since that's all Volta ever was. We could discuss the complete and total zero information we have about Navi other than it is something AMD is calling a GPU and it's supposed to be out this year made on TSMC's 7nm process. Maybe we could speculate more on Zen 2 + Navi APUs that AMD has already said aren't happening this year. Maybe we should talk about the name. Radeon VII, so is it Radeon Vega II or Radeon 7 (what happened to the other six)? Why was it RX Vega?

Except most people are frustrated because all of the options are lacking, most specifically the high prices.

AtenRa

Lifer

The insane pricing of the RTX series made room for this card. If RTX had come in at expected prices, the VII would not be here. Simples.

It would be here at lower price.

It's 300M to port to 7nm. Most of the cost must be up front. Marginal cost must be lower than we assume, e.g. lower HBM2 cost. Whatever.

With a 300M up-front cost, there is solid reason to share it with the consumer side.

It makes sense when Lisa says it was the plan from the get go.

PeterScott

Platinum Member

So Anandtech is just a blog now? Because the GPU writer for Anandtech got that impression from being at AMD events.

That is a ridiculously sloppy Appeal to Authority. Someone's "impression" is not evidence of anything. We can all see the same evidence, and absolutely nothing conclusive was said.

Why did everything that AMD stated about Vega 20 only mention Instinct until CES this year? It wasn't that they said it'd be datacenter first, they literally talked about nothing but datacenter...

Again this is starting to sound like a conspiracy theory house of cards. The high tech product game is all about silence and secrecy until they are ready to reveal. Keeping unannounced products secret is SOP for practically every product maker.

DrMrLordX

Lifer

So Anandtech is just a blog now?

Apparently.

You curiously omitted all my reasoning.

Many people's reasoning is also curiously absent. Fact is that none of our mental gymnastics really matter here. The roadmap didn't have the product on it, and the press people who report on this stuff for a living all concluded from AMD's statements that there would be no consumer Vega20 product. We can retroactively try to justify the move, but that's still making some iffy assumptions, such as that AMD can actually sell Vega20 for less than $700.

Maybe I still trust roadmaps too much? AMD has stuck to most of their roadmaps. This isn't Intel we're talking about here, with bandaid products launching left and right.

NostaSeronx

Diamond Member

imho, Navi 1.0 was canned.

Thus, we get Radeon 590 and Radeon VII as stop gaps.

As AMD fuses Next-gen and Navi 2.0.

-> Super-SIMD

-> OoO Graphics

-> Secondary SIMDs for Path-tracing.

All of the above are a response to GeForce RTX. There is also the Arctic Sound GPU from Intel to worry about, which has the Radeon Group Leader (Koduri) and the chief engineer of Vega-M/Navi (Torres) behind it.

Just imagine if the PS5 is 8-core Sunny Cove and uses an Arctic Sound dGPU instead of 8-core Zen and Navi. Would be a pretty crippling blow for AMD.

---

Vega20 was made purely for Enterprise/Instinct, as Navi10 (including Navi10x2) would have been a better fit for consumers: cheaper than one Vega20 die and faster than one Vega20 die.

Hence, the cancellation of Navi10 and Navi10duo led to both Radeon 590 and Radeon VII, in the slots where Navi10 and Navi10duo would have launched.

2x110 mm^2 80CU with GDDR6 vs 1x400 mm^2 60CU with HBM2.

Greyguy1948

Member

It should be interesting to see Radeon VII in these TensorFlow tests:

Tensorflow test

Vega Frontier is not bad but Radeon VII should be better!

DrMrLordX

Lifer

It should be interesting to see Radeon VII in these TensorFlow tests:

Tensorflow test

Vega Frontier is not bad but Radeon VII should be better!

I'd be happy to run the bench if I can figure out how to set it up correctly. Once I have the card, that is.

imho, Navi 1.0 was canned.

Maybe? I thought I remember some indication recently that AMD had Navi9, Navi10, Navi12, and Navi16 coming up? Also I don't think Navi10 and Radeon VII are related.

PeterScott

Platinum Member

Apparently.

Many people's reasoning is also curiously absent. Fact is that none of our mental gymnastics really matter here. The roadmap didn't have the product on it, and the press people who report on this stuff for a living all concluded from AMD's statements that there would be no consumer Vega20 product. We can retroactively try to justify the move, but that's still making some iffy assumptions, such as that AMD can actually sell Vega20 for less than $700.

Maybe I still trust roadmaps too much? AMD has stuck to most of their roadmaps. This isn't Intel we're talking about here, with bandaid products launching left and right.

AMD GPU roadmaps are just architecture roadmaps until they announce specific products, just like the roadmap from Computex I linked earlier, where some people jumped to the conclusion that there would be no gaming Vega 20.

Tell me one specific Navi product on the Roadmap. You can't because no Navi product exists on the roadmap.

Greyguy1948

Member

PeterScott

Platinum Member

That isn't an AMD roadmap. That is a page that sums up the rumors so far.

exquisitechar

Senior member

Maybe? I thought I remember some indication recently that AMD had Navi9, Navi10, Navi12, and Navi16 coming up?

That was wrong/misinterpreted by Videocardz, those weren't chip codenames.

railven

Diamond Member

Maybe I still trust roadmaps too much? AMD has stuck to most of their roadmaps. This isn't Intel we're talking about here, with bandaid products launching left and right.

This is really hard to tackle. On the one hand, I feel roadmaps are essentially all we can really go on. Outside specific quotes from people in the company, the rest is interpretation (oftentimes assumptions) based on people reporting on rumors/speculations/expectations. (EDIT: Even this is now an issue. I recall reading somewhere that Lisa Su said Radeon 7 will have 128 ROPs, so does that mean there is still another card somewhere? Or a miscommunication?)

Just look at Radeon 7. Anandtech to me is FAR MORE than any "blog" but at the same time, they will get things wrong, and have. Rewind to their Zen coverage, and how they discovered in their benching suite forcing on the clock for Intel affected results.

Without AMD directly stating they were gonna make Vega 20 a consumer version, you only have speculation and expectations to fall on. Being right or having AMD follow their previous moves doesn't make it a given - just means your interpretation of the data was correct.

The reason I stopped giving roadmaps as much weight as I used to comes from Intel's constant slip-ups. At this point I just see roadmaps as "what we expect to accomplish, chances are stuff won't make it or other stuff will get out, but here is an idea of where we plan on going."

Either way, with so many hands in the cookie jar now, you can basically say anything and find someone that wrote an article/blog post/vlog on it and thus validates you. Doesn't mean you're right (anti-vaxxers/flat earthers/etc.)

DrMrLordX

Lifer

Either way, with so many hands in the cookie jar now, you can basically say anything and find someone that wrote an article/blog post/vlog on it and thus validates you. Doesn't mean you're right (anti-vaxxers/flat earthers/etc.)

That's a fair statement, if ever there was one.

What remains to be seen is if AMD intends Radeon VII to usher in a whole new line of products or to be nothing more than a high-end unicorn product for those that want an alternative to RTX 2080 (and possibly an ultra-cheap TensorFlow card).

Interesting. I kind of hate AVFS on my VegaFE since it makes it hard to hand-set voltage and clockspeed.

That was wrong/misinterpreted by Videocardz, those weren't chip codenames.

Hmm, okay. Guess we'll just have to keep our eyes open for Navi codenames, if any.

railven

Diamond Member

That's a fair statement, if ever there was one.

What remains to be seen is if AMD intends Radeon VII to usher in a whole new line of products or to be nothing more than a high-end unicorn product for those that want an alternative to RTX 2080 (and possibly an ultra-cheap TensorFlow card).

If it can handle DXR (or whatever new stuff is being thrown out) it can act as a building block for future GPUs (if Navi isn't so far along that these features can't be added, that is). But personally, I feel Vega in its entirety has been a consumer Hail Mary by AMD. Too far in development to change course, but resources so dry they had to put something out. As a gaming card, its power draw has been an issue from the start. And this goes all the way back to Fiji. It's interesting that AMD stuffed so much stuff into their "gamer" cards that resulted in very little gains and here we now see NV doing the exact same thing. Monkey see, monkey do.

It would be here at lower price.

Probably not. We already know that this thing is expensive to produce and there aren’t fat margins for AMD to eat through.

Lowering prices also increases demand meaning that AMD would need to repurpose even more dies that could have gone into a more profitable MI-50 card instead.

If anything, this gets released as a Frontier Edition card just like previously with Vega. The implication is that eventually there are follow-up products at a lower price, but those never come and everyone hopefully forgets about it when Navi information becomes more solid.

PeterScott

Platinum Member

If it can handle DXR (or whatever new stuff is being thrown out) it can act as a building block for future GPUs (if Navi isn't so far along these features can't be added that is).

Unless AMD can handle DXR at competitive frame rates (which seems extremely unlikely) it's in their best interest to not add DXR support to drivers.
