Hans Gruber

Platinum Member

Just think how great a 6800XT would be on 5nm silicon. You can take 20% off those power numbers in your chart. @MrPickins didn't fully follow through with the reasoning; he should have stopped at ISO performance.

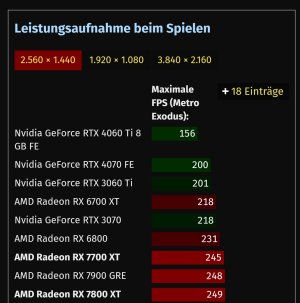

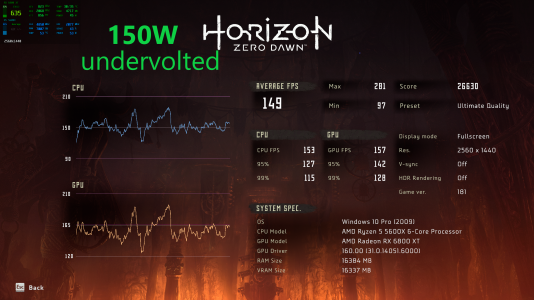

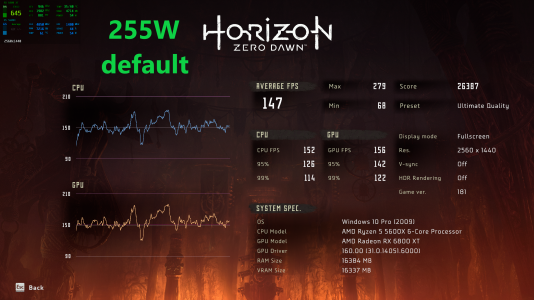

Consider this: the Asus TUF 7800XT is 7.5% faster than the 4070 while consuming roughly 275W versus 200W. Here's what happens when you're willing to give up 5-6% performance on a 6800XT by lowering clocks, with no undervolt. The workload is a UE4 game.

View attachment 85722

There we go, ~70W delta.
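For anyone who wants to sanity-check the 7800XT versus 4070 comparison above, here is a rough back-of-the-envelope sketch. It uses only the approximate figures quoted in this post (7.5% faster, roughly 275W versus 200W); the function and variable names are my own, and the 4070 is simply normalized to a relative performance of 1.0 as an assumption for illustration.

```python
def perf_per_watt(relative_perf: float, board_power_w: float) -> float:
    """Relative performance delivered per watt of board power."""
    return relative_perf / board_power_w


# Approximate figures from the post; the 4070 is the 1.0 baseline (assumption).
RTX_4070_PERF, RTX_4070_POWER_W = 1.000, 200.0
RX_7800XT_PERF, RX_7800XT_POWER_W = 1.075, 275.0

# Absolute power gap between the two cards, in watts.
power_delta_w = RX_7800XT_POWER_W - RTX_4070_POWER_W

# How the 7800XT's perf-per-watt compares to the 4070's (1.0 means equal).
efficiency_ratio = (
    perf_per_watt(RX_7800XT_PERF, RX_7800XT_POWER_W)
    / perf_per_watt(RTX_4070_PERF, RTX_4070_POWER_W)
)

print(f"Power delta: {power_delta_w:.0f} W")
print(f"7800XT perf/W relative to 4070: {efficiency_ratio:.2f}")
```

With those inputs the gap is 75W and the 7800XT lands at roughly 0.78 of the 4070's performance per watt, which is why giving up a few percent of performance to pull power down changes the picture so much.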