poke01

Diamond Member

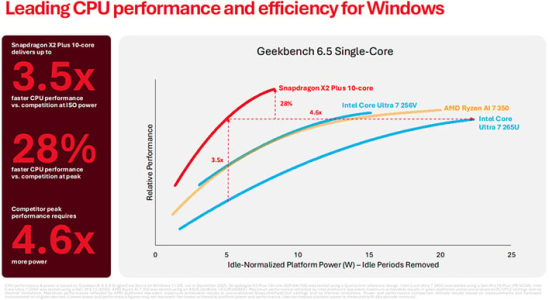

> ARM was designed with efficiency in mind. x86 not so much. I mean ARM was designed from the start to be a full 32 bit RISC architecture, no need for uops. AMD and Intel have been working around that limitation since the PowerPC and still are!

No ISA is inherently better. There are plenty of ARM designs with worse efficiency than x86 designs.

I'm looking forward to Nova Lake with APX, but I believe it won't be until Unified Core that Intel can totally beat ARM, and even by then, who knows what those Cambridge folks will cook up to counter it.