> We're still there years after it's been demonstrated that Apple ST performance is excellent even for workloads compiled by users.

For scalar code, yes; not for SIMD code with wide vectors.


Question Zen 6 Speculation Thread


Nothingness

Diamond Member

> For scalar code, yes; not for SIMD code with wide vectors.

Yes, but that has nothing to do with the "Apple SW stack".

poke01

Diamond Member

> …stack" is a meme. Apple just has fatter cores

I mean, so does Intel; they have them the size of Snorlax, and fatter doesn’t mean it’s automatically better. You still need competent design.

> If you're comparing to Apple, then AMD of course stands no chance against a competitor who owns all of their stack.

So does AMD?

adroc_thurston

Diamond Member

> I mean, so does Intel; they have them the size of Snorlax, and fatter doesn’t mean it’s automatically better. You still need competent design.

Atoms are perfectly reasonable (power aside).

poke01

Diamond Member

> Atoms are perfectly reasonable (power aside).

Sure, if we're talking about Skymont. But Apple made a better Skymont with its M core. All that hype about that architecture in 2024, only to get beaten by a souped-up watch CPU core.

poke01

Diamond Member

In any case, x86 cores will diverge from ARM cores this year, especially Intel's (with Atoms getting AVX10.2).

No point comparing cores that are heavily focused on SIMD to ones made for scalar. A Zen6 core will be fast, skinny and have SIMD capabilities that no ARM chip can match.

adroc_thurston

Diamond Member

> A Zen6 core will be fast, skinny and have SIMD capabilities that no ARM chip can match.

Heyyyy they're not *that* skinny. Zen3 was. But we're past that.

Int IPC-wise, Z6 should be right around Cortex-X4 (it just clocks twice as high).

> No point comparing cores that are heavily focused on SIMD to ones made for scalar.

Cmon. AMD's cores aren't like the A64FX cores lol. Integer perf is still extremely important for AMD.

The FPU being massive is common even in ARM P-cores, because the FPU just takes a ton of area in general, even if it's not proportionally as large as it is in AMD's cores.

There's plenty of reasons to compare AMD and ARM cores. They still compete in many intersecting markets.

> But Apple made a better Skymont with its M core.

Idk about that. I expect Skymont to still have better perf/mm2. Apple as a whole doesn't seem to have great perf/mm2 cores vs other cores, until you get to the caches.

And Qualcomm, who uses the same cache hierarchy as Apple, then proceeds to spank them in perf/mm2 too lol.

adroc_thurston

Diamond Member

> And Qualcomm, who uses the same cache hierarchy as Apple, then proceeds to spank them in perf/mm2 too lol.

Apple trades area for power, yes. But not much of it, no. And they're a sizeable chunk more efficient, thus.

poke01

Diamond Member

> Cmon. AMD's cores aren't like the A64FX cores lol. Integer perf is still extremely important for AMD.
>
> The FPU being massive is common even in ARM P-cores, because the FPU just takes a ton of area in general, even if it's not proportionally as large as it is in AMD's cores.
>
> There's plenty of reasons to compare AMD and ARM cores. They still compete in many intersecting markets.

When Apple, Qualcomm and the client ARM cores include SVE2 with 512-bit datapaths like AMD does (and soon Intel), then it’s a fair comparison IMO.

Otherwise there’s always a “yeah but AMD does better SIMD” argument to be made.

> Idk about that. I expect Skymont to still have better perf/mm2. Apple as a whole doesn't seem to have great perf/mm2 cores vs other cores, until you get to the caches

? 12 Apple M cores in M5 Pro/Max are faster than 16 Skymont cores in the 285K while being much more efficient, like much more.

> Personally, I'm inclined to believe that, if they can achieve 7GHz with a decent non-zero percentage of chips, they will release a product that features it. Overall, since they aren't going to have a core count even close to NVL's top SKUs, they're going to push Fmax as hard as they can to wring the most they can out of SMT. I'm FAR more interested in what they can achieve with all-core boost. I want them to get up to 6.3-6.4GHz all-core, as I don't think that Intel will be able to get anywhere near that in all-core.

I don't. If they can be the best performer at 6.0GHz within the DT market (let's say by 20%), that's as far as they will clock... until Intel catches up, that is.

> AMD currently mostly beats Intel while being a node behind, with less L2 and a much smaller core.

Very true, but see the next post below:

> My bigger concern for AMD is Intel getting their (expletive deleted) together with respect to their fabric and L3 performance. Arrow Lake doesn't have bad single core throughput. Not amazing, but not bad.

I really do think ARL has some messed up fabric issues that we can be certain will be freed up in NVL. That might lead to some unexpected jumps.

> Reminder to everyone, AMD has set extremely aggressive clock targets for pretty much the entire Zen line. They always miss the target. They probably will this time too. 6.5 would still be pretty nice.

I am betting it isn't even 6.5GHz. Likely 6.3GHz. Still, nothing to sneeze at. 5.7-6.3 is a pretty good bump.

> Not exactly a 7GHz believer, but personally, anything less than about 6.5GHz on desktops would be disappointing as hell with only 10-15% IPC, Zen5's mediocrity and AMD's cadence being taken into consideration. If they can't muster that much, they may as well throw in the towel. Apple, Qualcomm and stock ARM cores will just keep lapping them.

Ok, so you are at least not trying to explain a 30-45% IPC uplift from Zen 5 to Zen 6 🙂. I'm with you on the 10-15% IPC uplift though.

adroc_thurston

Diamond Member

> If they can be the best performer at 6.0GHz within the DT market (let's say by 20%), that's as far as they will clock... until Intel catches up, that is.

Not how any of that works.

Intel is not relevant for competitive comparisons for anything Zen6 period.

Gideon

Platinum Member

> When Apple, Qualcomm and the client ARM cores include SVE2 with 512-bit datapaths like AMD does (and soon Intel), then it’s a fair comparison IMO.
>
> Otherwise there’s always a “yeah but AMD does better SIMD” argument to be made.

Yeah, but you could have made the same argument for generations in AMD vs Intel discussions (Zen 1 vs Skylake, Zen 2 vs 11th gen).

I would vastly prefer higher INT IPC or 256bit FP perf over that.

Wide datapaths aren't that useful for client workloads (new helpful 256 bit wide instructions in AVX-512 are another matter, but there ARM has analogues)

> 12 Apple M cores in M5 Pro/Max are faster than 16 Skymont cores in the 285K while being much more efficient, like much more.

Skymont is a decent bit behind on node refinement and has a horrible uncore. We don't have an apples-to-apples comparison (pun not intended): it's a core on Windows vs a core on macOS.

> When Apple, Qualcomm and the client ARM cores include SVE2 with 512-bit datapaths like AMD does (and soon Intel), then it’s a fair comparison IMO.
>
> Otherwise there’s always a “yeah but AMD does better SIMD” argument to be made.

Apple has no incentive to support wide SIMD like that, because they already support such calculations in multiple ways:

1) they've been able to handle four 128 bit wide NEON instructions per cycle for a while now. That's equivalent (for most stuff, there are exceptions like rotate) to one 512 bit wide AVX instruction per cycle

2) they have SME, which is very fast if it is matmul that you want, but for SVE type (i.e. SSVE) they haven't put any effort into making that fast - perhaps because they consider the functionality redundant

3) they have an on-die GPU on every Apple Silicon die, which essentially handles SIMD from 128 to 1024 bits at a per-core performance level several times higher than anyone's CPU core, though it can't do all the weird bit twiddling stuff that NEON/AVX do (stuff which is mostly appropriate for crypto or video encoding, which Apple covers with custom units)

Basically there are three tiers with increasing performance, and increasing latency. If you ignore SME (since it is not as general purpose as either NEON or the GPU) you can look at it as one is appropriate for shorter code sequences where the time to go out to the GPU and back would dominate execution time, and the other is appropriate for when execution time dominates making latency irrelevant.
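The latency-versus-throughput trade-off described above can be sketched as a simple break-even calculation. This is only a toy model: the one number taken from the post is four 128-bit NEON instructions per cycle, while every rate and dispatch latency passed to the functions below is a hypothetical placeholder, not a measurement of any Apple part.

```python
# Toy break-even model for a low-latency tier (NEON-like) versus a
# high-latency, high-throughput tier (GPU-like).
# All rates and latencies supplied by the caller are hypothetical placeholders.

NEON_OPS_PER_CYCLE = 4   # from the post: four 128-bit NEON instructions per cycle
NEON_WIDTH_BITS = 128


def neon_bits_per_cycle() -> int:
    """Aggregate vector width the NEON tier processes per cycle."""
    return NEON_OPS_PER_CYCLE * NEON_WIDTH_BITS


def run_time_us(items: float, items_per_us: float, fixed_latency_us: float) -> float:
    """Total time = fixed dispatch latency + time to stream through the work."""
    return fixed_latency_us + items / items_per_us


def break_even_items(cpu_items_per_us: float, gpu_items_per_us: float,
                     gpu_latency_us: float) -> float:
    """Work size above which offloading wins (CPU dispatch latency taken as zero)."""
    if gpu_items_per_us <= cpu_items_per_us:
        raise ValueError("offloading never wins if the second tier is not faster")
    # Solve n / cpu_rate = gpu_latency + n / gpu_rate for n.
    return gpu_latency_us / (1.0 / cpu_items_per_us - 1.0 / gpu_items_per_us)


if __name__ == "__main__":
    print(neon_bits_per_cycle())
    # Hypothetical figures: GPU tier 5x faster, 8 microseconds of round-trip latency.
    print(break_even_items(cpu_items_per_us=1.0, gpu_items_per_us=5.0,
                           gpu_latency_us=8.0))
```

Below the break-even size the round trip dominates and the CPU tier is the better choice; above it, execution time dominates and the latency stops mattering, which is the shape of the argument in the post.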

The GPU won't show up on anyone's CPU benchmarks but EVERY ARM Mac has it and Apple has built in frameworks to access it so developers are MUCH more likely to make use of it than they might a GPU on a PC where you don't know if you will have a GPU (i.e. servers) and if you do what kind it is. So AMD can notch all the wins they want in benchmarks that can make use of AVX512, and Apple will not have any incentive to add like functionality to the CPU because the gap between 4xNEON and the GPU where that might be useful is too small for it to be worthwhile.

Gideon

Platinum Member

> Yeah, but you could have made the same argument for generations in AMD vs Intel discussions (Zen 1 vs Skylake, Zen 2 vs 11th gen).
>
> I would vastly prefer higher INT IPC or 256bit FP perf over that.
>
> Wide datapaths aren't that useful for client workloads (new helpful 256 bit wide instructions in AVX-512 are another matter, but there ARM has analogues)

I felt my initial post sounded a bit too much like my goal was to dunk on AMD, which certainly wasn’t the point I was trying to make.

I’m just a bit salty that this is where most of their transistor budget (and probably development effort) went for Zen 5, right after a very strong 256-bit implementation in Zen 4. And particularly since the Zen 5 implementation is of the "use it or lose it" variety, per Mysticial:

> 512-bit is required for significant performance gain.
>
> Zen5's improvement to the AVX512 is that it doubles up the width of (nearly) everything that was 256-bit to 512-bit. All the datapaths, execution units, etc... they are now natively 512-bit. There is no more "double-pumping" from Zen4 - at least on the desktop and server cores with the full AVX512 capability.
>
> Consequently, the only way to utilize all this new hardware is to use 512-bit instructions. None of the 512-bit hardware can be split to service 256-bit instructions at twice the throughput. The upper-half of all the 512-bit hardware is "use it or lose it". The only way to use them is to use 512-bit instructions.
>
> As a result, Zen5 brings little performance gain for scalar, 128-bit, and 256-bit SIMD code. It's 512-bit or bust.
>
> So sorry to disappoint the RPCS3 community here. As much as they love AVX512, they primarily only use 128-bit AVX512 - which does not significantly benefit from Zen5's improvements to the vector unit.

While there are workloads that benefit from 512-bit wide vectors on both the consumer and server side, it’s a niche in both (though a bit less so on the server side, and the gains can be larger).

To make matters worse, in the vast majority of these (already niche) consumer workloads, 256-bit wide AVX-512-capable floating-point units (like Zen 4) still deliver 80–90% of the performance. Yes, there can certainly be some consumer workloads that differ more, but these are far from the common case. Speeding up 128- or 256-bit datapaths would affect far more use cases; improving integer performance would benefit essentially everything.
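The "use it or lose it" point can be illustrated with a small peak-throughput model. This is a sketch under stated assumptions, not a description of the real pipelines: the pipe count is a hypothetical parameter, and the model ignores loads, stores, clocks and everything else that affects real code. It only encodes the two behaviours the quote describes: a 512-bit instruction on a 256-bit datapath is double-pumped, and a narrower instruction on a 512-bit datapath cannot be issued at twice the rate.

```python
# Toy peak-throughput model for vector datapaths.
# PIPES is a hypothetical value chosen for illustration only.

PIPES = 2


def bits_per_cycle(instr_width_bits: int, datapath_width_bits: int,
                   pipes: int = PIPES) -> int:
    """Peak vector bits processed per cycle.

    A wider-than-datapath instruction is split over several cycles
    ("double-pumped"), so each pipe still handles at most one datapath
    width per cycle. A narrower instruction occupies a whole pipe and
    leaves the upper lanes idle ("use it or lose it").
    """
    return pipes * min(instr_width_bits, datapath_width_bits)


def speedup_from_widening(instr_width_bits: int) -> float:
    """Gain from moving from a 256-bit to a 512-bit datapath for one instruction width."""
    return (bits_per_cycle(instr_width_bits, 512)
            / bits_per_cycle(instr_width_bits, 256))


if __name__ == "__main__":
    for width in (128, 256, 512):
        print(width, speedup_from_widening(width))
```

In this model only the 512-bit case gains anything from the wider datapath; 128-bit and 256-bit code sees no change, which matches the "512-bit or bust" summary in the quote.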

Mostly things that speed up browsers or Electron (or Tauri) apps, which are most modern desktop apps (like Spotify, Discord, most IDEs and devtools, etc.)

Stuff made by Daniel Lemire, for instance, like his UTF-8 / UTF-16 decoding/encoding library (simdutf) and JSON parsing library (simdjson), used in Node.js and Bun, which could benefit in-browser workloads as well (as long as the JSON and strings are in the hundreds of megabytes). Some hashmap implementations (like SwissTable and hashbrown) come to mind, but then again 256-bit already gives most of the benefits. Software video encoding most certainly does (FFmpeg, HandBrake), but is not something sane people do 24/7.

Bottom line:

Thus I really hope Zen 6 does not "waste" too many resources on optimizing ultra-wide FP paths further, but rather makes better use of the existing resources for 128/256-bit datapaths.

I hope most of their IPC gains this time around come from integer workloads. There are certainly low-hanging fruits (of small benefit) on the decoder side, like finally allowing decoding 2 branches for a single thread, or bringing back some Zen 4 optimizations. Not that decoding is even the main bottleneck:

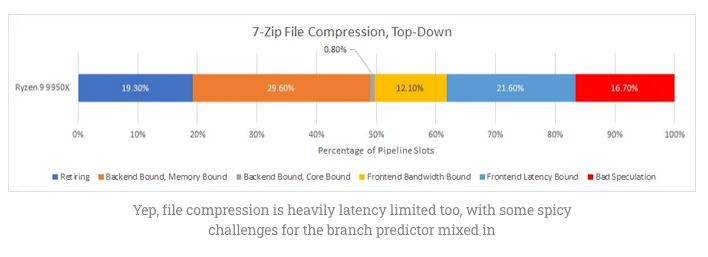

Zen 5’s integer register file didn’t get large enough. Clock speed increases are minor compared to prior generations. AMD’s first clustered decode implementation can’t have both clusters work on a single thread. Widening the core may have been premature too. Much of the potential throughput offered by Zen 5’s wider pipeline is lost to latency, either with backend memory accesses or frontend delays.

Really hoping Zen 6 delivers on the integer side - and if Zen 5 fell short on its integer performance hopefully more can be recovered from "fixes" to that.

I wouldn't mind 7 GHz on top of that at all, but this is the part I'm more interested in.


adroc_thurston

Diamond Member

> Really hoping Zen 6 delivers on the integer side

It's low teens IPC there and low teens IPC in general.

adroc_thurston

Diamond Member

> But 38% higher clock! /runs

20%, really.

Kaffeekenan

Member

> 20%, really.

So you are saying Zen 6 reaches about 6,8 GHz?!
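The arithmetic behind that figure can be checked in a few lines. This is a minimal sketch that assumes the 5.7 GHz baseline from the "5.7-6.3" range mentioned earlier in the thread; the function names are illustrative only.

```python
# Clock-uplift arithmetic for the figures discussed in the thread.
# BASE_GHZ is an assumption: the lower end of the "5.7-6.3" range quoted earlier.

BASE_GHZ = 5.7


def scaled_clock(base_ghz: float, uplift_fraction: float) -> float:
    """Clock after applying a fractional uplift (0.20 means +20%)."""
    return base_ghz * (1.0 + uplift_fraction)


def required_uplift(base_ghz: float, target_ghz: float) -> float:
    """Fractional uplift needed to go from base to target."""
    return target_ghz / base_ghz - 1.0


if __name__ == "__main__":
    print(f"+20% on {BASE_GHZ} GHz: {scaled_clock(BASE_GHZ, 0.20):.2f} GHz")
    print(f"uplift needed for 6.3 GHz: {required_uplift(BASE_GHZ, 6.3):.1%}")
```

Under that assumed baseline, a 20% uplift lands at roughly 6.8 GHz, while the 6.3 GHz guess from earlier in the thread corresponds to an uplift of about 10.5%.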

> Bulldozer's FPU isn't a problem because you can offload that to the APU
>
> Narrator: They did not

Bulldozer's FPU was not a problem, though. It was so starved on the memory side that it was not really possible to saturate the FPU execution units on any realistic load.

It's weird how the narrative around that core worked out. It was a bad design, but the parts that made it a bad design (combination of write-through L1 and slow-as-molasses L2), were harder to see on a block diagram than the shared FPU, so everyone concentrated on that even though it was basically never the bottleneck.

adroc_thurston

Diamond Member

> So you are saying Zen 6 reaches about 6,8 GHz?!

Well, yeah, the same way phones will reach 5GHz later this year. You're welcome.

> It's weird how the narrative around that core worked out. It was a bad design, but the parts that made it a bad design (combination of write-through L1 and slow-as-molasses L2)

L3 was also dogwateringly slow there.

It was a mess.

I still feel kinda weird about AMD having better caches than Intel. This really wasn't a thing like, ever.

> Well, yeah, the same way phones will reach 5GHz later this year. You're welcome.
>
> L3 was also dogwateringly slow there.
>
> It was a mess.
>
> I still feel kinda weird about AMD having better caches than Intel. This really wasn't a thing like, ever.

Well, caches in Bulldozer were exceedingly bad and killed a lot of the processor's potential. They had to do a full redesign for the first Zen. They learned the lesson.

Hulk

Diamond Member

> Sure, if we're talking about Skymont. But Apple made a better Skymont with its M core. All that hype about that architecture in 2024, only to get beaten by a souped-up watch CPU core.

While I understand your point, and I would agree the efficiency of Apple's M core is astounding, I don't think it is better at running x64 code than Skymont.

It's like comparing a car built for road racing to one built for off-road. Sure, they are both vehicles, but they run on completely different infrastructures. Apple has a tightly controlled ecosystem. No DIY builds, very limited upgrades, limited software base, no third-party hardware, limited third-party software (only through the Apple store, etc.), not required to run apps from 50 years ago, etc. It's a tightly controlled, perfectly paved race track with no bumps. Due to their tightly controlled system they've even been able to totally start from scratch with their code base a few times over the years.

x64, on the other hand, is a jungle of thousands of third-party hardware parts and software apps spanning 50-plus years that has to work across generations and generations of processors and hardware. CPUs built for x64 have to be able to handle wild terrain.

Both have advantages and disadvantages. I'm saying that the comparison is not as straightforward as it might seem on the surface. I'm sure if AMD and Intel could "start over" with a completely new instruction set and OS they could do what Apple has done with the M series.
