DisEnchantment


> and what does gaming have to do with desktop? Yes, it's a PART of desktop, but there are others. Some want office, some want mini workstations, some participate in DC or things that require a lot of cores. Why do you think DIY owns gaming? Consoles? Handhelds? Gaming is all over, but they don't own DIY.

A bit confused here about how your DC software works. For high performance servers (high load) it’s always been about ST * Cores for net performance. That’s the mistake some RISC vendors made that ceded the high performance crown in servers to Intel. Does DC software run differently? (F@H didn't, except for Big Units where a minimum number of cores needed to be used.)

> A bit confused here about how your DC software works. For high performance servers (high load) it’s always been about ST * Cores for net performance. That’s the mistake some RISC vendors made that ceded the high performance crown in servers to Intel. Does DC software run differently? (F@H didn't, except for Big Units where a minimum number of cores needed to be used.)

Let's put it this way. My Genoa farm kills everything for performance, especially when AVX-512 is used, which the recent PG race proved. My 4 Genoa + 6 7950x + 2 7763 Rome plus 2 7V12 Rome beat everything except 1620 older Xeon cores. Yes, it's ST * cores, and Genoa has both. But on the flip side... 64 cores of Genoa in one chip = 2.7 7950x (16 cores), so ST works, but who wants 3 times as many boxes? The 64 core 9554 runs at 3.5 GHz fully loaded, so it's a good compromise.
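For anyone who wants to redo the "ST * cores" arithmetic with the numbers in the post above, here is a rough Python sketch. It assumes net performance is simply per-core speed times core count, and the variable names are made up for illustration. The implied clock at the end only holds if the per-core gap were purely a clock difference, which is an assumption and not something the post states.

```python
# Figures quoted in the post; names are illustrative only.
GENOA_CORES = 64
GENOA_GHZ = 3.5            # 9554 fully loaded, per the post
R7950X_CORES = 16
EQUIVALENT_7950X = 2.7     # "64 cores of Genoa in one chip = 2.7 7950x"


def net_performance(st_speed: float, cores: int) -> float:
    """Naive model: net performance = single-thread speed * core count."""
    return st_speed * cores


# By core count alone, one Genoa would replace four 7950X boxes.
core_ratio = GENOA_CORES / R7950X_CORES

# The post says it replaces only 2.7, so each 7950X core must be doing
# more work than each Genoa core by this factor.
per_core_advantage = core_ratio / EQUIVALENT_7950X

# ASSUMPTION: if that whole gap came from clock speed (same Zen 4 core),
# this would be the matching 7950X all-core clock.
implied_7950x_ghz = GENOA_GHZ * per_core_advantage

print(f"Core ratio:            {core_ratio:.1f}x")
print(f"Per-core advantage:    {per_core_advantage:.2f}x for the 7950X")
print(f"Implied 7950X clock:   {implied_7950x_ghz:.2f} GHz (clock-only assumption)")
print(f"Genoa net (GHz*cores): {net_performance(GENOA_GHZ, GENOA_CORES):.0f}")
```

So ST still matters: the desktop part wins per core, and the server part wins per box.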

> But on the flip side... 64 cores of Genoa in one chip = 2.7 7950x (16 cores), so ST works, but who wants 3 times as many boxes? The 64 core 9554 runs at 3.5 GHz fully loaded, so it's a good compromise.

Thanks, I understand your point now. Wish I had the dosh to get back into DC. Electricity rates in NH have gone through the roof in recent years. Coal and nuclear plant shutdowns, along with increased NG prices, are killing us (on top of hardware costs).

> Thanks, I understand your point now. Wish I had the dosh to get back into DC. Electricity rates in NH have gone through the roof in recent years. Coal and nuclear plant shutdowns, along with increased NG prices, are killing us (on top of hardware costs).

It's not cheap for me either, even with hydro and windmill as the sources (there might be others also). That month cost me $1000. But efficiency is also king. One 320 watt Genoa almost equals 3 142 watt 7950x's (all my 7950x's are set to ECO mode).
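The power comparison above works out as follows. This is a rough Python sketch using only the wattages quoted in the post. Treating the two setups as equal in throughput is the post's own "almost equals" approximation, the names are made up for illustration, and it counts CPU power only, not the rest of each box.

```python
# Wattages as quoted in the post; names are illustrative only.
GENOA_WATTS = 320         # one 64-core Genoa
R7950X_ECO_WATTS = 142    # one 7950X in ECO mode
R7950X_COUNT = 3          # boxes needed for roughly the same throughput


def total_watts(per_cpu_watts: float, cpus: int) -> float:
    """CPU power for a group of identical processors."""
    return per_cpu_watts * cpus


desktop_watts = total_watts(R7950X_ECO_WATTS, R7950X_COUNT)
savings = 1 - GENOA_WATTS / desktop_watts

print(f"3x 7950X (ECO): {desktop_watts} W")
print(f"1x Genoa:       {GENOA_WATTS} W")
print(f"Genoa draws about {savings:.0%} less CPU power for similar work")
```

Three motherboards, PSUs and sets of RAM would widen the gap further, but those numbers are not in the post.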

> Fixed it for you.

Your "fix" does not make sense.

> I am beyond certain many if not most engineering loads need a combination of both. Engineering workloads are vast and diverse.

It is my experience too with engineering applications that a mixture of ST _and_ MT computing is a common case, with both portions taking up notable fractions of the overall run time. Sometimes the presence of intense ST computing can be chalked up as the usual lack of optimization, because software product management sets other priorities. Other times it really is because the particular sub-problem is technically very difficult to parallelize.

We have a large bunch of AMD EPYC 9374F clusters, and we chose this specific SKU for its high boost within the 4th gen family. Sometimes I wish it had more cores, but the number of ST passes in our loads is high enough that we are currently looking to migrate to 2P 9374F clusters instead of 1P 64C clusters.
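A mix of ST and MT phases like this is the situation Amdahl's law describes. The sketch below is a toy model, not a benchmark: the relative ST speeds (1.2 for a high-boost 32-core part, 1.0 for a 64-core part) and the serial fractions are hypothetical values picked only to show the trade-off, and it ignores NUMA and memory effects on 2P systems.

```python
def run_time(serial_fraction: float, cores: int, st_speed: float) -> float:
    """Relative run time under Amdahl's law.

    The serial part runs on one core, the parallel part scales
    perfectly across all cores. 1.0 = one core at st_speed 1.0.
    """
    parallel_fraction = 1.0 - serial_fraction
    return serial_fraction / st_speed + parallel_fraction / (st_speed * cores)


# (label, total cores, relative ST speed); all values are hypothetical.
CONFIGS = [
    ("1P 32C high-boost", 32, 1.2),
    ("1P 64C", 64, 1.0),
    ("2P 32C high-boost", 64, 1.2),
]

for serial_fraction in (0.0, 0.05, 0.2, 0.5):
    print(f"serial share {serial_fraction:.0%}:")
    for label, cores, st_speed in CONFIGS:
        t = run_time(serial_fraction, cores, st_speed)
        print(f"  {label:<18} relative time {t:.4f}")
```

With these made-up inputs the 64C part only beats the single high-boost part when the job is almost entirely parallel. Once more than a few percent of the run is ST, the higher-clocked part pulls ahead, and doubling it up to 2P gets the core count back.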

> A bit confused here about how your DC software works. For high performance servers (high load) it’s always been about ST * Cores for net performance. That’s the mistake some RISC vendors made that ceded the high performance crown in servers to Intel. Does DC software run differently? (F@H didn't, except for Big Units where a minimum number of cores needed to be used.)

In the bigger picture, Distributed Computing is all about embarrassingly parallel computing. It entirely depends on the ability to divide a huge computing task into a very large number of small tasks which can be solved almost independently of each other, with very little communication happening between the work distribution/result collection server and the compute clients, and no communication at all between compute clients. The clients can be small or big, slow or fast; they can be online 24/7 or down for much of the day or week. From the POV of the science project, the sum of performances of all these clients makes up the project performance.
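The embarrassingly parallel pattern described above can be sketched in a few lines of Python. This is only an illustration, not how any real DC project's client or server works: the function names are hypothetical, the "clients" are local threads, and the "science" is a sum of squares.

```python
from concurrent.futures import ThreadPoolExecutor


def make_work_units(n: int, unit_size: int) -> list[tuple[int, int]]:
    """Server side: cut the range [0, n) into independent work units."""
    return [(start, min(start + unit_size, n)) for start in range(0, n, unit_size)]


def process_work_unit(unit: tuple[int, int]) -> int:
    """Client side: needs only its own unit, never talks to other clients."""
    start, stop = unit
    return sum(i * i for i in range(start, stop))


def run_project(n: int, unit_size: int, clients: int) -> int:
    """Hand out units, collect partial results, combine them."""
    units = make_work_units(n, unit_size)
    with ThreadPoolExecutor(max_workers=clients) as pool:
        partial_results = list(pool.map(process_work_unit, units))
    return sum(partial_results)


total = run_project(n=100_000, unit_size=7_500, clients=4)
print(total)
```

The result is the same no matter how many clients there are or how big the units are, which is why slow, fast and part-time clients can all contribute to one project.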

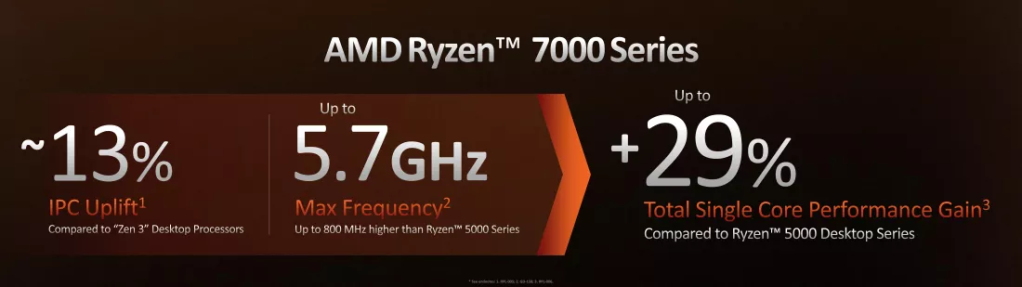

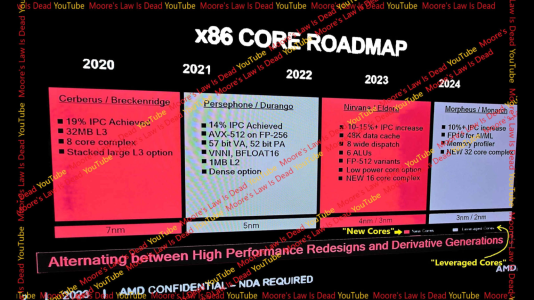

> Internal AMD documents show 10-15% IPC increase for Zen 5

That's SIR n-copy for Turin-D with SMT on, yes, that's how it sits at .99 of Genoa vCPU perf.

> Source

Presented without comment.

MLID brought the receipts. It’s way different than what many people were claiming with the 32% IPC increase.

Internal AMD documents show 10-15% IPC increase for Zen 5. There’s also leaked details of the new core arch.

> That's SIR n-copy for Turin-D with SMT on, yes, that's how it sits at .99 of Genoa vCPU perf.

This document is from 2023. It doubled IPC expectations within the year?

Veeeeeerry old slideware with old timelines.

So doubling down then?

> This document is from 2023

Lol, it's old stuff for projections.

> It doubled IPC expectations within the year?

1t IPC isn't N-copy SIR IPC.

> So doubling down then?

Of course, AMD has underpromised and overdelivered with every single Zen generation.

> Lol, it's old stuff for projections.

It’s no more than 9 months old.

> 1t IPC isn't N-copy SIR IPC.

It doesn’t state that anywhere. The numbers it provides for Zen 3 & Zen 4 are the proper IPC. Why would they give the legit values for previous arch but sandbag Zen 5 with a Turin-D core in a suboptimal scenario for an internal document? That doesn’t make any sense.

> It’s no more than 9 months old.

A bit older.

> It doesn’t state that anywhere

All server IPC projections for all vendors tend to be N-copy for both IPC and perf total.

> Why would they give the legit values for previous arch

Those are specifically server numbers.

> That doesn’t make any sense.

Yes it does when you know Turin SIR perf numbers.

> A bit older.

In the bottom left corner of the document it’s dated 2023.

All server IPC projections for all vendors tend to be N-copy for both IPC and perf total.

Those are specifically server numbers.

> In the bottom left corner of the document it’s dated 2023.

I know.

> Guess we’ll know when it launches. I’ll anxiously await the 32% IPC increase.

Yea, you only have a tiny little bit left to wait for the first three parts.

In the bottom left corner of the document it’s dated 2023.

Suuureeee. Guess we’ll know when it launches. I’ll anxiously await the 32% IPC increase.

> Who claimed 32% increase?

Me, it's based off 96c Turin numbers AMD gave.

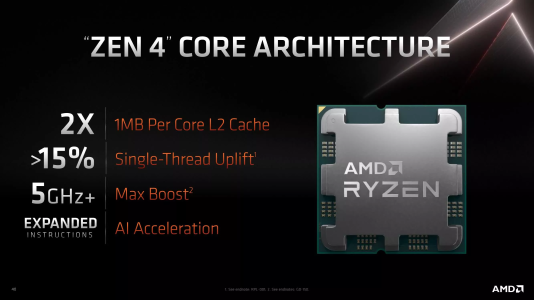

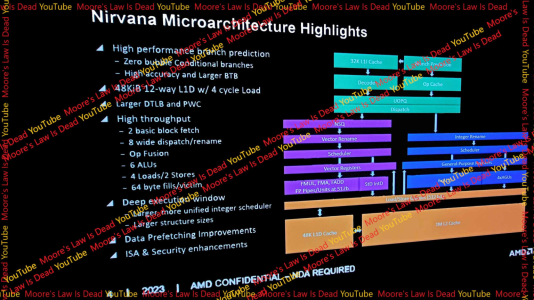

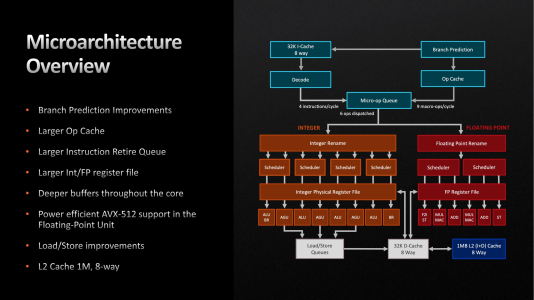

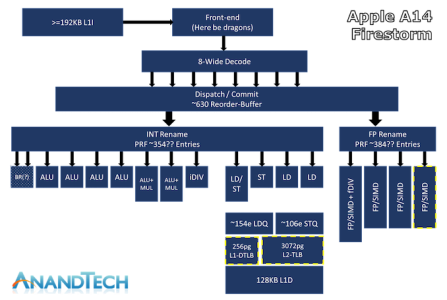

Yes, Zen5 looks for the most part similar to Nuvia Phoenix or Apple Firestorm/Avalanche/Everest 'cept the non-baby mode FPU.

Zen 4 has 4 ALUs vs 6 for Zen 5.

Zen 4 has 6 op dispatch vs 8 for Zen 5.

Zen 4 has 3 load, 2 stores vs 4 loads, 2 stores for Zen 5.

Zen 4 has 32 KiB L1D cache vs 48 KiB for Zen 5.

> Zen 4 has 3 load, 2 stores vs 4 loads, 2 stores for Zen 5.

Hmm, have I misunderstood it? I always thought it's two 256-bit loads and one 256-bit store:

This is likely because AMD didn’t implement wider buses to the L1 data cache. Zen 4’s L1D can handle two 256-bit loads and one 256-bit store per cycle, which means that vector load/store bandwidth remains unchanged from Zen 2. The Gigabyte leak suggested alignment changed to 512-bit, but that clearly doesn’t apply for stores.

> It seems a bit like the Comet Lake->Alder Lake jump in terms of core resources.

Bigger.

> the core ends up being much more similar to Alder Lake than I anticipated

It's. A. Firestorm.

> There are no mentions of any decoder changes, surely it would be an absurd bottleneck,

It kinda cutely mentions twice the i$ fetch bandwidth.

Zen 5 can compete if they can hit 6.2 GHz... or Arrow Lake wins easily.

So, if the slides are to be believed (and they look quite authentic), the core ends up being much more similar to Alder Lake than I anticipated. But still noticeably fatter.

The biggest unknown for me is how do they plan to feed the beast? There are no mentions of any decoder changes, surely it would be an absurd bottleneck, if not changed?

- The same 12-way 48KB L1 cache as Golden Cove (hopefully without the latency penalty)

- 8-wide dispatch (+2 vs Alder Lake and Zen 4)

- 6 ALUs (+1 vs Alder Lake, +2 vs Zen 4)

- 4 loads / 2 stores per cycle (vs 3/2 for Golden Cove, 2/1 for Zen 4)

  - If I'm reading this right, these are 512-bit (64-byte)? That's a massive uplift from Zen 4 if true (4x the throughput in ideal AVX-512 scenarios)
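To put numbers on the load/store bullet above, here is a quick Python sketch of peak L1D bytes per cycle. The Zen 4 figures are the two 256-bit loads and one 256-bit store from the excerpt quoted earlier in the thread. The Zen 5 figures assume the slide really means four 512-bit loads and two 512-bit stores per cycle, which is unconfirmed. The function name is made up for illustration.

```python
def bytes_per_cycle(ops_per_cycle: int, width_bits: int) -> int:
    """Peak bytes moved per cycle for a given op count and access width."""
    return ops_per_cycle * width_bits // 8


# Zen 4: per the quoted excerpt (2x 256-bit loads, 1x 256-bit store).
zen4_load = bytes_per_cycle(2, 256)
zen4_store = bytes_per_cycle(1, 256)

# Zen 5: ASSUMPTION based on one reading of the leaked slide
# (4x 512-bit loads, 2x 512-bit stores).
zen5_load = bytes_per_cycle(4, 512)
zen5_store = bytes_per_cycle(2, 512)

print(f"Zen 4: {zen4_load} B/cycle load, {zen4_store} B/cycle store")
print(f"Zen 5: {zen5_load} B/cycle load, {zen5_store} B/cycle store")
print(f"Load uplift:  {zen5_load / zen4_load:.0f}x")
print(f"Store uplift: {zen5_store / zen4_store:.0f}x")
```

That is where the 4x figure comes from: twice as many accesses per cycle, each twice as wide. These are peak numbers only and say nothing about whether the rest of the core can keep the ports busy.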

Anyway, looking forward to comparisons to the Arrow Lake core. In the end, they could end up pretty similar in width, so it would all come down to execution.