poke01


Nothing beats an M4 mini in perf/W.

65W gaming PC.


Partly because every single keyboard, mouse and proper (speedy) USB key is still stupidly USB-A.

How in the love of god are people still shipping USB-A ports?

Because USB-C is a brittle, bad port for desktop peripherals? With any longer, heavier cable (like high-end display cables), it's super easy to bend or pull out. It's great for phones and thin laptops, but not desktops.

How in the love of god are people still shipping USB-A ports?

Partly because every single keyboard, mouse and proper (speedy) USB key is still stupidly USB-A.

Also because keyboards/mice are low-speed peripherals. Unless you want to designate a few USB-C ports as low-speed, you're going to waste I/O links providing a bunch of full-bandwidth USB-C ports only to have them used by slow HID devices. If you took the capacity of one USB-C port, you could split it across three USB-A ports for a storage drive, keyboard and mouse, and because the keyboard/mouse are so slow, your storage drive would still run at full speed.
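To put rough numbers on the "split one port three ways" argument, here is a minimal sketch. It assumes (not stated in the thread) that the port is a 10 Gbit/s USB 3.2 Gen 2 link and charges the keyboard and mouse their full nominal USB 2.0 signaling rates (Low Speed 1.5 Mbit/s, Full Speed 12 Mbit/s), which is a deliberate overestimate. It also treats the split as one shared pool, which is a simplification: real USB 3.x ports carry USB 2.0 traffic on a separate wire pair. The function name `storage_headroom` is hypothetical.

```python
# Hypothetical sketch: bandwidth left for a storage drive when a keyboard and
# mouse share one USB-C port's capacity.
# Assumptions: a 10 Gbit/s USB 3.2 Gen 2 link, and HID devices charged their
# full nominal USB 2.0 signaling rate (an overestimate of real HID traffic).

USB_LOW_SPEED_MBPS = 1.5      # typical keyboard
USB_FULL_SPEED_MBPS = 12.0    # typical mouse
USB32_GEN2_MBPS = 10_000.0    # assumed capacity of the one USB-C port


def storage_headroom(link_mbps: float, hid_rates_mbps: list[float]) -> tuple[float, float]:
    """Return (Mbit/s left for storage, fraction of the link that remains)."""
    remaining = link_mbps - sum(hid_rates_mbps)
    return remaining, remaining / link_mbps


left, frac = storage_headroom(
    USB32_GEN2_MBPS, [USB_LOW_SPEED_MBPS, USB_FULL_SPEED_MBPS]  # keyboard, mouse
)
print(f"Storage keeps {left:.1f} Mbit/s ({frac:.2%} of the link)")
```

Even with the HID devices overcharged this way, the drive keeps well over 99% of the link, which is the point being made about keyboards and mice not needing full-bandwidth ports.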

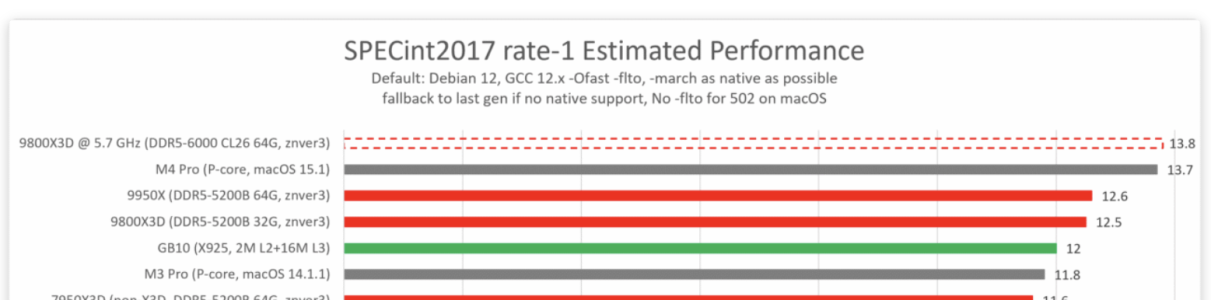

Bweeeeee, it's N3, opinion discarded.

Don't remember if this has been talked about yet, but placing an X925 in a mobile/DT solution (GB10) nets it >7% higher IPC than the same core in a smartphone (Xring O1), thanks to uncore changes.

View attachment 134611

The result of this is that Zen 5 DT is only 5% faster than the X925, while also having a ~13% lead in perf over Zen 5 high-power mobile (Strix Halo).

The X925 is last gen too.
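IPC comparisons like the GB10 vs. Xring O1 one are typically made by dividing a benchmark score by the clock it ran at, so a chip can post a much larger raw-score lead than IPC lead. A minimal sketch; the scores and clocks below are made-up illustration values, not GB10, Xring O1 or Zen 5 measurements, and `per_clock`/`ipc_ratio` are hypothetical helpers.

```python
# Hypothetical sketch: clock-normalized ("IPC") comparison vs. raw score.
# All numbers are invented for illustration only.


def per_clock(score: float, ghz: float) -> float:
    """Benchmark points per GHz, a common stand-in for IPC."""
    return score / ghz


def ipc_ratio(score_a: float, ghz_a: float, score_b: float, ghz_b: float) -> float:
    """Ratio of chip A's per-clock performance to chip B's."""
    return per_clock(score_a, ghz_a) / per_clock(score_b, ghz_b)


raw = 2200 / 1875                        # raw score ratio, ignoring clocks
ipc = ipc_ratio(2200, 4.0, 1875, 3.75)   # clock-normalized ratio
print(f"raw: {raw - 1:.1%} faster, per clock: {ipc - 1:.1%} higher")
```

Here chip A scores about 17% higher, but because it also runs at a higher clock, its per-clock advantage is only 10%.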

N2 era will be fun.

Bweeeeee, it's N3, opinion discarded.

Also, how is Apple still making do with Everest and Sawtooth rehashes??

Different cache configs are likely the only significant change.

Don't remember if this has been talked about yet, but placing an X925 in a mobile/DT solution (GB10) nets it >7% higher IPC than the same core in a smartphone (Xring O1), thanks to uncore changes.

It seems some games can be made to run at an acceptable speed: https://forums.anandtech.com/thread...nning-of-transformation.2617658/post-41535822

To play any decent games you're going to need emulation, and even with all the work that's gone into FEX-Emu, you're still going to pay a significant emulation tax in both performance and power relative to native hardware.
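Because the emulation tax hits both performance and power, the two compound when you look at perf/W. A minimal sketch; the 20% performance tax and 10% power tax are illustrative assumptions, not FEX-Emu measurements, and the function names are hypothetical.

```python
# Hypothetical sketch: how a performance tax and a power tax compound in
# perf/W. Tax fractions are assumptions chosen for illustration.


def perf_per_watt(perf: float, watts: float) -> float:
    return perf / watts


def emulated_perf_per_watt(native_perf: float, native_watts: float,
                           perf_tax: float, power_tax: float) -> float:
    """perf_tax: fraction of throughput lost (0.2 = 20% slower).
    power_tax: fractional increase in power draw (0.1 = 10% more watts)."""
    return perf_per_watt(native_perf * (1.0 - perf_tax),
                         native_watts * (1.0 + power_tax))


native = perf_per_watt(100.0, 50.0)
emulated = emulated_perf_per_watt(100.0, 50.0, perf_tax=0.2, power_tax=0.1)
print(f"emulated keeps {emulated / native:.0%} of native perf/W")
```

With these assumed taxes, perf/W falls to 0.8 / 1.1 ≈ 0.73 of native, a bigger hit than either tax on its own.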

And even on PC and Windows, you should mostly use Intel, since most software was fully optimized for that brand... and even for certain generations like Sandy Bridge or Skylake.

It seems some games can be made to run at an acceptable speed: https://forums.anandtech.com/thread...nning-of-transformation.2617658/post-41535822

I agree it's a single example and it might not be representative of other games.

As an aside, back when I was playing on PC 10 years ago, I had to switch back from Linux to Windows because Wine didn't always work as expected (in particular with MT games). Beyond the processor, the OS was an issue. If I ever decided to return to gaming on PC, I'd stick to Windows + x86.

I hope that a game optimized for Sandy Bridge or Skylake works well on any recent CPU, be it from AMD or Intel 🙂

And even on PC and Windows, you should mostly use Intel, since most software was fully optimized for that brand... and even for certain generations like Sandy Bridge or Skylake.

This is no longer Everest or Sawtooth. It's funny that some people in the industry continue to refer to Apple's new P and E cores by their A16-era names simply because Apple has stopped giving them code names. The A15 and A16 cores have different code names, but the architectural changes between them are minimal.

Also, how is Apple still making do with Everest and Sawtooth rehashes??

They have to move on from that base soon.

I didn't mean it would be slow, only that there would be a perf and power tax.

It seems some games can be made to run at an acceptable speed: https://forums.anandtech.com/thread...nning-of-transformation.2617658/post-41535822

www.notebookcheck.net


What I find really funny is what they write:

This is news to me.

Apple MacBook Pro 15: How to get 2 more hours of battery life with this simple trick

Sometimes, the things our reviewers uncover is downright stunning: power consumption on the new MacBook Pro 15 is significantly lower in full screen than in windowed mode. Consequently, battery life is improved by a stunning 135 minutes or, in other words, the length of an entire feature film...

www.notebookcheck.net

laptops are fun

Remove the capital and it fully applies to windows (vs. fullscreen) 🙂

This does not apply to Windows, though.

Would this apply to current hardware? I figured this was because the GPU doesn't have to be concerned with what's behind the window and keep it updated. But the comments imply this is related to how work is divided between the iGPU and dGPU, if I'm understanding correctly.

This is news to me.


That comment looks stupid: there's no dGPU on recent Macs.

Would this apply to current hardware? I figured this was because the GPU doesn't have to be concerned with what's behind the window and keep it updated. But the comments imply this is related to how work is divided between the iGPU and dGPU, if I'm understanding correctly.


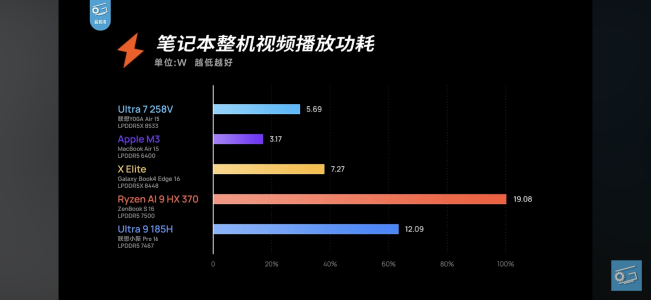

🤣

Sorry, here's something more recent on iGPUs.

That comment looks stupid: there's no dGPU on recent Macs.

And then I realized the article is 8 years old... @poke01 I hate you 😀

EDIT: the comment that looks stupid is the one on the site, just to clarify.


All of my keyboards and mice came with USB-C, and my fastest USB drives are USB-C.

Partly because every single keyboard, mouse and proper (speedy) USB key is still stupidly USB-A.

Apple ditched USB-A on laptops NINE years ago. You'd think if this was a problem, there'd be some backlash over it.

Because USB-C is a brittle, bad port for desktop peripherals?

Laptops are fine without USB-A, as they come with a built-in keyboard and trackpad and will most likely be docked via USB-C, but for desktops it's a big no.

Apple ditched USB-A on laptops NINE years ago. You'd think if this was a problem, there'd be some backlash over it.