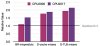

While this observation is interesting from a benchmarking standpoint, Geekbench is generally less demanding of the micro-architecture than SPEC CPU is. For a subset of micro-architectural features, Figure 3 shows the metric values for CPU2006 and CPU2017 relative to Geekbench 5, which is normalized to a baseline of 1.0. These values were generated from detailed performance simulations of a modern CPU. The figure shows that branch mispredicts, data cache (D-Cache) misses, and data TLB (D-TLB) misses are 1.1x to 2x higher in SPEC CPU than in Geekbench 5.
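As a rough illustration of the normalization behind this kind of comparison, the sketch below divides each suite's metric by the Geekbench 5 value for the same metric. The function name `normalize_to_baseline` and every number in `example_mpki` are hypothetical placeholders invented for this example; they are not the simulated values behind the figure.

```python
def normalize_to_baseline(metrics, baseline):
    """Divide each suite's metric by the baseline suite's value for that metric."""
    base = metrics[baseline]
    return {
        suite: {name: value / base[name] for name, value in values.items()}
        for suite, values in metrics.items()
    }


# Placeholder misses-per-kilo-instruction values, invented for illustration only.
example_mpki = {
    "Geekbench 5": {"branch_mispredicts": 4.0, "dcache_misses": 10.0, "dtlb_misses": 2.0},
    "CPU2006": {"branch_mispredicts": 6.0, "dcache_misses": 15.0, "dtlb_misses": 4.0},
    "CPU2017": {"branch_mispredicts": 5.0, "dcache_misses": 12.0, "dtlb_misses": 3.0},
}

relative = normalize_to_baseline(example_mpki, "Geekbench 5")
for suite, values in relative.items():
    print(suite, values)
```

By construction, every Geekbench 5 entry comes out as 1.0, so the other suites read directly as multiples of the baseline.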

For this reason, chip architects tend to study a wide variety of benchmarks including SPEC CPU and Geekbench (among many others) to optimize the architecture for performance.

It is important to note that the observed correlation is not a fundamental property and can break under several scenarios.

One example is thermal effects. Geekbench typically runs quickly (in minutes), and especially so in our testing, where the default workload gaps are removed, whereas SPEC CPU typically runs for hours. The net effect is that Geekbench 5 may achieve a higher average frequency because its short runtime lets it exploit the system’s thermal mass. SPEC CPU, however, will be governed by the long-term power dissipation capability of the system because of its long runtime. This is something to watch out for when applying such correlation techniques to systems that see significant thermal throttling or power-capping while running these benchmarks.
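The effect can be sketched with a deliberately crude model, in which thermal mass is treated as a fixed time budget at boost frequency before the chip drops to a sustained frequency. The function `average_frequency` and all of its parameter values (boost and sustained frequencies, boost budget, run lengths) are assumptions chosen for illustration, not measurements of any real system.

```python
def average_frequency(run_seconds, boost_ghz=3.0, sustained_ghz=2.0,
                      boost_budget_seconds=300.0):
    """Average frequency over a run, assuming the chip boosts until a fixed
    thermal budget is used up and then settles at the sustained frequency."""
    boost_time = min(run_seconds, boost_budget_seconds)
    sustained_time = run_seconds - boost_time
    return (boost_ghz * boost_time + sustained_ghz * sustained_time) / run_seconds


# Hypothetical run lengths: a benchmark lasting minutes versus one lasting hours.
short_run = average_frequency(120.0)    # 2 minutes, entirely within the boost budget
long_run = average_frequency(7200.0)    # 2 hours, mostly at the sustained frequency

print(f"short run: {short_run:.2f} GHz, long run: {long_run:.2f} GHz")
```

Under these assumed numbers the short run never leaves boost, while the long run averages close to the sustained frequency, which is the kind of gap that can distort a score correlation on a thermally limited system.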

Another scenario where the correlation can break is a non-linear jump in performance that one benchmark suite sees but the other does not. The interplay between the active data footprint of a test and the CPU caches is a classic source of such non-linearities. For example, a future CPU’s cache may be large enough that many sub-tests of one benchmark suite fit fully in cache, boosting performance many-fold. However, the other benchmark suite may not see such a benefit if none of its tests fit in cache. In such cases, the correlation will not hold.
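A toy sketch of this cache-fit effect is shown below. It counts what fraction of a suite's sub-tests have a data footprint no larger than the cache. The suite names, footprint lists, and cache sizes are all hypothetical values made up for the example, and a real analysis would need measured working-set sizes.

```python
def fraction_fitting(footprints_mib, cache_mib):
    """Fraction of sub-tests whose data footprint fits within the cache size."""
    fitting = sum(1 for footprint in footprints_mib if footprint <= cache_mib)
    return fitting / len(footprints_mib)


# Hypothetical per-sub-test data footprints in MiB, invented for illustration.
suite_a = [12, 16, 24, 64]
suite_b = [128, 256, 512, 1024]

for cache_mib in (8, 32):
    print(
        f"{cache_mib} MiB cache:",
        f"suite A fits {fraction_fitting(suite_a, cache_mib):.2f},",
        f"suite B fits {fraction_fitting(suite_b, cache_mib):.2f}",
    )
```

With these made-up footprints, growing the cache from 8 MiB to 32 MiB moves three of suite A's four sub-tests into cache while suite B is unchanged, so only one suite would see the step change in performance.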