Your post is nothing more than damage control at this point. A shared unit can never beat a dedicated unit; worse yet, RDNA2 does BVH traversal on the shaders as well (Turing does it on the RT cores). So RT acceleration is shared on two levels with RDNA2, not one.

And no, texture units are not overbudgeted on modern GPUs; they are just the right amount for regular texturing, 16x AF filtering, and texture-heavy shaders and effects.

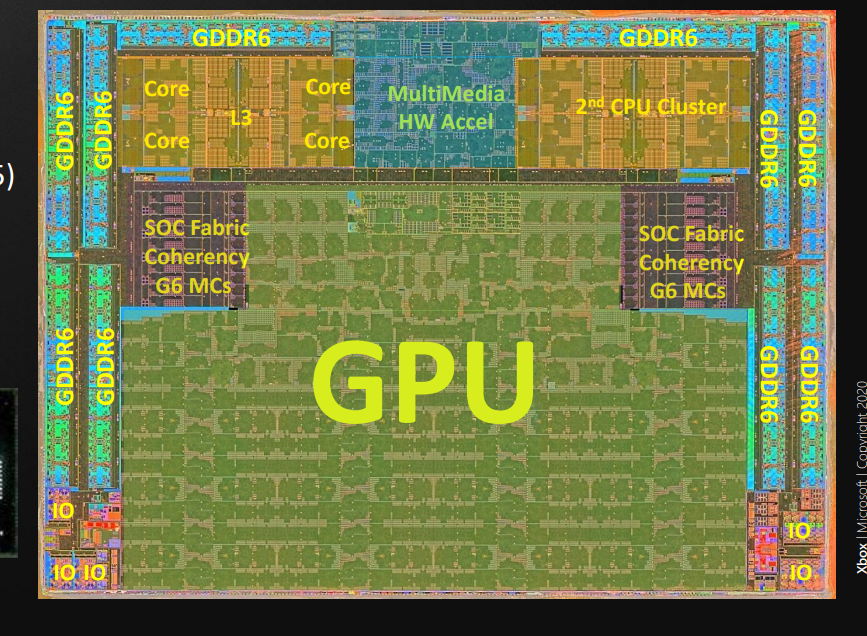

More damage control. Tensor cores are not necessary, but they are fast enough to offset any performance loss from using ML to upscale the image; without them, the loss would be bigger.

The shaders don't do the BVH traversal; it says they can run in parallel with the BVH traversal.

In other words, while the BVH traversal is taking place, the shaders can perform other calculations instead of sitting idle waiting for the BVH data.

That's called "not wasting compute resources".

And, in case you didn't realise, we're not talking about a shared unit vs a dedicated one. We're talking about 4 shared units vs a single dedicated one.

So, let me restate what I said before: the implementation in RDNA2 is vastly different from that of Turing. Trying to judge which is better based on technical specs alone is a meaningless waste of time.

As for the part about tensor cores, you're correct: the performance loss will be larger. Well, it would be more accurate to say that the performance improvement from running the same DLSS 2.0 algorithm would be smaller.

Fact of the matter is that neither of us knows how long the tensor cores are busy during the pipeline stage in which they run the algorithm, or how much of a slowdown running it without them would cause. We also have no idea how complex or accurate the algorithm AMD would use will be.

But I'm going to be honest here: I simply cannot believe that the same algorithm as DLSS 2.0 couldn't be run on RDNA2. I'm quite sure it'll be usable, just much slower, so the performance uplift will be much smaller. Still present, though.

In the end, the upscaling is just another stage of the pipeline, and unlike with Turing's architecture, running it on the shaders still allows you to perform other computations at the same time (whereas running tensor operations on Turing prevents you from doing anything else on that SM at the same time).

You know, now that I've remembered that last point, I think I'm going to fall back on the same conclusion as with the raytracing.

The implementations are so different that I don't think we can actually judge which will be appreciably better, or whether one will be severely handicapped vs the other. Once again, it's best to wait and see.