You tested a single drive-to-drive copy, which is exactly the case where DMI link speed doesn't matter, because PCIe is full duplex: the link can carry its full bandwidth in both directions at once. A good analogy is a highway with traffic in both directions. In any case, such a test will always be limited to the write speed of the slowest drive in the copy chain. The 970/960 both have good write speeds, but not that good.
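To put numbers on the full-duplex point, here is a rough sketch in Python. The function names and the drive and link figures are illustrative assumptions, not measurements from anyone's test. It models a copy as capped by the slowest of source read speed, destination write speed, and the per-direction link bandwidth, and compares that with a hypothetical half-duplex link where reads and writes would share one budget.

```python
def copy_throughput_mb_s(source_read, dest_write, link_per_direction):
    """Sustained drive-to-drive copy rate in MB/s on a full-duplex link.

    The read stream (drive -> host) and the write stream (host -> drive)
    travel in opposite directions, so each one is capped by the
    per-direction bandwidth on its own instead of sharing one budget.
    """
    return min(source_read, dest_write, link_per_direction)


def half_duplex_copy_throughput_mb_s(source_read, dest_write, link_total):
    """Same copy on a hypothetical half-duplex link (for comparison only).

    Read and write traffic would share one budget, so a copy that reads
    and writes at the same rate gets at most half of it each way.
    """
    return min(source_read, dest_write, link_total / 2)


# Illustrative figures (assumptions): 3500 MB/s read, 2500 MB/s write,
# roughly 3900 MB/s per direction on a PCIe 3.0 x4 class link.
full = copy_throughput_mb_s(3500, 2500, 3900)
half = half_duplex_copy_throughput_mb_s(3500, 2500, 3900)
print(f"full duplex: {full} MB/s, hypothetical half duplex: {half} MB/s")
```

With these assumed figures the full-duplex copy is limited by the destination's write speed and not by the link, which is why a single copy between two drives says little about the DMI link.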

And just for the record, you can't use a passive adapter (one without a PCIe switch) on a mainboard without bifurcation support. You only get a single drive that way.

I rest my case here. I don't think there is any point in continuing this debate.

Wasn't that the problem I pointed out? The logic of your post goes like this: "Don't forget PCIe and DMI (a carbon copy of higher-speed PCIe with a marketing name) is duplex, that reduces the bottlenecks even more. Then you go on inventing some magic scenario where you read from one drive at up to 3500 and write to two drives at up to 3500 total.

...

And that is somehow slower than on AMD, where there is a hard limit of 1300 on that 2nd copy target (and how is the 3rd destination even connected???)"

I'll easily shut down this debate about which platform is better and more future-proof.

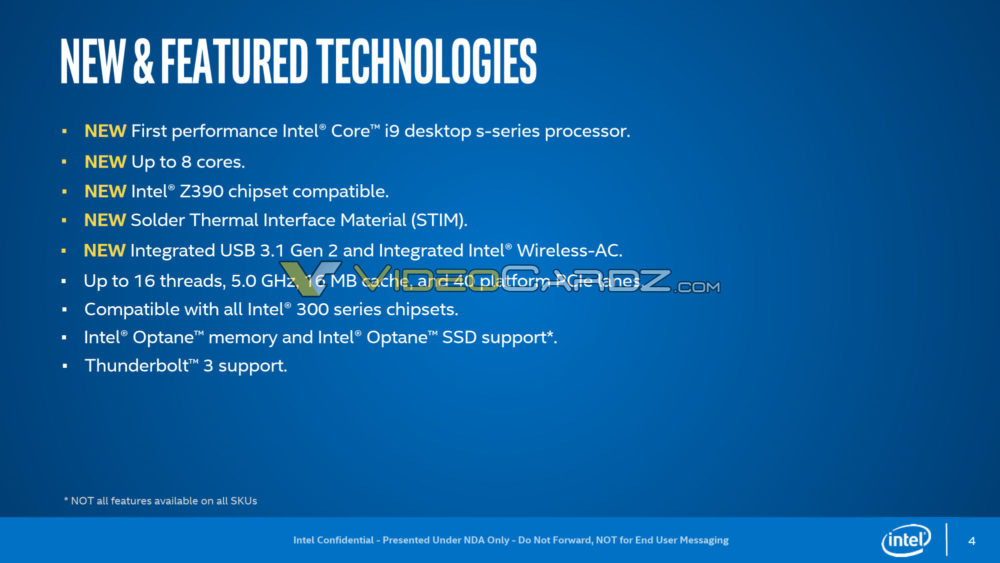

NVMe drive speed and capability are increasing, not decreasing. These drives officially interface at PCIe 3.0 x4. The trend is that drive capability is moving toward full saturation, and oversaturation, of that link at lower latencies. At that point, as even Intel sees, NVMe drives become first-class cache storage. They already are in their current state. So you will have constant flows to and from all storage components through the NVMe drive to system memory and directly to the CPU.

You absolutely do not want such a drive interfacing through a friggin chipset with all of your other, much slower components.

Caching software is now free and ubiquitous on Ryzen platforms. The PCIe 3.0 x4 link absolutely does get saturated. By gimping the 2nd NVMe drive to PCIe 2.0 on Ryzen, they ensure it does not saturate the chipset connection, which you do not want when you have multiple other important I/O devices. When you saturate the link, or even during normal traffic, you're doing buffering/prioritization/throttling through the chipset, which adds latency and results in unpredictable speeds. You want fair and sensible access. Chipset devices are second-class citizens, which is why they all fairly share a PCIe 3.0 x4 link. To be fair, no one citizen should be allowed to saturate the channel. First-class citizens get their own dedicated lanes.
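For anyone who wants the arithmetic behind the saturation argument, here is a small sketch. The function and constant names are my own. The transfer rates and line encodings are the published PCIe 2.0 and 3.0 figures, and the results are raw per-direction link rates before packet and protocol overhead, so real-world throughput will be lower. The 3.5 GB/s drive figure is the "up to 3500" number quoted earlier in this thread.

```python
# Per-lane transfer rate in GT/s and line encoding (payload bits, total bits).
RATE_GT_S = {2: 5.0, 3: 8.0}
ENCODING = {2: (8, 10), 3: (128, 130)}


def link_bandwidth_gb_s(gen, lanes):
    """Raw per-direction bandwidth of a PCIe link in GB/s.

    Ignores TLP/DLLP packet overhead, so it is an upper bound.
    """
    payload_bits, total_bits = ENCODING[gen]
    gbit_per_lane = RATE_GT_S[gen] * payload_bits / total_bits
    return gbit_per_lane * lanes / 8  # bits -> bytes


chipset_uplink = link_bandwidth_gb_s(3, 4)  # PCIe 3.0 x4 class uplink
gen2_x4 = link_bandwidth_gb_s(2, 4)         # a drive limited to PCIe 2.0 x4

drive_read = 3.5  # GB/s, "up to 3500" as quoted in the thread
headroom = chipset_uplink - drive_read

print(f"PCIe 3.0 x4: {chipset_uplink:.2f} GB/s per direction")
print(f"PCIe 2.0 x4: {gen2_x4:.2f} GB/s per direction")
print(f"Left for everything else behind the uplink: {headroom:.2f} GB/s")
```

On these raw numbers, one drive reading at 3.5 GB/s behind a PCIe 3.0 x4 uplink leaves well under 0.5 GB/s in that direction for everything else sharing it, while a drive capped at PCIe 2.0 x4 cannot take more than about half of the uplink.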

Intel carried over a busted arse paradigm to gimp their platform and that's the end of this discussion. They have this junk even on their HEDT.

Meanwhile, in AMD land on HEDT, I have 3 dedicated PCIe 3.0 x4 links for direct NVMe connection to the CPU, plus x16/x16/x8/x8 slots that can be bifurcated to x4/x4 or x4/x4/x4/x4, allowing for insane I/O expansion. Meanwhile at Intel: DMI 3.0 ... The stunts Intel pulls are as clear as day. You can ignore them and make excuses, but no one with a brain is buying it.

Intel's strategy over the years they dominated was simple... One aspect of it was to gimp I/O so you have to buy more processors to scale your I/O. The way they have their I/O laid out on the desktop is still locked into this paradigm. It likely will change as it will have to in the years ahead but the current sockets and architecture are gimped to hell.