This is maybe a little off topic. I already gave my opinion on this in my very first post here, so go there if you want to read it. In short: the more cores, the better. There is no way a system with just 4 cores will end up faster, or gain performance over time!

But since the discussion has changed a bit and this is somewhat related, I will add this:

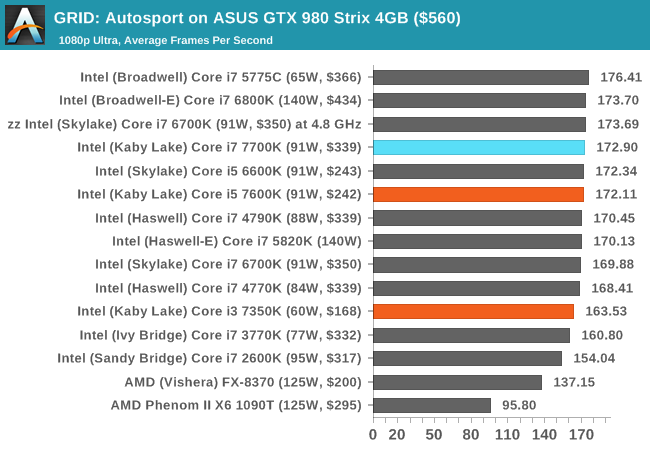

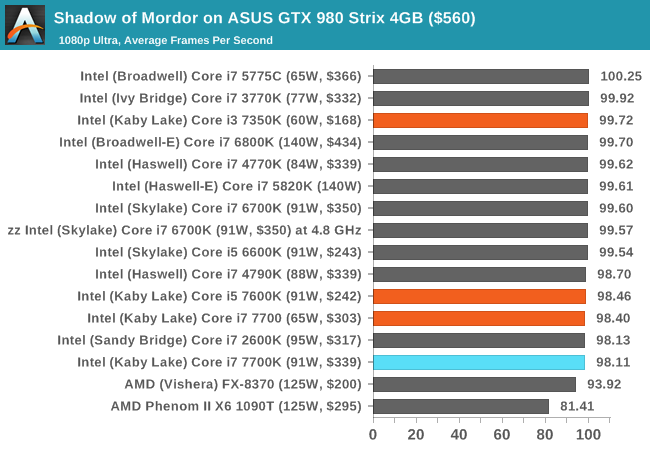

Game benchmark testers normally give you minimum and average frame rates, but nobody cares about the maximum frame rate, which is very important for spotting erratic and -Redacted- gameplay. Just as important is the gap between the minimum and maximum frame rates obtained, and of course the gap between each of those and the average!
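Just to illustrate, these numbers can be pulled from a frame-time log. This is a minimal Python sketch, assuming you have per-frame render times in milliseconds (for example from a frame-time capture tool). `frame_stats`, `FrameStats` and the two sample runs are hypothetical names and made-up values, not from any real benchmark. The average here is total frames divided by total time, a common way to report it.

```python
from dataclasses import dataclass


@dataclass
class FrameStats:
    min_fps: float
    avg_fps: float
    max_fps: float
    spread: float     # max_fps - min_fps
    below_avg: float  # avg_fps - min_fps
    above_avg: float  # max_fps - avg_fps


def frame_stats(frame_times_ms: list[float]) -> FrameStats:
    """Summarise a run from per-frame render times in milliseconds (hypothetical helper)."""
    if not frame_times_ms or min(frame_times_ms) <= 0:
        raise ValueError("need at least one positive frame time")
    min_fps = 1000.0 / max(frame_times_ms)  # slowest frame -> lowest fps
    max_fps = 1000.0 / min(frame_times_ms)  # fastest frame -> highest fps
    avg_fps = len(frame_times_ms) * 1000.0 / sum(frame_times_ms)  # frames / total time
    return FrameStats(
        min_fps=min_fps,
        avg_fps=avg_fps,
        max_fps=max_fps,
        spread=max_fps - min_fps,
        below_avg=avg_fps - min_fps,
        above_avg=max_fps - avg_fps,
    )


# Hypothetical runs with the same average but very different consistency.
steady = frame_stats([20.0] * 6)         # every frame takes 20 ms
erratic = frame_stats([10.0, 30.0] * 3)  # alternating 10 ms / 30 ms frames

print(steady.avg_fps, steady.spread)              # 50.0 0.0
print(erratic.avg_fps, round(erratic.spread, 1))  # 50.0 66.7
```

Both runs report 50 fps on average, so a review that only lists the average would call them equal, while the spread shows the second one swinging between roughly 33 and 100 fps.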

I remember when I had the Radeon 9700 and my friends and family had the Radeon 9600, 9500, GeForce 5600, 5700, … When playing Half-Life 2, for example, my system was noticeably producing more frames than the others, but both they and I noticed that somehow their gameplay was better than mine, because it was noticeably less erratic. I was going from 100 fps to 30 fps or less and could feel it, while their systems kept a more stable gameplay experience, even though they were getting fewer frames than me. At least the difference between their maximum and minimum was not as big as on my system.

I certainly noticed that for the guys with the 9600XT (or something) the gameplay looked and felt better.

Let’s say, for example, my Radeon 9700 ran at 25 fps min and 100 fps max, and theirs at 25 fps min and 60 fps max.

Not even FreeSync, G-Sync, or whatever tech comes along next fully solves the problem in the examples below, though it certainly improves things, specifically in the second example.

Example of how a low frame rate can still be very enjoyable, since there are no slowdowns or excessive speed-ups:

Example of a nice frame rate, but with many slowdowns or excessive speed-ups, which is probably less enjoyable than the previous example:

Example of a good frame rate, but only for half a second, because the system stalled for the other half:
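To put rough numbers on that last case, here is a small Python sketch of a hypothetical one-second capture: 50 frames at 10 ms each followed by a single 500 ms stall, compared with 50 evenly paced frames at 20 ms. The values and the `summarize_window` helper are made up for illustration, not measured.

```python
def summarize_window(frame_times_ms: list[float]) -> tuple[float, float]:
    """Return (average fps, worst frame time in ms) for one capture window (hypothetical helper)."""
    total_ms = sum(frame_times_ms)
    avg_fps = len(frame_times_ms) * 1000.0 / total_ms  # frames / total time
    worst_ms = max(frame_times_ms)                     # longest single frame
    return avg_fps, worst_ms


# Hypothetical 1-second windows (frame times in milliseconds).
stalled = [10.0] * 50 + [500.0]  # fast for half a second, then one 500 ms stall
smooth = [20.0] * 50             # evenly paced frames

print(summarize_window(stalled))  # (51.0, 500.0)
print(summarize_window(smooth))   # (50.0, 20.0)
```

The stalled run actually reports the slightly higher average, even though it freezes for half a second; only the worst frame time (or a minimum fps derived from it) exposes the stall.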

Profanity is not allowed in the tech forums.

Daveybrat

AT Moderator