William Gaatjes

LOL where do you guys get this stuff? 😀🙄😵

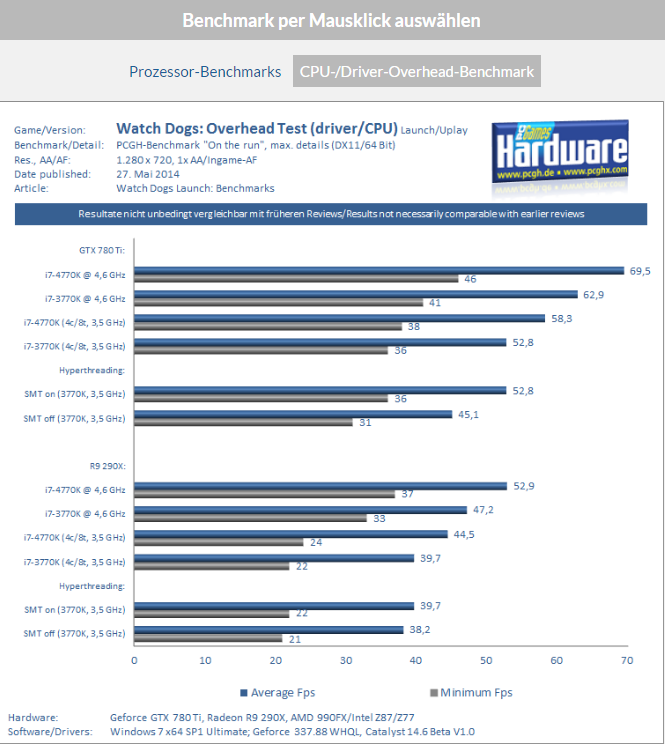

Do you just make things up as you go along? NVidia drivers scaling poorly on CPUs with more than four cores/threads, eh? Then by gosh, how do you explain this? As far back as Kepler, NVidia's drivers scale with HT enabled on a 3770K, whereas AMD's driver chokes:

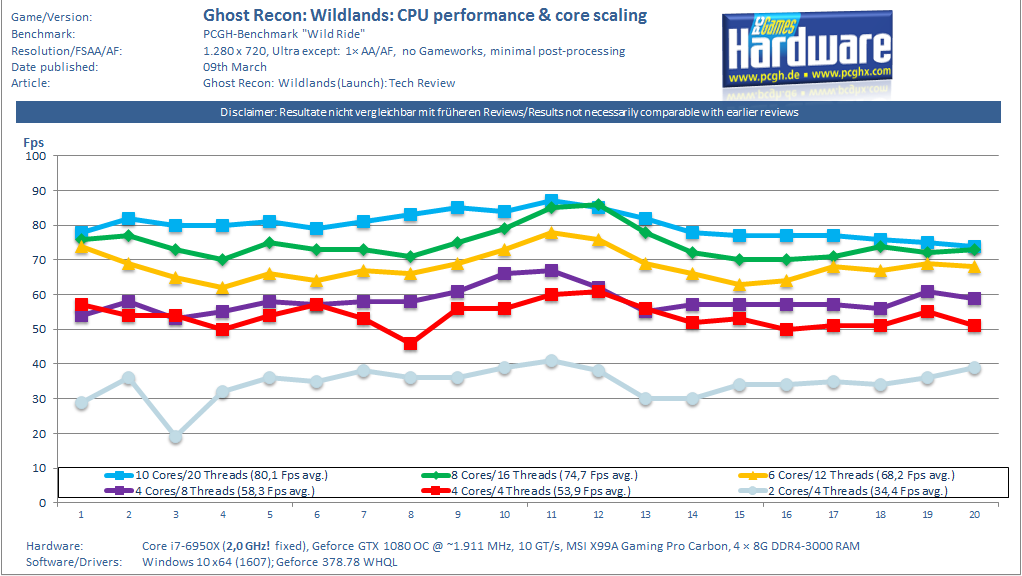

Perhaps something a bit more modern then? How about a GTX 1080 scaling all the way to 10 cores/20 threads in Ghost Recon Wildlands:

And just a few months ago, Computerbase.de did a test on CPU scaling with a Titan X Pascal, and look at what they found.

The truth is, you guys have no idea what you're talking about. NVidia's driver scales wonderfully on CPUs with high core/thread counts, and NVidia's drivers have been native 64-bit for years 🙄

Also, CPU scaling has more to do with the game itself than the drivers. If a game is programmed to only use four threads, then no amount of driver trickery will change that.

Could you post the same images for 1080p, 1440p and 4K?