CrazyElf

Member

There is no "uncore" in Ryzen.

CCX essentially operates at it's own (core speed) and the fabrics and the memory controller at half the effective MEMCLK speed.

Uncore is just the term used by CPU-Z for data fabric (DFICLK).

That's basically what I meant - unlike say, Skylake, where the CPU core and uncore are split, on Ryzen it's all the same clocks. That makes sense.

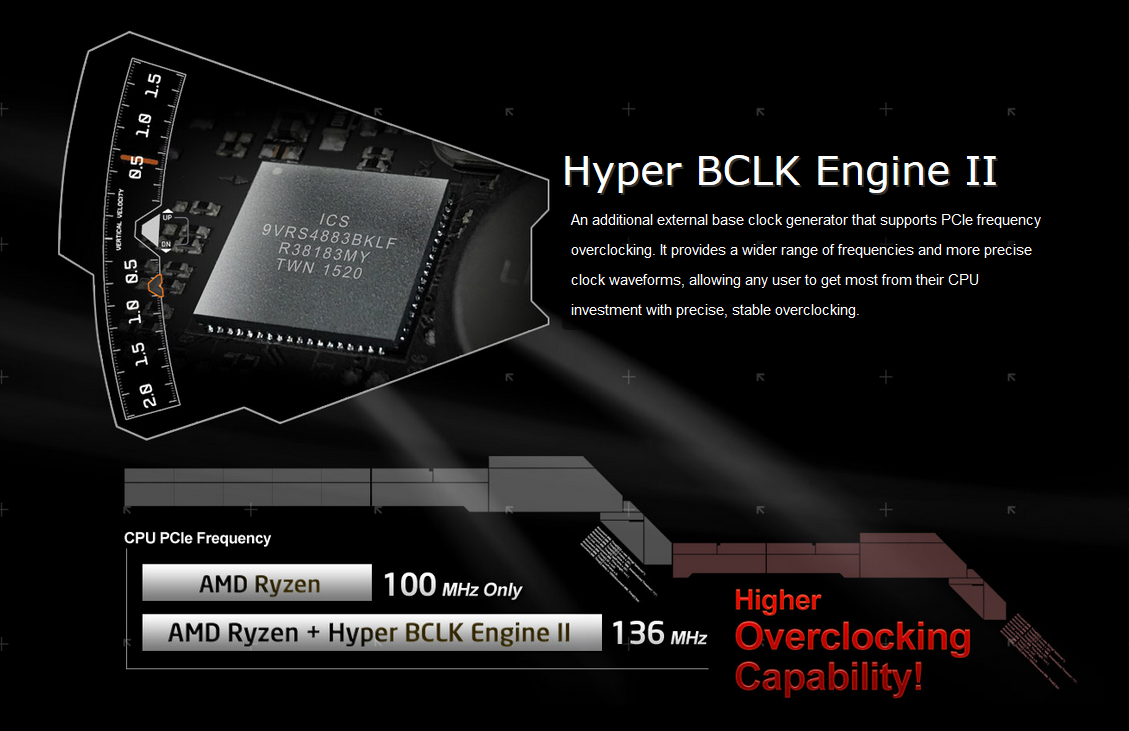

Has anyone seen these Asrock boards?

http://www.asrock.com/MB/AMD/Fatal1ty X370 Professional Gaming/index.asp

Hyper BCLK Engine II

An additional external base clock generator that supports PCIe frequency overclocking. It provides a wider range of frequencies and more precise clock waveforms, allowing any user to get most from their CPU investment with precise, stable overclocking.

They claim a 130 MHz base clock with their "engine". Any idea what that means?

Just marketing or does it actually do anything?

Edit: On Z170 boards apparently this played a role in the "SkyOC" function that allowed non-K CPUs to OC.

http://forum.asrock.com/forum_posts.asp?TID=2200&title=hyper-bclk-engine

Last edited: