poke01

Diamond Member

These won’t be available till June. What’s the point of announcing 3 months early?

BOMBSHELL EXCLUSIVE NEWS

EXCLUSIVE: Microsoft will unveil OLED Surface Pro 10 and Arm Surface Laptop 6 this spring ahead of major Windows 11 AI update

Microsoft's first AI PCs will be unveiled on March 21, ahead of next-gen Windows 11 AI features coming this fall.

www.windowscentral.com

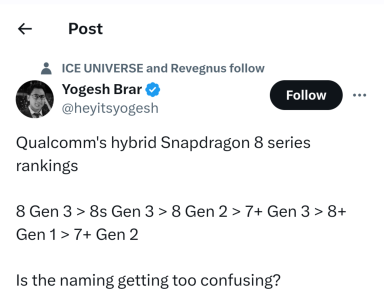

Microsoft will announce the next-gen Surface Pro and Surface Laptop with Snapdragon X Elite next month.