MrMPFR

Senior member

Yeh

But not really much over the PS5 Pro.

I think the GPU clock will be in the 2.5 GHz range.

If true, Sony gonna do PS4 form factor. 2.5 GHz on N3P will be crazy efficient.

You're right and I was wrong it seems. New pipeline allows AMD to do more with less + SE issue was overblown. -33% SE (also RB + Rasterizer) vs PS6 won't matter that much. Kepler also confirmed expanded dual issue for RDNA5, that'll help boost IPC a lot.

I expect PS6 to be comparable to AT3 / Medusa Halo

Now that AT4 dGPU should be ~9060XT according to Kepler, the 2x bigger AT3 dGPU should indeed be comparable to the PS6.

the power budgets are vastly different between 48cu AT4 vs 54 CU PS6

I'd say the 48CU AT3 would need to be ~12% higher clocked than the PS6's 54CU for them to be comparable.

Looking at how Sony bet a lot on clockspeeds for the PS5, I don't know if that will be the case.
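For what it's worth, the ~12% figure above falls out of the CU-count ratio alone. A rough sketch, assuming throughput scales linearly with CU count times clock (this ignores memory bandwidth, SE count and power limits, and `required_clock_uplift` is just an illustrative helper name, not anything from AMD or Sony):

```python
def required_clock_uplift(small_cus: int, big_cus: int) -> float:
    """Fractional clock increase the smaller GPU needs to match the bigger one.

    Assumption: throughput ~ CU count * clock, which is a simplification.
    """
    if small_cus <= 0 or big_cus <= 0:
        raise ValueError("CU counts must be positive")
    return big_cus / small_cus - 1.0


if __name__ == "__main__":
    # 48 CU part vs 54 CU part, the two counts mentioned in the thread
    uplift = required_clock_uplift(48, 54)
    print(f"48 CU needs {uplift:.1%} higher clock to match 54 CU")
```

Under that assumption the 48 CU part needs 12.5% more clock, which is where the "~12%" comes from.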

Actually, if the AT3 dGPU uses 16 channels * 24bit LPDDR6 14.4Gbps then its RAM bandwidth will be 691GB/s. If the PS6 goes with 160bit GDDR7 as currently rumored, then it's 640GB/s.

One place the PS6 will have the edge is in using GDDR7 memory. Sony has always gone for bonkers memory, so in memory-bound scenarios the PS6 should come out ahead
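The two bandwidth numbers above are just bus width times per-pin data rate, divided by 8 to go from bits to bytes. A quick sketch of that arithmetic; the 32 Gbps GDDR7 rate is an assumption, picked because it is what 640GB/s on a 160bit bus implies, and `peak_bandwidth_gbs` is a made-up helper name:

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = bus width (bits) * per-pin rate (Gbps) / 8."""
    return bus_width_bits * data_rate_gbps / 8


if __name__ == "__main__":
    # AT3 dGPU case from the thread: 16 channels x 24 bit LPDDR6 at 14.4 Gbps
    lpddr6 = peak_bandwidth_gbs(16 * 24, 14.4)
    # Rumored PS6 case: 160 bit GDDR7; 32 Gbps per pin is an assumed rate
    gddr7 = peak_bandwidth_gbs(160, 32.0)
    print(f"LPDDR6 384-bit @ 14.4 Gbps: {lpddr6:.1f} GB/s")
    print(f"GDDR7  160-bit @ 32 Gbps:   {gddr7:.1f} GB/s")
```

That gives 691.2 GB/s and 640.0 GB/s, matching the figures in the post.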

Thought PS6 was 52CUs with 2 disabled.

I'd say the 48CU AT3 would need to be ~12% higher clocked than the PS6's 54CU for them to be comparable.

Looking at how Sony bet a lot on clockspeeds for the PS5, I don't know if that will be the case.

Yeah GDDR7 gonna guzzle electrons in comparison to 384bit LPDDR6. But -370 MHz will bring power down, that's for sure.

the power budgets are vastly different between 48cu AT4 vs 54 CU PS6

One place the PS6 will have the edge is in using GDDR7 memory. Sony has always gone for bonkers memory, so in memory-bound scenarios the PS6 should come out ahead; otherwise it will be in the ballpark of AT4

LPDDR6 has ECC overhead. 256/288 x 640GB/s = 569GB/s. I don't expect it to tap out the LPDDR6 standard though, but perhaps things will change ~2-2.5 years from now. Maybe ~13Gbps. ~500GB/s.

Actually, if the AT3 dGPU uses 16 channels * 24bit LPDDR6 14.4Gbps then its RAM bandwidth will be 691GB/s. If the PS6 goes with 160bit GDDR7 as currently rumored, then it's 640GB/s.

Things will change drastically if the AT3 dGPU only uses LPDDR5X, though.
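The ECC point above is a simple scaling: only 256 of every 288 bits carry data, so usable bandwidth is raw bandwidth times 256/288. A small sketch of that, taking the 256/288 ratio from the post as given; the helper names are hypothetical and the 384bit bus is the 16 x 24bit configuration discussed earlier:

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = bus width (bits) * per-pin rate (Gbps) / 8."""
    return bus_width_bits * data_rate_gbps / 8


def usable_bandwidth_gbs(raw_gbs: float, data_bits: int = 256,
                         total_bits: int = 288) -> float:
    """Scale raw bandwidth by the data share of each transfer.

    The 256/288 default is the ratio quoted in the thread, not a value
    checked against the LPDDR6 spec here.
    """
    return raw_gbs * data_bits / total_bits


if __name__ == "__main__":
    # The calculation as written in the post
    print(f"640 GB/s raw:       {usable_bandwidth_gbs(640.0):.1f} GB/s usable")
    # Same ratio applied to a 384-bit LPDDR6 bus at two data rates
    for rate in (14.4, 13.0):
        raw = peak_bandwidth_gbs(384, rate)
        print(f"384-bit @ {rate} Gbps: {usable_bandwidth_gbs(raw):.1f} GB/s usable")
```

With the same ratio, 640GB/s raw works out to about 569GB/s, a 384bit bus at 14.4Gbps to about 614GB/s, and at 13Gbps to about 555GB/s.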

Could be. Nothing is confirmed, it's just speculation based on AMD patents and research papers.

ML upscaling in PlayStation 6 will be a huge leap forward

MLID continuing the PS6 = RDNA 5 and PS5 = RDNA 2 claims and saying there's no such thing as full RDNA5 in the latest Broken Silicon.

It's still misleading regardless of how AMD spins it. The equivalent would be that the 7600 didn't have dual issue or work graphs HW and they still called it RDNA3.

Well, he's right. AMD is the one who decides what to call RDNA2 and RDNA5, and AMD declared the PS5's iGPU is RDNA2. AMD never said the PS5 uses "RDNA1.5", and neither did they ever say "full RDNA2 is only GFX1020 and up". If AMD says the PS5 is RDNA2 and the PS5's ISA is GFX1013, then GFX1013 is RDNA2.

DP4a + XSX compute advantage makes FSR4 INT8 possible, if AMD ever decides to officially release it.

Instead, none of that happened:

- DP4a capabilities of the Xbox consoles never got used for AI upscaling because there wasn't enough throughput for it and they ended up using regular temporal scaling like the PS5;

- Hardware Variable Rate Shading Tier 2 was so restrictive that every game/engine ended up using compute shader-based solutions with better granularity;

- Sampler Feedback Streaming only ever got used in 3dmark and some RTX demo because once again the practical results didn't match the theory so modern engines adopted software virtual texturing instead.

Yeah XSX isn't very well balanced + has more bloated OS.

Games would perform neck and neck between the PS5 and the Series X, with the former even beating the latter in a couple scenarios where the additional fillrate and/or triangle output would compensate for the lower compute throughput and memory bandwidth.

No one bothered because main console didn't support any of it.

And we're past the 5 year mark of those "longer" AAA development cycles, so if we haven't seen these adopted yet, then they're just not happening. Sony made the better decisions by locking down their specs earlier and focusing more on dev tools, as well as betting on narrow+fast instead of wide+slow for cheaper SoCs in the long run.

Kepler already confirmed it's not PS5 vs XSX 2.0. For gaming it should have basically the full feature set, so any talk of missing features is splitting hairs.

That said, if MLID saw internal documents describing the PS6's iGPUs as RDNA5, then it's RDNA5 regardless of ISA.

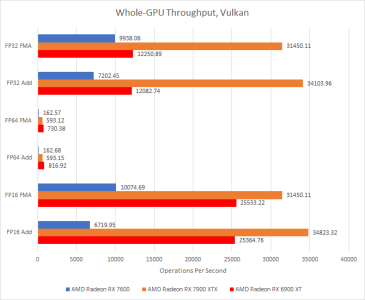

Don't know about work graphs hardware, but N33 does have dual issue:

The equivalent would be that the 7600 didn't have dual issue or work graphs HW and they still called it RDNA3.

What AMD says publicly and what they consider the PS5 to be internally are very different things. Upcoming CPUs with "Zen6LP" are another example where despite the name, it is considered "Zen5 with Zen6 ISA" internally.

Well, he's right. AMD is the one who decides what to call RDNA2 and RDNA5, and AMD declared the PS5's iGPU is RDNA2. AMD never said the PS5 uses "RDNA1.5", and neither did they ever say "full RDNA2 is only GFX1020 and up". If AMD says the PS5 is RDNA2 and the PS5's ISA is GFX1013, then GFX1013 is RDNA2.

The "full RDNA2" thing was a marketing push by Microsoft (then massively adopted by Digital Foundry and some hardcore fans at Beyond3D, NeoGAF, etc.) to paint the Xbox Series' iGPUs as "more advanced" than the PS5's so it would give the idea that multiplatform games would look substantially better or run substantially faster on the Xbox and boost its sales.

Instead, none of that happened:

- DP4a capabilities of the Xbox consoles never got used for AI upscaling because there wasn't enough throughput for it and they ended up using regular temporal scaling like the PS5;

- Hardware Variable Rate Shading Tier 2 was so restrictive that every game/engine ended up using compute shader-based solutions with better granularity;

- Sampler Feedback Streaming only ever got used in 3dmark and some RTX demo because once again the practical results didn't match the theory so modern engines adopted software virtual texturing instead.

Games would perform neck and neck between the PS5 and the Series X, with the former even beating the latter in a couple scenarios where the additional fillrate and/or triangle output would compensate for the lower compute throughput and memory bandwidth.

And we're past the 5 year mark of those "longer" AAA development cycles, so if we haven't seen these adopted yet, then they're just not happening. Sony made the better decisions by locking down their specs earlier and focusing more on dev tools, as well as betting on narrow+fast instead of wide+slow for cheaper SoCs in the long run.

That said, if MLID saw internal documents describing the PS6's iGPUs as RDNA5, then it's RDNA5 regardless of ISA.

They state 7000 series and newer in the Work graphs press releases + I know

ohhh

Upcoming CPUs with "Zen6LP" are another example where despite the name, it is considered "Zen5 with Zen6 ISA" internally.

The PS5 shows almost exactly the same performance-per-clock and relevant features as all other RDNA2 GPUs. It's a RT-enabled follow-up of RDNA1 that reaches significantly higher clocks, and the features it lacks from GFX1020+ ended up not being widely used. For all intents and purposes, what AMD said publicly seems more correct than whatever these internal discussions concluded.

What AMD says publicly and what they consider the PS5 to be internally are very different things.

If it wasn't utilized, then it's trivial. That's pretty much what defines "trivial".

Again I know PS5 has the most important stuff, but omitting mesh shaders, VRS, Sampler feedback (TSS and SFS) and DP4a isn't trivial, even if that functionality mostly hasn't been utilized.

Aren't the "LP" class of CPU cores an entirely new line developed mainly for lower leakage idle and OS tasks, though?

Upcoming CPUs with "Zen6LP" are another example where despite the name, it is considered "Zen5 with Zen6 ISA" internally.

RDNA2 doesn't have any "IPC" gains.

The PS5 shows almost exactly the same performance-per-clock

No DP4a, no VRS, no Mesh Shaders, no Task Shaders, no SFS.

relevant features as all other RDNA2 GPUs.

Why would developers use a feature that the PS5 (with >75% market share) doesn't support?

and the features it lacks from GFX1020+ ended up not being widely used.

They are derived from Zen5 though.

Aren't the "LP" class of CPU cores an entirely new line developed mainly for lower leakage idle and OS tasks, though?

Not trivial from a hardware perspective. I know adoption has been underwhelming, to put it mildly, for reasons explained by Kepler.

If it wasn't utilized, then it's trivial. That's pretty much what defines "trivial".

TSS remembers which texels have been shaded and only shades those that have been newly requested. Texels shaded and recorded can be reused to service other shade requests in the same frame, in an adjacent scene, or in a subsequent frame. By controlling the shading rate and reusing previously shaded texels, a developer can manage frame rendering times, and stay within the fixed time budget of applications like VR and AR. Developers can use the same mechanisms to lower shading rate for phenomena that are known to be low frequency, like fog. The usefulness of remembering shading results extends to vertex and compute shaders, and general computations. The TSS infrastructure can be used to remember and reuse the results of any complex computation.

Wasn't there some BS with the God of War PC port or some other Sony exclusive on PC where they stripped out all the PS4 Pro optimizations because it negatively affected Paxwell cards?

Same thing happened with AMD on desktop PCs, they were first to many things like Tessellation, Async Compute, FP16 but those weren't used until NVIDIA supported it.

Does RDNA 2 have this in HW or just some clever compiler rework?

no Task Shaders

Exactly. And for >95% of realistic use-cases, RDNA2 = RDNA1 + RT + higher clocks.

RDNA2 doesn't have any "IPC" gains

Which are mostly irrelevant because they're not used. Save for enabling DP4a XeSS on RDNA2 GPUs, neither PC nor Xbox hardware is making use of the rest.

No DP4a, no VRS, no Mesh Shaders, no Task Shaders, no SFS.

1st party Xbox developers didn't use it either and Phil Spencer, Matt Booty, Sarah Bond, etc. could've dictated they had to use these to make a difference in their hardware.

Why would developers use a feature that the PS5 (with >75% market share) doesn't support?

Microsoft is the biggest videogame publisher in the world and they couldn't mandate their own gamedevs to allocate the resources to develop a functional DP4a upscaler that runs on their ~30 million consoles?

Also devs are not going to use DP4a for consoles when XSS can't run the stuff properly, Xbox is a tiny portion of the market + PS5 doesn't support it.

If it had DP4a, then we would've seen a competent ML upscaler used on the PS5 at some point.

Yeah well MS clearly doesn't care + XSS GPU is useless and weaker than a 6500XT, RX 470/1650 territory. Would've never worked when RX 6600 struggles with FSR4 INT8.

Microsoft is the biggest videogame publisher in the world and they couldn't mandate their own gamedevs to allocate the resources to develop a functional DP4a upscaler that runs on their ~30 million consoles?

FSR4/PSSR has a magical effect in RE Requiem