igor_kavinski

Lifer

Probably installed with the Uno Reverse card, ASUS style.

Must've been unusually high quality to survive reverse polarity and not explode immediately after being turned on.

Ooh, the GB10 chip that's in the DGX Spark is claimed to be using TSMC N3 for both the CPU and GPU. Also the GPU die is derived from GB100 and not the gaming chips.

Still very lacking in the memory bandwidth tho - 301 GB/sec.

It will be interesting to see a matchup with Strix Halo.

It says the TDP is 140 W so not really a laptop chip. (Course tell that to Intel)

In 2025, even thin 13'' laptops can sustain 140 W total without throttling.

The 16'' 2 kg class can do upwards of 200 W.

It will be roughly an Nvidia L4 combined with a 20-core ARM CPU, just with the latest gen 5 CUDA cores instead of gen 4. The L4 does 30 TFLOPS FP32, while the GB10 is said to have 31 TFLOPS FP32, and they both have the same memory bandwidth. The difference is that the GB10 may have up to 128GB of unified memory (shared between the ARM CPU cores and the GPU), versus the L4's 24GB of dedicated memory.
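For a rough sense of what those numbers imply, here is a minimal back-of-the-envelope sketch. It uses only figures quoted in this thread (31 TFLOPS FP32, 301 GB/sec, up to 128GB unified memory). The function names are made up for illustration, and these are claimed peak specs, not measurements.

```python
# Back-of-the-envelope numbers for GB10, using figures quoted in the thread.
# All values are peak/paper specs as claimed above, not measurements.

GB10_FP32_TFLOPS = 31.0      # claimed FP32 throughput
GB10_BANDWIDTH_GBS = 301.0   # claimed memory bandwidth, GB/sec
GB10_MEMORY_GB = 128.0       # maximum unified memory mentioned above


def flops_per_byte(tflops: float, bandwidth_gbs: float) -> float:
    """Peak FLOPs the chip can issue per byte it can read from memory."""
    return (tflops * 1e12) / (bandwidth_gbs * 1e9)


def seconds_to_stream(size_gb: float, bandwidth_gbs: float) -> float:
    """Lower bound on the time needed to read size_gb of memory once."""
    return size_gb / bandwidth_gbs


if __name__ == "__main__":
    ratio = flops_per_byte(GB10_FP32_TFLOPS, GB10_BANDWIDTH_GBS)
    sweep = seconds_to_stream(GB10_MEMORY_GB, GB10_BANDWIDTH_GBS)
    print(f"FLOPs per byte of bandwidth: {ratio:.0f}")
    print(f"Time to read all {GB10_MEMORY_GB:.0f} GB once: {sweep:.2f} s")
```

Under these assumptions, a workload doing far fewer than about 100 FP32 operations per byte fetched would be limited by the 301 GB/sec rather than by the 31 TFLOPS, which is the sense in which the bandwidth looks lacking.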

looks like Sh*t with on

I only see video compression artifacts that likely smooth out the finer detail. Without it, the hair seems to be less dense, so compression doesn't hurt as much.

A company trying to find ways to justify the high price tag of its overpriced unreliable GPU with buggy drivers. Yeah guys. Keep enjoying staring at the hair instead of actually playing the game and oh, don't worry if you smell something weird. It's part of the experience. If something burns, take it as an opportunity to reward this company for making more innovative products and buy the GPU again. Remember, you need to trust them and keep letting them try, try again. One day they will make a true banger of a product with zero issues!

Agreed. The only reason I own a 5090 is because its purchase is justifiable as AI processing hardware. There's no such alternative from AMD, unfortunately.

That's true. AMD can't even be bothered to do Vulkan properly for RDNA4. It's not detected in LM Studio, yet both Intel Arc and Nvidia cards (even the 1080 Ti) are detected by LM Studio without issue.

I didn’t see a Rubin thread, so I’ll post this here:

NVIDIA Rubin CPX Accelerates Inference Performance and Efficiency for 1M+ Token Context Workloads | NVIDIA Technical Blog

Inference has emerged as the new frontier of complexity in AI. Modern models are evolving into agentic systems capable of multi-step reasoning, persistent memory, and long-horizon context, enabling…

developer.nvidia.com

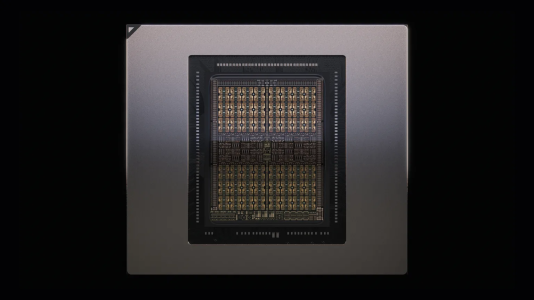

It looks like Nvidia are pre-announcing what I’m guessing is the professional version of what will likely be the same die as the RTX 6090, especially since it employs GDDR7 memory. I also count what appears to be a 512-bit memory interface:

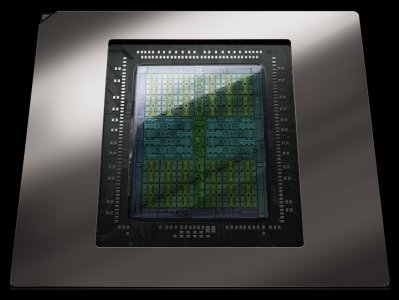

288 SMs? Looks like an additional row on each side vs GB202.

View attachment 129893

So this is a rasterizer going close to reticle size limit like GB202, but on N3P?

Yeah.

So we're likely looking at a ~40% to 60% uplift gen-on-gen. Yes, I understand that there will likely be clock speed improvements due to N3 FinFlex, but performance also doesn't scale perfectly with SMs.
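One way a range like that could come about, as a rough sketch only. The 288 SM count is the one suggested in this thread, 192 SMs is the full GB202 die, and `scaling_efficiency` and `clock_ratio` are made-up knobs for illustration, not anything Nvidia has stated.

```python
def estimated_uplift(new_sms: int, old_sms: int,
                     scaling_efficiency: float = 1.0,
                     clock_ratio: float = 1.0) -> float:
    """Fractional performance uplift from a larger SM count.

    scaling_efficiency: hypothetical fraction of the extra SMs that turns
        into real performance (1.0 means perfect scaling).
    clock_ratio: hypothetical new/old clock speed ratio.
    """
    sm_ratio = new_sms / old_sms
    # Only part of the additional SMs is assumed to show up as performance.
    effective_ratio = 1.0 + (sm_ratio - 1.0) * scaling_efficiency
    return effective_ratio * clock_ratio - 1.0


if __name__ == "__main__":
    NEW_DIE_SMS = 288   # count suggested in the thread
    GB202_SMS = 192     # full GB202 die

    # Assumed: 80% SM scaling efficiency, no clock gain.
    pessimistic = estimated_uplift(NEW_DIE_SMS, GB202_SMS,
                                   scaling_efficiency=0.8)
    # Assumed: perfect SM scaling plus a 7% clock bump.
    optimistic = estimated_uplift(NEW_DIE_SMS, GB202_SMS, clock_ratio=1.07)
    print(f"pessimistic: {pessimistic:.1%}, optimistic: {optimistic:.1%}")
```

With those assumed inputs the estimate lands at roughly 40% on the low end and roughly 60% on the high end. The comparison is full die against full die; shipping products with disabled SMs would shift the numbers.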

That's been especially the problem with lotsa SM parts from NV.

I bet it won't prevent it from being the best selling Nvidia flagship, courtesy of some new exclusive feature that Nvidia's developers are secretly inserting into future games as we speak.

They gotta work that out or this will be the most useless gaming toy in eons.

Features are whatever, just that SM scaling might be bad outside of doing GEMM.