BFG10K


NVIDIA GeForce RTX 3070 Founders Edition Review - Disruptive Price-Performance

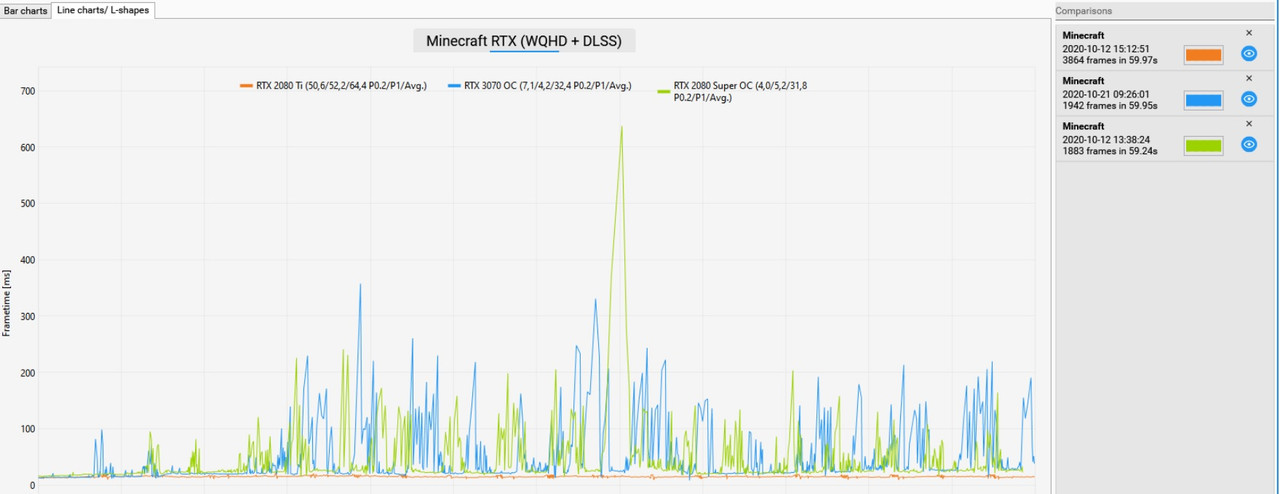

NVIDIA's GeForce RTX 3070 is faster than the RTX 2080 Ti, for $500. The new FE cooler is greatly improved, runs very quietly, and even has fan stop. In our RTX 3070 Founders Edition review, we'll also take a look at RTX performance, energy efficiency, frametimes, and overclocking.

Nvidia GeForce RTX 3070 Review

The Nvidia GeForce RTX 3070 is the new entry point into Ampere at 500 euros. In testing, it runs neck and neck with the RTX 2080 Ti.

Nvidia GeForce RTX 3070 Founders Edition Review: Taking on Turing's Best at $499

Fast and efficient, the RTX 3070 basically matches the previous gen 2080 Ti at less than half the cost.

I'm DONE covering for NVIDIA - RTX 3070 Review

Learn more about the MSI MAG B550 TOMAHAWK Motherboard on Amazon at https://geni.us/sxc6m or Newegg at https://geni.us/fudnna Save 10% and Free Worldwide Shi...

VRAM test proving 8GB isn't enough in Minecraft and Wolfenstein: https://www.pcgameshardware.de/Gefo...747/Tests/8-GB-vs-16-GB-Benchmarks-1360672/2/

The 3070 is the first card that actually interests me from the Ampere line.
