It doesn't really work like that. It isn't a case of programming for specific numbers of cores. It's a case of writing multi-threaded code where applicable.

Modern software will query the OS for the CPU count. When there is work that can be done in parallel, it will essentially spawn a number of threads to split that work, depending on how many cores are deemed available.
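As a rough sketch of that pattern (not how any particular game engine does it), here is what "ask the OS, then split the work" can look like in Python. `split_into_chunks`, `move_units` and `update_all` are made-up names for illustration, and the per-unit work is a placeholder. In CPython, threads won't actually speed up CPU-bound work like this, so treat it as showing the structure only.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, n_chunks):
    """Split items into n_chunks contiguous slices of nearly equal size."""
    size, extra = divmod(len(items), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def move_units(chunk):
    # Hypothetical per-unit work: advance each position by one step.
    return [pos + 1 for pos in chunk]


def update_all(units, workers=None):
    # os.cpu_count() may return None, so fall back to a single worker.
    workers = workers or os.cpu_count() or 1
    chunks = split_into_chunks(units, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() keeps the chunk order, so results line up with the input.
        results = list(pool.map(move_units, chunks))
    return [pos for chunk in results for pos in chunk]
```

Note the code never hard-codes a core count: the same program hands out bigger or smaller chunks depending on what the machine reports.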

Most games in the foreseeable future are not going to see a huge performance boost from very high core counts, because most gaming problems are not highly parallel problems.

Let's look at an exception, a game that is mainly built around a highly parallel problem: Ashes of the Singularity. This is not a harbinger of things to come, nor is it a case of programmers being ahead of their time. It's just a more unusual problem space than most games have.

AotS is basically about moving around thousands of independent units in parallel. It's as perfect a parallel gaming problem as you will get.

Take 3200 units on 16 cores: each core can work on moving 200 units.

It's the most parallel gaming workload on the market, and even it seems to stall out at high core counts. Why is that? Two things:

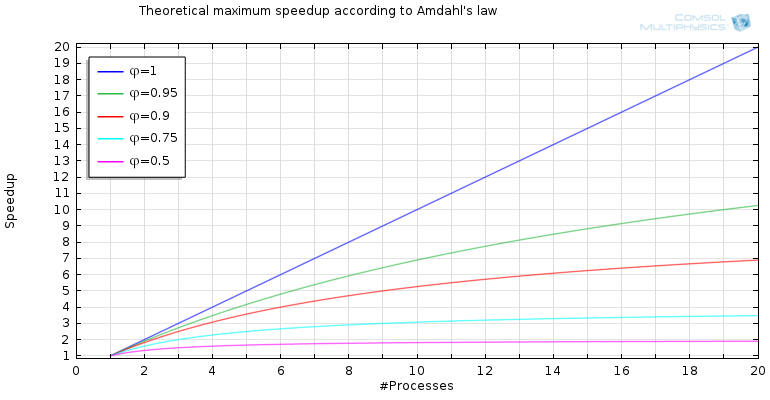

Bottlenecks and Amdahl's Law. The GPU will usually be a serious bottleneck, but even when it isn't, you have Amdahl's Law to contend with. Most games, IMO, will likely remain 50% parallel at most, meaning you will see some gains (but not 2X) moving from 4 cores to 8, but the gains from 8 to 16 will be insignificant.

I doubt even the most parallel games like AotS will get above 75% parallel overall, so gains will still be slight. 50-75% parallel are the bottom pink and blue lines on the Amdahl's Law speedup graph.
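To put rough numbers on that: Amdahl's Law says the speedup on n cores is 1 / ((1 - p) + p / n), where p is the fraction of the work that can run in parallel. A quick sketch, with the 50% and 75% figures being my guesses from above rather than measurements of any game (`amdahl_speedup` is just an illustrative name):

```python
def amdahl_speedup(parallel_fraction, cores):
    """Theoretical speedup over one core under Amdahl's Law."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


for p in (0.50, 0.75):
    row = ", ".join(
        f"{n} cores: {amdahl_speedup(p, n):.2f}x" for n in (4, 8, 16)
    )
    print(f"{int(p * 100)}% parallel -> {row}")

# 50% parallel -> 4 cores: 1.60x, 8 cores: 1.78x, 16 cores: 1.88x
# 75% parallel -> 4 cores: 2.29x, 8 cores: 2.91x, 16 cores: 3.37x
```

At 50% parallel the speedup can never reach 2x no matter how many cores you add, and at 75% it can never reach 4x. Going from 8 to 16 cores at 50% parallel is worth about 6%.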

Really, it's diminishing returns after quad cores, and that isn't because programmers were coding for quad cores. It's the nature of mixed serial/parallel problem spaces.