
News Intel GPUs - we've given up on B770, where's Celestial already

Page 47 (AnandTech forums)

DrMrLordX

Lifer

Don't think it mattered at this point.

Depends on whether or not DG2/ARC's drivers are similar to the current iGPU drivers. If so, mining will be a mess on Arc until developers figure out how to make it work.

So, who's planning on aping into the Intel hype train, just like me, and buying the hopium they sell?

Who's planning on building a profanum of a PC: AMD CPU and Intel dGPU?

If the price is right and the performance is there, I might consider it. My Radeon VII is over 2 years old now. I would eventually like a replacement. A 6900XT (or its successor) would be preferable, but getting one is too difficult/expensive for me to even want to consider it right now. I'll be looking whenever Raphael goes on sale.

Glo.

Diamond Member

Current speculation/rumors says that DG2 is anywhere between RTX 3060 and RTX 3070 Ti.

LOL.

Well, we can make some assumptions.

- 512 EUs (4096 ALUs), 128 ROPs, 256-bit bus
- 384 EUs (3072 ALUs), 96 ROPs, 192-bit bus
- 256 EUs (2048 ALUs), 64 ROPs, 128-bit bus
- 128 EUs (1024 ALUs), 32 ROPs, 64-bit bus

Let's assume per-ALU performance parity with Turing, not Ampere.

2048 ALUs would give RTX 2060 Super performance, or better (RTX 3060).

3072 ALUs would give RTX 2080 Super performance, or better (with higher clock speeds).

4096 ALUs would land slightly below RTX 2080 Ti performance, which lines up directly with the rumored performance numbers.

So the performance might be there.
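The scaling above follows fixed ratios: 8 ALUs per EU, one ROP per 4 EUs, and one bit of memory bus per 2 EUs. A minimal Python sketch of that arithmetic, assuming (as the post does) per-ALU parity with Turing at equal clocks. The Turing figures are the cards' shader (CUDA core) counts; the function names are hypothetical.

```python
# Hypothetical sketch of the DG2 scaling arithmetic from the post.
# Ratios inferred from the listed configs: 8 ALUs per EU,
# 1 ROP per 4 EUs, and 1 bit of memory bus per 2 EUs.
ALUS_PER_EU = 8
EUS_PER_ROP = 4
EUS_PER_BUS_BIT = 2

# Turing shader (CUDA core) counts used as comparison points.
TURING_ALUS = {
    "RTX 2060 Super": 2176,
    "RTX 2080 Super": 3072,
    "RTX 2080 Ti": 4352,
}


def dg2_config(eus: int) -> dict:
    """Derive ALUs, ROPs and bus width (bits) from an EU count."""
    return {
        "eus": eus,
        "alus": eus * ALUS_PER_EU,
        "rops": eus // EUS_PER_ROP,
        "bus_bits": eus // EUS_PER_BUS_BIT,
    }


def relative_to_turing(alus: int, card: str) -> float:
    """ALU ratio vs. a Turing card, assuming per-ALU parity at equal clocks."""
    return alus / TURING_ALUS[card]


if __name__ == "__main__":
    for eus, card in [(256, "RTX 2060 Super"), (384, "RTX 2080 Super"), (512, "RTX 2080 Ti")]:
        cfg = dg2_config(eus)
        ratio = relative_to_turing(cfg["alus"], card)
        print(f"{eus} EUs -> {cfg['alus']} ALUs, {cfg['rops']} ROPs, "
              f"{cfg['bus_bits']}-bit bus, {ratio:.2f}x {card}")
```

At equal clocks the 256 and 512 EU configurations come out at about 0.94x the RTX 2060 Super and RTX 2080 Ti respectively, while the 384 EU part matches the RTX 2080 Super's shader count exactly. Any "or better" therefore has to come from higher clock speeds.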

Rumors also suggest these GPUs will be cheap, because Intel wants to make a splash entering the market by flooding it with great-value GPUs.

P.S. I would not be surprised to see CPU+GPU+motherboard bundles from Intel.

That would make it possible to get GPUs at MSRP...


PingSpike

Lifer

So, who's planning on aping into the Intel hype train, just like me, and buying the hopium they sell?

Who's planning on building a profanum of a PC: AMD CPU and Intel dGPU?

🙂

I was actually on board with the profanum PC for my server. But since then Intel has abandoned GVT-g, and DG1 was locked to a subset of Intel-only motherboards, so the idea died. So it's more of a curiosity for me than anything else at this point. If the cards are really cheap, though, I'll reassess.

Jokes aside, how's the current state of crypto mining algorithms on Intel GPUs?

Still doesn't work, at all?

First off, the miner has to target the Intel OpenCL platform, and no miner does. The only reason mining is possible on AMD iGPUs is that they share the same OpenCL platform as AMD's discrete GPUs. Even then, it does not work with every miner.

As I understand it, only ethminer works with Intel iGPUs, and it still needs some workarounds to make it work.
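To illustrate the platform point: an OpenCL miner picks devices by platform, so a GPU exposed only through a platform the miner never targets is simply invisible to it. The sketch below is hypothetical. The vendor filter, class and device names are made up for illustration rather than taken from any real miner, and the platform list is plain data instead of a query against an OpenCL runtime.

```python
# Hypothetical miner-side device selection. Platform/device data is
# made up for illustration; a real miner would query the OpenCL runtime.
from dataclasses import dataclass, field
from typing import List

# Vendor-string prefixes this hypothetical miner accepts (Intel is absent).
SUPPORTED_VENDORS = ("Advanced Micro Devices", "NVIDIA")


@dataclass
class Platform:
    vendor: str
    devices: List[str] = field(default_factory=list)


def select_devices(platforms: List[Platform]) -> List[str]:
    """Return devices from platforms whose vendor the miner recognizes."""
    selected = []
    for platform in platforms:
        # str.startswith accepts a tuple of prefixes.
        if platform.vendor.startswith(SUPPORTED_VENDORS):
            selected.extend(platform.devices)
    return selected


if __name__ == "__main__":
    system = [
        # An AMD iGPU sits on the same platform as AMD dGPUs,
        # so it gets picked up "for free".
        Platform("Advanced Micro Devices, Inc.", ["AMD dGPU", "AMD iGPU"]),
        # Intel GPUs live on a separate platform the miner never targets.
        Platform("Intel(R) Corporation", ["Intel iGPU", "Intel dGPU"]),
    ]
    print(select_devices(system))  # ['AMD dGPU', 'AMD iGPU']
```

Accepting the Intel platform in the filter would only be the first step. As the post notes, the kernels themselves may still need workarounds before they run properly on Intel hardware.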

Intel can't really make a splash if they need Samsung or TSMC to fabricate their GPUs. They'd still compete for wafers with AMD and NVIDIA, among other companies needing fab time and capacity. On the other hand, your conjecture about bundle deals makes a lot more sense and would incentivize purchases of their Alder Lake hardware. No idea what their AIB partners will be like, if they have any, but I can see bundle deals being the best way to get product moving and make a dent in AMD's and NVIDIA's share. This all depends on Arc being good and, well, 12th-gen Alder Lake not being a steaming pile of doo.


darkswordsman17

Lifer

Ian Cutress making his own YouTube channel and not cross-posting on AT speaks for itself on how far AnandTech has gone down, minus the forums.

FTFY.

He seems more interested in doing fluff interviews and posting on Twitter anyway, so it's not exactly like he's putting out quality content that trounces what's showing up on AT proper.

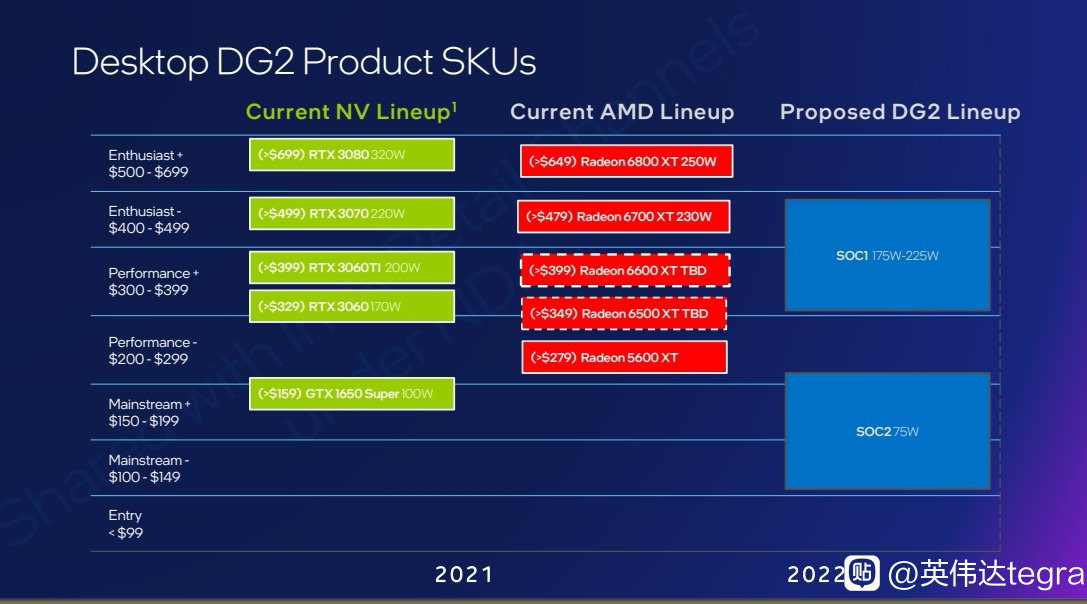

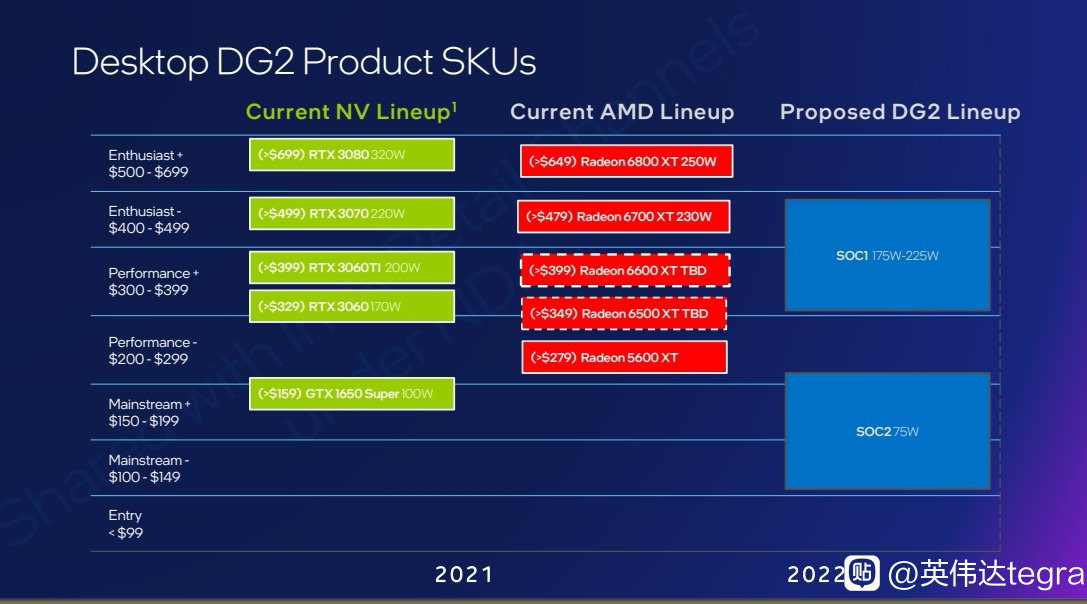

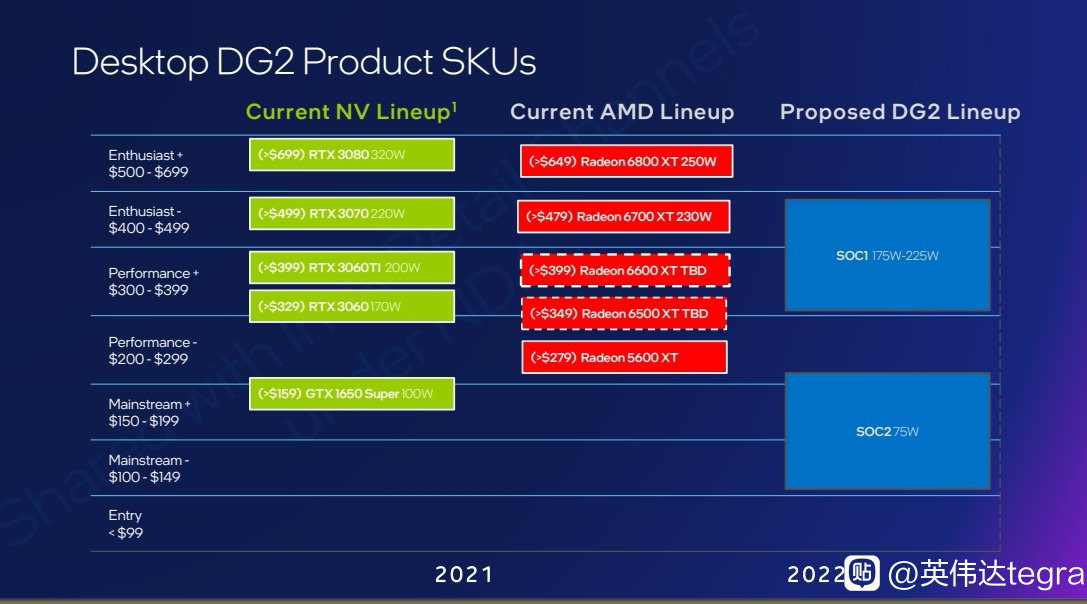

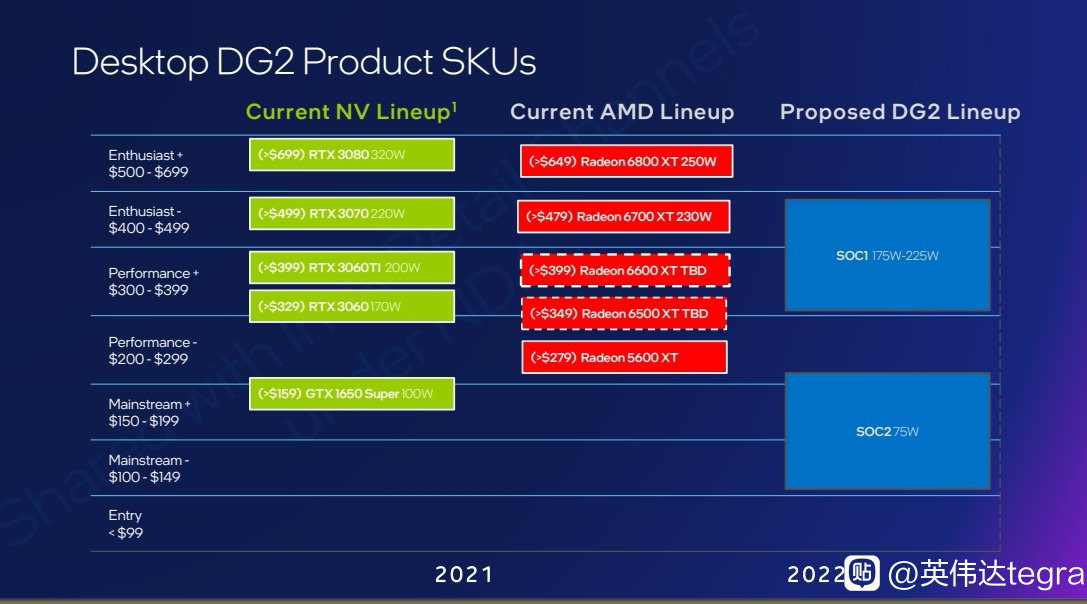

Intel seems to expect they can compete up to RTX 3070/6700XT with their fastest SKU.

videocardz.com


It's also nice to see they are targeting the low end with a 75 W SKU, which is probably the 128 EU version. VideoCardz says it's either DG2-384 or DG2-256, but I don't think so. There are only two dies as far as we know, 512 and 128 EUs, which I believe is what they mean by SOC1 and SOC2, so it makes more sense for Intel to use the smaller die here rather than a cut-down 512 EU die.

Leaked slide shows Intel DG2 (Arc Alchemist) GPUs compete with GeForce RTX 3070 and Radeon RX 6700XT - VideoCardz.com

Intel Arc Alchemist to compete in 100 – 500 USD price range Intel DG2 GPUs are set to compete with NVIDIA’s upper mid-range segment officially, according to this leaked slide. Should the slide be real, Intel is clearly not planning to compete with NVIDIA GA102 and AMD Navi 21 GPUs in terms of...


DisEnchantment

Golden Member

FTFY: He seems more interested in doing fluff interviews and ~~posting~~ debating on Twitter anyway, so it's not exactly like he's putting out quality content that trounces what's showing up on AT proper.

What I find awkward, and in my opinion unprofessional, is mentioning and involving corporate accounts and individuals in all this debate on Twitter.

The only reason I still read AnandTech is that there are no whispy-whispy rumor garbage articles on their main site, and I applaud them for that.

Patrick from STH maintains much more restrained behaviour when posting on Twitter.

Maintaining an objective attitude is what journalism at AnandTech used to be about.

psolord

Platinum Member

I like this graph because it's RGB! xD

Intel seems to expect they can compete up to RTX 3070/6700XT with their fastest SKU.

Leaked slide shows Intel DG2 (Arc Alchemist) GPUs compete with GeForce RTX 3070 and Radeon RX 6700XT - VideoCardz.com

Intel Arc Alchemist to compete in 100 – 500 USD price range Intel DG2 GPUs are set to compete with NVIDIA’s upper mid-range segment officially, according to this leaked slide. Should the slide be real, Intel is clearly not planning to compete with NVIDIA GA102 and AMD Navi 21 GPUs in terms of...

videocardz.com

It's also nice to see they are targeting the low end with a 75 W SKU, which is probably the 128 EU version. VideoCardz says it's either DG2-384 or DG2-256, but I don't think so. There are only two dies as far as we know, 512 and 128 EUs, which I believe is what they mean by SOC1 and SOC2, so it makes more sense for Intel to use the smaller die here rather than a cut-down 512 EU die.


If the TDP numbers are accurate, the parts look good. However, knowing Intel, power consumption will moon into a 450W PL2, causing numerous complaints from everyone…

LightningZ71

Platinum Member

I think that Intel is missing an opportunity for product synergy here. They have the perfect product to pair with their dGPUs, Optane!

Hear me out: we know that putting a ton of very high-speed RAM on graphics cards gets expensive quickly, in both cost and power draw. Why not enhance their product with something they already have but can't seem to find a good market for? If they integrated a few Optane chips onto their cards, they could have a pool of 64+ GB of second-class VRAM to use as "near" storage for their cards. This would allow the massive levels in modern games to be preloaded before game execution and drawn from directly by the GPU during gameplay.

This is not without precedent. AMD did something similar with two cards that integrated SSDs to provide massive VRAM buffers for professional applications. The main drawbacks of that approach are the SSDs' response time and the fact that they are subject to rapid wear. Optane improves on both of those issues, with a very quick (for an SSD) response time AND an extremely high endurance rating. Its main hang-ups are cost per GB and relatively low density. Since we're only talking about 64-128 GB, the cost won't be extreme, and modern Optane chips are dense enough to meet that need with very few packages.

I'm not proposing that the GPU render directly from the Optane; I'm suggesting it could be used to rapidly swap chunks of data in and out of the card's VRAM without having to go out over the PC's PCIe bus. Instead, it can use its own private bus to the Optane, with zero contention and rapid setup times.

It seems like it could be a decent match.
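The proposal amounts to a two-tier memory hierarchy on the card. A minimal sketch, assuming VRAM behaves as a least-recently-used (LRU) cache in front of an Optane tier that is preloaded before play, and ignoring real capacities, latencies and driver behaviour. All names here are hypothetical; capacities are abstract "chunks".

```python
# Hypothetical model: VRAM as an LRU cache in front of a preloaded
# on-card "near storage" tier (Optane in the proposal). Misses are served
# over the card's private link, never the host PCIe bus, in this model.
from collections import OrderedDict


class TwoTierMemory:
    def __init__(self, vram_chunks: int, near_storage: dict):
        self.vram_chunks = vram_chunks      # VRAM capacity in chunks
        self.near_storage = near_storage    # chunk id -> data, preloaded
        self.vram = OrderedDict()           # chunk id -> data, LRU order
        self.hits = 0
        self.near_fetches = 0               # reads over the private link

    def read(self, chunk_id):
        if chunk_id in self.vram:
            self.vram.move_to_end(chunk_id)  # mark most recently used
            self.hits += 1
            return self.vram[chunk_id]
        # Miss: pull the chunk from near storage, evicting the LRU chunk if full.
        data = self.near_storage[chunk_id]
        self.near_fetches += 1
        if len(self.vram) >= self.vram_chunks:
            self.vram.popitem(last=False)
        self.vram[chunk_id] = data
        return data


if __name__ == "__main__":
    level = {i: f"level-chunk-{i}" for i in range(8)}  # preloaded before play
    mem = TwoTierMemory(vram_chunks=2, near_storage=level)
    for chunk in [0, 1, 0, 2, 0, 1]:
        mem.read(chunk)
    print(mem.hits, mem.near_fetches)  # 2 4
```

With two VRAM slots, the access pattern 0, 1, 0, 2, 0, 1 gives 2 VRAM hits and 4 fetches from near storage. The driver work NTMBK alludes to would sit in deciding what gets preloaded and when to evict.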


NTMBK

Lifer


Sounds like a bit of a driver nightmare...

There is no PL2 or short boost on a graphics card; it wouldn't make sense. You want FPS consistency while gaming, and nobody wants big FPS drops after 60 seconds. Game tests run a lot longer than a Cinebench run. Accordingly, the drops on AMD/NVIDIA cards are very small.

I wasn’t implying that there was. It was a poor attempt at a joke.
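The point about test length can be shown with a toy model. This is a deliberately simplified sketch. It assumes a hard step from PL2 down to PL1 once a fixed window expires, whereas Intel CPUs actually track a running average of power over a time constant (tau). It also assumes FPS scales linearly with power. All numbers are illustrative apart from the 60-second window taken from the post.

```python
# Toy model of a CPU-style PL2 boost window applied to a GPU.
# Assumptions: hard PL2 -> PL1 cutoff (not Intel's running average)
# and FPS proportional to the power budget. Numbers are illustrative.
def power_limit(t_seconds: float, pl1: float, pl2: float, window: float) -> float:
    """Power budget in watts at time t under a hard-cutoff boost window."""
    return pl2 if t_seconds < window else pl1


def fps_trace(duration: int, pl1: float, pl2: float, window: float,
              fps_per_watt: float) -> list:
    """Per-second FPS, assuming FPS is proportional to the power budget."""
    return [power_limit(t, pl1, pl2, window) * fps_per_watt for t in range(duration)]


def average(values: list) -> float:
    return sum(values) / len(values)


if __name__ == "__main__":
    trace = fps_trace(duration=120, pl1=200, pl2=300, window=60, fps_per_watt=0.5)
    # A short, Cinebench-length run vs. a longer game session.
    print(average(trace[:30]), average(trace))  # 150.0 125.0
```

A 30-second run only ever sees the boosted 150 FPS, while a 120-second session averages 125 FPS because of the drop at the 60-second mark. That gap is why a short boost window would make game results inconsistent.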

I like this graph because it's RGB! xD

RGB now stands for:

Red = Radeon

Green = GeForce

Blue = Intel Blue

I think Intel missed a trick by not giving their cards a name starting with B.

Ian Cutress making his own YouTube channel and not cross-posting on AT speaks for itself on how far AnandTech has gone down, minus the forums.

Uh, perhaps you haven't noticed, but the forums have plummeted over the past 10 years. We used to have professionals in CPU/GPU design, process development, etc. For various reasons, they have moved on (except DMENS, but he mostly snipes at Intel without giving much detail).

DrMrLordX

Lifer

We used to have professionals in CPU/GPU design, process development, etc. For various reasons, they have moved on (except DMENS, but he mostly snipes at Intel without giving much detail).

Some still exist, though I think most folks play their cards close to their chests now.

NTMBK

Lifer

Uh, perhaps you haven't noticed, but the forums have plummeted over the past 10 years.

Looks nervously at own join date

moonbogg

Lifer

Uh, perhaps you haven't noticed, but the forums have plummeted over the past 10 years. We used to have professionals in CPU/GPU design, process development, etc. For various reasons, they have moved on (except DMENS, but he mostly snipes at Intel without giving much detail).

If the GPU situation continues to decline (for gamers, that is), then tech forums will basically die. It will become such a niche it won't even be funny. Losing GPUs means removing the entertainment element from PC hardware. There will be nothing to read or talk about then.

LightningZ71

Platinum Member

I've reached the point where I believe the GPU market is about to turn into what the ASIC market is for Bitcoin. Essentially, manufacturers will only bother catering to miners, since that's where the bulk of their volume goes, without even having to market. Why would you bother catering to gamers, who demand warranty service, BIOS fixes, etc., when you can make easy bulk sales to miners?

DrMrLordX

Lifer


GPU mining is not a stable growth market. Gaming is.

LightningZ71

Platinum Member

When has a modern company EVER played the long game on ANYTHING? There is a boatload of short-term dollars to be made by catering to miners. The conversion costs to move between mining cards and gaming cards are minimal.

gdansk

Diamond Member

Both GPU companies have been badly burned by excess mining inventory before. In its last quarterly report, AMD said it is not heavily "exposed" to crypto. The word choice is telling: they see it as a risk.

Given that environment, I don't see them ever designing their actual GPUs for mining. Even being bad at it is a selling point for NVIDIA's current LHR cards.


LightningZ71

Platinum Member

Oh, I don't think AMD, NVIDIA or Intel will be designing a purpose-built GPU SPECIFICALLY for mining any time soon. They might tweak an existing design for the purpose or, as NVIDIA has done, regurgitate past-generation silicon with new BIOS settings for mining. The ONLY way I see them going that route is if someone engages AMD's semi-custom design services, uses Intel's foundry services, or just flat-out commissions a design from NVIDIA.

My point is, for example, consider the possibility of a mining consortium buying a company like EVGA, or at least its AIB division, and then using that business unit to custom-design cards and BIOS programs that exploit existing GPU silicon specifically for mining. This could entail disabling unneeded sections of the chips in the BIOS, changing the memory timings to favor mining, and designing the cards for 24/7/365 maximum throughput with no extra resources put toward video outputs, flashy board designs, etc.

