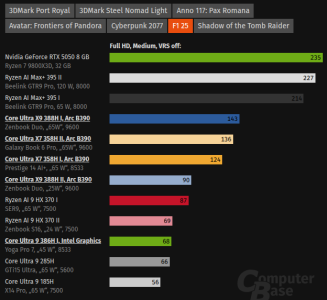

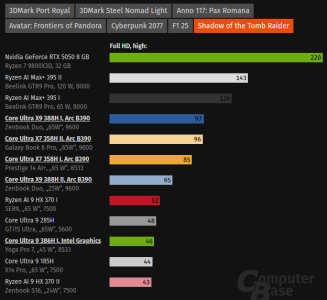

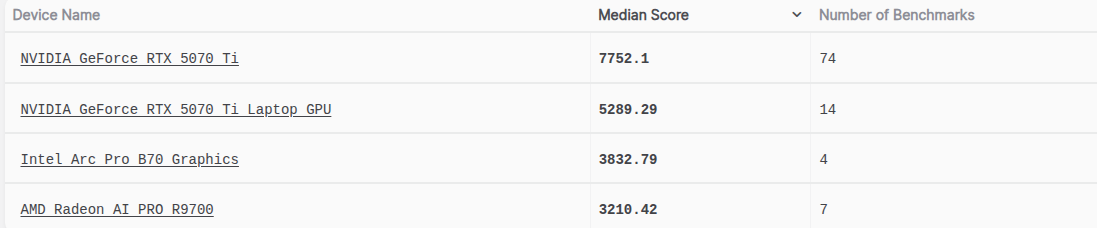

A hypothetical gaming version (B770?) with a bit more thermal headroom (and possibly missing game-specific optimizations for G31?) would probably land around or somewhat above the RTX 5060 Ti on average, especially in raster. It was never that exciting a prospect even from a gaming/consumer PoV (the B580 at least got 12GB of VRAM for its price range). It could only have made some sense in early 2025, or by H1 2025 at the latest. The website has some gaming results.

Roughly 50% faster than the B60. That makes it clearer why they didn't make a B770: that's below 5060 Ti level, so they would have had to sell it at $350 maximum, ideally $300. Even before the AI slop inflation of memory prices, it wouldn't have made sense for Intel.

Then there are Battlemage's CPU-bottleneck issues, some games being less optimized for Intel's platform, and DLSS 4/4.5 being better than XeSS in both quality and in-game availability. In many cases it wouldn't have been as clear a choice over a 16GB RX 9060 XT or RTX 5060 Ti as the B580 was over 8GB cards like the RTX 4060, RTX 5050, or RX 7600.

For over a year now, it has seemed that the next dGPUs after the BMG series would use newer IP than Xe3, with real volume planned for sometime in 2027 (or maybe late 2026, but that's doubtful).

Let me think: they wasted their time on a refresh, and their dGPU is behind their iGPU, so optimistically they could have done a B770 in early 2025. Then a C770 could have come by mid-2026.

If memory pricing doesn't ease enough before 2028, they might not do another gaming dGPU until then (assuming they still want to do dGPUs at all), and if they do, it will use Xe3P or possibly newer IP.

The partnership might be primarily on the DC side, though the scope might be far from final. Even if Intel hypothetically abandoned dGPUs and AI accelerators, why would they necessarily need Nvidia for their iGPUs? In such a scenario they could keep a smaller team for just the iGPU and graphics IP.

For me it's hard to see how staying in an extremely niche market would benefit Nvidia, so if the partnership becomes long-term, I can't see any outcome other than the Xe team being replaced entirely.

Nvidia isn't abandoning its Arm CPU roadmaps in either DC (Vera -> Rosa and so on) or consumer (N1/GB10 and successors) for the partnership, nor has it officially committed to using Intel's process nodes for any of its products yet. With Intel's management anything is possible, but abandoning GPU IP completely seems beyond stupid.

There are some rumors about Titan Lake using Nvidia "iGPUs", but that's most likely a misinterpretation of Serpent Lake (a Halo APU with an Nvidia GPU) being moved out of the Titan Lake series into its own separate line.

iGPUs are happening, and even a Halo part (RZL-AX) could come. Laptop dGPUs took a heavy blow with the Alchemist mobile dGPUs, which had too many things missing and broken. But laptop dGPUs have been Nvidia-only territory for some time anyway.

If Intel does well with its own IP, then the question is: why not expand everywhere people are trying to shoehorn in niche Nvidia parts? If they have a class-leading part, why not Xe in laptops and Halo APUs? Being uncompetitive in perf/W is why they don't have a laptop variant anymore.
