I think they have AVX2 VNNI instructions supported by Gracemont.

Cheers mate. Heard it on a podcast in passing whilst driving and couldn't find much info other than listings on sellers' sites.

GNA is its own separate accelerator. Though seems kind of pointless once they add MTL's VPU. Wonder if they'll end up dropping it.

Discussion Intel current and future Lakes & Rapids thread

Page 808

What a strange question! They're bizarre animals with massive elongated teeth, fat and slow, but in a show of aggression they will likely hurt you if they want to, never mind the silly bristle-brush mustaches they all have. Dreadful animals. Seen plenty of beached walruses before; they die and stink to the heavens. Their only use would be to render their fat for burning and their meat to be used in animal feed.

Waifu is NOT a Wife.

One stays eternally beautiful, doesn't blow up like a balloon after its first child, and will never ask you for anything.

Anyhow, Waifu culture is the same thing as giving toys female names, like boats and cars.

Hell, my Tesla's name is Nadeshiko even, which translates to perfect wife, or at least it started that way, when I was all into Teslas.

A what? He said Walrus.

SiliconFly

Golden Member

The ES2 silicon is already achieving 5.1 GHz at 1.1 V, if @OneRaichu is to be believed.

You're right. Raichu has posted a V/F curve which shows MTL ES2 reaching 5.1 GHz already (a bit higher than I thought). That's impressive considering the height of Intel 4's HP library has been reduced by up to 40%, which I assumed would impact f-max. But it looks like that's not the case.

Edit: Wow, his Twitter posts creep me out.

But one thing's for sure, MTL efficiency is gonna be insane! Hope they can manufacture enough CPUs.

nicalandia

Diamond Member

How old are you?

nicalandia

Diamond Member

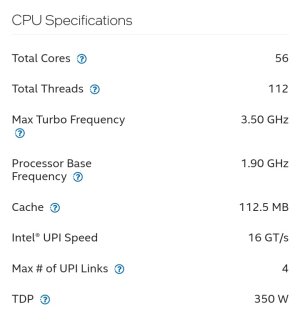

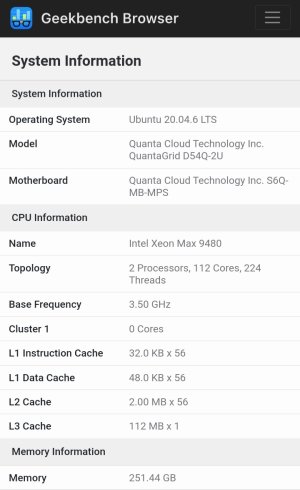

Intel Max makes its debut on Geekbench 5

browser.geekbench.com

Quanta Cloud Technology Inc. QuantaGrid D54Q-2U - Geekbench

Benchmark results for a Quanta Cloud Technology Inc. QuantaGrid D54Q-2U with an Intel Xeon Max 9480 processor.

The process has no issue hitting 5.3-5.4 GHz (at the cost of efficiency), from what I understand, so we should ignore the rumors of clock regression.

Intel 4 will have capacity issues because most of Intel's focus is on 18A.

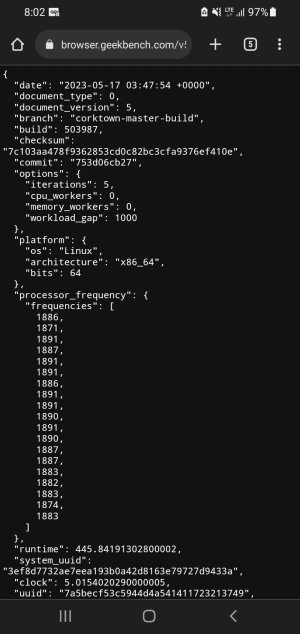

Running at 1.8 GHz clocks. Interesting.

nicalandia

Diamond Member

A what? He said Walrus.

Nah, he originally meant Waifu...

Not a fan of the Waifus?

Lol, you turned it into a Walrus. My sarcasm was mostly aimed at saying that, to some males, they are the same thing, the wife being a walrus. But let's not go there, or I may end up getting kicked out of the house.

How old are you?

My comment was meant as sarcasm.

But Waifus do extend to cars / boats / planes / other stuff.

And video cards are now coming out in a lot of Waifu Editions.

It's a growing subculture for PC builders now.

Apparently Intel's been doing more budget cuts/layoffs. Their client business group did indeed get an ~8% budget cut. Can't find out exactly how many layoffs that translates to, but certainly a lot. Sounds like the main engineering org also got another round of budget cuts/layoffs, but a smaller number. Sounds like graphics was hit hard though.

Thunder 57

Diamond Member

I hope that doesn't mean they plan on giving up on graphics already. That would be incredibly shortsighted. But we know how much Intel likes margins, and margins on their cards right now are probably poo.

I doubt they'll kill graphics officially, but they run the very real risk of leaving it stillborn from underfunding. Honestly, that's probably the risk for most of their products. They're making huge cuts today that will hurt them most in '26-'27. Which of course will become justification for yet more cuts, just as their troubles today were mostly the result of mismanagement under BK. This is the death spiral that poorly managed companies fall into, and I don't see Gelsinger having the wherewithal or backbone to break out of it.

Like, any rational person can see the problem with the logic of "Our financials are terrible because our products are uncompetitive." -> "We need to cut costs to improve financials." -> "Our products are uncompetitive because we cut costs" -> [repeat]. But apparently common sense does not dictate corporate decision making.

After the release of ChatGPT and competitors? Nope. Intel needs GPUs to stay competitive in the AI space.

More like 1.9, which is the advertised all-core base.

That's to keep the CPU from exceeding the TDP.

Or boost is disabled. That chip should have no issues maintaining higher clocks on a test like GB5/6.

Yep. I said this before, but it looks like Intel is going "all in" on their foundry play and counting on not only regaining process leadership for their own products, but also gaining lots of foundry customers at the same time. If it works, Gelsinger will look like a genius, but lots of things have to go nearly perfectly for it to work at this point. There is not much room for error anymore at Intel.

I dunno about cutting employees tbh. AMD had and still has far fewer than Intel does, and they're kicking Intel's ass six ways from Sunday. Gelsinger has been in a downward dog yoga position since taking the helm, with AMD firmly behind him but not in the manner he'd prefer, which is AMD being 2nd to them. If you're cutting staff it sucks, but if the staff was excess that isn't needed and doesn't contribute much to their individual orgs, then why keep feeding the baby birds?

DrMrLordX

Lifer

Sounds like graphics was hit hard though.

Yeah they got rid of Raja after all! He was like 25% of their GPU division all by himself!

Sad that Intel needs GPUs to be competitive in AI when they've got so many independent AI projects operating under the same roof. Or had anyway.

igor_kavinski

Lifer

I bet whoever recommended Raja's hiring knew they were on their way to being sacked, and that was their final hurrah moment they'll treasure forever.

He kind of wiggled his way into the Intel blanket during the Intel-AMD collaboration for Kaby Lake G. I think his pitch went like this:

"So I see that you payin' millions to AMD to use their GPU tech. I'm the brains behind that tech. Just hire me and I'll make you billions. All I ask for in return is peanuts compared to what you will make eventually." (In his mind, the thought continued: "eventually as in FAR, FAR, FARRRRRR into the future, enough to help my coming generations live off the dough I make while promising great things to these buffoons.")

And he sank billions of investor money into the GPU sinkhole. Thankfully, the hole wasn't big enough, so some of that money made its way into a product that finally launched. Kudos to him for not making that hole larger. Thank you, Raja Koduri. We owe you.

It was the 2nd time Intel either got dropped or terminated a deal. Before the buyout was done, Intel had partnered with ATI to provide integrated graphics on their low and mid-range chipsets; their enthusiast range still used their crappy GMA stuff. They went with SiS for several years before introducing their iGPU. This was a pivotal moment because there was much buzz about how Intel accomplished this.

Raja's sole purpose, other than making a mess, is to be in the DC and not regular consumer lines. I hope Keller keeps him at arm's length.

SiliconFly

Golden Member

Sounds exciting! But any pointers and/or references for the 5.3-5.4 GHz numbers? 'Cos most of the articles I've come across tend to get their numbers from rumors or guesstimates. 🙁

SiliconFly

Golden Member

Actually I think Raja doesn't deserve all the bashing that I've seen on the web. He's done some good too!

If we turn the clock back, Intel had to completely scrap the Larrabee project after pouring in billions because it underperformed. Well, in reality, it was a total disaster!

Their iGPUs have been stagnant for more than a decade with tiny incremental updates that aren't even worth a mention.

Raja started with a clean slate, cut out all the old crap, delivered decent hardware (not top notch but still good) and, most importantly, Intel now has super stable and clean graphics drivers because of his past efforts. That itself is a humongous task. He did take his own sweet time, but without his knowledge and experience, Intel would be having another Larrabee on their hands.

Instead, now they're in a position to compete with AMD/Nvidia/Apple in lower to mid-range graphics, which by itself is a massive achievement. In spite of the delays, Raja still deserves a little bit of our appreciation, I think.
