nicalandia

Diamond Member

- Jan 10, 2019

- 3,331

- 5,282

- 136

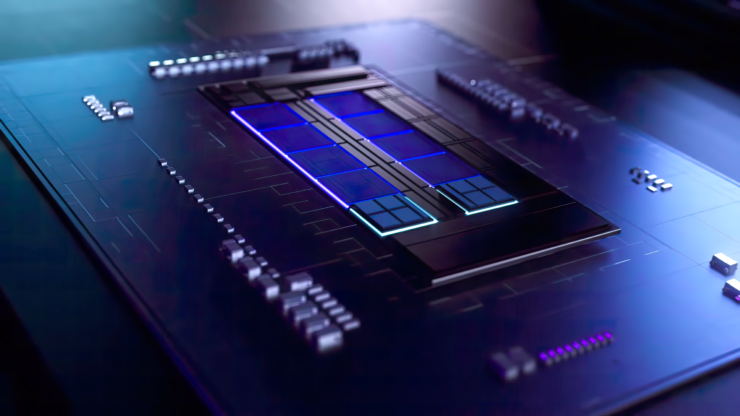

Well Arrow Lake is supposed to use the same SoC tile as Meteor Lake, right? So...Note: I am not going to deny that cores in the big tile exist. I hope they do, since the more technology and the more cores out there the better off we are will be. But, even that link doesn't specify Meteor Lake. We can imply that it is in Meteor Lake due to the same 3-tile graphic used for other Meteor Lake presentations (ignoring the obvious simplification that Meteor Lake isn't 3 tiles). But that is also the same image used for Arrow Lake. So, these are still just hints at this point for those of us without inside information.

Purely in terms of the CPU tile, yeah, that looks right.

You need hybrid bonding to get close to monolithic. Foveros has a smaller bump pitch than EMIB, which is probably why it's used here, but it's still not quite monolithic.

Unfortunately, I think you'll be disappointed, but I hope my pessimism ends up being incorrect.

If my estimation on the die size is roughly correct the total area (with empty space) is around 178 mm^2.

So the top left should be Intel4 8c+16c compute part. The tile below would be N4 IO.

The center part would be N3 iGPU but it has space for 384 EU so it either is:

2 media engine + 384 EU

or

2 media engine + 192 EU + 6 tensor processing core (TPC) or similar

The right lean tile would be N5 SOC.

Oh there are plenty of theoretical advantages, don't get me wrong, but I'm not convinced any will actually get realized with Meteor Lake as rushed as it seems to be.It seems that on a higher level you can use things as power gating to lower power use, but they don't use it for say, the decoders as it's too latency critical.

So theoretically it should offer both faster on/off transitions for the chipset actually allow it to reach much lower power idle(and/or more often) and also reduce the absolute floor of power the communication line uses. This means the ~1.5W or so TDP of the on-package chipset is often reached even in very light load. Yes you can get that really low in idle, but it's very easy to knock it off that state.

That is theory of course. Atom didn't benefit from things being on-die until Silvermont when they updated to a proper on-die bus.

You swapped the GPU and IO.Top left is 2+8. Bottom tiny die is GPU. Center large tile is SoC or basically what's called PCH in current terms. Right is I/O.

This is my first post in years

atleast you didn't summon "the one"It's all my fault, isn't it? 🤣

While ~1-4MB range latency improvements are obviously due to 2MB of L2 ( remarkably it is not slower than 1MB in <1MB range ), Intel seems to have remembered their premier cache skills and 8-32MB range is looking improved ( even more so considering that graph is log scale, very sizeable improvements in cycles for what is also larger cache with more stops on ring due to two more clusters of small cores).

Intel seems hell bent to feed their very wide and powerful core and combined with faster DDR5 support it will perform substantially better than ADL.

I predict Raptor Lake to be slower than the 5800X3D in games that like Cache.

Well, cache is getting significantly increased in Raptor Lake. The 5800X3D is barely faster than the 12900K when the latter is paired with high speed DDR5, which compensates for the lack of cache somewhat.

But what you stated could also be the case for Zen 4 as well. There's a high chance that Zen 4 will be slower than the 5800X3D in many games.

You guys are banking on that additional 60% increase in L2$ which gives 6 additional MiB of L2$. The L3 remains the same. Zen4 will have a 100% increase on the L2 and L3 will remain the same.

No, that's just the 13900K or SKUs that have 16 e cores, the rest will remain the same. the 13700K will likely be 8+8 with 30 MiB of L3. So the Per core L3 remains the same.L3 is going from 30MB in Alder lake to 36MB in Raptor Lake, and it looks like it will shave some cycles off the access time. Memory controller will also be better.

No, that's just the 13900K or SKUs that have 16 e cores, the rest will remain the same. the 13700K will likely be 8+8 with 30 MiB of L3. So the Per core L3 remains the same.

While Games might take advantage of the L3 that is not being used by e cores(6 additional MiB), they will not take advantage of the L2 on e cores since they have plenty of P cores to choose from.The E cores are getting their L2 cache doubled as well, so that should take some of the pressure off of the L3 cache and make it more performant.

Looks like your prediction a while back is going to be accurate. I think a lot of people are underestimating the performance of Raptor Lake. At this point, I am almost certain Raptor Lake will have the highest single thread performance compared to Zen 4, and will also be highly competitive in multithreaded apps; though Zen 4 will be stronger there overall.

Raptor Lake should also take the gaming crown.

As long as it's On Track™, sure.Meteor Lake bottom die being just a passive interposer? I don't even.

Meteor Lake is Lakefield 2.0. The bottom die is the PCH, which per tradition is built on the N-1 node, in this case 10nm Foveros.

Proof: see the high density package and tell me where the PCH is... https://wccftech.com/intel-shows-of...pu-tiles-produced-by-intel-gpu-tiles-by-tsmc/

This is my first post in years just to correct this nonsensical "theory". Foveros is active interposer.

Edit: if Intel just needed passive connections it would use EMIB, which is Intel's ultra-low cost alternative to passive interposer. @jpiniero @IntelUser2000 @ashFTW

Look at AMD APUs vs. their CPUs. Their CPUs have DOUBLE the cache of the APUs, yet it helps very little in most cases:

Raptor lake might be slightly faster than Alder Lake in ST performance, but the difference is likely to be less than 10%. I believe you are overestimating what a small addition to cache will do. Look at AMD APUs vs. their CPUs. Their CPUs have DOUBLE the cache of the APUs, yet it helps very little in most cases: CPU 2021 Benchmarks - Compare Products on AnandTech

Zen 4 is likely to be much faster.

Look at AMD APUs vs. their CPUs. Their CPUs have DOUBLE the cache of the APUs, yet it helps very little in most cases:

That's the crux. Intel had some catching up to do there (all the Skylake clones had excellent cache latency) so it's important it does just that.Overall real good direction to take for manufacturer whose chips had same latency with IMC on chip as AMD had with separate IOD.

That's the crux. Intel had some catching up to do there (all the Skylake clones had excellent cache latency) so it's important it does just that.