GunsMadeAmericaFree

Golden Member

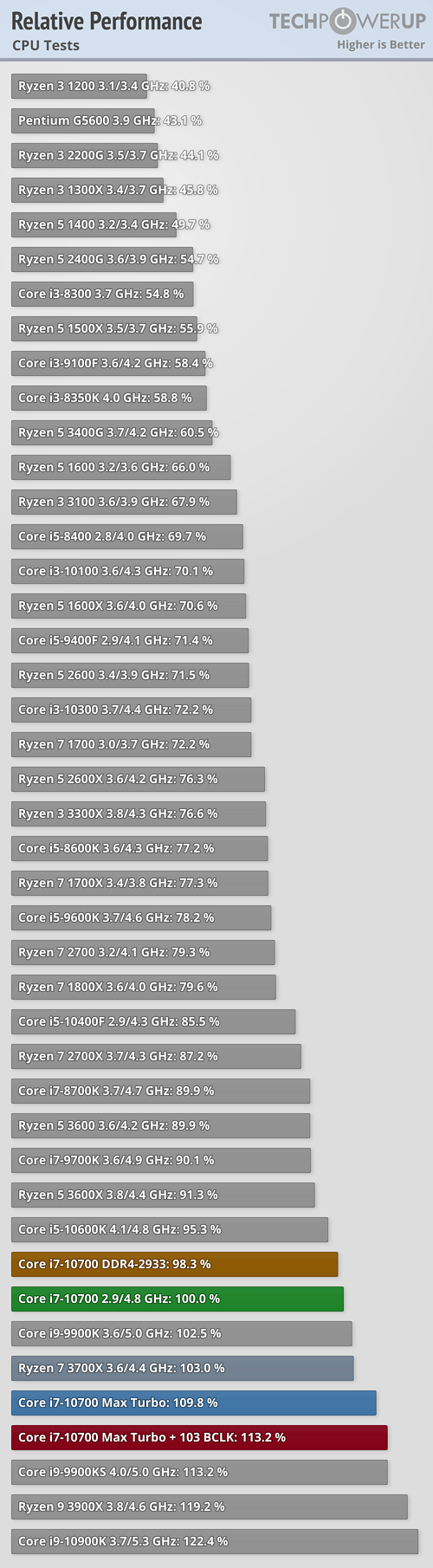

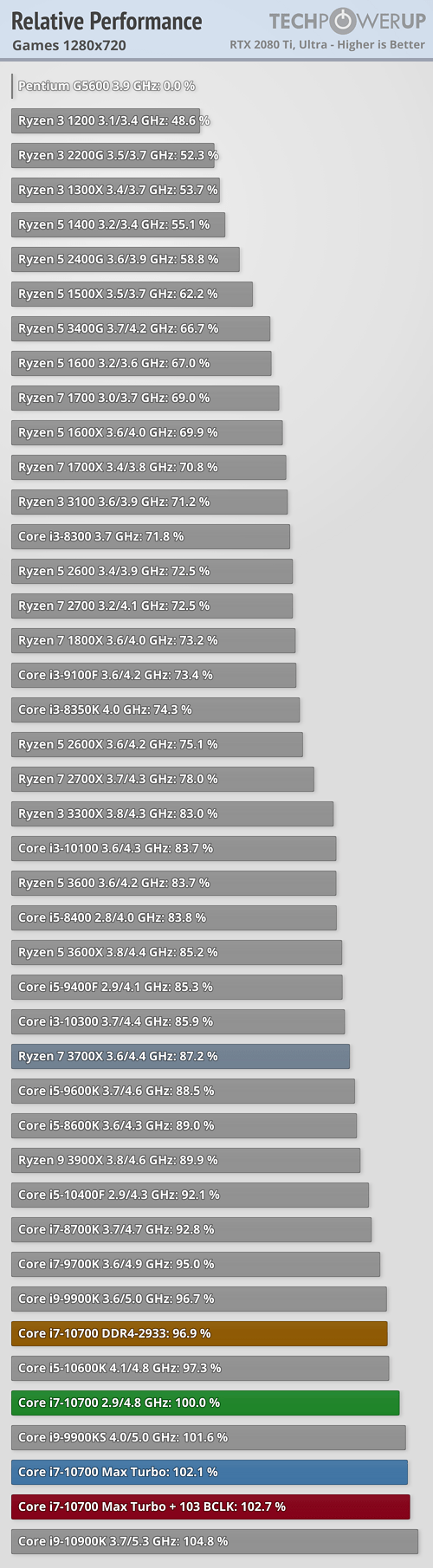

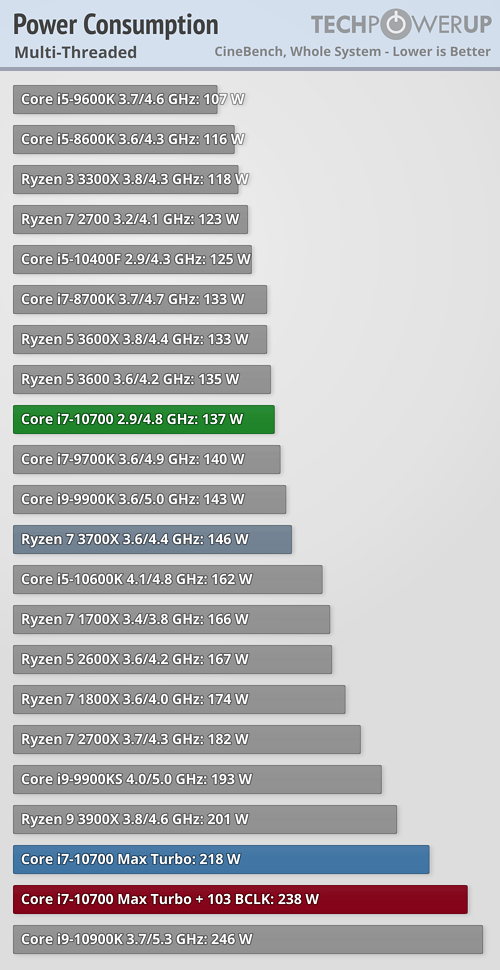

early benchmarks

more benchmarks

Just thought I would provide these so folks could draw their own conclusions. Evidently the processors have been on sale in Germany for a few days now.
