OK, this explains it better. Given the sheer number of x86 apps and games, this is just a marketing gimmick for now.

So wait, if this "happens in Intel's labs not your PC" and "the original binary on disk is never modified", it must be shipping an optimized binary that comes from "Intel's labs" to run on your PC, right? This "user mode service" that watches for the relevant binaries causes the new binary you downloaded from Intel to be run, instead of "the original binary on disk".

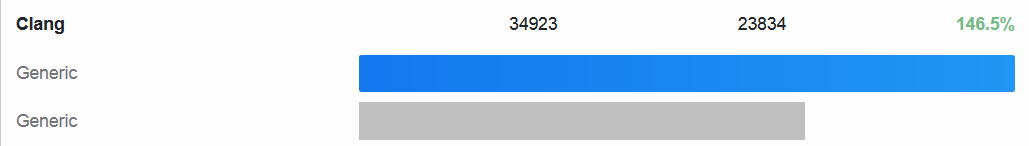

By their nature, benchmarks don't always use the most efficient algorithm possible. Let's say Geekbench included quicksort as one of its benchmarks. Intel's labs could replace that with a faster sort algorithm that generates the same answer, but the benchmark would then NOT be measuring the same thing.
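To make the quicksort point concrete, here's a toy Python sketch. The function names and the comparison counter are my own illustration, not anything taken from Geekbench or from Intel's binaries. Both routines return the same sorted list, but they do completely different work to get there, so a score from one says nothing about the other.

```python
def quicksort_counting(items):
    """Plain quicksort that also counts element comparisons."""
    comparisons = 0

    def sort(xs):
        nonlocal comparisons
        if len(xs) <= 1:
            return xs
        pivot, rest = xs[0], xs[1:]
        smaller, larger = [], []
        for x in rest:
            comparisons += 1
            (smaller if x < pivot else larger).append(x)
        return sort(smaller) + [pivot] + sort(larger)

    return sort(list(items)), comparisons


def counting_sort_small_ints(items, max_value):
    """Hypothetical swapped-in algorithm: counting sort, no element comparisons."""
    counts = [0] * (max_value + 1)
    for x in items:
        counts[x] += 1
    out = []
    for value, n in enumerate(counts):
        out.extend([value] * n)
    return out, 0


data = [5, 3, 8, 1, 9, 2, 7, 3, 0, 6]
sorted_a, work_a = quicksort_counting(data)
sorted_b, work_b = counting_sort_small_ints(data, max_value=9)
print(sorted_a == sorted_b, work_a, work_b)
```

Same answer, different workload. Anything that only checks the output for correctness would never notice the swap.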

I think John is 100% correct to blanket ban use of these results for Geekbench until he can inspect the binary and see what is being done. The problem is that even if he inspects it today and decides "OK, this is just some better optimization, I'm OK with it", Intel could later replace the "improved" binaries it is distributing with ones that go further and change the algorithms being used.

There are a lot of games you can play with a benchmark, without even "optimizing" anything. Imagine you're an OEM selling Android phones using basically the same SoC everyone else is using. It is hard to raise yourself above the pack. Some have done it by overclocking those SoCs a little. It looks better in a specs comparison, and if people believe it is faster they might choose that phone over similar ones that are not overclocked. But if that overclocking doesn't help Geekbench because the phone is overheating and throttling, well, here's how you fix it. You do something like Intel's service, providing an "optimized" Geekbench binary that makes the brief pauses between subtests a little longer to give the SoC more time to cool down. Now you get the benefit of the overclocking and customers may be more likely to choose your phone - even though it hasn't become any faster in real world tasks!
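Here's a rough Python sketch of why longer pauses would help. It's a toy thermal model and every constant in it (temperatures, heat per subtest, the per-subtest scores) is invented for illustration, not measured from any real phone or from Geekbench.

```python
def run_benchmark(pause_seconds, subtests=10):
    """Toy thermal model; all constants are made up for illustration."""
    ambient = 40.0              # degrees C, also the starting temperature
    throttle_at = 85.0          # SoC drops its clock at or above this
    heat_per_subtest = 12.0     # degrees added by running one subtest
    cooling_per_second = 2.0    # degrees shed per second of idle time
    temperature = ambient
    score = 0.0
    for _ in range(subtests):
        # Overclocked speed while cool, reduced speed while throttling.
        score += 110.0 if temperature < throttle_at else 70.0
        temperature += heat_per_subtest
        # The pause between subtests lets the SoC cool, never below ambient.
        temperature = max(ambient, temperature - cooling_per_second * pause_seconds)
    return score


stock_pauses = run_benchmark(pause_seconds=1.0)
longer_pauses = run_benchmark(pause_seconds=5.0)
print(stock_pauses, longer_pauses)
```

With these made-up numbers the short-pause run starts throttling partway through, while the long-pause run never does. Not one instruction of the actual workload changed, but the score did.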

Now for actual applications and games maybe it's a different story. If it runs faster for you, you may not care whether you're running the original binary or one Intel has optimized. But what happens if you experience problems? I'm gonna bet the first thing the application vendor tells you is to disable Intel's optimization for it and see if you can reproduce the issue.

I'd also be worried about potential hacking of this service. If Intel (with a USER MODE service) can override what binary you run, maybe a hacker can leverage it to replace the binary you expected to run with one that includes some malware. Your malware scanners wouldn't catch it, because "the original binary on disk is never modified". I can't imagine corporations that care about security would permit this to be enabled.
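A minimal Python sketch of the scanner blind spot I'm worried about. To be clear, the `redirects` table and `resolve` function are purely hypothetical stand-ins for "something decides which binary actually runs". I have no idea how Intel's service is implemented, and a real scanner may well inspect more than the file on disk.

```python
import hashlib
import os
import tempfile


def sha256_of(path):
    """Hash a file's bytes, like a simple on-disk integrity check would."""
    with open(path, "rb") as f:
        return hashlib.sha256(f.read()).hexdigest()


workdir = tempfile.mkdtemp()
original = os.path.join(workdir, "app.bin")
substitute = os.path.join(workdir, "app_substitute.bin")

with open(original, "wb") as f:
    f.write(b"original program bytes")
known_good = sha256_of(original)

# Hypothetical: an attacker plants a different binary and a redirect entry.
# The original file is never touched.
with open(substitute, "wb") as f:
    f.write(b"different program bytes")
redirects = {original: substitute}


def resolve(path):
    """Hypothetical launch-time lookup: which file really gets executed."""
    return redirects.get(path, path)


disk_check_passes = sha256_of(original) == known_good
what_runs_matches = sha256_of(resolve(original)) == known_good
print(disk_check_passes, what_runs_matches)
```

The check against the file on disk still passes, because that file really is unmodified. The thing that would actually run is a different file entirely.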