igor_kavinski

Lifer

Geekbench 6 - Geekbench Blog

Weird choice of baseline CPU, and even weirder is that the baseline score is 2500.

The i7-12700 barely does 2000 in GB5, even with the fastest DDR5.

EDIT: Gideon's browser extension/add-on: https://forums.anandtech.com/thread...d-against-core-i7-12700.2610597/post-41452115

Helps you make sense of the results if you are left scratching your head about why a certain test is fast on a certain CPU.

Super useful!

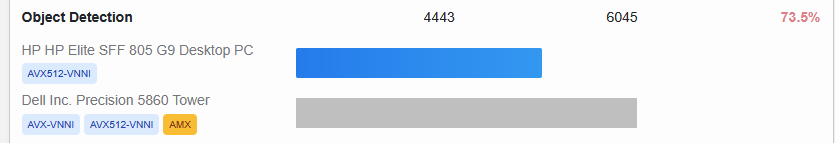

Sample screenshot:
