Woot, my RAM came in today (G.Skill RMA turn-around time FTW :thumbsup:), so I was able to get my system powered up for some tests.

Here's what I did with the GPU. First I took out the Dremel with a metal cutting blade and cut the standoff posts in half:

^ You can see the springs I bought lying in the background of that photo. They were $0.75 each, and I only used two for this project.

Here's the uncut spring jacketing the sawed-off post:

I cut the ends off each spring (using the Dremel cutter again). Here are the spring-jacketed posts now:

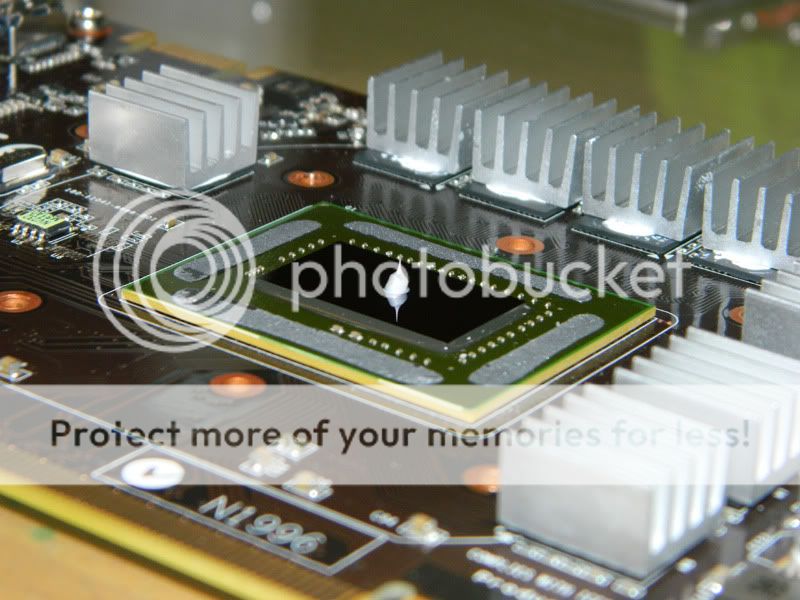

Here's one final shot of the polished Accelero HSF surface:

For the first attempt, I added a very small dot of Noctua NT-H1 TIM:

^ This turned out to be too little. I failed to grab a photo showing how far the TIM spread across the silicon surface, but it did not cover corner-to-corner. I knew something was amiss because the screen was artifacting during the BIOS initialization phase of booting the computer.

I took off the Accelero and cleaned off the NT-H1, then added a much larger dollop:

^ That turned out to be the right amount. No artifacting now :thumbsup:

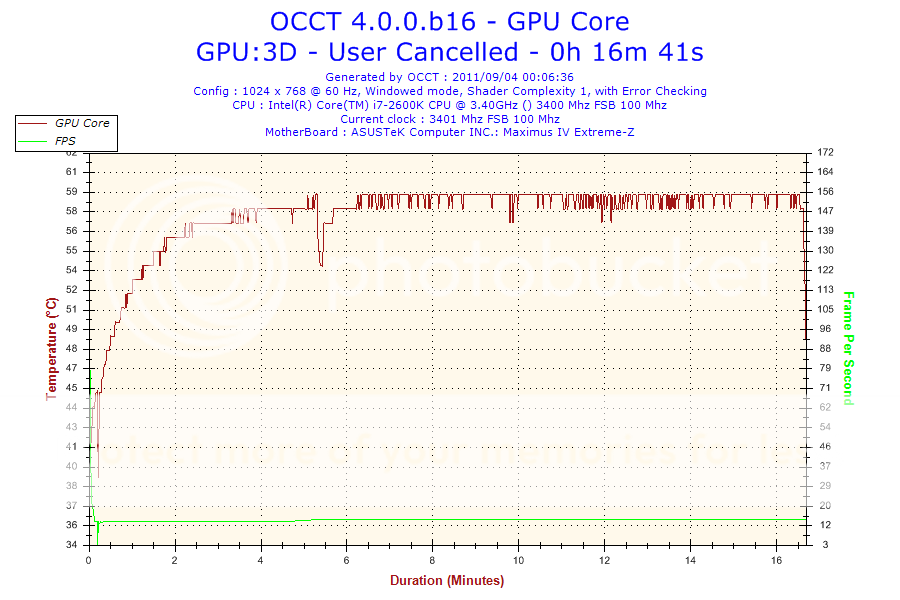

I've got OCCT running on the GPU now. I'll update the thread with temp results as soon as I have them.