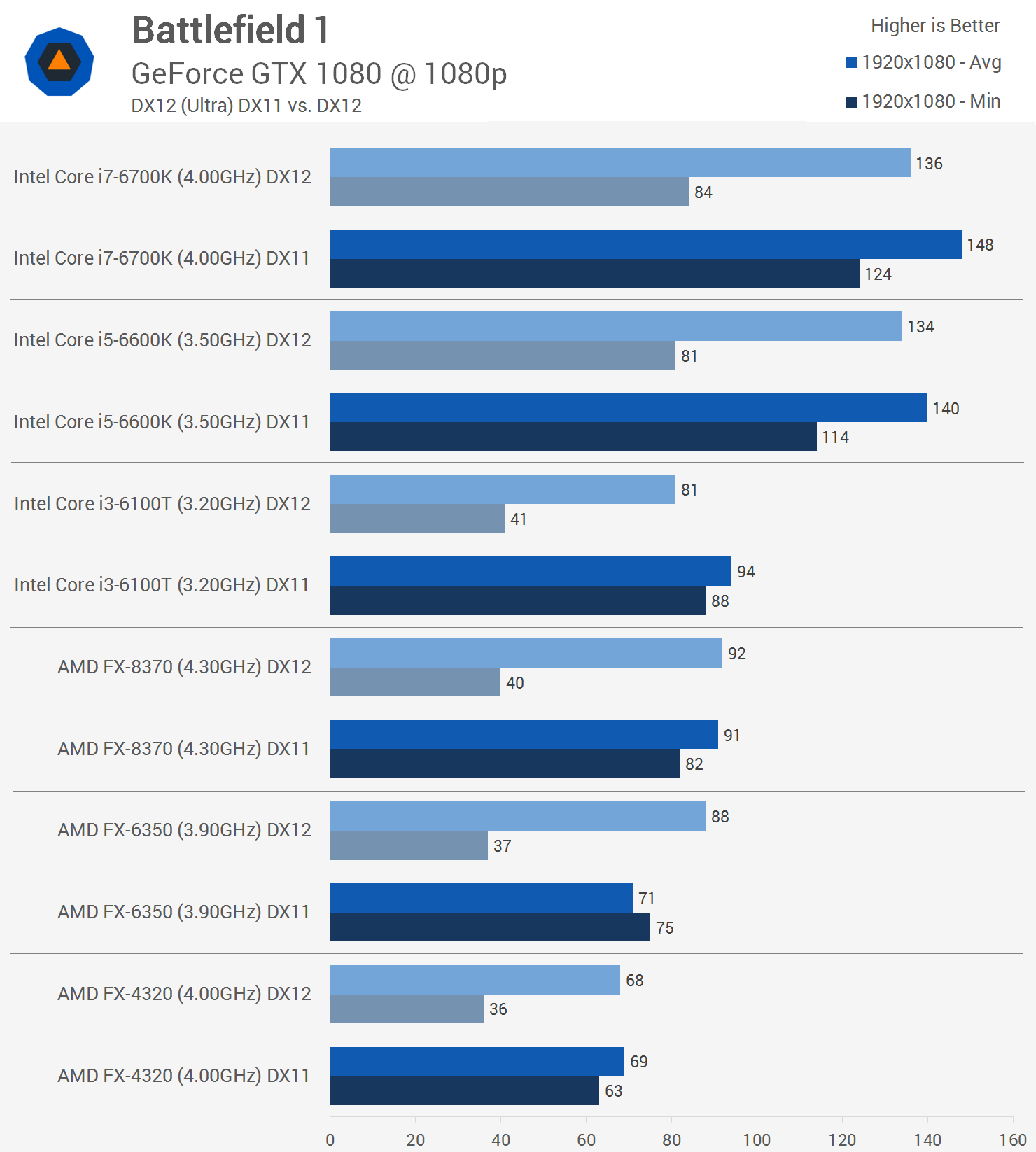

But a fail for the DX12 implementation. Disappointing. DX12 is not working in 64-man MP battles. Period.

[H] comparing the 480 vs. the 1060:

http://www.hardocp.com/article/2016...eo_card_dx12_performance_preview#.WA5wffkrJD8

He calls it a draw.

I enjoy the game though. Think it's better than BF3 or BF4. It smells of war, blood, pain and anxiety.