Discussion Ada/'Lovelace'? Next gen Nvidia gaming architecture speculation

Page 37 - Seeking answers? Join the AnandTech community: where nearly half-a-million members share solutions and discuss the latest tech.

Saylick

Diamond Member

Yeah, but that would erode the price of Ampere. They needed to build out a pocket where the remaining inventory could sell out at the highest price possible... This behavior is transparent AF.

It would have been great as 4070 Ti at $599. It's just $300 too high...

biostud

Lifer

Nobody is paying $899 for a xx70 class card, so instead of lowering the price they just call it a 4080 😛

Yeah... that naming scheme makes no sense.

biostud

Lifer

Also depends on local sales tax.

I guess >2100 € for 4090 and 1500 €ish for 16GB 4080 here in Finland.

EDIT:

From NVIDIA...

GeForce RTX 4090: >=1999,00 €

RTX 4080 (16GB): >=1509,00 €

RTX 4080 (12GB): >=1129,00 €

DisEnchantment

Golden Member

76B Transistors should be 700+ mm2 chip, not cheap.

Saylick

Diamond Member

Die sizes haven't been confirmed, but going off of rumored sizes we now have:

- AD102, ~600mm2 = $1599 ---> $2.67/mm2

- AD103, ~380mm2 = $1199 ---> $3.16/mm2

- AD104, ~300mm2 = $899 ---> $3.00/mm2

It's obvious that RTX 4080 in all of its forms is priced at least $100 too high. The issue is they don't want to kill off Ampere sales entirely... and that's where RTX 4080 lives. I fully expect AMD to take Nvidia head-on in the $600 - $1200 price range, with Nvidia being 100% capable of lowering the price to compete (ideally, for them, only after Ampere clears inventory).
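For anyone who wants to redo the $/mm2 math with different numbers, here is a minimal Python sketch. It assumes the rumored (unconfirmed) die sizes and the launch prices quoted in this post; the `cards` table and the `price_per_mm2` helper are just illustrative names, not anything official.

```python
# Rumored die sizes (mm^2) and launch prices (USD), taken from the post above.
# The die sizes are unconfirmed rumors, so treat the results as rough estimates.
cards = {
    "AD102": {"die_mm2": 600, "price_usd": 1599},
    "AD103": {"die_mm2": 380, "price_usd": 1199},
    "AD104": {"die_mm2": 300, "price_usd": 899},
}


def price_per_mm2(price_usd, die_mm2):
    """Card price divided by die area, in USD per mm^2."""
    return price_usd / die_mm2


# Compute the ratio for every card and print it to two decimal places.
ratios = {
    name: price_per_mm2(c["price_usd"], c["die_mm2"])
    for name, c in cards.items()
}

for name, ratio in ratios.items():
    print(f"{name}: ${ratio:.2f}/mm2")

# The die with the highest price per unit of silicon.
most_expensive = max(ratios, key=ratios.get)
print("Highest $/mm2:", most_expensive)
```

Swap in confirmed die sizes once they are known. Keep in mind this ratio uses the retail price of the whole card, so it ignores memory, board, and cooler costs.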

swilli89

Golden Member

Not to mention that Samsung 8nm has huge capacity and is still producing cards. So why not keep them in production, given that Samsung sells its 8nm wafers at a massive discount versus TSMC 4N? TSMC 4N is likely capacity constrained, so this feels like production and fab capacity optimization rather than an inventory problem.

Yeah, but that would erode the price of Ampere. They needed to build out a pocket where the remaining inventory could sell out at the highest price possible... This behavior is transparent AF.

Ampere "inventory" NVIDIA has already sold to board partners and other 3rd parties, so they wouldn't keep them in the lineup only to help others sell inventory. Just as they introduced a new 2xxx series in 2021 (on old TSMC 12nm) they are basically reintroducing 3xxx series and will keep these in production long enough that 4N becomes cheaper and they can start to build lower end 4xxx on 4N.

GodisanAtheist

Diamond Member

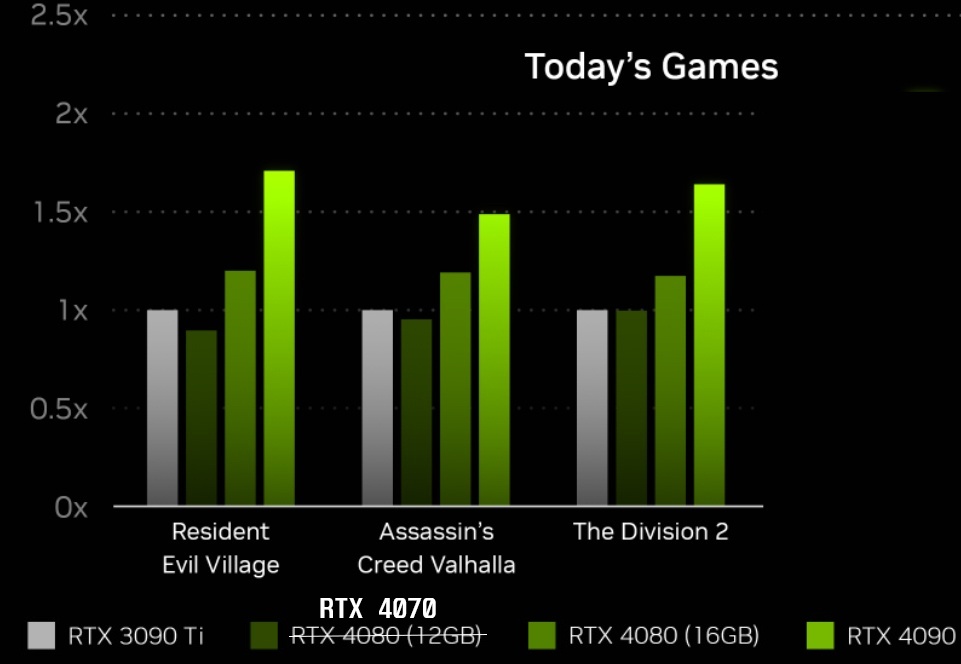

Valhalla numbers is telling..

pretty sure that will be real average performance over 3090TI.

1.5x

- Need enough people with current gen cards to believe this and I'm set. Everyone, stop bashing and start hyping STAT.

OMG 4x FASTER THAN the 3090TI!!! that means that the 3090TI is clearly $1600/4 = $400 value now guys! EVERYONE SELL SELL SELL!

R81Z3N1

Member

Maybe my reading comprehension fell off a truck, but when are we going to get final specs? So to clarify, we are getting a PCIe Gen 4 card that will require a new ATX 3.0 PSU for the required power cable, and will most likely have beta drivers that need to be tweaked for DLSS.

If AMD brings out a Gen 5 card in November, they will be the better option going forward. If you're building a new system using AM5, do you really want a Gen 4 card, especially if you're a serious hobbyist?

R81Z3N1

biostud

Lifer

When has the PCIe generation been the limiting factor in a x16 card?

NVIDIA GTC (GPU Technology Conference) is a global AI conference for developers that brings together developers, engineers, researchers, inventors, and IT professionals. So not really a gaming focus.

What the hell is this presentation? It feels like an investor slide deck. Literally 4 minutes spent on new video cards as they pertain to video games and it's been over 50 minutes on robotics/datacenter/car computers and other abstract things. Am I missing something or am I crazy?

GodisanAtheist

Diamond Member

NV has left a hole here large enough for AMD to drive a dump truck through. A competitive N31 @ $1200 would likely bring in more cash than AMD's wildest dreams while still seriously undercutting the NV halo price in a huge way.

NV's "everything but gaming" heavy focus in this presentation felt like a shockingly transparent display of no confidence in their top end product to actually compete in traditional gaming scenarios.

fleshconsumed

Diamond Member

Leave it to nvidia to make a dishonest comparison by making the "off" pictures so dark you can't see s**t. I was excited about it too, but I literally can't see the supposed benefits because the originals are so dark.

RTX Remix enabled modding is the thing I'm most excited about. This looks really promising.

Anyway, disappointing but not unexpected. This feels like Turing 2.0: higher power consumption, higher prices just because, relabeling the 4070 as a 4080, selling a midrange 4070 chip for $900 with only 12GB of RAM. This is the kind of stuff that Apple pulls. I will definitely be waiting until the RDNA3 reveal to see how things shake out: what RDNA3 looks like, and what used prices look like after miners start dumping their cards. As someone has said, I just may have to give up being an enthusiast gamer if this is what the future looks like. I ain't paying $900 for a 12GB "upper midrange" card. Good thing I have plenty of hobbies other than gaming. If RDNA3 isn't priced better, it looks like my best choice would be to pick up a used 6000 series card on the cheap after the miner selloff. Time will tell. But right now I'm with Linus Torvalds when it comes to nvidia.

Furious_Styles

Senior member

When you're using a 10+ year old mobo? I.e. not in a long, long time.

When has the PCIe generation been the limiting factor in a x16 card?

tamz_msc

Diamond Member

Vanilla Morrowind IS dark in a lot of the indoor areas.

Leave it to nvidia to make dishonest comparison by making "off" pictures so dark you can't see s**t. I was excited about it too, but I literally can't see supposed benefits because originals are so dark.

Ahahahaha holy hell what a mess

"up to 2 to 3 times faster!"

What the hell does that even mean!?!

Their presentation is littered with marketing doublespeak, extreme cherry-picking and turd glitter (DLSS3).

nVidia, it's still a turd.

RIP hardcore PC gaming, it'll only go down hill from here. Unless AMD serves up a silver bullet or two into nVidia HQ with RDNA3. As they infrequently do.

fleshconsumed

Diamond Member

It is. I'm not disputing it. I'm just saying it's not an apples to apples comparison when one picture is pitch black and the other one is bright as day.

Vanilla Morrowind IS dark in a lot of the indoor areas.

tamz_msc

Diamond Member

It cannot be an apples to apples comparison. It is almost like a modded remake of the assets in the original game, using tools that NVIDIA has developed.

It is. I'm not disputing it. I'm just saying it's not an apples to apples comparison when one picture is pitch black and the other one is bright as day.

exquisitechar

Senior member

Seems to be a bit slower, even.

So wait, after all this time we have a 4080 12GB at $899 MSRP that just ties the 3090 Ti with DLSS3.0 off? Am I reading that correctly?

The same 3090 Ti that's only like 10% faster at 4k than a 12GB 3080 Ti, a card currently selling for under $750?

Woof.

R81Z3N1

Member

When has the PCIe generation been the limiting factor in a x16 card?

I agree, I run a PCIe 4 card in a Gen 3 motherboard. But if you're spending that kind of money you would expect the best. Not to mention new specs like DisplayPort 2.0. Looks to me like the safe money is to wait.

Pick up a 3 series at a super discount, use FSR to upscale if needed, and wait it out.

R81Z3N1

DiogoDX

Senior member

So wait, after all this time we have a 4080 12GB at $899 MSRP that just ties the 3090 Ti with DLSS3.0 off? Am I reading that correctly?

The same 3090 Ti that's only like 10% faster at 4k than a 12GB 3080 Ti, a card currently selling for under $750?

It'd probably be better at lower resolutions but it does look like there's a serious memory bottleneck.
