Question Zen 6 Speculation Thread

Page 406

adroc_thurston

Diamond Member

> Having the feature natively support different lengths was kind of the point of AVX10 though.

It was a stupid idea and Robinson and his boys were very plainly told "no".

DrMrLordX

Lifer

> I don't know how much there is to elaborate on. AVX10 doesn't do anything differently that would make it easier to support in a CPU with hybrid architecture (p-cores and e-cores). Intel could've made a hybrid architecture CPU that supports AVX512.

That's what I was getting at, thanks. And yes Intel could have made their entire CPU lineup support AVX-512 with reduced throughput but chose not to do so.

> I don't want to speak for OneEng2, but he seems to be conflating the width of the vectors in the instructions with the width of the execution unit. As other commenters have said, you can execute a 512-bit instruction with a "double-pumped" 256-bit execution unit.

Yes, Intel did this with Goldmont back in the day (supported AVX2 with 128b execution unit).
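A minimal Python sketch of the double-pumping idea discussed here: one architectural 512-bit add (16 float32 lanes) is carried out as two passes through a hypothetical 256-bit (8-lane) execution unit. The function names and the list-of-floats register model are illustrative assumptions, not a description of how any real core is built.

```python
LANES_512 = 16  # 512 bits / 32-bit floats
LANES_256 = 8   # width of the hypothetical execution unit


def exec_unit_add_256(a, b):
    """Model a 256-bit execution unit: adds exactly 8 lanes per pass."""
    assert len(a) == len(b) == LANES_256
    return [x + y for x, y in zip(a, b)]


def vaddps_512_double_pumped(a, b):
    """Execute one architectural 512-bit add as two 256-bit passes."""
    assert len(a) == len(b) == LANES_512
    low = exec_unit_add_256(a[:LANES_256], b[:LANES_256])    # pass 1: lanes 0-7
    high = exec_unit_add_256(a[LANES_256:], b[LANES_256:])   # pass 2: lanes 8-15
    return low + high


a = [float(i) for i in range(16)]
b = [1.0] * 16
result = vaddps_512_double_pumped(a, b)
```

Software sees the same 512-bit result either way; only the number of passes, and so the throughput, differs.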

> Having the feature natively support different lengths was kind of the point of AVX10 though.

Technically it still does, and technically AVX-512 always could have (which is actually news to me, but interesting to note anyway).

> It was a stupid idea and Robinson and his boys were very plainly told "no".

Robinson and team already worked on the OG AVX-512; they were mostly coming from an area point of view and other stuff. AVX-512 will take a decent dev time for them.

Jan Olšan

Senior member

Where did he get $50M 😱

Rich parents? Or does a redditor make it that big that fast when he puts on an analyst badge?

> Your understanding here is still incorrect. Hybrid architectures aren't any easier with AVX10 than AVX512.

I believe they are, but I am interested in hearing your argument on this.

> I don't know how much there is to elaborate on. AVX10 doesn't do anything differently that would make it easier to support in a CPU with hybrid architecture (p-cores and e-cores). Intel could've made a hybrid architecture CPU that supports AVX512.

> I don't want to speak for OneEng2, but he seems to be conflating the width of the vectors in the instructions with the width of the execution unit. As other commenters have said, you can execute a 512-bit instruction with a "double-pumped" 256-bit execution unit.

OK. After reading MORE about this topic, I have an even clearer explanation and a correction to my last post.

Before I go into ANY detail, the MOST important thing to understand about AVX10.2 is that it is a VLA ISA (Vector Length Agnostic). This is done EXPLICITLY to support the same compiled code across different bit width implementations of different CPUs, PERIOD. This is the entire POINT of the new instruction set.

Let's look at this from 3 very different aspects:

- Register width

- "Vector Size" (Width of the instructions)

- Execution Path size

AVX10.2 supports 3 different register widths (I incorrectly said it required 32 x 512b registers):

XMM (128b)

YMM (256b)

ZMM (512b)

AVX10.2 supports 3 different Vector (instruction) widths:

XMM 128b operands

YMM 256b operands

ZMM 512b operands

Now let's look at Data Paths.

NOTHING IN AVX10.2 REQUIRES 256b OR 512b DATA PATHS. The entire data path could be 128b.

Now, finally, within a core, there are FEATURE flags in the CPUID that determine the LEVEL of AVX10.2 that is supported.

LEVEL1:

Max architectural width 256b (note MAX)

Supports XMM & YMM

DOES NOT HAVE ZMM registers OR ZMM Instructions/Vectors.

LEVEL2:

Max architectural width 512b (again note MAX)

Supports XMM, YMM and ZMM

NOTE: These are NOT in the instructions. The instructions are agnostic to the bit width supported. This is what makes it special.

> Having the feature natively support different lengths was kind of the point of AVX10 though.

Yes, it absolutely IS the point.

So what this MEANS is that it is very easy to make a hybrid architecture CPU with different bit width cores BUT all the cores within the CPU MUST be the same level to avoid issues with scheduling unsupported instructions.

The LEVEL is at the CPU level, not the core level. This DOES allow Intel to use the SAME P Core on server as they do in client BUT the CPU stays LEVEL 1 AVX10.2 support. The server part using the SAME core could advertise LEVEL 2 AVX10.2 support.

BOTH Server AND Client CPUs would run the SAME compiled code though.

If I have messed this up, someone let me know.

Schmide

Diamond Member

Long and short, intel realized how stupid it would be to carve out a 256b niche for a dying platform.

The instructions, the compilers, and the programs are already out there. If you have an avx512 program, you expect it to run on future hardware that exposes the avx512 flags.

If you look at the instructions.

- Byte 0: EVEX 0x62 (the old BOUND instruction). This tells the system it is a special instruction and that the next 3 bytes are going to define the operand.

- Byte 1: register map

- Byte 2: data width and opcode map

- Byte 3: vector length

- Bytes after: variable length, from the opcode map in byte 2.

- Maps 1-3: legacy 2-3 byte opcodes.

- Maps 5, 6: AVX-512 opcodes.

It gets even more complicated after that.

If Intel were to define a 256b path with the above, they would have to replicate AVX-512 in the remaining maps. Well, guess what: APX took maps 4 and 7.

No older hardware is going to expose new flags for a 256b path for AVX-512, so any implementation is left to the future.

Putting out half-assed future cores that will be the only users of this subset or replicated subset just makes you look lazy (crazy?) considering AMD's unified implementation.

This is the point you realize it's just easier to add full avx512 support even if it runs slower using lesser hardware.
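As a rough illustration of the prefix layout sketched above, here is a small Python decoder for a few EVEX fields. The field positions follow my reading of the EVEX description in Intel's SDM, which places the opcode-map bits in the first payload byte, so treat them as an assumption to check against the manual. `decode_evex` is a hypothetical helper, not a full decoder, and it ignores APX's reuse of reserved bits.

```python
VECTOR_BITS = {0b00: 128, 0b01: 256, 0b10: 512}


def decode_evex(code: bytes) -> dict:
    """Pull a few fields out of the 4-byte EVEX prefix (0x62 + 3 payload bytes)."""
    if len(code) < 5 or code[0] != 0x62:
        raise ValueError("not an EVEX-encoded instruction")
    p0, p1, p2 = code[1], code[2], code[3]
    return {
        "opcode_map": p0 & 0b111,                          # mmm: 1 = 0F, 2 = 0F38, 3 = 0F3A
        "w": (p1 >> 7) & 1,                                # data-width bit
        "vvvv": (~(p1 >> 3)) & 0b1111,                     # source register, stored inverted
        "vector_bits": VECTOR_BITS.get((p2 >> 5) & 0b11),  # L'L: vector length
        "mask_reg": p2 & 0b111,                            # aaa: opmask register k0-k7
        "opcode": code[4],
    }


# EVEX-encoded vaddps with zmm, ymm and xmm operands: only the L'L bits differ.
zmm_add = decode_evex(bytes.fromhex("62f1744858c2"))
ymm_add = decode_evex(bytes.fromhex("62f1742858c2"))
xmm_add = decode_evex(bytes.fromhex("62f1740858c2"))
```

The three encodings share the same map and opcode byte and differ only in the length field, which is why the vector width is a property of the compiled instruction stream.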

Covfefe

Member

> I believe they are, but I am interested in hearing your argument on this.

If the official spec doc from Intel removing the phrase "optional 512-bit" doesn't convince you, then I don't know what will. At this point I'm done arguing. The discussion has run its course and it's blocking up the thread.

branch_suggestion

Senior member

> Now that's an argument for a new market - PC gaming market basically, and initially it might be good, so first year sales might be 2-3 mln, but consoles are designed for 6-7 and now looks like even 8 year lifecycles, but PCs evolve, so this PC will be fixed in time few years after release, sales will drop to near zero.

Oh that is the cute part, they can release upgraded versions every 2-3 years instead of 7.

Since y'know, it is a PC.

Also Moore's Law is dead, the value prop will remain strong over the intended lifespan.

> Plus it's competing against big OEMs who make far more boxes and get far better economies of scale than something that will sell at best level of Steam Deck (8 mln units).

What?

> One big negative is that form factor will be limiting upgrades, so it's not real desktop that can last for a while, doubt a lot of PC gamers will be interested - it's basically neither here nor there, and in 2027/28 when this thing comes out RDNA5 dGPU will be available for decent upgrades in PCs.

If they only launch AT2 then you are getting barely any more perf from discrete, unless you pay the green tax.

Of course it isn't intended to replace enthusiast DIY, but really to be an easy reference gaming PC platform that will have cyclical upgrades to stay on top.

If you already have a good PC, at most it can exist as a supplemental box for when you just wanna play curated stuff.

> Also there is question of margin - they won't sell it at a loss or even break even, so we might see $1200-$1500 range with the way memory inflation gone: 32 GB GDDR7 won't be cheap.

Well it is 36GB, and that is still way cheaper than the equiv desktop PC when it launches.

> PS6 will kill it.

The whole point is they no longer compete with the PS6, it is all alone, except the handheld is designed to crush Switch 2 into a fine paste.

Unless you consider PC to be competing with console.

> Long and short, intel realized how stupid it would be to carve out a 256b niche for a dying platform.

AMD definitely doesn't need the complication of AVX10.2 as long as compilers support AVX512. AMD has decided that having different architecture e and p cores isn't their strategy so it really doesn't help AMD at all (except for the new instructions in 10.2).

I don't expect to see AVX10.2 support in any Zen or AMD architecture until such a time as AMD decides to make different architecture cores (not just different cache and different layouts to suit needs).... unless AMD wants to keep x86 consolidated to fend off ARM?

APX is another story. I think AMD should have full support of APX NO LATER than ZEN 7 (Really should be in Zen 6 IMO).

I mean, I'm glad that Intel FINALLY got dragged into x86-64 by AMD and we went from 8 registers to 16 GPRs (one has to wonder why they didn't make the jump to 32 registers when they made the jump to 64 bit). I think that APX will bring x86 up to snuff (mostly) with ARM in areas where it is lagging. Note, I still think x86 is ahead of ARM in other aspects.

Schmide

Diamond Member

> AMD definitely doesn't need the complication of AVX10.2 as long as compilers support AVX512. AMD has decided that having different architecture e and p cores isn't their strategy so it really doesn't help AMD at all (except for the new instructions in 10.2).

Zen 6 supports most everything in AVX10.2 including FP16, Bit Reversal, and possibly 32 zmm registers.

The only question is will they add the APX (32 gprs) as well.

Considering everything is held in the physical register files that are 224+, it really comes down to doubling the register alias table and implementing the decode of the APX instructions. Seems pretty probable.
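A toy Python model of that point about renaming: the physical register file stays the same size and only the alias table grows from 16 to 32 architectural entries. The 224-entry figure is taken from the post; the class and its behavior are a simplified, hypothetical illustration (freeing old mappings at retirement is left out), not a description of any Zen core.

```python
class RegisterAliasTable:
    """Maps architectural registers onto a shared physical register file."""

    def __init__(self, num_arch_regs: int, num_phys_regs: int = 224):
        # Each architectural register starts out bound to one physical register.
        self.table = list(range(num_arch_regs))
        self.free = list(range(num_arch_regs, num_phys_regs))

    def rename_dest(self, arch_reg: int) -> int:
        """Allocate a fresh physical register for a new write to arch_reg."""
        phys = self.free.pop(0)
        self.table[arch_reg] = phys
        return phys

    def free_count(self) -> int:
        """Physical registers still available for in-flight renames."""
        return len(self.free)


x86_64 = RegisterAliasTable(num_arch_regs=16)   # today's 16 GPRs
apx = RegisterAliasTable(num_arch_regs=32)      # APX's 32 GPRs
new_phys = apx.rename_dest(31)                  # r31 only exists with APX
```

In this model the cost of the extra 16 architectural registers is a larger table and 16 fewer physical registers left over for in-flight renames.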


> NOTHING IN AVX10.2 REQUIRES 256b OR 512b DATA PATHS. The entire data path could be 128b.

That's true for AVX512 with extensions too. Both AVX512 and AVX10 do not define how hardware should implement them. That has already been said in this thread...

> Before I go into ANY detail, the MOST important thing to understand about AVX10.2 is that it is a VLA ISA (Vector Length Agnostic).

That's wrong. Like AVX512 it just offers 3 fixed lengths to choose from. That's nowhere near true VLA like ARM's SVE.

> NOTE: These are NOT in the instructions. The instructions are agnostic to the bit width supported. This is what makes it special.

Once again it's wrong. Take a look at the example below. AVX512 and AVX10.2 compile to the same assembly. You can clearly see that both zmm and ymm registers are used. The ARM SVE example (furthest to the right) is a true VLA example, as nowhere does it make a distinction about the size of the target register. Everything is just Z{number}.s where s denotes we are dealing with 32b floats.

https://godbolt.org/z/PTc5xobfh

> If I have messed this up, someone let me know.

Yes you did.

https://www.phoronix.com/news/Intel-AVX10-Drops-256-Bit this Phoronix article also links to revised Intel docs on the matter.

> Before I go into ANY detail, the MOST important thing to understand about AVX10.2 is that it is a VLA ISA (Vector Length Agnostic). This is done EXPLICITLY to support the same compiled code across different bit width implementations of different CPUs, PERIOD. This is the entire POINT of the new instruction set.

No. That is simply wrong. AVX10.2 is not VLA. The vector lengths are encoded into instructions. If code is compiled to use 128-bit registers, it will run on any AVX10.2 CPU, but always use 128-bit registers. If it is compiled to use 512-bit registers, it will only run on implementations with 512-bit registers.

> NOTE: These are NOT in the instructions. The instructions are agnostic to the bit width supported. This is what makes it special.

This is incorrect. Any instruction may use any register size, but the register size is encoded into instructions.

Vector length agnostic isas are ones like SVE(2) and RVV, which allow code compiled for one vector length to be used on a wider or narrower architecture, and always run at the full capability of the architecture.
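To make the distinction concrete, here is a toy Python model (not real SVE, RVV or AVX code). `hw_lanes` stands in for the vector length the hardware reports at run time, which is how SVE/RVV-style loops discover their width; `fixed_add` models code whose width was chosen at compile time. All names are hypothetical.

```python
def vla_add(a, b, hw_lanes):
    """Vector-length-agnostic loop: the stride comes from the hardware at run time."""
    out = []
    for i in range(0, len(a), hw_lanes):
        # Slicing plays the role of the predicate that masks off the tail.
        out.extend(x + y for x, y in zip(a[i:i + hw_lanes], b[i:i + hw_lanes]))
    return out


def fixed_add(a, b, compiled_lanes, hw_max_lanes):
    """Fixed-width code: the width is baked into the instructions at compile time."""
    if compiled_lanes > hw_max_lanes:
        raise RuntimeError("illegal instruction: vector width not supported")
    out = []
    for i in range(0, len(a), compiled_lanes):
        out.extend(x + y for x, y in zip(a[i:i + compiled_lanes], b[i:i + compiled_lanes]))
    return out


a = list(range(20))
b = [10] * 20
expected = [x + 10 for x in a]
```

In this model `vla_add` gives the same answer for any `hw_lanes` and uses the full width each time, while `fixed_add` compiled for 4 lanes stays at 4 lanes on wider hardware, and a 16-lane build fails on an 8-lane machine.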

> AMD definitely doesn't need the complication of AVX10.2 as long as compilers support AVX512. AMD has decided that having different architecture e and p cores isn't their strategy so it really doesn't help AMD at all (except for the new instructions in 10.2).

AMD has already announced support for AVX10.

> That's wrong. Like AVX512 it just offers 3 fixed lengths to choose from. That's nowhere near true VLA like ARM's SVE.

> No. That is simply wrong. AVX10.2 is not VLA. The vector lengths are encoded into instructions. If code is compiled to use 128-bit registers, it will run on any AVX10.2 CPU, but always use 128-bit registers. If it is compiled to use 512-bit registers, it will only run on implementations with 512-bit registers.

I stand corrected. Since this is true, what advantage does AVX10.2 have?

> AMD has already announced support for AVX10.

For what purpose I wonder?

Schmide

Diamond Member

> For what purpose I wonder?

They have the functionality already. In fact zen5 supports the most avx512 flags of any processor.

It all comes down to adding a bit of microcode such that they understand the instructions.

None of the CPUs, AMD or Intel, have dedicated FP16/BF16 hardware. They just convert, operate, and convert back. Most video cards do the same.
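A small Python sketch of the convert, operate, convert-back pattern the post describes, using the standard `struct` module's half-precision (`"e"`) and single-precision (`"f"`) formats. The helper names are hypothetical, and this only models the arithmetic; it says nothing about what any particular CPU does internally.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def fp16_add_via_fp32(a: float, b: float) -> float:
    """Add two FP16 values by widening, operating in FP32, then narrowing."""
    wide_a = to_fp32(to_fp16(a))        # convert up
    wide_b = to_fp32(to_fp16(b))
    wide_sum = to_fp32(wide_a + wide_b)  # operate at the wider precision
    return to_fp16(wide_sum)             # convert back


small = fp16_add_via_fp32(1.0, 2.0)
lossy = fp16_add_via_fp32(2048.0, 0.5)
```

The second call shows the cost of narrowing: at 2048 the spacing between FP16 values is 2, so the 0.5 is lost when the result is converted back.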

APX is another story. I think AMD should have full support of APX NO LATER than ZEN 7 (Really should be in Zen 6 IMO).

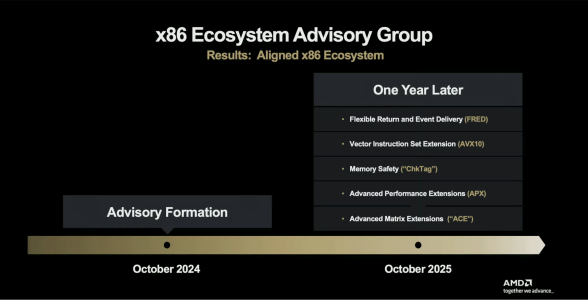

I would assume that APX could arrive with Zen 6. For Zen 6 AMD announced "new AI datatype support" and "more AI pipelines". That could be AMX and/or APX. But that is not clear to me. AMD does not state AMX or APX in any of their documents or blogposts (only Mark Papermaster had APX on one slide regarding x86 consortium, AMX was never shown). Zen 6 brings at least FP16 support and INT8 VNNI for AVX512 (where we have documented evidence).Zen 6 supports most everything in AVX10.2 including FP16, Bit Reversal, and 32 zmm registers?

The only question is will they add the APX (32 gprs) as well.

ACE is more or less confirmed for Zen 7. AMD officially states "New Matrix Engine" and some leaked documents showed ACE (but half of the max. specified throughput as far as I remember).

Edit:

"A set of GNU compiler patches confirms this, as the new open-source enablement adds AVX512_BMM, AVX_NE_CONVERT, AVX_IFMA, AVX_VNNI_INT8, and AVX512_FP16 to GCC. These instructions are particularly interesting due to their intended use cases. AVX-512 BMM is designed for bit matrix manipulation, significantly accelerating local AI deployments. With native FP16 calculations and AVX VNNI in INT8 format on desktops, users will no longer need to rely on Intel Xeon CPUs for AVX-related development and process acceleration. This development benefits more users across the entire product family based on Zen 6. AMD is now directly competing with Intel in AVX development, meaning the difference between the two will come down to implementation strategy. There is also increasing evidence that Intel's next-generation "Nova Lake" will reintroduce AVX-512 functionality to desktops, suggesting that advanced vector and matrix acceleration is set to expand on consumer PCs."

Source: https://www.techpowerup.com/342763/amd-zen-6-isa-to-bring-avx512-fp16-vnni-int8-and-more

Edit 2:

Does anybody know, which data types ACE will support? FP8, FP6, FP4? MXFP versions?


> I stand corrected. Since this is true, what advantage does AVX10.2 have?

Simplified feature detection. To be clear, it just doesn't add much over AVX512. The original plan was to have basically AVX-512 with different register lengths, and a runtime way of figuring out which code path to dispatch. The industry revolted, no-one wants the complication of shipping multiple code paths. What's left is just AVX-512 with a better feature detection scheme bolted on top. (Which no-one will use for decades, because there is a significant base of AMD AVX-512 hardware, both on desktop and in server, and people will use the old style of feature detection to make sure code runs on them too.)

> For what purpose I wonder?

For the same purpose they have added all the previous Intel SIMD extensions. Compatibility means following even the missteps. It helps that it is very cheap to add, as it is just feature detection.

The funny/sad part is that the register length is the least important part of AVX-512, the ability to mask memory operands is much more important. Had intel introduced the features as AVX10.2/256, everyone would still be using that. That was imho the objectively correct way to expand AVX. But they shipped AVX-512 instead, then unshipped it on desktop, and then wanted to segment the ISA by register width. And all of that was just horrible for everyone trying to ship software that uses the damn thing.

On Linux, Intel lobbied heavily to add AVX-512 to the x86-64-v4 baseline. And just as it was adopted, Intel started talking about AVX10, and register widths that vary by product segment. Everyone revolted, including Torvalds himself putting his foot down. A CPU on linux is either x86-64-v4, meaning it has 512-bit registers, or it is x86-64-v3, meaning software would have to be compiled without support for AVX-512 and AVX10 by default. I believe Microsoft did the same thing, and told Intel to go pound sand. Segmenting the product line by ISA features is terrible for software, because every additional dispatch path adds testing overhead. What we want is linearly improving chips, so you can pick your minimum supported feature set, and target that.
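A rough Python sketch of the feature-detection difference described in this post. It works on raw CPUID register values passed in by the caller, so it runs anywhere. The bit positions are my understanding of Intel's documentation (leaf 7 sub-leaf 0 EBX for the AVX-512 flags, leaf 0x24 EBX[7:0] for the AVX10 version) and should be checked against the current spec; the function names and sample register values are made up for illustration.

```python
# CPUID.(EAX=7,ECX=0):EBX bit positions for the AVX-512 subsets in x86-64-v4.
AVX512_V4_BITS = {
    "AVX512F": 16,
    "AVX512DQ": 17,
    "AVX512CD": 28,
    "AVX512BW": 30,
    "AVX512VL": 31,
}


def has_x86_64_v4_avx512(leaf7_ebx: int) -> bool:
    """Old style: every required subset flag is tested individually."""
    return all((leaf7_ebx >> bit) & 1 for bit in AVX512_V4_BITS.values())


def avx10_version(leaf24_ebx: int) -> int:
    """AVX10 style: one version number, read from CPUID leaf 0x24 EBX[7:0]."""
    return leaf24_ebx & 0xFF


def supports_avx10(leaf24_ebx: int, wanted_version: int) -> bool:
    """Versions are cumulative, so one comparison replaces the flag list."""
    return avx10_version(leaf24_ebx) >= wanted_version


# Made-up register values for illustration only.
all_v4_flags = sum(1 << bit for bit in AVX512_V4_BITS.values())
missing_vl = all_v4_flags & ~(1 << 31)
```

As the post notes, software that also has to run on existing AVX-512 hardware would keep the flag-by-flag check around regardless.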


> I stand corrected. Since this is true, what advantage does AVX10.2 have?

It is the standard that all future CPUs from AMD and Intel will implement.

The fragmentation of the x86 ISA that Intel has brought about over the last two decades has been detrimental to x86.

> For what purpose I wonder?

To put an end to the endless list of AVX512 subsets.

adroc_thurston

Diamond Member

> For what purpose I wonder?

Cuz it's the latest SIMD ISA.

Win2012R2

Golden Member

> Oh that is the cute part, they can release upgraded versions every 2-3 years instead of 7.
>
> Since y'know, it is a PC.

Ok, so semi-custom design costs will have to be amortised over 2-3 years rather than 7-8, on fairly new processes too. I don't know exactly how much it costs, but it should be pretty expensive; I doubt it's less than $100 mln, more likely a few hundred million. If you sell just 4-6 mln units then it's a big %.

> What?

Selling a cheap gaming PC is competing directly with OEMs that make Microsoft far more money from software sales.

> Well it is 36GB, and that is still way cheaper than the equiv desktop PC when it launches.

Nah it won't be, and in any case it will be a lot more expensive than PS6, which is the primary market it will compete in.

> APX is another story. I think AMD should have full support of APX NO LATER than ZEN 7 (Really should be in Zen 6 IMO).

Zen6 seems highly unlikely to me, I'd love to be pleasantly surprised.

branch_suggestion

Senior member

> Ok, so semi-custom design costs will have to be amortised over 2-3 years rather than 7-8, on fairly new processes too - I don't know exactly how much it costs, but it should be pretty expensive, doubt it's less than $100 mln, more likely a few hundred millions: if you sell just 4-6 mln units then it's a big %.

The Magnus SoC die can last 7 years just fine, just swap the GMD with a shiny new one.

> Selling cheap gaming PC is competing directly with OEMs that make Microsoft far more money from software sales.

Well it will likely lack all the freedoms of a DIY PC.

> Nah it won't be and in any case a lot more expensive than PS6, which is the primary market it will compete in.

If you wanna play PC games and do (some) PC stuff, PS6 is not an option.

If you wanna do both then you gotta have a PS6 and a PC.

Sony ain't releasing PC ports ever again.

> Zen6 seems highly unlikely to me, I'd love to be pleasantly surprised.

It is Z7 with the full new featureset yes. Maybe by Z9 they can have a proper new AArch64 style clean sheet ISA.

Tachyonism

Member

> It is Z7 with the full new featureset yes. Maybe by Z9 they can have a proper new AArch64 style clean sheet ISA.

So you're thinking that Intel would also go along with that new ISA do-over?

branch_suggestion

Senior member

> So you're thinking that Intel would also go along with that new ISA do-over?

Depends on Unified core plans, that is ~Z8 time so could get interesting.

CouncilorIrissa

Senior member

> Zen6 seems highly unlikely to me, I'd love to be pleasantly surprised.

It's very unrealistic, yes. Given APX was mostly spearheaded by Intel and only finalized in late 2024, APX support in Zen 6 seems very unlikely; the RTL would've been long done by then. The CPU core roadmap from FAD '25 would've mentioned this as well.
