Panino Manino

Golden Member

Nitpicking: IBM chose the 8088, not the 8086 😉

"Fool me once, shame on you.

Fool me twice, shame on me."

It's the same reason why x86 continues to live. Despite its IPC superiority, the Apple M4 cannot run a GeForce RTX 4090 or 5090, or a Radeon RX 9070 XT. And suppose Apple came out with a micro-ATX board for it tomorrow (very unlikely), how interested would AMD/Nvidia be in rewriting their GPU drivers for it? Apple would have to PAY both for the driver development and then hope the cost pays off in the end. They could totally go down this path but they are rolling in so much cash that they go "meh" at even the thought of it.

As you said, very unlikely. Should really be "no chance in hell". Apple loves their walled garden and controlling everything. They would never allow anyone to possibly tarnish their brand. Also, it's not like they need the money.

Apple struck gold, oil, and a mine full of diamonds with the iPhone. Everything else matters little.

…and to think: the first iPhone almost had an Intel chip inside! 🤣

The Smartphone is the classic example of the thing we didn't even know we desperately needed.

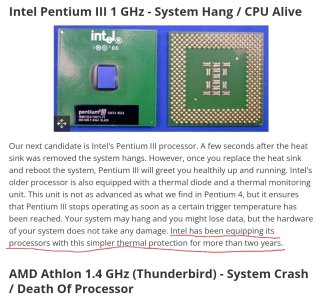

Socket A Thunderbirds were freakin awesome. I am never a big fan of these stacked comparisons. 6 months to a year before this, Intel CPUs would do the same. Intel came up with their SpeedStep for slowing down as temperatures got higher first, and all of a sudden it was "look how bad AMD is because their CPUs will burn up without a cooler". Instead of being a cool feature of Intel CPUs, it became proof that AMD chips ran hot and were bad.

It wasn't that AMD chips ran hot, it was that they lacked effective thermal protection.

Back in the day Intel chips had built-in thermal protection well before SpeedStep. They would just stop working if they got hot, but they were not damaged and worked fine once they cooled down.

I specifically remember because I sent an AMD computer to a friend. Somehow it ended up with the heatsink off and the AMD CPU burned up dead.

Also one of the AMD owners (who gave me a hard time for buying Intel) burned up his AMD CPU. The AMD owners made it sound like this would never happen but it did happen, twice that I know of personally.

It was a dumb thing not to have. Thousands of transistors and you can't put one thermal diode in there?

Well it isn't as if Intel integrated the thermal diode 10 years before, huh.

The corporate world knew for a long time prior. Things like the PalmPilot and the Compaq iPAQ were around for years prior, and there were teams actively working on the concepts of converged devices. Apple gets credit for being the first to pull it off.

Crackberry was the homerun.

Yeah, IDK, the video at Tom's is my earliest recollection of the topic. Tom's indicates that Intel's 2-year-old solution was better than AMD's most recent efforts at the time.

I did run across some evidence that Intel might have had some sort of thermal protection as early as the P1:

Pentium® OverDrive® processors with MMX™ technology

Runs Slower if the Fan is Disabled

End of Interactive Support Announcement

These products are no longer being manufactured by Intel. Additionally, Intel no longer provides interactive support for these products via telephone or e-mail, nor will Intel provide any future software updates to support new operating systems or improve compatibility with third party devices and software products.

THESE DOCUMENTS ARE PROVIDED FOR HISTORICAL REFERENCE PURPOSES ONLY AND ARE SUBJECT TO THE TERMS SET FORTH IN THE "LEGAL INFORMATION" LINK BELOW. For information on currently available Intel products, please see www.intel.com and/or developer.intel.com

Symptom

The system seems slower than normal with the Pentium® OverDrive® processor with MMX™ technology installed. Across all applications, the performance drop appears the same. Diagnostics report the processor working at the proper speed.

Description

The fan is not working. The thermal protection circuitry built into the microprocessor is reducing the number of instructions performed, thus slowing the system.

Yep, from memory, it was (IMO) from Intel's previous generation, meaning the socketed PIII. Not really a lot earlier than AMD.

Then, I would not take Tom's as a source of anything but garbage. Another source would be better but it is around that era.

AFAIR the next AMD generation had a diode, which should mean the Athlon XP Palomino and later ones.

If we're going farther afield, there are a couple of microcontrollers whose designers I would very much like a word with in a dark alley...

A good lesson to us to properly define our terms! Re microcontrollers, I hear you; you've awakened memories that I've tried to forget, even if I wasn't directly involved. Ugh.

I know that this won't be a champion, but can we consider the Tensor G5 a case study in how the disaster came about?

I mean, it uses TSMC 3nm, which is considered a superior node, and has a decent CPU configuration, but then chooses a PowerVR GPU which is unoptimized, so all the good things they did get royally screwed.

Gaming-wise it has a lot of glitches; processing-wise, it performs like a Snapdragon 8 Gen 2.

And yeah, it is even being compared to the Kirin 9020... a "7nm" processor that can go toe to toe with it. It leaves me thinking... what did Google do wrong to end up like that?

Compared to some of the train wrecks on this list it doesn't even break the top 10 😂

The biggest problem for the market is that there was never a successful collaboration of resources by any one alternative. Mac volume was never enough on its own for the same economy of scale. Commodore and Atari imploded, with the XT line hitting a wall and Amiga barely limping along. You get MIPS, VAX, SPARC and Alpha along the way, but none can outgrow their niche. If they had formed an industry consortium, rallied around the 68000 and bespoke accelerators or a compatible high/low product approach with a common programming and OS kernel platform, they would have been a force for change.

The accidental greatness of the PC - and a reason why I think it was just that it won - was that it wasn't hardwired to have particular graphics and sound chips and that it had a base of a common platform (BIOS) and OS (PC DOS/MS-DOS). IBM didn't intend it, but this computer ended up being not just a one-company play like all the competition (say, Amiga, Atari, Apple and various high-end stuff), but a platform. The viability of clones and their coming was a MASSIVE THING.

Conventional wisdom in some areas is that the PC market is a good example of bundling vs unbundling theory. In the early days of computing (the Commodore/Sun era) the markets were too small to unbundle - you had to do it all in house because there wasn't enough volume to tip up a component/OEM model where the component makers could stay in business.