
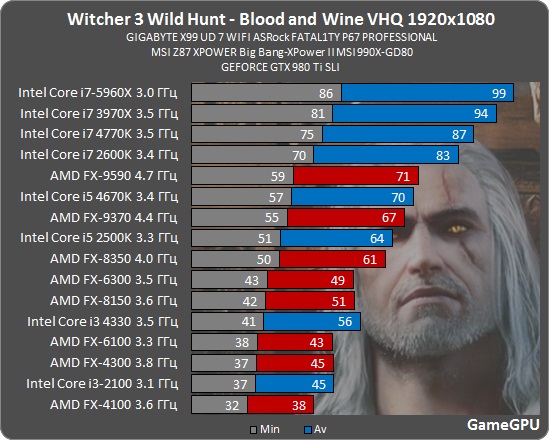

Witcher III: Blood and Wine CPU benchmarks - MOAR CORES

- Thread starter escrow4


VirtualLarry

No Lifer

If you have anything less than a 2500K and you want to play AAA games with decent minimums, it's upgrade time.

It certainly looks like that, but ... where is the i3-6100 / i3-6320? Those Skylake i3s often bench very much the same as a non-overclocked i5-2500K.

DidelisDiskas

Senior member

Do lower-end cards like the R7 360 or the 750 Ti get a considerable boost from a good CPU (versus something like a Celeron or an 860K)?

Do lower-end cards like the R7 360 or the 750 Ti get a considerable boost from a good CPU (versus something like a Celeron or an 860K)?

You need balance in any system. Still, those GPUs will hold you back more than an old CPU. They can barely sustain 30 FPS.

@VirtualLarry

They haven't updated their CPUs in a long while.

DidelisDiskas

Senior member

You need balance in any system. Still, those GPUs will hold you back more than an old CPU. They can barely sustain 30 FPS.

So there would be no noticeable improvement even if one played those games at lower settings?

So there would be no noticeable improvement even if one played those games at lower settings?

Unless it's a Core 2 or similar vintage. Anything remotely modern should be fine. What CPU do you have in mind?

DidelisDiskas

Senior member

Unless it's a Core 2 or similar vintage. Anything remotely modern should be fine. What CPU do you have in mind?

I was just curious in general, as I was surprised that a CPU that is not even twice as fast in general (4670 vs 4100) can have such a big impact on performance when working with higher-end GPUs.

Yuriman

Diamond Member

I was just curious in general, as I was surprised that a CPU that is not even twice as fast in general (4670 vs 4100) can have such a big impact on performance when working with higher-end GPUs.

If you consider Cinebench to be a valid benchmark, a stock 4670 is actually a little more than twice as fast as a 4100, scoring 6.41 and 3.12 respectively.
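For reference, the "a little more than twice" figure can be checked directly from the two scores quoted above. This is only a quick arithmetic sketch; the function name `speedup` is made up for illustration, and the numbers are just the ones from the post.

```python
# Cinebench scores as quoted in the post (stock 4670 vs 4100).
SCORE_4670 = 6.41
SCORE_4100 = 3.12


def speedup(fast: float, slow: float) -> float:
    """Return how many times higher the `fast` score is than the `slow` one."""
    return fast / slow


ratio = speedup(SCORE_4670, SCORE_4100)
print(f"4670 vs 4100: {ratio:.2f}x")  # prints "4670 vs 4100: 2.05x"
```

So by this one benchmark the gap is roughly 2.05x, which is why "not even twice as fast" undersells it slightly.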

Do lower-end cards like the R7 360 or the 750 Ti get a considerable boost from a good CPU (versus something like a Celeron or an 860K)?

Maybe if you force it, like lowering most settings (even resolution) while keeping the settings that are more demanding on the CPU at max.

At 1080p medium, not really... those cards run near 30 FPS, so it's best to use a 30 FPS cap and enjoy a consistent framerate.
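To illustrate why a 30 FPS cap can feel smoother on a card that hovers near 30 FPS, here is a small sketch of an idealized frame cap. The render times are made-up numbers, not measurements, and `capped_frame_times` is a hypothetical helper; a real limiter (in-game or driver-level) is more involved than this.

```python
from typing import List


def capped_frame_times(render_ms: List[float], cap_fps: float) -> List[float]:
    """Idealized cap: no frame is shown sooner than 1000 / cap_fps milliseconds."""
    floor_ms = 1000.0 / cap_fps
    return [max(t, floor_ms) for t in render_ms]


# Hypothetical per-frame render times (ms) for a card running slightly above 30 FPS.
render_ms = [26.0, 31.0, 29.0, 33.0, 27.0]

uncapped_spread = max(render_ms) - min(render_ms)
capped = capped_frame_times(render_ms, cap_fps=30)
capped_spread = max(capped) - min(capped)

print(f"uncapped frame-time spread: {uncapped_spread:.1f} ms")
print(f"capped frame-time spread:   {capped_spread:.1f} ms")
```

In this toy case every frame is faster than 33.3 ms, so the cap evens them all out. Frames that take longer than 33.3 ms would still show up as dips; the cap only removes the variation above 30 FPS.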

DidelisDiskas

Senior member

If you consider Cinebench to be a valid benchmark, a stock 4670 is actually a little more than twice as fast as a 4100, scoring 6.41 and 3.12 respectively.

Still, that's almost a linear performance hit in terms of CPU speed. Which part of the process causes this, considering games are much more GPU heavy?


Still, that's almost a linear performance hit in terms of CPU speed. Which part of the process causes this, considering games are much more GPU heavy?

On the contrary, games are rather CPU heavy now thanks to multi-core consoles. Need to squeeze the power out of those tablet CPUs. 😉
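One rough way to picture the near-linear hit is a simple bottleneck model: assume CPU and GPU work on frames in parallel, so the slower of the two sets the frame rate. This is a simplification, not how any particular engine is known to behave, and the millisecond figures below are invented for illustration.

```python
def frame_rate(cpu_ms: float, gpu_ms: float) -> float:
    """FPS under the assumption that the slower of CPU and GPU sets the pace."""
    return 1000.0 / max(cpu_ms, gpu_ms)


# Hypothetical per-frame costs in milliseconds (illustrative only).
fast_cpu_ms, slow_cpu_ms = 10.0, 20.0          # the slow CPU needs twice as long
high_end_gpu_ms, low_end_gpu_ms = 8.0, 33.0

for gpu_name, gpu_ms in [("high-end GPU", high_end_gpu_ms),
                         ("low-end GPU", low_end_gpu_ms)]:
    fast = frame_rate(fast_cpu_ms, gpu_ms)
    slow = frame_rate(slow_cpu_ms, gpu_ms)
    print(f"{gpu_name}: fast CPU {fast:.0f} FPS, slow CPU {slow:.0f} FPS")
```

Under these assumptions the high-end GPU setup is CPU bound, so halving CPU speed halves the frame rate, while the low-end GPU setup stays near 30 FPS with either CPU because the GPU is the limit.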

superstition

Platinum Member

Looks like the 9590 is throttling.

TheELF

Diamond Member

Keep in mind that SLI runs twice the drivers, putting smaller CPUs at a disadvantage.

Also, always look at the video of the tests they are doing. It's no coincidence they are called GameGPU: they always test in CPU-undemanding areas, where multicore CPUs get the advantage of just rendering frames with all their cores, something that does not happen if there is (demanding) game code running.

Old example from Crysis 3, but very relevant.

Also, always look at the video of the tests they are doing. It's no coincidence they are called GameGPU: they always test in CPU-undemanding areas, where multicore CPUs get the advantage of just rendering frames with all their cores, something that does not happen if there is (demanding) game code running.

Old example from Crysis 3, but very relevant.

A 2011 2500K that you can buy used for very low prices is already out of reach for any AMD CPU with a slight OC, based on this benchmark. And BCLK overclocking is still possible with cheap non-K Skylake CPUs on select motherboards.

Also the 'more expensive Haswell i5' does support this feature called overclocking as well. 🙂

Also the 'more expensive Haswell i5' does support this feature called overclocking as well. 🙂


Oh, there's no need to go used, nor to move the goalposts to OCing low-end parts and comparing them to (more expensive) chips at stock like you usually do. I'm sure you can find some (new) discounted Haswell i5 + MB combos for very low prices depending on where you live. The 2500K bit was just to show that, in 2016, after years of multi-core optimizations and PS4/X1 ports, performance per core (IPC/clocks) still matters. 😛


Zstream

Diamond Member

Lol, modern-day Rollowell. If we are changing goalposts, then I guess you didn't know there are used and cheaper FXs out there 🙄

Zstream

Diamond Member

Witcher 3 doesn't scale beyond four threads, but it also loves hyperthreading. Any increase from m0ar cores likely stems from the larger caches of those CPUs more than anything.

Isn't that kinda an oxymoron or are you saying 4 threads and that's it?

Isn't that kinda an oxymoron or are you saying 4 threads and that's it?

I'm saying it uses four threads and that's it. HT can improve performance in other ways, like reducing memory latency and mitigating thread stalls. So the boost from HT enabled CPUs in that graph isn't because the game itself is using those threads, but probably because the code is latency bound.

I'm saying it uses four threads and that's it. HT can improve performance in other ways, like reducing memory latency and mitigating thread stalls. So the boost from HT enabled CPUs in that graph isn't because the game itself is using those threads, but probably because the code is latency bound.

http://wccftech.com/intel-skylake-6700k-6600k-amd-fx-8370/

^^ thanks for proving my point 😀

On another note, WCCftech is one of the least competent tech sites for testing I can think of. They should stick to news.

Witcher 3 is only CPU bound in Novigrad, and likely also in Beauclair. Those are the only two areas where HT is going to make a difference, and I know that GameGPU tested their CPU run in Beauclair. In fact, this is their test run for the CPU:

GameGPU CPU test run for Blood and Wine

BTW, how do you embed YouTube clips? I tried using the insert function but I got an error.

