This. Why is it so hard for some to understand that game engines scaling to the memory available doesn't mean they NEED that amount on the card?

Some understand it perfectly, because it wasn't an issue for the last 8 months with 970 SLI or 980 SLI, but now a new metric needs to be created where AMD can't compete, to justify sticking to the preferred brand. Also, a game might use > 4GB of VRAM, but at that point the settings are too heavy for any single GPU out today anyway (Example #1). Alternatively, a game can use > 6GB of VRAM, but that doesn't mean it runs poorly on a 4GB card (Example #2). You can also have a situation where the VRAM usage reported in MSI Afterburner is very high, making you believe a 4GB card would choke, when in reality it wipes the floor with a 6-12GB card (Example #3).

Dead Rising 3 - Example #1

COD AW - Example #2

SoM - Example #3 (R9 295X2 is 63% faster than TX)

Do you think it's a coincidence several sites are coming out with articles about VRAM usage in 4K just before Fury launches?

It would matter if their own analysis supported this hypothesis, but it seems they just make stuff up.

"On the GeForce GTX 980 Ti we clearly exceed 4GB of VRAM in Dying Light at 1440p and 4K. We also exceed 4GB of VRAM at 4K in GTA V and Far Cry 4. What this shows is that these games, the GTX 980 cards "want to" and can, exceed the 4GB framebuffer if more VRAM is exposed.

This means the 4GB of VRAM on the GTX 980 is limiting these cards, but it is not on the 6GB GeForce GTX 980 Ti."

980 SLI > Titan X in GTA V @ 4K. If 4GB of VRAM were limiting the 980, the Titan X would be faster.

980 SLI > Titan X in Dying Light @ 4K

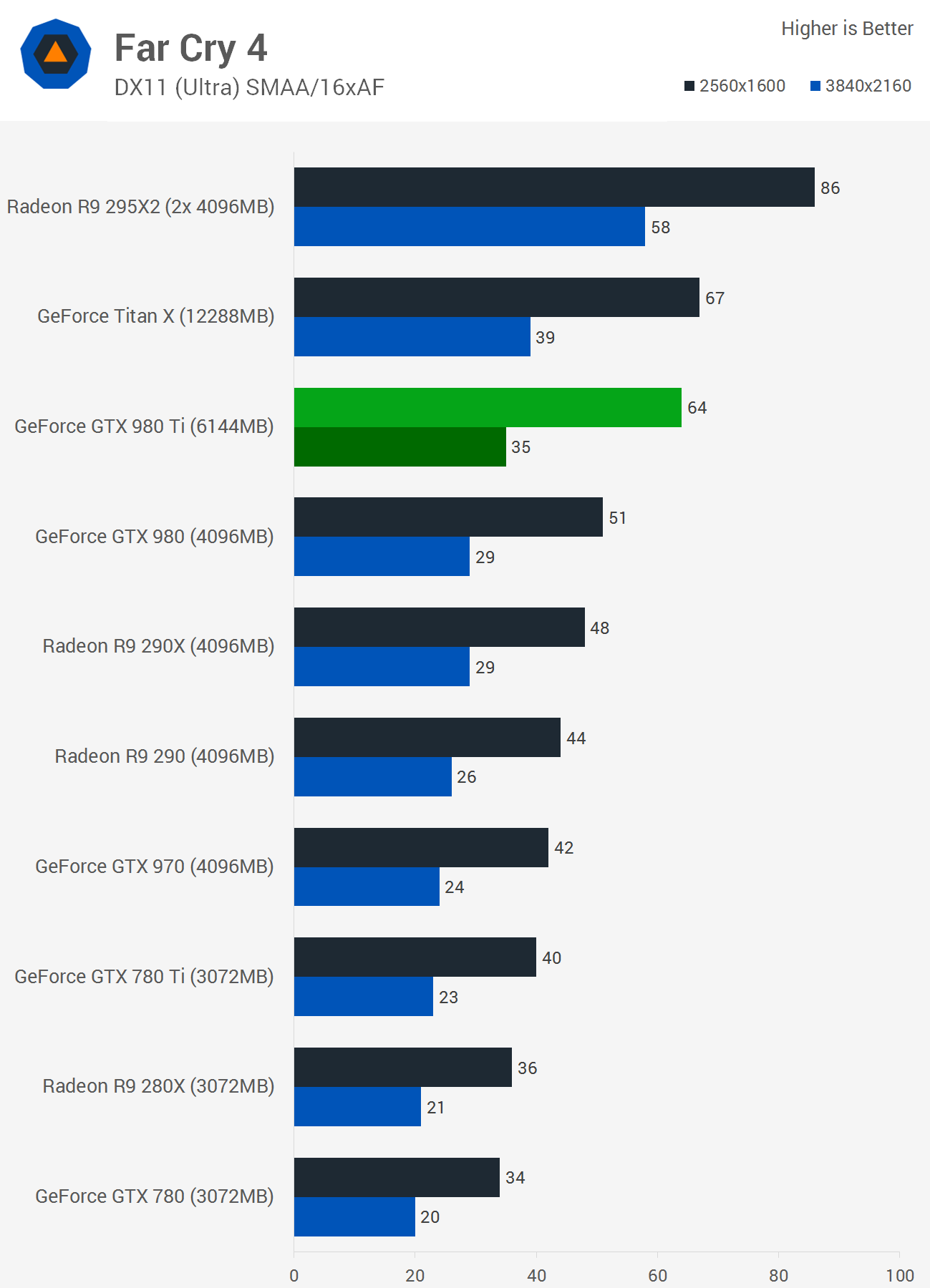

I couldn't find 980 SLI results for Far Cry 4 @ 4K, but the R9 295X2 crushes a Titan X in that game at 4K.

When a professional review site doesn't understand the difference between required VRAM usage vs. dynamic VRAM usage in a modern PC game, that is an eye-opener.