Do you have an example of this dishonesty and lies?

Not that I can post here (it would be considered member callout), but yes I have a number of them.

That test measured 37 dB.

The Vapor-X is not the same card as the Tri-X that the above poster was referencing.

Well, that answers that.

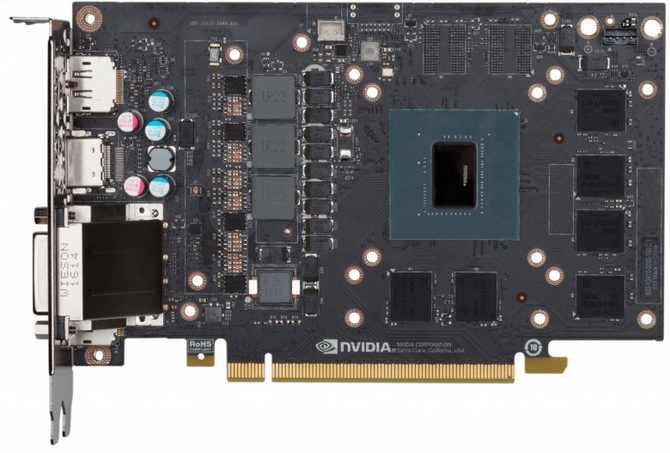

Power input is soldered onto the big holes if the silkscreen is any indication. The connector in the middle looks like it might be for the fan.

So we're just supposed to believe you? Why would it be a member callout? You'd just be posting what they themselves have said.

But the real fact of the matter is that wattage never mattered until nVidia was better at it... (snip wall of text excuses)

Are you for real? Sorry, but this is lame historical revisionism, regardless of how often it gets blindly parroted. When AMD was in the lead, they were absolutely and repeatedly praised for it. Examples:

"Conclusion: Low power consumption, excellent performance per watt" is literally number one on the plus list - April 2011

http://www.techpowerup.com/reviews/AMD/HD_6670/26.html

"It's nice to see the power consumption going down while performance goes up"

"That's some pretty low Power Consumption, even under load"

"Nice power consumption improvements over HD 5670 ... and over HD 4670"

"The Athlon 64 X2 4200+ also consumes less power, at the system level, than the Pentium D 840: just a little bit at idle (even without Cool'n'Quiet) but over 100W under load. That's a very potent combo, all told." - May 2005

http://techreport.com/review/8295/amd-athlon-64-x2-processors/16

Rinse and repeat hundreds of times on dozens of forums and review sites for YEARS: whenever AMD/ATI were in front, they were praised for it versus nVidia's "power hogs". The term "Space Heater" itself sprang up around the P4 era, from AMD users commenting on how the A64 drew as much as 50 watts less. If anything, it's only in the last 2-3 years that AMD has started slipping behind on efficiency, and the very moment the roles were reversed, a new group of "goalpost movers" sprang up on tech forums to "combat bias" by pretending that anything AMD is currently weak at "has always been irrelevant". They simply ended up as "anti-fanboy fanboys" themselves.

And now that we have 14nm, guess what? RX 480 vs second-hand R9 290 comparisons suddenly include "better perf per watt, high efficiency", etc., as a "new" selling point for buying the former over the latter, from the same people who "didn't care" just 3 weeks ago. :sneaky: New RX 480 owners are pumped that with a little undervolting "it draws HALF the power of a 290!". So 150W AMD vs 275W AMD = "that's a great reduction", but 120W nVidia vs 275W AMD = "no one has ever cared about that stuff, you smokescreen fanboy". And this is your "stamping out fanboy bias" methodology?

If efficiency is not important to you personally, that's fine. But unless you're brand new to building computers, don't pretend "no one ever mentioned power consumption before Sandy Bridge / Maxwell", because that's one hell of a parallel-universe fantasy. It has already been debunked repeatedly by looking at reviews and forum comments on AMD products from back when they were ahead, which say the exact opposite of that claim... :thumbsdown:
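The two comparisons quoted in the post can be put side by side with a bit of arithmetic. This is a minimal sketch that takes the post's rough wattages (150W and 120W against a 275W R9 290) at face value; they are the poster's figures, not measurements, and the helper name `power_reduction_pct` is hypothetical.

```python
# Hypothetical helper: percentage reduction in board power when moving
# from a baseline card to a newer one. The wattages below are the rough
# figures quoted in the post, not measured values.
def power_reduction_pct(baseline_w: float, new_w: float) -> float:
    """Return how much lower new_w is than baseline_w, as a percentage."""
    if baseline_w <= 0:
        raise ValueError("baseline_w must be positive")
    return (baseline_w - new_w) / baseline_w * 100.0


comparisons = {
    "150W AMD (RX 480) vs 275W AMD (R9 290)": (275.0, 150.0),
    "120W nVidia vs 275W AMD (R9 290)": (275.0, 120.0),
}

for label, (base, new) in comparisons.items():
    print(f"{label}: {power_reduction_pct(base, new):.1f}% less power")
```

With those figures the first gap works out to roughly 45% and the second to roughly 56%, so by the post's own numbers the comparison people supposedly "never cared about" is the larger of the two.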

That is a weird decision. It avoids the contact resistance a connector would add, but it is more labour-intensive and normally costs more.

About the 1060: can it do Multi-Engine, a.k.a. asynchronous compute?

Anyone know exactly how the 1060 will fare in DX12?

My feeling is that the 6GB will be an issue in DX12 at 1440p with day-one releases, and that it will perform better after driver updates.

Pascal cannot do DX12/Vulkan Multi-Engine. It lacks a real hardware scheduler that allows this flexibility.

How do you define a "real hardware scheduler"?

Something which violates the PCIe spec and promotes your 170W card as a 150W one.

/sarcasm
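For context, the 150W figure in the sarcastic reply matches the rated PCIe power budget for a card fed by the x16 slot plus a single 6-pin connector: up to 75W from the slot and 75W from the 6-pin plug. Below is a minimal sketch of that arithmetic. It assumes a single 6-pin layout (as on the reference RX 480) and takes the quoted 170W draw at face value. The constant and function names are hypothetical.

```python
# Rated power budgets (watts) for a PCIe graphics card.
SLOT_X16_W = 75     # x16 graphics slot
SIX_PIN_W = 75      # one 6-pin auxiliary connector
EIGHT_PIN_W = 150   # one 8-pin auxiliary connector


def rated_budget_w(six_pin: int = 0, eight_pin: int = 0) -> int:
    """Hypothetical helper: total in-spec board power for the given aux connectors."""
    return SLOT_X16_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


budget = rated_budget_w(six_pin=1)  # slot + one 6-pin
claimed_draw_w = 170                # figure quoted in the thread, not a measurement
print(f"Budget: {budget}W, draw: {claimed_draw_w}W, over by {claimed_draw_w - budget}W")
```

This only checks the total. The spec also limits what the slot itself may supply, so a card can exceed spec on the slot even if its total draw looks acceptable.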

He has no clue, like the others who are still spreading these lies. Pascal fully supports "Multi-Engine".

Something nVidia improved over Maxwell.

AMD still lacks FL12_1, Tiled Resources Tier 3 and any VR-improving capabilities.

The reason I ask is that when the Polaris "teaser" materials first showed up, AMD promoted a feature known as a "hardware scheduler." This was a bit strange because GCN has, to my knowledge, what would be considered a "hardware scheduler" and has had one since inception.

Later on, we learned that the "hardware scheduler" AMD was talking about was a special new unit that essentially acts as a souped up Asynchronous Compute Engine, or ACE.

This is why I would like Silverforce11 to clarify his statement around a "real hardware scheduler." Because, strictly speaking, pre-Polaris GPUs didn't have "real hardware schedulers," at least in the context of the "hardware scheduler" block that was introduced with Polaris. But, even the original GCN had hardware schedulers per CU to schedule wavefronts to the vector units.

Already discussed:

http://forums.anandtech.com/showthread.php?p=38323925&highlight=hws#post38323925

You can even go back further, because the HWS units are in Fiji too:

http://forums.anandtech.com/showpost.php?p=37669975&postcount=14

All explained in those posts.

As for hardware vs. software scheduling, read AnandTech's articles on the matter. They've covered it well enough if you're really curious.