The anti-AI thread

Page 14

Kaido

Elite Member & Kitchen Overlord

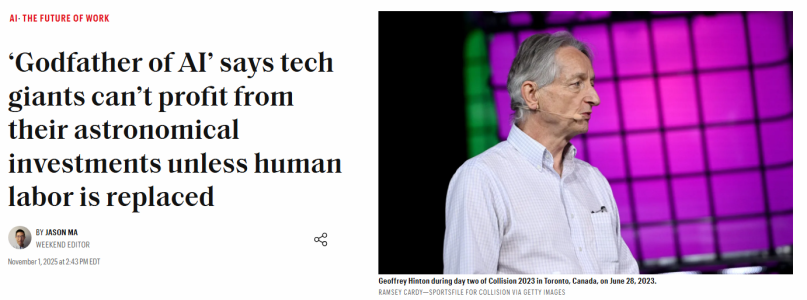

‘Godfather of AI’ says tech giants can’t profit from their astronomical investments unless human labor is replaced | Fortune

“I think the big companies are betting on it causing massive job replacement by AI, because that’s where the big money is going to be.”

mikeymikec

Lifer

‘Godfather of AI’ says tech giants can’t profit from their astronomical investments unless human labor is replaced | Fortune

“I think the big companies are betting on it causing massive job replacement by AI, because that’s where the big money is going to be.”

fortune.com

My personal feelings on this topic, in meme format:

"About that time again, eh chaps?" "Right-o!"

Also:

Kaido

Elite Member & Kitchen Overlord

My personal feelings on this topic, in meme format:

"About that time again, eh chaps?" "Right-o!"

Humans: "Let's make AI do the boring stuff so that we're free to enjoy creative pursuits, like music & art!"

AI: "Strike that. Reverse it."

KMFJD

Lifer

you have got to be shitting me

Kaido

Elite Member & Kitchen Overlord

They backtracked:

OpenAI does damage control after a top exec talked government support for its spending spree

The company cleaned up comments supporting a government guarantee so AI firms can maintain their huge spending on chips and new data centers

OpenAI CEO Says U.S. Shouldn’t Bail out AI Companies

NVIDIA too:

Nvidia CEO Jensen Huang backtracks after saying 'China is going to win the AI race'

Nvidia later released a statement from Huang that hedged those comments. "China is nanoseconds behind America in AI," he said.

It's the latest arms race:

South Korean president calls for aggressive AI spending in budget speech

China is genius:

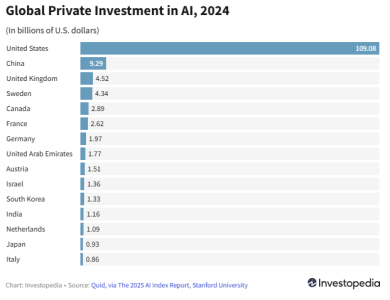

Which countries are investing most in AI?

They let us do all the R&D & then clone it lol:

Kaido

Elite Member & Kitchen Overlord

pop that bubble

I'm no oracle, but:

1. They cloned all of the creative stuff (video, photo, music, art, writing, graphic design)

2. They cloned coding (websites, smartphone apps, programs, gaming etc.) & learning (QuickTakes, NotebookLM, etc.)

3. Robots are hard, but the tools are improving. City full self-driving is also hard, but improving. I suspect both of these will take at least 10 years to become truly as useful "as advertised" for consumers.

I suspect most growth will be niche stuff (medical drug development & whatnot). Datacenter usage prices are already dropping as stuff gets faster, better, and cheaper. Curious how you fund stuff after that point! The Big Short guy is already betting on the AI bubble:

KMFJD

Lifer

Inside Microsoft’s earnings was a charge that caught analysts by surprise: a $4.1 billion hit on its investment in OpenAI.

The figure was up 490% from a year earlier. It implies a more than $12 billion quarterly loss at OpenAI, said Firoz Valliji, an analyst at Bernstein, based on the 32.5% stake Microsoft reported owning in OpenAI last quarter.

A $12 billion loss in three months would mark one of the largest single-quarter losses for a tech company in history. It is not far off the $13 billion in revenue that OpenAI has told investors it is on track to take in over the course of the whole year.
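The analyst's "more than $12 billion" figure can be reproduced with simple division, under a simplifying assumption that is ours, not the article's: that Microsoft's $4.1 billion charge is exactly its proportional 32.5% share of OpenAI's quarterly loss (equity-method style). A minimal Python sketch; the function name is hypothetical:

```python
# Back-of-envelope sketch. ASSUMPTION: the investor's charge equals its
# proportional share of the investee's loss, so total loss = charge / stake.
# Real accounting adjustments may make the true figure differ.


def implied_total_loss(investor_charge_bn: float, stake_fraction: float) -> float:
    """Return the investee's implied total loss, in billions of dollars."""
    if not 0 < stake_fraction <= 1:
        raise ValueError("stake_fraction must be in (0, 1]")
    return investor_charge_bn / stake_fraction


# Figures quoted above: $4.1B charge, 32.5% stake.
loss = implied_total_loss(4.1, 0.325)
print(f"Implied quarterly loss: ${loss:.1f} billion")
```

With those inputs the result is roughly $12.6 billion, consistent with "more than $12 billion."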

clearly it's a spending issue

Kaido

Elite Member & Kitchen Overlord

Amazon and UPS Cut 48,000 Jobs to Automation. Is the AI Job Apocalypse Here?

Tech CEOs have warned about an AI job apocalypse in the coming years, as thousands of AI-related layoffs by Amazon and UPS begin.

thehighwire.com

Thousands of jobs have been cut by Amazon, UPS, and other major US corporations in a significant escalation of what many have dubbed the AI job apocalypse. Amazon cut 14,000 jobs due to AI, while UPS has cut 34,000 positions, including drivers and package handlers, as the peak holiday season approaches. “AI and robotics help to make jobs safer, while also reducing repetitive tasks,” a spokesperson said in an NBC report.

KMFJD

Lifer

numbers must go up or economy crashes right?

lxskllr

No Lifer

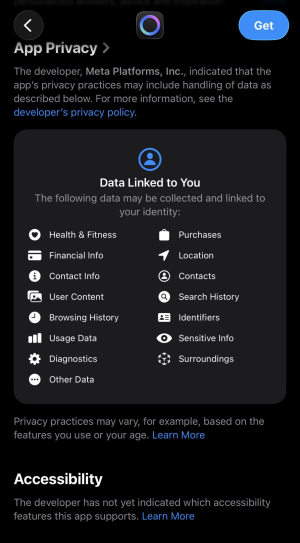

Is it really unreal? Really?!?! I assume it's the same shittastic Meta that controls all the other shitty Meta services, right? Use their services, and all those terms apply to you too. Instagram, idiotbook, WhatsApp, Threads... Anything less would be the shocking revelation.

Kaido

Elite Member & Kitchen Overlord

numbers must go up or economy crashes right?

Simple structure:

1. Corporations pay lobbyists

2. Lobbyists pay politicians

3. Politicians set policy

OpenAI has upped its lobbying efforts nearly sevenfold

RE:

UnitedHealth on track for record lobbying spending

Kaido

Elite Member & Kitchen Overlord

Is it really unreal? Really?!?!

The funniest thing I've seen all year is when the United States government shut down TikTok & everyone jumped ship directly to China's Red Note lol:

What to know about Red, the Chinese app rising as a TikTok alternative

Ahead of a potential US ban, TikTok creators are warming up to Xiaohongshu, known as Red in the West. Is it the right move?

Kaido

Elite Member & Kitchen Overlord

lobbying is just bribery wrapped around some nice legal verbiage

Marketing 101! These systems always have the same mechanics:

1. Legally driven, not ethically driven

2. Use money to fund lobbying (cough cough)

3. Adjust the laws to suit!

This was the discussion in the P&N Tesla thread earlier this year with the political blowback & lack of PR crisis management. Tesla stock has since recovered & is back up to $429 USD, the owner's net worth is up to $482 billion, and he just secured a $1 trillion bonus. From an operational mechanics standpoint, it's not about public ethics; it's about navigating the game within the framework of capitalism, where you are free to adjust the rules based on your funding abilities. Case in point:

The US Government’s Use of Grok AI Undermines Its Own Rules

Executive Order 14319, “Preventing Woke AI in the Federal Government,” mandates that all government AI systems be “truth-seeking, accurate, and ideologically neutral.” OMB’s corresponding guidance memos (M-25-21 and M-25-22) go further: they require agencies to discontinue any AI system that cannot meet those standards or poses unmitigable risks.

Grok fails these tests on nearly every front. Its outputs have included Holocaust denial, climate misinformation, and explicitly antisemitic and racist statements. Even Elon Musk himself has described Grok as “very dumb” and “too compliant to user prompts.” These are not isolated glitches. They are indicators of systemic bias, poor trollish training data, inadequate safeguards, and dangerous deployment practices.

In Senate testimony, White House Science Adviser Michael Kratsios acknowledged that such behavior directly violates the administration’s own executive order. When asked about Grok’s antisemitic responses and ideological training, Kratsios agreed they “obviously aren’t true-seeking and accurate” and were “the type of behavior” the order sought to avoid.

That acknowledgment should have triggered a pause in deployment. Instead, the government expanded Grok’s footprint to every agency. This contradiction, banning “biased AI” on paper while deploying a biased AI system in practice, undermines both the letter and the spirit of federal AI policy.

OpenAI is under a lot of pressure:

1. The entire world is coming to eat its lunch, especially China. This incentivizes them to take the safety rails off.

2. There is no way they can generate a zillion dollars in revenue to cover the current investments, but that's simply how every "gold rush" works (re: the dot-com bust in the early 2000s)

3. From a corporate perspective, "asking forgiveness instead of asking permission!" (re: Sora 2's initial lack of copyright guardrails) is sort of the go-to policy, because the worst government response simply requires paying a fee (re: Anthropic paying a $1.5 billion settlement in order to secure $300 billion in funding).

AI is like guns: at this point, it simply exists; what matters is how people choose to use it as a tool. Guns can be used for hunting, fun, and self-protection, as well as for murder or in a war of aggression. I spend roughly 50% of my time with AI these days. It lets me do incredible things, but other people also use it poorly:

Algorithms of Genocide: From Silicon Valley to the Gaza Strip - Al Habtoor Research Centre

The tools of twenty-first-century warfare are no longer confined to conventional weapons such as missiles, tanks, and aircraft. They have expanded to encompass cloud-computing platforms, artificial-intelligence systems, and data-processing capabilities developed and managed by major U.S.-based...

AI-Enabled Killing

However, the fundamental danger inherent in these systems lies in their heavy dependence on data that may be biased or flawed, a well-known principle known as “garbage in, garbage out.” Reports indicate that the digital tools employed by the Israeli military in Gaza rely on inaccurate data, rough estimates, and systematic biases, thereby exposing civilians to extreme risk. When algorithms such as “Lavender” are trained on loosely defined, politically motivated threat classifications, for instance, equating legitimate Palestinian human rights organisations with “terrorist groups”, their outputs inevitably replicate and amplify those biases, heightening the likelihood of erroneous and unlawful targeting of non-combatants. Consequently, the immense computational capacities of cloud infrastructure function as accelerants of destruction, magnifying the consequences of embedded AI errors and generating systematic, large-scale harm to civilians.

It's always been the same sad story throughout recorded history; it's just more visible & more powerful thanks to modern communication & technology.

KMFJD

Lifer

no no no....that 500k i just donated to your campaign, not a bribe, something totally different.....wow will you look at that, this company would like me to be on their board after i retire from politics with a nice cushy salary.....my wife? oh she's on a different board/non profit(?!)

nakedfrog

No Lifer

Can someone explain to me how a shitty electrical car manufacturer that builds a fraction of the cars that others do is worth(?) more than the combined value of the top 26 car makers?

Oh, I know this one! Through the magic power of...

IronWing

No Lifer

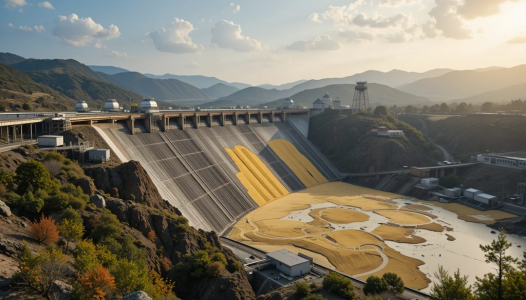

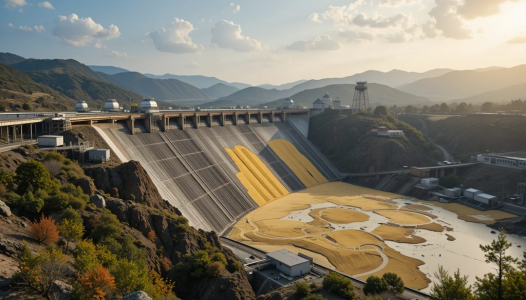

This is the bullshit we can expect in our AI future:

Gold mining tailings dam. AI-generated stock image.

This is what a real tailings dam looks like:

Note the lack of flood gates and power house, because, you know, it's a tailings dam.

The article accompanying the AI image was also AI generated and notes, "Globally, an estimated 13 billion square tonnes of tailings are generated at mine sites each year…".

How many tonnes of gold are there in a square tonne of gold?

If you don't know what a tailings dam is, here:

Tailings dam - Wikipedia

Kaido

Elite Member & Kitchen Overlord

Gold mining tailings dam. AI-generated stock image.

This is what a real tailings dam looks like:

Well that brought up a 28-year-old memory...
