Everyone is drowning in pages and pages of technical details. As someone who has loved hardware for about 25 years, I have a few questions I would like to ask and examine:

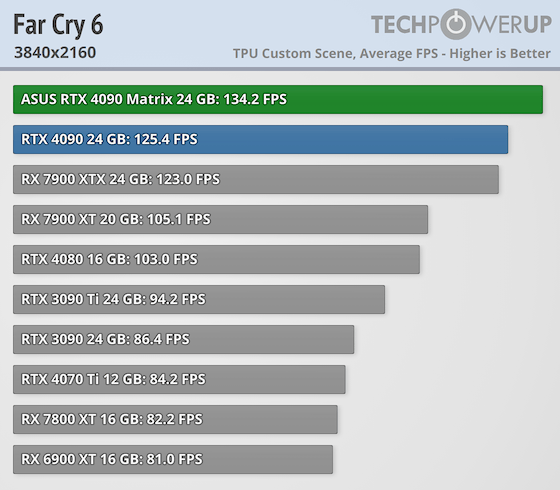

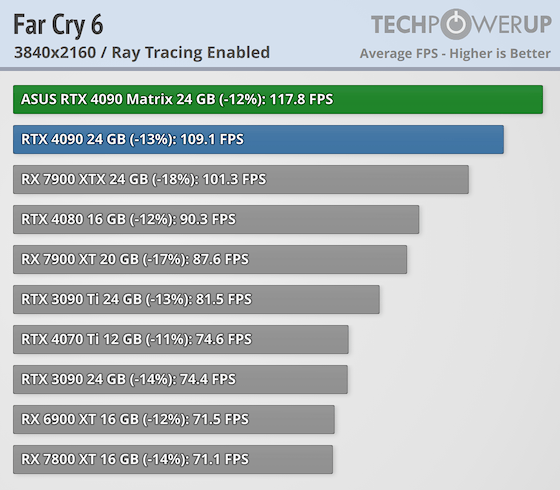

Why does AMD, which has done great work with RDNA 2 on the console side, fall behind its rival on the PC side? I think this needs to be questioned, especially given how many games ship with disgraceful optimization. Game graphics have reached the point where there is no longer a huge difference between a game running at its lowest settings and at its highest. Most importantly, does ray tracing affect anything beyond how light interacts with surfaces (reflections in puddles, on walls and glass, sunbeams in the sky, or beams coming from light sources)? In other words, does it add any depth to a game's texture quality? No.

The main problem is that AMD is behind its rival in ray tracing calculations. For this reason, it added AI cores in the 7000 series, but the software side is still not established. I will not get into the DLSS/FSR issue at all. Both sides invented this software technology to provide extra FPS at a time when the raw power of their cards was not fully mature. Just like Hyper-Threading (HT), which was introduced to show more processor cores than a CPU physically has, they use software-generated in-between frames to make the FPS appear higher. This seems a bit fraudulent to me. I used Nvidia for about 5 years, and although DLSS/FSR is useful for players, I rarely used it. Frankly, this is nothing more than cheating.

It is a software technique that emerged when the real computing power of the cards was not enough, and it was invented to get more FPS. That is why DLSS/FSR comparisons are not important.

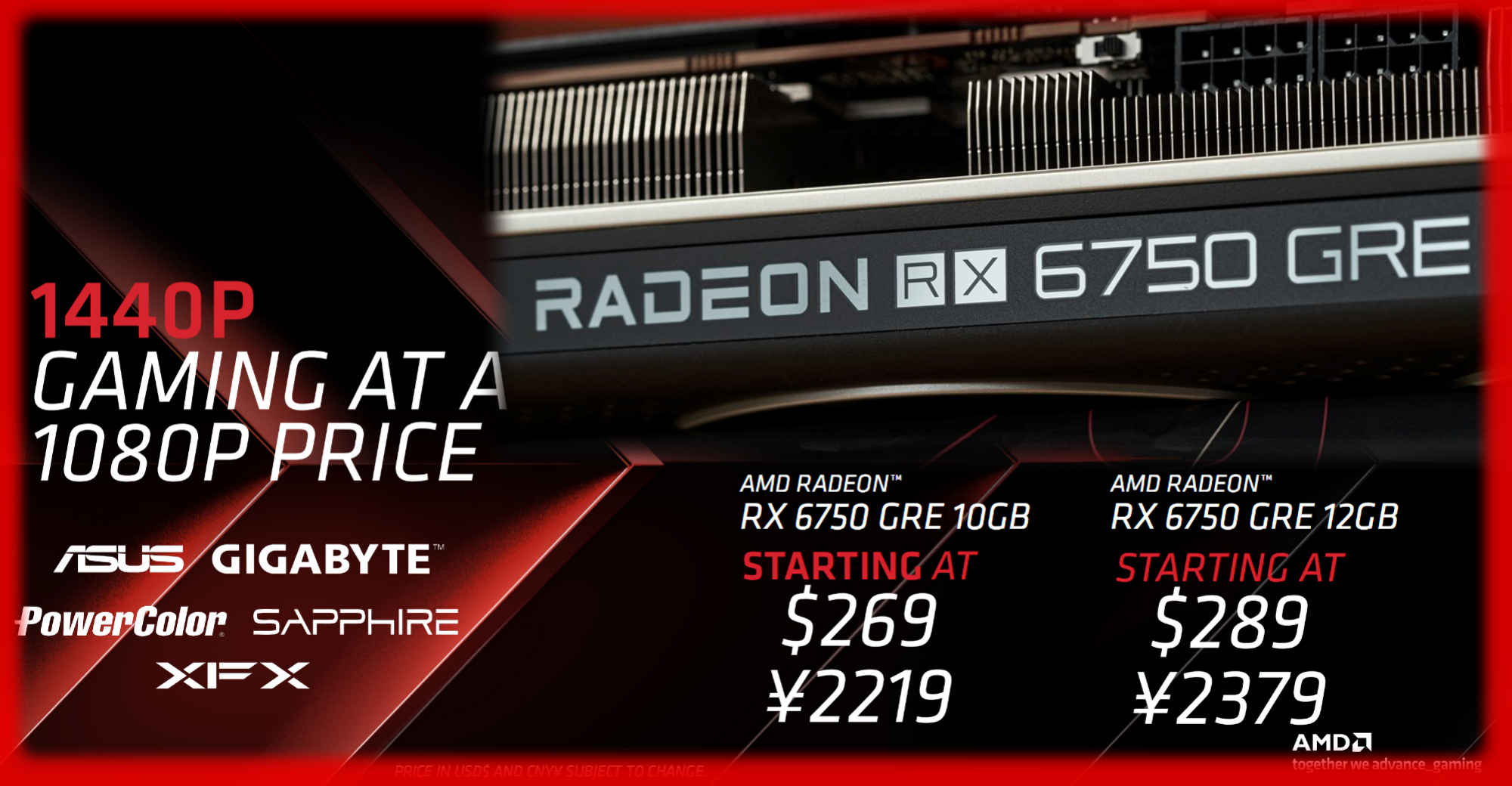

I switched from an RTX 3070 Ti to an RX 7800 XT, and I used legendary ATI cards such as the X1950 XTX in the past. My only expectation from AMD is that it further improves software optimization: performance in third-party applications (3ds Max, Photoshop, V-Ray, AutoCAD, etc.) comparable to what Nvidia gets from CUDA, more stability in games, and the ability to use all of the card's hardware features.

When it achieves this, the Radeon series will truly compete with its rival.

RDNA 3 is a really good GPU architecture, but AMD needs to provide better optimization in order to overcome the RTX 4000 series in terms of performance (FSR, frame generation, and ray tracing).